Certain online risks to children are on the rise, according to a recent report from Thorn, a technology nonprofit whose mission is to build technology to defend children from sexual abuse. Research shared in the Emerging Online Trends in Child Sexual Abuse 2023 report indicates that minors are increasingly taking and sharing sexual images of themselves. This activity may occur consensually or coercively, as youth also report an increase in risky online interactions with adults.

“In our digitally connected world, child sexual abuse material is easily and increasingly shared on the platforms we use in our daily lives,” said John Starr, VP of Strategic Impact at Thorn. “Harmful interactions between youth and adults are not isolated to the dark corners of the web. As fast as the digital community builds innovative platforms, predators are co-opting these spaces to exploit children and share this egregious content.”

These trends and others shared in the Emerging Online Trends report align with what other child safety organizations are reporting. The CyberTipline run by the National Center for Missing & Exploited Children (NCMEC) has seen a 329% increase in child sexual abuse material (CSAM) files reported in the last five years. In 2022 alone, NCMEC received more than 88.3 million CSAM files.

Several factors may be contributing to the increase in reports:

- More platforms are deploying tools, such as Thorn’s Safer product, to detect known CSAM using hashing and matching.

- Online predators are more brazen and are deploying novel technologies, such as chatbots, to scale their enticement. From 2021 to 2022, NCMEC saw an 82% increase in reports of online enticement of children for sexual acts.

- Self-generated CSAM (SG-CSAM) is on the rise. From 2021 to 2022 alone, the Internet Watch Foundation noted a 9% rise in SG-CSAM.

This content is a potential risk for every platform that hosts user-generated content, whether a profile picture or expansive cloud storage space.

Only technology can tackle the scale of this issue

Hashing and matching is one of the most important pieces of technology that tech companies can use to help keep users and platforms protected from the risks of hosting this content, while also helping to disrupt the viral spread of CSAM and the cycles of revictimization.

Millions of CSAM files are shared online every year. A large portion of these files are previously reported and verified CSAM. Because the content is known and has already been added to an NGO hash list, it can be detected using hashing and matching.

What is hashing and matching?

Put simply, hashing and matching is a programmatic way to detect CSAM and disrupt its spread online. Two types of hashing are commonly used: perceptual and cryptographic hashing. Both technologies convert a file into a unique string of numbers called a hash value. It's like a digital fingerprint for each piece of content.
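To make the difference between the two hash types concrete, here is a minimal sketch. It assumes SHA-256 as the cryptographic hash and a toy "average hash" over a small grayscale pixel grid as the perceptual hash. The function names and pixel values are hypothetical, and production perceptual hashes are considerably more sophisticated than this illustration.

```python
import hashlib


def cryptographic_hash(data: bytes) -> str:
    """Return the SHA-256 digest as hex; any change to the bytes changes the digest."""
    return hashlib.sha256(data).hexdigest()


def average_hash(pixels: list[list[int]]) -> int:
    """Toy perceptual hash (illustrative only): one bit per pixel,
    set when the pixel is brighter than the image's mean brightness."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions in which two hash values differ."""
    return bin(a ^ b).count("1")


# Hypothetical 4x4 grayscale image and a uniformly brightened copy of it.
original = [
    [10, 200, 30, 220],
    [15, 210, 25, 230],
    [12, 205, 35, 225],
    [18, 215, 28, 235],
]
brightened = [[p + 5 for p in row] for row in original]

original_bytes = bytes(p for row in original for p in row)
brightened_bytes = bytes(p for row in brightened for p in row)

# The cryptographic digests no longer match after the brightness change.
crypto_match = cryptographic_hash(original_bytes) == cryptographic_hash(brightened_bytes)

# The toy perceptual hashes are compared by how many bits differ.
perceptual_distance = hamming_distance(average_hash(original), average_hash(brightened))
```

In this sketch the cryptographic hash treats the brightened copy as a different file, while the toy perceptual hash is unchanged because every pixel keeps its position relative to the mean. This illustrates why the two types are often used side by side: one for exact copies, the other for visually similar ones.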

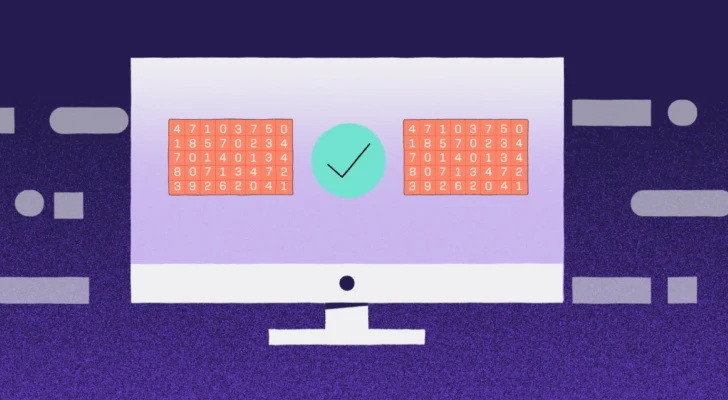

To detect CSAM, content is hashed, and the resulting hash values are compared against hash lists of known CSAM. This method allows tech companies to identify, block, or remove this illicit content from their platforms.
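The hash-then-compare step can be sketched as follows. This is an illustrative outline only: it assumes SHA-256 and an in-memory set standing in for a hash list, uses placeholder byte strings in place of real files, and does not represent Safer's API or any NGO's actual list format. All names are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Hash a file's bytes to a hex digest."""
    return hashlib.sha256(data).hexdigest()


def scan_uploads(uploads: dict[str, bytes], known_hashes: set[str]) -> list[str]:
    """Return the names of uploads whose hash appears in the known-hash list."""
    return [name for name, data in uploads.items() if sha256_hex(data) in known_hashes]


# Hypothetical hash list. In practice it would be supplied by a trusted
# organization; here it is built from a placeholder byte string for the demo.
known_hashes = {sha256_hex(b"placeholder for a previously verified file")}

# Hypothetical uploads keyed by file name.
uploads = {
    "a.bin": b"an unrelated upload",
    "b.bin": b"placeholder for a previously verified file",
}

flagged = scan_uploads(uploads, known_hashes)
```

Only hash values are compared, so the platform does not need to hold copies of the known files to detect them. An exact-match lookup like this one catches byte-identical copies; matching on perceptual hashes would typically compare by similarity rather than equality.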

Expanding the corpus of known CSAM

Hashing and matching is the foundation of CSAM detection. Because it relies on matching against hash lists of previously reported and verified content, the number of known CSAM hash values in the database that a company matches against is critical.

Safer, a tool for proactive CSAM detection built by Thorn, offers access to a large database aggregating 29+ million known CSAM hash values. Safer also enables technology companies to share hash lists with each other (either named or anonymously), further expanding the corpus of known CSAM, which helps to disrupt its viral spread.

Eliminating CSAM from the web

To eliminate CSAM from the internet, tech companies and NGOs each have a role to play. “Content-hosting platforms are key partners, and Thorn is committed to empowering the tech industry with tools and resources to combat child sexual abuse at scale,” Starr added. “This is about safeguarding our children. It's also about helping tech platforms protect their users and themselves from the risks of hosting this content. With the right tools, the internet can be safer.”

In 2022, Safer hashed more than 42.1 billion images and videos for its customers. That resulted in 520,000 files of known CSAM being found on their platforms. To date, Safer has helped its customers identify more than two million pieces of CSAM on their platforms.

The more platforms that use CSAM detection tools, the better the chance that the alarming rise of child sexual abuse material online can be reversed.