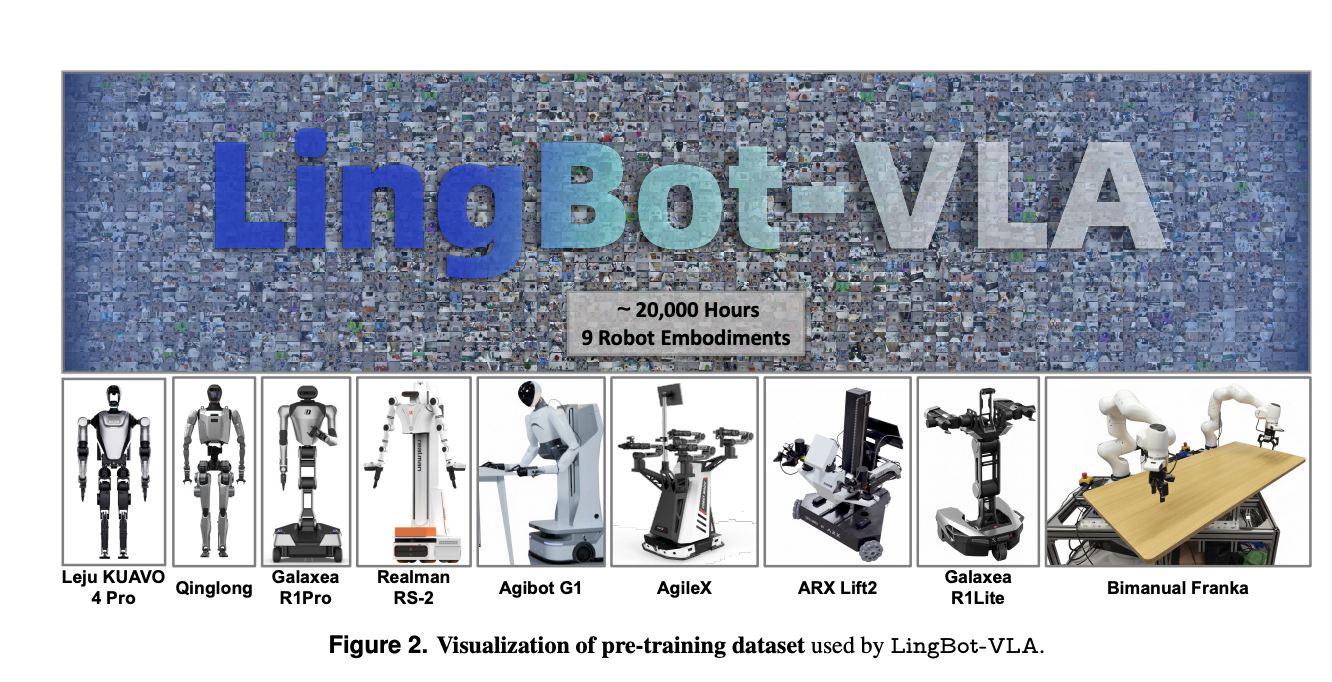

How do you build a single vision language action model that can control many different dual arm robots in the real world? LingBot-VLA is Ant Group Robbyant’s new Vision Language Action (VLA) foundation model that targets practical robot manipulation in the real world. It is trained on about 20,000 hours of teleoperated bimanual data collected from 9 dual arm robot embodiments and is evaluated on the large scale GM-100 benchmark across 3 platforms. The model is designed for cross morphology generalization, data efficient post training, and high training throughput on commodity GPU clusters.

Large scale dual arm dataset across 9 robot embodiments

The pre-training dataset is built from real world teleoperation on 9 popular dual arm configurations. These include AgiBot G1, AgileX, Galaxea R1Lite, Galaxea R1Pro, Realman Rs 02, Leju KUAVO 4 Pro, Qinglong humanoid, ARX Lift2, and a Bimanual Franka setup. All systems have dual 6 or 7 degree of freedom arms with parallel grippers and multiple RGB-D cameras that provide multi view observations.

Teleoperation uses VR control for AgiBot G1 and isomorphic arm control for AgileX. For each scene, the recorded videos from all views are segmented by human annotators into clips that correspond to atomic actions. Static frames at the start and end of each clip are removed to reduce redundancy. Task level and subtask level language instructions are then generated with Qwen3-VL-235B-A22B. This pipeline yields synchronized sequences of images, instructions, and action trajectories for pre-training.

To characterize action diversity, the research team visualizes the most frequent atomic actions in the training and test sets as word clouds. About 50 percent of atomic actions in the test set do not appear within the top 100 most frequent actions in the training set. This gap means the evaluation stresses cross task generalization rather than frequency based memorization.
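The overlap statistic above can be sketched in a few lines of Python. This is only an illustration: the function name `unseen_fraction`, the toy action labels, and the choice to count distinct test actions (rather than occurrences) are assumptions, not details from the paper.

```python
from collections import Counter

def unseen_fraction(train_actions, test_actions, top_k=100):
    """Fraction of distinct test actions that are absent from the
    top_k most frequent training actions (hypothetical helper)."""
    top_train = {action for action, _ in Counter(train_actions).most_common(top_k)}
    test_unique = set(test_actions)
    if not test_unique:
        return 0.0
    return len(test_unique - top_train) / len(test_unique)

# Toy labels, invented for illustration only.
train = ["pick cup"] * 5 + ["open drawer"] * 3 + ["fold towel"]
test = ["pick cup", "insert plug", "open drawer", "stack block"]

# With top_k=2, "insert plug" and "stack block" fall outside the top training actions.
print(unseen_fraction(train, test, top_k=2))
```

A value near 0.5 on the real data would correspond to the roughly 50 percent gap reported by the research team.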

Architecture, Mixture of Transformers, and Flow Matching actions

LingBot-VLA combines a strong multimodal backbone with an action expert through a Mixture of Transformers architecture. The vision language backbone is Qwen2.5-VL. It encodes multi-view operational images and the natural language instruction into a sequence of multimodal tokens. In parallel, the action expert receives the robot proprioceptive state and chunks of past actions. Both branches share a self-attention module that performs layer-wise joint sequence modeling over observation and action tokens.

At each time step the model forms an observation sequence that concatenates tokens from 3 camera views, the task instruction, and the robot state. The action sequence is a future action chunk with a temporal horizon set to 50 during pre-training. The training objective is conditional Flow Matching. The model learns a vector field that transports Gaussian noise to the ground truth action trajectory along a linear probability path. This gives a continuous action representation and produces smooth, temporally coherent control suitable for precise dual arm manipulation.
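As a rough illustration of the objective, the sketch below builds one training pair for a linear probability path: the interpolated input `x_t = (1 - t) * noise + t * action` and the constant target velocity `action - noise`. The time convention (t = 0 is noise, t = 1 is the action chunk), the action dimension of 14, and all function names are assumptions made for this example; the released code may differ.

```python
import random

HORIZON = 50      # action chunk length used in pre-training, per the text
ACTION_DIM = 14   # hypothetical action dimension, chosen only for illustration

def flow_matching_pair(action_chunk, t, rng):
    """Return (x_t, target_velocity) for one action chunk on a linear path."""
    noise = [[rng.gauss(0.0, 1.0) for _ in step] for step in action_chunk]
    x_t = [[(1.0 - t) * n + t * a for n, a in zip(n_row, a_row)]
           for n_row, a_row in zip(noise, action_chunk)]
    target_velocity = [[a - n for n, a in zip(n_row, a_row)]
                       for n_row, a_row in zip(noise, action_chunk)]
    return x_t, target_velocity

def mse(pred, target):
    """Mean squared error over all entries of two equally shaped chunks."""
    diffs = [(p - q) ** 2
             for p_row, q_row in zip(pred, target)
             for p, q in zip(p_row, q_row)]
    return sum(diffs) / len(diffs)

rng = random.Random(0)
actions = [[0.01 * step for _ in range(ACTION_DIM)] for step in range(HORIZON)]
x_t, v_target = flow_matching_pair(actions, t=0.3, rng=rng)

# Stand-in for the action expert: a trivial predictor that always outputs zero velocity.
zero_prediction = [[0.0] * ACTION_DIM for _ in range(HORIZON)]
print(mse(zero_prediction, v_target))
```

In training, the zero predictor would be replaced by the action expert's output conditioned on the observation tokens, and `t` would be sampled per example. At inference, integrating the predicted velocity from t = 0 to t = 1 carries a noise sample to an action chunk.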

LingBot-VLA uses blockwise causal attention over the joint sequence. Observation tokens can attend to each other bidirectionally. Action tokens can attend to all observation tokens and only to past action tokens. This mask prevents information leakage from future actions into current observations while still allowing the action expert to use the full multimodal context at each decision step.
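The masking rule described here can be written as a small boolean matrix. The sketch below is a minimal illustration, assuming observation tokens come first in the joint sequence and that an action token may also attend to itself; the function name and token counts are hypothetical.

```python
def blockwise_causal_mask(n_obs, n_act):
    """mask[q][k] is True when query token q may attend to key token k.
    Tokens 0..n_obs-1 are observations, the rest are actions."""
    n = n_obs + n_act
    mask = [[False] * n for _ in range(n)]
    for q in range(n):
        for k in range(n):
            if k < n_obs:
                # Every token may read every observation token.
                mask[q][k] = True
            elif q >= n_obs and k <= q:
                # Action queries read only the current and earlier action tokens.
                mask[q][k] = True
    return mask

# 3 observation tokens followed by 2 action tokens.
for row in blockwise_causal_mask(n_obs=3, n_act=2):
    print(" ".join("1" if allowed else "0" for allowed in row))
```

The observation rows contain zeros in every action column, which is what keeps future actions from leaking into the observation representation.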

Spatial perception via LingBot-Depth distillation

Many VLA models struggle with depth reasoning when depth sensors fail or return sparse measurements. LingBot-VLA addresses this by integrating LingBot-Depth, a separate spatial perception model based on Masked Depth Modeling. LingBot-Depth is trained in a self supervised way on a large RGB-D corpus and learns to reconstruct dense metric depth when parts of the depth map are masked, often in regions where physical sensors tend to fail.

In LingBot-VLA the visual queries from each camera view are aligned with LingBot-Depth tokens through a projection layer and a distillation loss. Cross attention maps the VLM queries into the depth latent space, and training minimizes their difference from the LingBot-Depth features. This injects geometry aware information into the policy and improves performance on tasks that require accurate 3D spatial reasoning, such as insertion, stacking, and folding under clutter and occlusion.
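To make the idea of feature distillation concrete, here is a deliberately simplified sketch. It replaces the cross attention step with a plain linear projection and assumes a mean squared error as the measure of difference; both choices, along with every name and number below, are assumptions for illustration rather than the model's actual design.

```python
def project(query, weight):
    """Linear projection into the depth feature space.
    weight has shape [depth_dim][query_dim]."""
    return [sum(w * q for w, q in zip(row, query)) for row in weight]

def distillation_loss(queries, depth_tokens, weight):
    """Mean squared error between projected VLM queries and
    (frozen) depth features, averaged over all entries."""
    total, count = 0.0, 0
    for query, target in zip(queries, depth_tokens):
        for p, d in zip(project(query, weight), target):
            total += (p - d) ** 2
            count += 1
    return total / count

# Toy values: two 2-dimensional queries projected to 3-dimensional depth features.
queries = [[1.0, 0.0], [0.0, 1.0]]
weight = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
matching_depth = [[1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
shifted_depth = [[0.0, 0.0, 1.0], [0.0, 1.0, 1.0]]

print(distillation_loss(queries, matching_depth, weight))  # projections equal the targets
print(distillation_loss(queries, shifted_depth, weight))   # one entry differs by 1.0
```

In a real training loop only the projection (and the policy's visual pathway) would receive gradients, while the depth model's features serve as fixed targets.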

GM-100 real world benchmark across 3 platforms

The main evaluation uses GM-100, a real world benchmark with 100 manipulation tasks and 130 filtered teleoperated trajectories per task on each of 3 hardware platforms. Experiments compare LingBot-VLA with π0.5, GR00T N1.6, and WALL-OSS under a shared post training protocol. All methods fine tune from public checkpoints with the same dataset, batch size 256, and 20 epochs. Success Rate (SR) measures completion of all subtasks within 3 minutes, and Progress Score (PS) tracks partial completion.
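The two metrics can be sketched as follows. The trial record format and the definition of Progress Score as the mean fraction of completed subtasks are assumptions for illustration; the benchmark's exact scoring rules may differ.

```python
def success_rate(trials, time_limit_s=180.0):
    """Percentage of trials that finish every subtask within the time limit."""
    successes = sum(
        1 for t in trials
        if t["done"] == t["total"] and t["seconds"] <= time_limit_s
    )
    return 100.0 * successes / len(trials)

def progress_score(trials):
    """Percentage of subtasks completed, averaged over trials (assumed definition)."""
    return 100.0 * sum(t["done"] / t["total"] for t in trials) / len(trials)

# Invented trial logs: subtasks completed, subtasks required, elapsed seconds.
trials = [
    {"done": 3, "total": 3, "seconds": 120.0},
    {"done": 1, "total": 4, "seconds": 180.0},
    {"done": 1, "total": 2, "seconds": 90.0},
    {"done": 0, "total": 2, "seconds": 180.0},
]

print(success_rate(trials))    # only the first trial completes all subtasks
print(progress_score(trials))  # partial credit raises the score above the success rate
```

Under this reading, Progress Score is always at least as high as Success Rate, which matches the pattern in the reported results.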

On GM-100, LingBot-VLA with depth achieves state of the art averages across the 3 platforms. Its average Success Rate (SR) is 17.30 percent and its average Progress Score (PS) is 35.41 percent. π0.5 reaches 13.02 percent SR and 27.65 percent PS. GR00T N1.6 and WALL-OSS are lower, at 7.59 percent SR and 15.99 percent PS, and 4.05 percent SR and 10.35 percent PS, respectively. LingBot-VLA without depth already outperforms GR00T N1.6 and WALL-OSS, and the depth variant adds further gains.

In RoboTwin 2.0 simulation with 50 tasks, models are trained on 50 demonstrations per task in clean scenes and 500 per task in randomized scenes. LingBot-VLA with depth reaches 88.56 percent average Success Rate in clean scenes and 86.68 percent in randomized scenes. π0.5 reaches 82.74 percent and 76.76 percent in the same settings. This shows that the same architecture and depth integration deliver consistent gains even when domain randomization is strong.

Scaling behavior and data efficient post training

The research team analyzes scaling laws by varying pre-training data from 3,000 to 20,000 hours on a subset of 25 tasks. Both Success Rate and Progress Score increase monotonically with data volume, with no saturation at the largest scale studied. The team presents this as the first empirical evidence that VLA models maintain favorable scaling on real robot data at this size.

They also study the data efficiency of post training on AgiBot G1 using 8 representative GM-100 tasks. With only 80 demonstrations per task, LingBot-VLA already surpasses π0.5 trained on the full 130 demonstration set, in both Success Rate and Progress Score. As more trajectories are added, the performance gap widens. This indicates that the pre-trained policy transfers with only dozens to around 100 task specific trajectories, which directly reduces adaptation cost for new robots or tasks.

Training throughput and open source toolkit

LingBot-VLA comes with a training stack optimized for multi-node efficiency. The codebase uses an FSDP style strategy for parameters and optimizer states, hybrid sharding for the action expert, mixed precision with float32 reductions and bfloat16 storage, and operator level acceleration with fused attention kernels and torch compile.

On an 8 GPU setup the research team reports a throughput of 261 samples per second per GPU for the Qwen2.5-VL-3B and PaliGemma-3B-pt-224 model configurations. This corresponds to a 1.5x to 2.8x speedup compared with existing VLA oriented codebases such as StarVLA, Dexbotic, and OpenPI, evaluated on the same Libero based benchmark. Throughput scales close to linearly when moving from 8 to 256 GPUs. The full post training toolkit is released as open source.

Key Takeaways

- LingBot-VLA is a Qwen2.5-VL based vision language action foundation model trained on about 20,000 hours of real world dual arm teleoperation across 9 robot embodiments, which enables strong cross morphology and cross task generalization.

- The model integrates LingBot-Depth through feature distillation so that vision tokens are aligned with a depth completion expert, which significantly improves 3D spatial understanding for insertion, stacking, folding, and other geometry sensitive tasks.

- On the GM-100 real world benchmark, LingBot-VLA with depth achieves 17.30 percent average Success Rate and 35.41 percent average Progress Score, which is higher than π0.5, GR00T N1.6, and WALL-OSS under the same post training protocol.

- LingBot-VLA shows high data efficiency in post training: on AgiBot G1 it surpasses π0.5 trained on 130 demonstrations per task while using only about 80 demonstrations per task, and performance continues to improve as more trajectories are added.
