Picture by Creator

In right this moment’s data-driven world, information evaluation and insights provide help to get essentially the most out of it and provide help to make higher selections. From an organization’s perspective, it offers a Aggressive Benefit and personaliz?s the entire course of.

This tutorial will discover essentially the most potent Python library pandas, and we’ll focus on crucial capabilities of this library which are vital for information evaluation. Learners may also comply with this tutorial as a result of its simplicity and effectivity. In the event you don’t have python put in in your system, you should utilize Google Colaboratory.

You possibly can obtain the dataset from that hyperlink.

import pandas as pd

df = pd.read_csv("kaggle_sales_data.csv", encoding="Latin-1") # Load the information

df.head() # Present first 5 rows

Output:

On this part, we’ll focus on numerous capabilities that provide help to to get extra about your information. Like viewing it or getting the imply, common, min/max, or getting details about the dataframe.

1. Information Viewing

-

df.head(): It shows the primary 5 rows of the pattern information

-

df.tail(): It shows the final 5 rows of the pattern information

-

df.pattern(n): It shows the random n variety of rows within the pattern information

-

df.form: It shows the pattern information’s rows and columns (dimensions).

It signifies that our dataset has 2823 rows, every containing 25 columns.

2. Statistics

This part accommodates the capabilities that provide help to carry out statistics like common, min/max, and quartiles in your information.

-

df.describe(): Get the fundamental statistics of every column of the pattern information

-

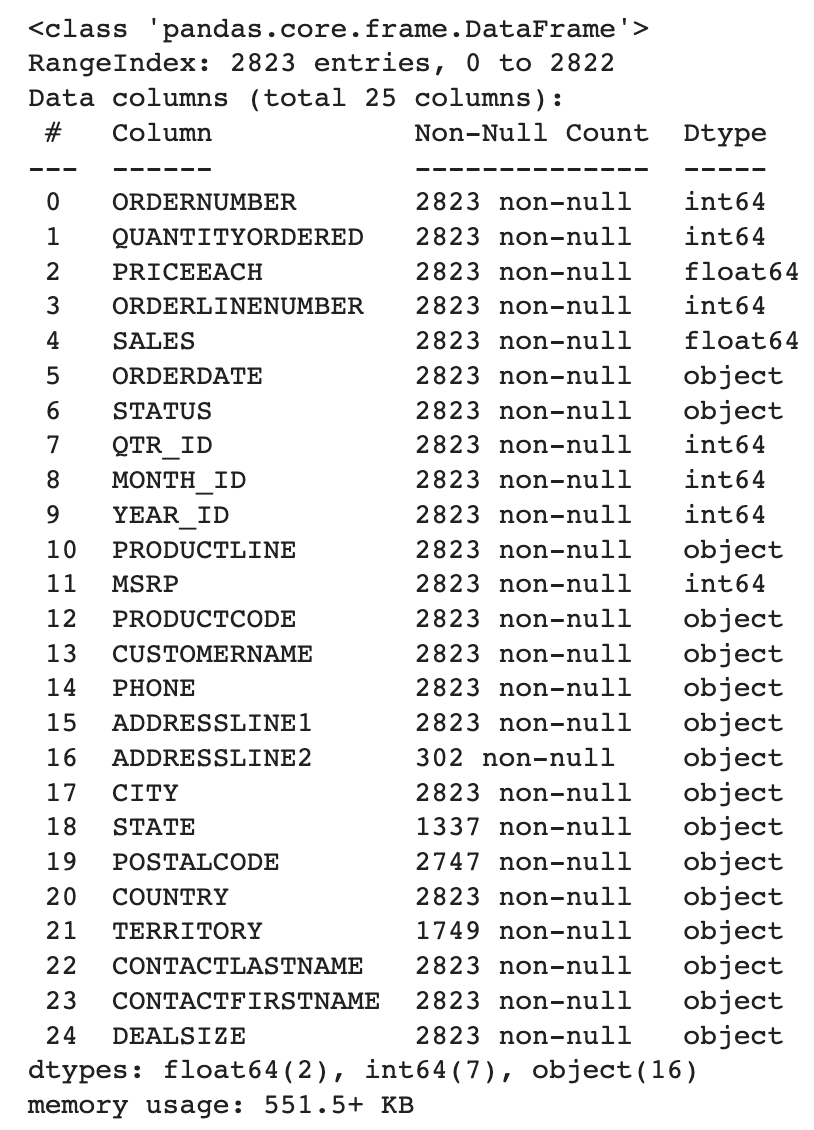

df.information(): Get the details about the assorted information sorts used and the non-null depend of every column.

-

df.corr(): This could provide the correlation matrix between all of the integer columns within the information body.

-

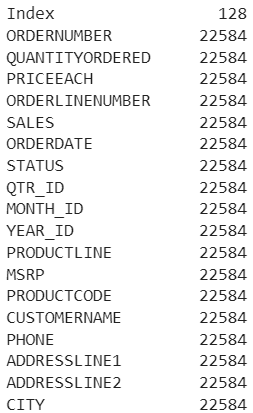

df.memory_usage(): It should let you know how a lot reminiscence is being consumed by every column.

3. Information Choice

It’s also possible to choose the information of any particular row, column, and even a number of columns.

-

df.iloc[row_num]: It should choose a specific row based mostly on its index

For ex-,

-

df[col_name]: It should choose the actual column

For ex-,

Output:

-

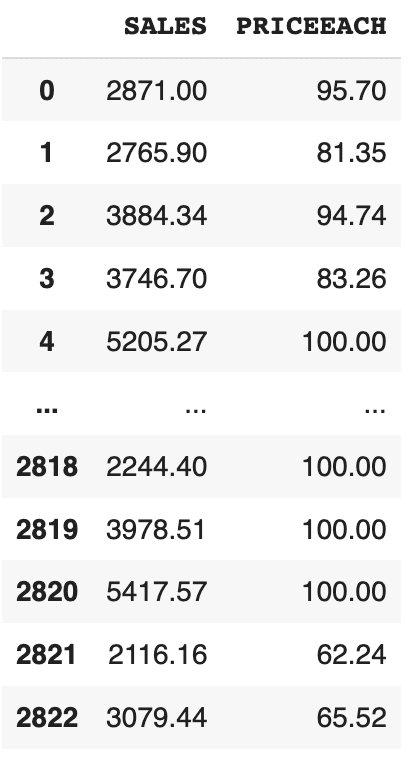

df[[‘col1’, ‘col2’]]: It should choose a number of columns given

For ex-,

df[["SALES", "PRICEEACH"]]

Output:

These capabilities are used to deal with the lacking information. Some rows within the information comprise some null and rubbish values, which might hamper the efficiency of our educated mannequin. So, it’s at all times higher to appropriate or take away these lacking values.

-

df.isnull(): This may establish the lacking values in your dataframe. -

df.dropna(): This may take away the rows containing lacking values in any column. -

df.fillna(val): This may fill the lacking values withvalgiven within the argument. -

df[‘col’].astype(new_data_type): It will probably convert the information sort of the chosen columns to a special information sort.

For ex-,

We’re changing the information sort of the SALES column from float to int.

Right here, we’ll use some useful capabilities in information evaluation, like grouping, sorting, and filtering.

- Aggregation Capabilities:

You possibly can group a column by its identify after which apply some aggregation capabilities like sum, min/max, imply, and so forth.

df.groupby("col_name_1").agg({"col_name_2": "sum"})

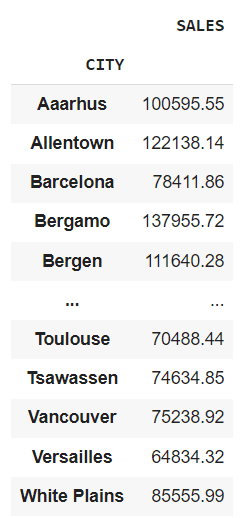

For ex-,

df.groupby("CITY").agg({"SALES": "sum"})

It will provide you with the entire gross sales of every metropolis.

If you wish to apply a number of aggregations at a single time, you possibly can write them like that.

For ex-,

aggregation = df.agg({"SALES": "sum", "QUANTITYORDERED": "imply"})

Output:

SALES 1.003263e+07

QUANTITYORDERED 3.509281e+01

dtype: float64

- Filtering Information:

We are able to filter the information in rows based mostly on a selected worth or a situation.

For ex-,

Shows the rows the place the worth of gross sales is larger than 5000

It’s also possible to filter the dataframe utilizing the question() operate. It should additionally generate an identical output as above.

For ex,

- Sorting Information:

You possibly can type the information based mostly on a selected column, both within the ascending order or within the descending order.

For ex-,

df.sort_values("SALES", ascending=False) # Kinds the information in descending order

- Pivot Tables:

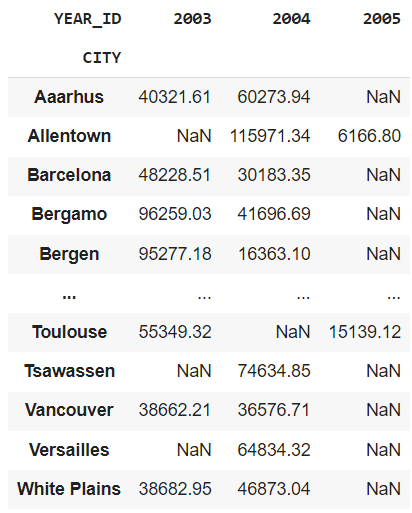

We are able to create pivot tables that summarize the information utilizing particular columns. That is very helpful in analyzing the information while you solely need to think about the impact of specific columns.

For ex-,

pd.pivot_table(df, values="SALES", index="CITY", columns="YEAR_ID", aggfunc="sum")

Let me break this for you.

-

values: It accommodates the column for which you need to populate the desk’s cells. -

index: The column utilized in it is going to grow to be the row index of the pivot desk, and every distinctive class of this column will grow to be a row within the pivot desk. -

columns: It accommodates the headers of the pivot desk, and every distinctive component will grow to be the column within the pivot desk. -

aggfunc: This is similar aggregator operate we mentioned earlier.

Output:

This output reveals a chart which depicts the entire gross sales in a specific metropolis for a selected yr.

6. Combining Information Frames

We are able to mix and merge a number of information frames both horizontally or vertically. It should concatenate two information frames and return a single merged information body.

For ex-,

combined_df = pd.concat([df1, df2])

You possibly can merge two information frames based mostly on a standard column. It’s helpful while you need to mix two information frames that share a standard identifier.

For ex,

merged_df = pd.merge(df1, df2, on="common_col")

7. Making use of Customized Capabilities

You possibly can apply customized capabilities in accordance with your wants in both a row or a column.

For ex-,

def cus_fun(x):

return x * 3

df["Sales_Tripled"] = df["SALES"].apply(cus_fun, axis=0)

We’ve written a customized operate that may triple the gross sales worth for every row. axis=0 signifies that we need to apply the customized operate on a column, and axis=1 implies that we need to apply the operate on a row.

Within the earlier methodology you need to write a separate operate after which to name it from the apply() methodology. Lambda operate lets you use the customized operate contained in the apply() methodology itself. Let’s see how we will try this.

df["Sales_Tripled"] = df["SALES"].apply(lambda x: x * 3)

Applymap:

We are able to additionally apply a customized operate to each component of the dataframe in a single line of code. However a degree to recollect is that it’s relevant to all the weather within the dataframe.

For ex-,

df = df.applymap(lambda x: str(x))

It should convert the information sort to a string of all the weather within the dataframe.

8. Time Collection Evaluation

In arithmetic, time sequence evaluation means analyzing the information collected over a selected time interval, and pandas have capabilities to carry out the sort of evaluation.

Conversion to DateTime Object Mannequin:

We are able to convert the date column right into a datetime format for simpler information manipulation.

For ex-,

df["ORDERDATE"] = pd.to_datetime(df["ORDERDATE"])

Output:

Calculate Rolling Common:

Utilizing this methodology, we will create a rolling window to view information. We are able to specify a rolling window of any measurement. If the window measurement is 5, then it means a 5-day information window at the moment. It will probably provide help to take away fluctuations in your information and assist establish patterns over time.

For ex-

rolling_avg = df["SALES"].rolling(window=5).imply()

Output:

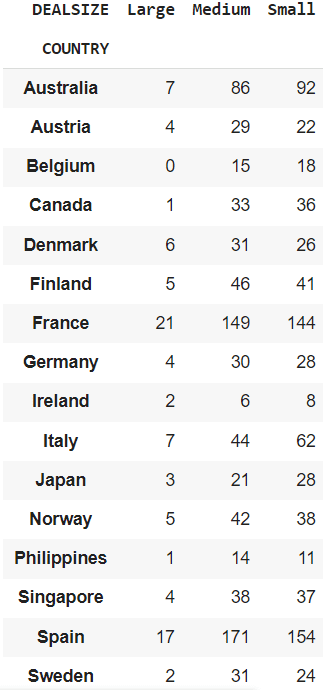

9. Cross Tabulation

We are able to carry out cross-tabulation between two columns of a desk. It’s usually a frequency desk that reveals the frequency of occurrences of assorted classes. It will probably provide help to to grasp the distribution of classes throughout completely different areas.

For ex-,

Getting a cross-tabulation between the COUNTRY and DEALSIZE.

cross_tab = pd.crosstab(df["COUNTRY"], df["DEALSIZE"])

It will probably present you the order measurement (‘DEALSIZE’) ordered by completely different international locations.

10. Dealing with Outliers

Outliers in information signifies that a specific level goes far past the typical vary. Let’s perceive it by an instance. Suppose you have got 5 factors, say 3, 5, 6, 46, 8. Then we will clearly say that the quantity 46 is an outlier as a result of it’s far past the typical of the remainder of the factors. These outliers can result in flawed statistics and needs to be faraway from the dataset.

Right here pandas come to the rescue to search out these potential outliers. We are able to use a way referred to as Interquartile Vary(IQR), which is a standard methodology for locating and dealing with these outliers. It’s also possible to examine this methodology if you need info on it. You possibly can learn extra about them right here.

Let’s see how we will try this utilizing pandas.

Q1 = df["SALES"].quantile(0.25)

Q3 = df["SALES"].quantile(0.75)

IQR = Q3 - Q1

lower_bound = Q1 - 1.5 * IQR

upper_bound = Q3 + 1.5 * IQR

outliers = df[(df["SALES"] < lower_bound) | (df["SALES"] > upper_bound)]

Q1 is the primary quartile representing the twenty fifth percentile of the information and Q3 is the third quartile representing the seventy fifth percentile of the information.

lower_bound variable shops the decrease sure that’s used for locating potential outliers. Its worth is about to 1.5 instances the IQR beneath Q1. Equally, upper_bound calculates the higher sure, 1.5 instances the IQR above Q3.

After which, you filter out the outliers which are lower than the decrease or larger than the higher sure.

Python pandas library allows us to carry out superior information evaluation and manipulations. These are only some of them. You’ll find some extra instruments in this pandas documentation. One vital factor to recollect is that the collection of strategies may be particular which caters to your wants and the dataset you’re utilizing.

Aryan Garg is a B.Tech. Electrical Engineering pupil, presently within the remaining yr of his undergrad. His curiosity lies within the subject of Internet Improvement and Machine Studying. He have pursued this curiosity and am desirous to work extra in these instructions.