Rita El Khoury / Android Authority

TL;DR

- Oura has launched its first proprietary AI model focused on women’s health.

- The feature combines clinician-reviewed research with users’ data to interpret cycle, fertility, pregnancy, and menopause trends.

- It’s rolling out now in Oura Labs inside Oura Advisor.

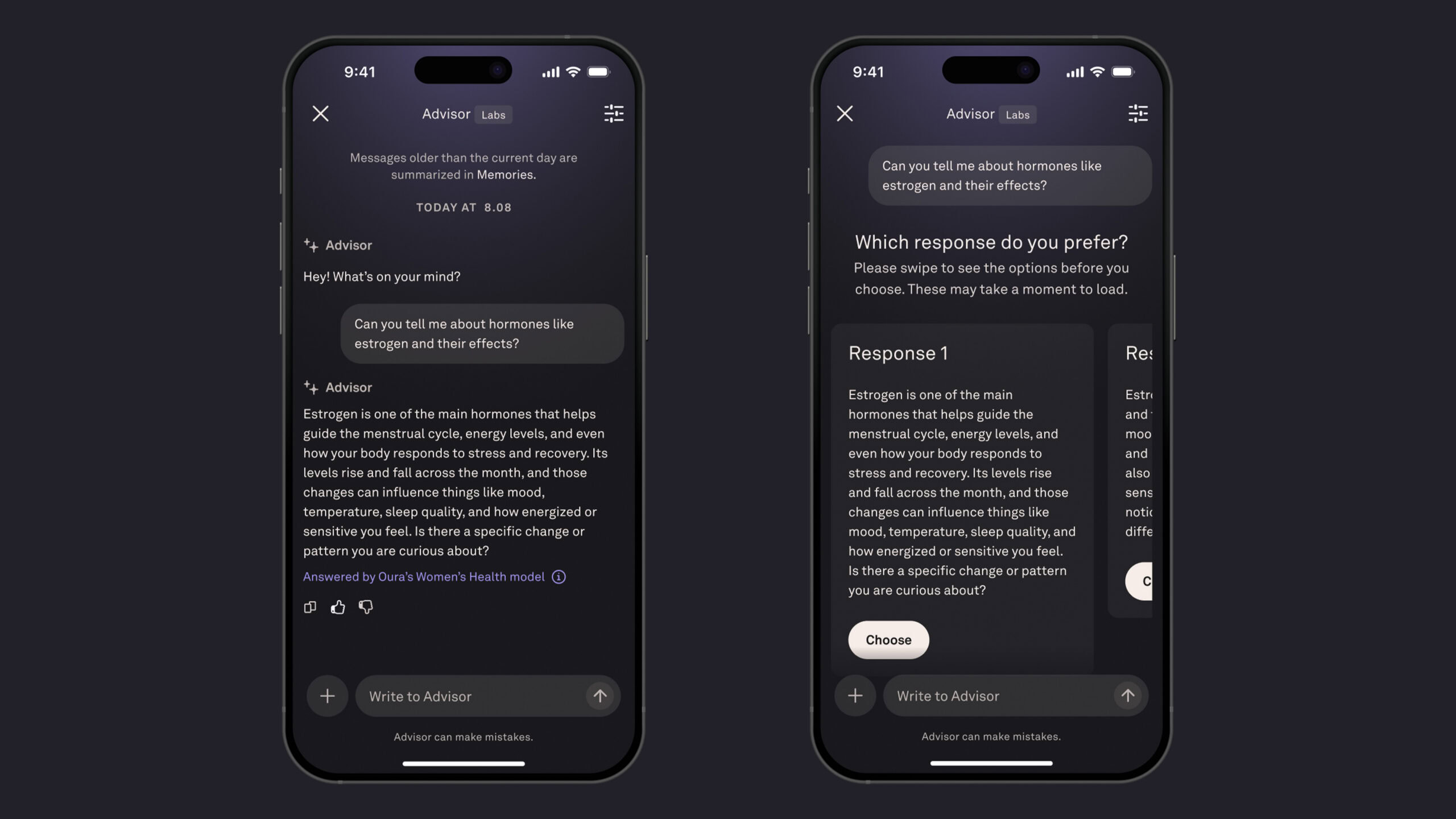

Oura is expanding its focus on women’s health with a new AI model. The feature is the company’s first proprietary AI system and aims to turn ring data into personalized guidance. It’s rolling out for testing in Oura Labs inside Oura Advisor, the company’s in-app AI assistant. Unlike earlier Advisor updates that used general AI systems, the new tool runs on a custom model built around clinician-reviewed women’s health research.

According to Oura, the system interprets long-term trends, including sleep, cycle tracking, activity, stress, and pregnancy signals, through the lens of broader women’s health data to provide contextual guidance. The company positions the feature as a conversational tool rather than a medical service, noting that responses are tuned to be supportive and non-dismissive.

Women’s health is a major wearable battleground as companies race to translate data into insights about cycles, fertility, and menopause. A model built around that complexity gives Oura a clearer framework than applying a general chatbot to health metrics. As always, this comes with familiar limitations. AI guidance (even when clinically informed) isn’t diagnostic.

Oura is, however, careful to emphasize privacy as the feature enters testing. The company says the model runs on Oura-controlled infrastructure and that conversations are not sold, shared, or used to train public AI systems. Participation in Oura Labs is optional.

If successful, tools like this could mark a broader shift in wearable health from tracking metrics to interpreting them. The challenge isn’t collecting data anymore as much as turning long-term patterns into guidance that’s genuinely useful.
