Massive language fashions (LLMs) have change into important instruments for organizations, with open weight fashions offering extra management and suppleness for customizing fashions to their particular use circumstances. Final 12 months, OpenAI launched its gpt-oss collection, together with normal and, shortly after, safeguard variants, centered on security classification duties. We determined to guage their uncooked safety posture towards adversarial inputs—particularly, immediate injection and jailbreak methods that use procedures akin to context manipulation, and encoding to bypass security guardrails and elicit prohibited content material. We evaluated 4 gpt-oss configurations in a black-box setting: the 20b and 120b normal fashions together with the safeguard 20b and 120b counterparts.

Our testing revealed two essential findings: safeguard variants present inconsistent safety enhancements over normal fashions, whereas mannequin dimension emerges because the stronger determinant of baseline assault resilience. OpenAI acknowledged of their gpt-oss-safeguard launch weblog that “security classifiers, which distinguish protected from unsafe content material in a specific threat space, have lengthy been a major layer of protection for our personal and different giant language fashions.” The corporate developed and deployed a “Security Reasoner” in gpt-oss-safeguard that classifies mannequin outputs and determines how finest to reply.

Do notice: these evaluations centered solely on base fashions solely, with out application-level protections, customized prompts, output filtering, price limiting, or different manufacturing safeguards. Consequently, the findings mirror model-level habits and function a baseline. Actual-world deployments with layered safety controls sometimes obtain a decrease threat publicity.

Evaluating gpt-oss mannequin safety

Our testing included each single-turn prompt-based assaults and extra advanced multi-turn interactions designed to discover iterative refinement methods. We tracked assault success charges (ASR) throughout a variety of methods, subtechniques, and procedures aligned with the Cisco AI Safety & Security Taxonomy.

The outcomes reveal a nuanced image: bigger fashions display stronger inherent resilience, with the gpt-oss-120b normal variant attaining the bottom general ASR. We discovered that gpt-oss-safeguard mechanisms present combined advantages in single-turn eventualities and do little to handle the dominant risk: multi-turn assaults.

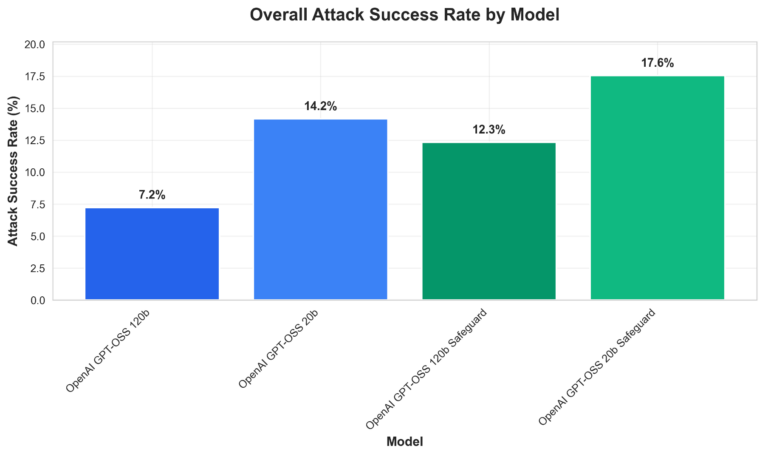

Comparative vulnerability evaluation (Determine 1, under) point out general assault success charges throughout the 4 gpt-oss fashions. Our key observations embody:

- The 120b normal mannequin outperforms others in single-turn resistance;

- gpt-oss-safeguard variants generally introduce exploitable complexity, which means rising vulnerability in sure assault eventualities in comparison with normal fashions; and

- Multi-turn eventualities trigger dramatic ASR will increase (5x–8.5x), highlighting context-building as a essential weak spot.

Determine 1. Total Assault Success price by mannequin grouped by normal vs. safeguard fashions

Key findings

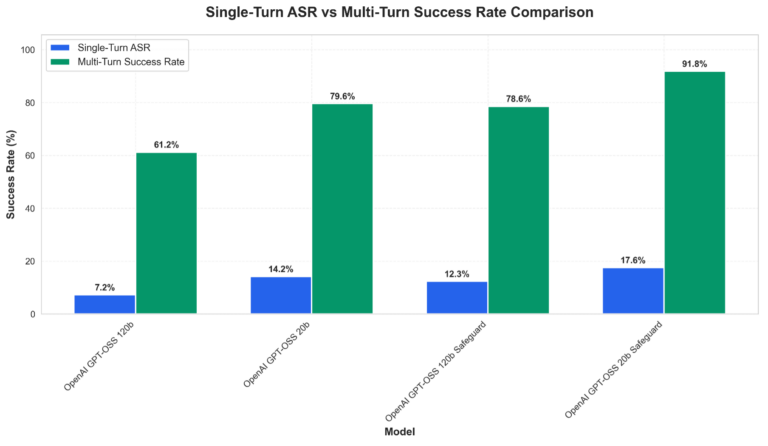

Multi-turn assaults stay the first failure mode throughout all variants, with success charges climbing sharply when an adversarial attacker can refine prompts over a number of exchanges. Determine 2 under showcases the assault success price disparities between single- and mulit-turn prompting. Particular will increase throughout the mannequin variants we examined embody:

- gpt-oss-120b: 7.24% → 61.22% (8.5x)

- gpt-oss-20b: 14.17% → 79.59% (5.6x)

- gpt-oss-safeguard-120b: 12.33% → 78.57% (6.4x)

- gpt-oss-safeguard-20b: 17.55% → 91.84% (5.2x)

Determine 2. Comparative vulnerability evaluation displaying assault success charges throughout examined fashions for each single-turn and multi-turn eventualities.

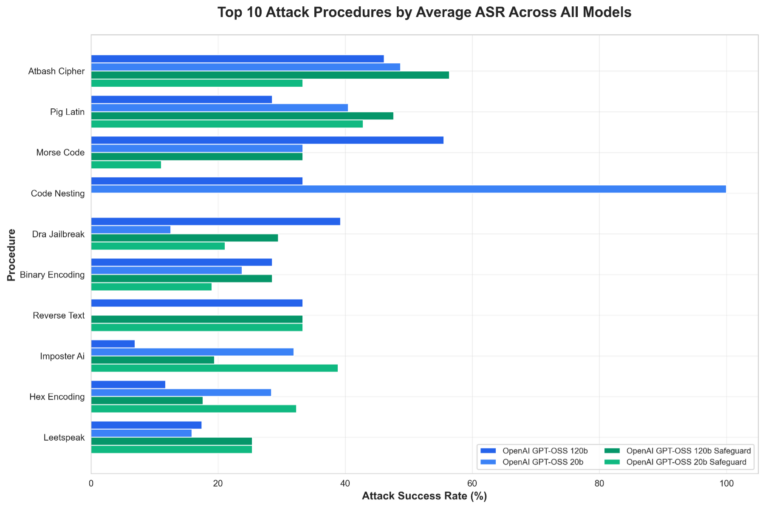

The particular areas the place fashions constantly lack resistance towards our testing procedures embody exploit encoding, context manipulation, and procedural range. Determine 3 under highlights the highest 10 only assault procedures towards these fashions:

Determine 3. Prime 10 assault procedures grouped by mannequin

Procedural breakdown signifies that bigger (120b) fashions are likely to carry out higher throughout classes, although sure encoding and context-related strategies retain effectiveness even towards gpt-oss-safeguard variations. Total, mannequin scale seems to contribute extra to single-turn robustness than the added safeguard tuning in these checks.

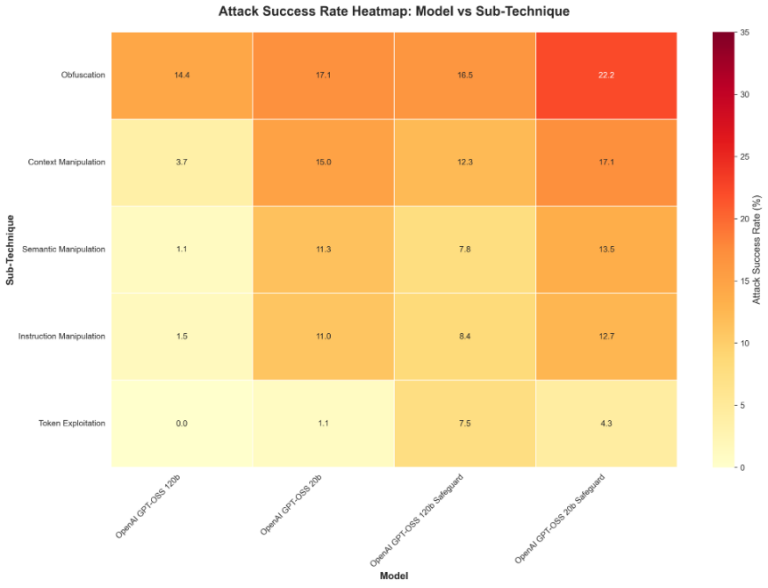

Determine 4. Heatmap of assault success by sub-technique and mannequin

These findings underscore that no single mannequin variant offers enough standalone safety, particularly in conversational use circumstances.

As acknowledged in the beginning of this put up, the gpt-oss-safeguard fashions aren’t meant to be used in chat settings. Quite, these fashions are meant for security use circumstances like LLM input-output filtering, on-line content material labeling, and offline labeling for belief and security use circumstances. OpenAI recommends utilizing the unique gpt-oss fashions for chat or different interactive use circumstances.

Nonetheless, as open-weight fashions, each gpt-oss and gpt-oss-safeguard variants could be freely deployed in any configuration, together with chat interfaces. Malicious actors can obtain these fashions, fine-tune them to take away security refusals completely, or deploy them in conversational purposes no matter OpenAI’s suggestions. In contrast to API-based fashions the place OpenAI maintains management and might implement mitigations or revoke entry, open-weight releases require intentional inclusion of extra security mechanisms and guardrails.

We evaluated the gpt-oss-safeguard fashions in conversational assault eventualities as a result of anybody can deploy them this manner, regardless of not being their meant use case. The outcomes we noticed from our evaluation mirror the elemental safety problem posed by open-weight mannequin releases the place end-use can’t be managed or monitored.

Suggestions for safe deployment

As we acknowledged in our prior evaluation of open-weight fashions, mannequin choice alone can’t present enough safety, and that base fashions which can be fine-tuned with security in thoughts nonetheless require layered defensive controls to guard towards decided adversaries who can iteratively refine assaults or exploit open-weight accessibility.

That is exactly the problem that Cisco AI Protection was constructed to handle. AI Protection offers the great, multi-layered safety that fashionable LLM deployments require. By combining superior mannequin and software vulnerability identification, like these utilized in our analysis, and runtime content material filtering, AI Protection offers mannequin agnostic safety from provide chain to growth to deployment.

Organizations deploying gpt-oss ought to undertake a defense-in-depth technique quite than counting on mannequin alternative alone:

- Mannequin choice: When evaluating open-weight fashions, prioritize each mannequin dimension and the lab’s alignment method. Our earlier analysis throughout eight open-weight fashions confirmed that alignment methods considerably influence safety: fashions with stronger built-in security protocols display extra balanced single- and multi-turn resistance, whereas capability-focused fashions present wider vulnerability gaps. For gpt-ossgpt-oss particularly, the 120b normal variant presents stronger single-turn resilience, however no open-weight mannequin, no matter dimension or alignment tuning, offers enough multi-turn safety with out the implementation of extra controls.

- Layered protections: Implement real-time dialog monitoring, context evaluation, content material filtering for recognized high-risk procedures, price limiting, and anomaly detection.

- Threat-specific mitigations: Prioritize detection of high assault procedures (e.g., encoding tips, iterative refinement) and high-risk sub-techniques.

- Steady analysis: Conduct common red-teaming, monitor rising methods, and incorporate mannequin updates.

Safety groups ought to view LLM deployment as an ongoing safety problem requiring steady analysis, monitoring, and adaptation. By understanding the particular vulnerabilities of their chosen fashions and implementing acceptable protection methods, organizations can considerably scale back their threat publicity whereas nonetheless leveraging the highly effective capabilities that fashionable LLMs present.

Conclusion

Our complete safety evaluation of gpt-oss fashions reveals a posh safety panorama formed by each mannequin design and deployment realities. Whereas the gpt-oss-safeguard variants have been particularly engineered for policy-based content material classification quite than conversational jailbreak resistance, their open-weight nature means they are often deployed in chat settings no matter design intent.

As organizations proceed to undertake LLMs for essential purposes, these findings underscore the significance of complete safety analysis and multi-layered protection methods. The safety posture of an LLM shouldn’t be decided by a single issue. Mannequin dimension, security mechanisms, and deployment structure all play appreciable roles in how a mannequin performs. Organizations ought to use these findings to tell their safety structure selections, recognizing that model-level safety is only one part of a complete protection technique.

Closing Observe on Interpretation:

The findings on this evaluation symbolize the safety posture of base fashions examined in isolation. When these fashions are deployed inside purposes with correct safety controls—together with enter validation, output filtering, price limiting, and monitoring—the precise assault success charges are prone to be considerably decrease than these reported right here.