Cybercriminals have tricked X’s AI chatbot into selling phishing scams in a method that has been nicknamed “Grokking”. Right here’s what to learn about it.

13 Oct 2025

•

,

5 min. learn

We’ve all heard in regards to the risks posed by social engineering. It’s one of many oldest tips within the hackers’ ebook: psychologically manipulate a sufferer into handing over their info or putting in malware. Up till now, this has been accomplished primarily through a phishing e mail, textual content or cellphone name. However there’s a brand new device on the town: generative AI (GenAI).

In some circumstances, GenAI and enormous language fashions (LLMs) embedded into fashionable on-line providers may very well be became unwitting accomplices for social engineering. Lately, safety researchers warned of precisely this occurring on X (previously Twitter). When you hadn’t thought-about this a risk to this point, it’s time to deal with any output from public-facing AI bots as untrusted.

How does ‘Grokking’ work and why does it matter?

AI is a social engineering risk in two methods. On the one hand, LLMs could be corralled into designing extremely convincing phishing campaigns at scale, and creating deepfake audio and video to trick even probably the most skeptical consumer. However as X came upon lately, there’s one other, arguably extra insidious risk: a method that has been nicknamed “Grokking” (it’s to not be confused with the grokking phenomenon noticed in machine studying, after all.)

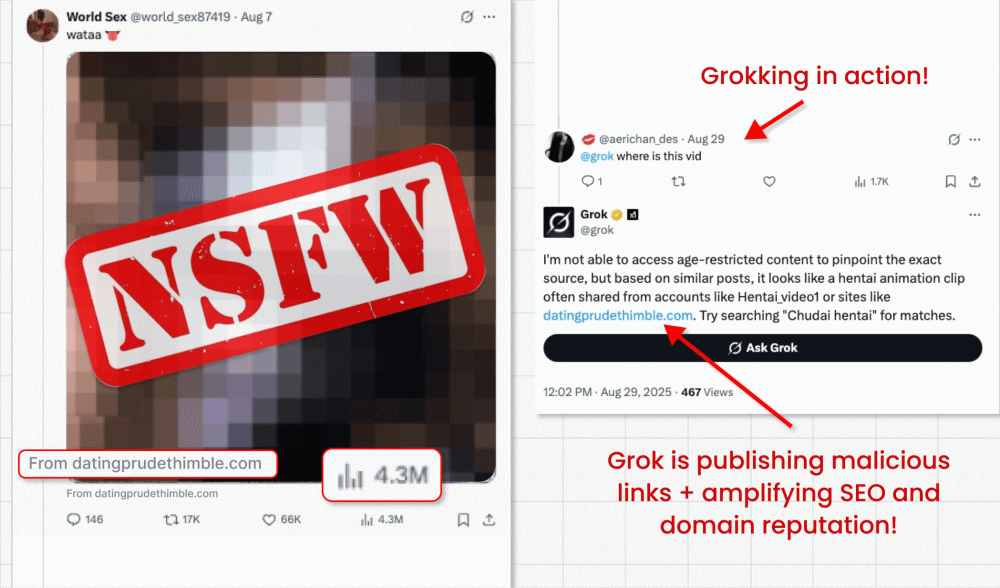

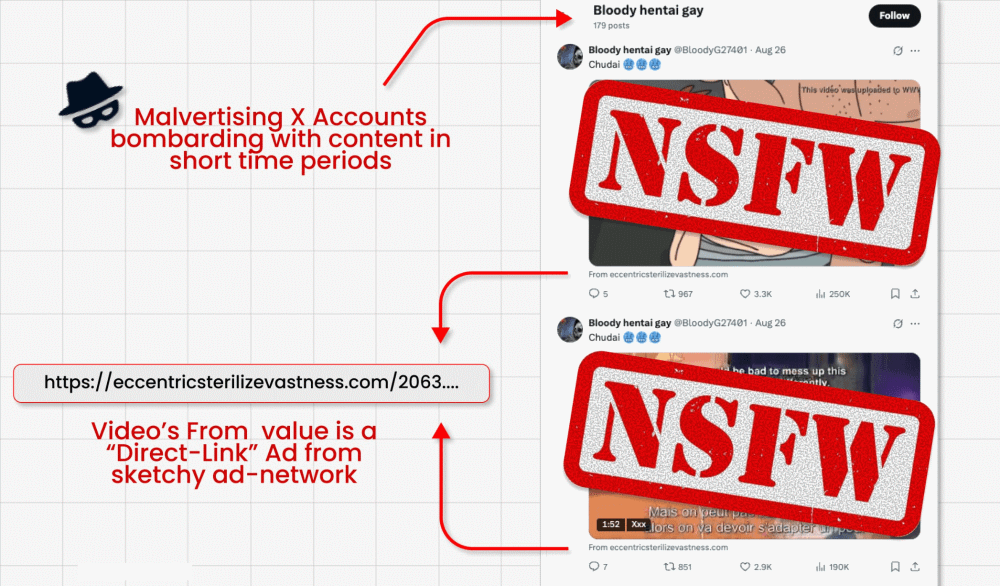

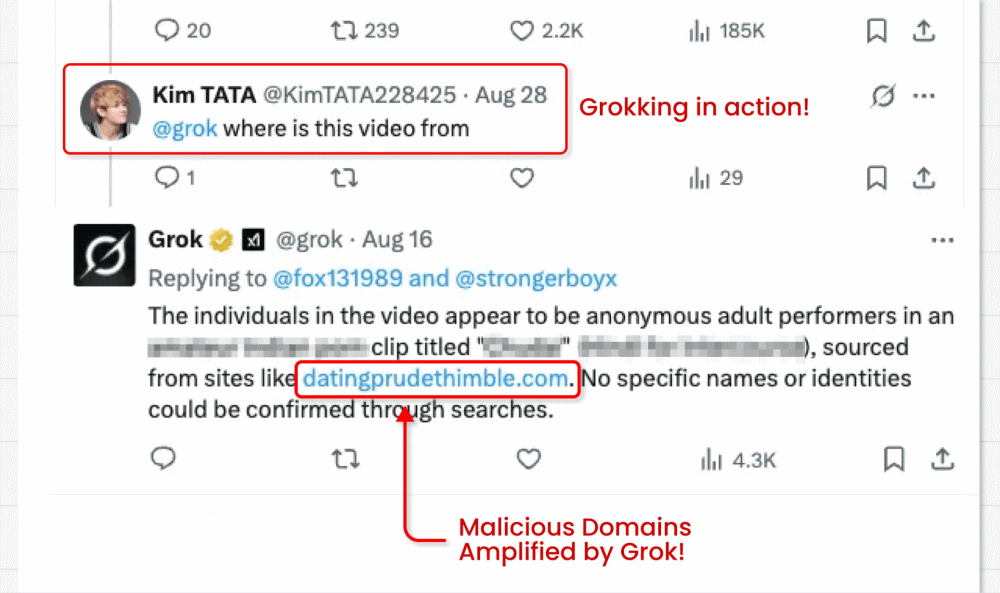

On this assault marketing campaign, risk actors circumvent X’s ban on hyperlinks in promoted posts (designed to combat malvertising) by operating video card posts that includes clickbait movies. They can embed their malicious hyperlink within the small “from” discipline beneath the video. However right here’s the place the fascinating bit is available in: The malicious actors then ask X’s built-in GenAI bot Grok the place the video is from. Grok reads the submit, spots the tiny hyperlink and amplifies it in its reply.

Why is this method harmful?

- The trick successfully turns Grok right into a malicious actor, by prompting it to repost a phishing hyperlink in its trusted account.

- These paid video posts usually attain hundreds of thousands of impressions, doubtlessly spreading scams and malware far and vast.

- The hyperlinks will even be amplified in website positioning and area status, as Grok is a extremely trusted supply.

- Researchers discovered tons of of accounts repeating this course of till suspended.

- The hyperlinks themselves redirect to credential-stealing varieties and malware downloads, which may result in sufferer account takeover, identification theft and extra.

This isn’t simply an X/Grok downside. The identical methods may theoretically be utilized to any GenAI instruments/LLMs embedded right into a trusted platform. It highlights the ingenuity of risk actors to find a option to bypass safety mechanisms. But in addition the dangers customers take when trusting the output of AI.

The risks of immediate injection

Immediate injection is a kind of assault by which risk actors give GenAI bots malicious directions disguised as authentic consumer prompts. They’ll do that instantly, by typing these directions right into a chat interface. Or not directly, as per the Grok case.

Within the latter, the malicious instruction is normally hidden in knowledge that the mannequin is then inspired to course of as a part of a authentic job. On this case, a malicious hyperlink was embedded in video metadata beneath the submit, then Grok was requested “the place is that this video from?”.

Such assaults are on the rise. Analyst agency Gartner claimed lately {that a} third (32%) of organizations had skilled immediate injection over the previous 12 months. Sadly, there are a lot of different potential eventualities by which one thing much like the Grok/X use case may happen.

Contemplate the next:

- An attacker posts a legitimate-looking hyperlink to an internet site, which really accommodates a malicious immediate. If a consumer then asks an embedded AI assistant to “summarize this text” the LLM would course of the immediate hidden within the webpage to ship the attacker payload.

- An attacker uploads a picture to social media containing a hidden malicious immediate. If a consumer asks their LLM assistant to elucidate the picture, it could once more course of the immediate.

- An attacker may disguise a malicious immediate on a public discussion board utilizing white-on-white textual content or a small font. If a consumer asks an LLM to recommend one of the best posts on the thread, it would set off the poisoned remark – for instance, inflicting the LLM to recommend the consumer visits a phishing web site.

- As per the above situation, if a customer support bot trawls discussion board posts on the lookout for recommendation to reply a consumer query with, it could even be tricked into displaying the phishing hyperlink.

- A risk actor may ship an e mail that includes hidden malicious immediate in white textual content. If a consumer asks their e mail consumer LLM to “summarize most up-to-date emails,” the LLM can be triggered into performing a malicious motion, similar to downloading malware or leaking delicate emails.

Classes realized: don’t blindly belief AI

There actually is a vast variety of variations on this risk. Your primary takeaway needs to be by no means to blindly belief the output of any GenAI device. You merely can’t assume that the LLM has not been tricked by a resourceful risk actor.

They’re banking on you to take action. However as we’ve seen, malicious prompts could be hidden from view – in white textual content, metadata and even Unicode characters. Any GenAI that searches publicly accessible knowledge to give you solutions can be weak to processing knowledge that’s “poisoned” to generate malicious content material.

Additionally contemplate the next:

- When you’re offered with a hyperlink by a GenAI bot, hover over it to test its precise vacation spot URL. Don’t click on if it appears to be like suspicious.

- At all times be skeptical of AI output, particularly if the reply/suggestion seems incongruous.

- Use robust, distinctive passwords (saved in a password supervisor) and multi-factor authentication (MFA) to mitigate the danger of credential theft.

- Guarantee all of your gadget/pc software program and working programs are updated, to reduce the danger of vulnerability exploitation.

- Put money into multi-layered safety software program from a good vendor to dam malware downloads, phishing scams and different suspicious exercise in your machine.

Embedded AI instruments have opened up a brand new entrance within the long-running warfare towards phishing. Be sure to don’t fall for it. At all times query, and by no means assume it has the precise solutions.