Image by Author

Large Language Models (LLMs) like GPT-4 and LLaMA2 have entered the [data labeling] chat. LLMs have come a long way and can now label data and take on tasks historically done by humans. While obtaining data labels with an LLM is incredibly quick and relatively cheap, there's still one big issue: these models are the ultimate black boxes. So the burning question is: how much trust should we put in the labels these LLMs generate? In today's post, we break down this conundrum to establish some basic guidelines for gauging the confidence we can have in LLM-labeled data.

The results presented below are from an experiment conducted by Toloka using popular models and a dataset in Turkish. This isn't a scientific report but rather a short overview of possible approaches to the problem and some suggestions for how to determine which method works best for your application.

Before we get into the details, here's the big question: when can we trust a label generated by an LLM, and when should we be skeptical? Knowing this can help us in automated data labeling and can also be useful in other applied tasks like customer support, content generation, and more.

The Current State of Affairs

So, how are people tackling this issue now? Some directly ask the model to spit out a confidence score, some look at the consistency of the model's answers over multiple runs, while others examine the model's log probabilities. But are any of these approaches reliable? Let's find out.

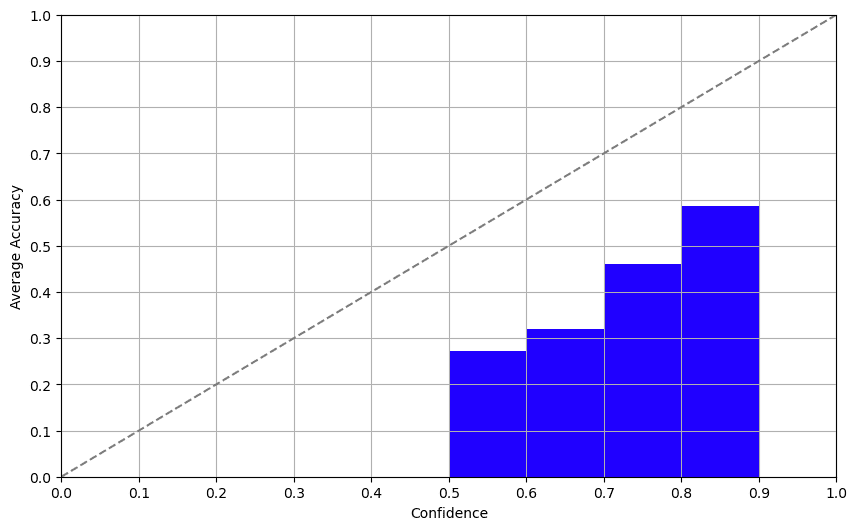

What makes a “good” confidence measure? One simple rule to follow is that there should be a positive correlation between the confidence score and the accuracy of the label. In other words, a higher confidence score should mean a higher likelihood of being correct. You can visualize this relationship using a calibration plot, where the X and Y axes represent confidence and accuracy, respectively.
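To make the calibration idea concrete, here is a minimal sketch of the numbers behind such a plot. It is not the code used in the experiment: the function names, the equal-width binning, and the expected calibration error (a common summary that this post does not report) are all assumptions added for illustration.

```python
from collections import defaultdict


def calibration_table(confidences, correct, n_bins=5):
    """Bin predictions by confidence and report accuracy per bin.

    confidences: floats in [0, 1]; correct: booleans of the same length.
    Returns a list of (mean_confidence, accuracy, count), one per non-empty bin.
    """
    bins = defaultdict(list)
    for conf, ok in zip(confidences, correct):
        # Equal-width bins; a confidence of exactly 1.0 goes into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    rows = []
    for idx in sorted(bins):
        items = bins[idx]
        mean_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        rows.append((mean_conf, accuracy, len(items)))
    return rows


def expected_calibration_error(rows):
    """Count-weighted average gap between confidence and accuracy."""
    total = sum(n for _, _, n in rows)
    return sum(n * abs(conf - acc) for conf, acc, n in rows) / total
```

Plotting the first column of each row against the second gives the calibration plot. For a well-behaved confidence measure, the points should rise from left to right and sit close to the diagonal.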

Approach 1: Self-Confidence

The self-confidence approach involves asking the model about its confidence directly. And guess what? The results weren't half bad! While the LLMs we tested struggled with the non-English dataset, the correlation between self-reported confidence and actual accuracy was pretty solid, meaning the models are well aware of their limitations. We got similar results for GPT-3.5 and GPT-4 here.

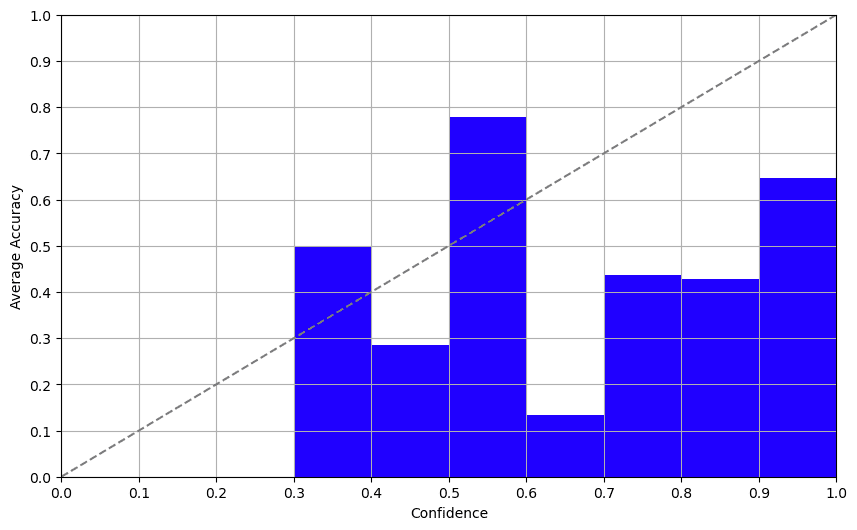
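If you want to try self-reported confidence yourself, the mechanics can be sketched roughly like this. The prompt wording, the `Label:` / `Confidence:` reply format, and the helper names are hypothetical, not the prompts used in the experiment. The call to the model is left out on purpose so the snippet runs without any API.

```python
import re

# Hypothetical prompt format; adjust the wording to your own task.
PROMPT_TEMPLATE = (
    "Classify the text into one of: {labels}.\n"
    "Reply in exactly this format:\n"
    "Label: <label>\n"
    "Confidence: <integer from 0 to 100>\n\n"
    "Text: {text}"
)


def build_prompt(text, labels):
    """Fill the template with the candidate labels and the item to label."""
    return PROMPT_TEMPLATE.format(labels=", ".join(labels), text=text)


def parse_reply(reply, labels):
    """Return (label, confidence in [0, 1]), or None if the reply is malformed."""
    label_match = re.search(r"Label:\s*(.+)", reply)
    conf_match = re.search(r"Confidence:\s*(\d+(?:\.\d+)?)", reply)
    if not label_match or not conf_match:
        return None
    label = label_match.group(1).strip()
    if label not in labels:
        return None  # the model answered outside the allowed label set
    # Clamp values above 100 and rescale to [0, 1].
    confidence = min(float(conf_match.group(1)), 100.0) / 100.0
    return label, confidence
```

Returning `None` for malformed replies, instead of guessing, keeps unparseable answers out of the calibration statistics. Whether to retry or discard those items is up to you.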

Approach 2: Consistency

Set a high temperature (~0.7–1.0), label the same item several times, and analyze the consistency of the answers. For more details, see this paper. We tried this with GPT-3.5 and it was, to put it lightly, a dumpster fire. We prompted the model to answer the same question multiple times and the results were consistently erratic. This approach is as reliable as asking a Magic 8-Ball for life advice and shouldn't be trusted.

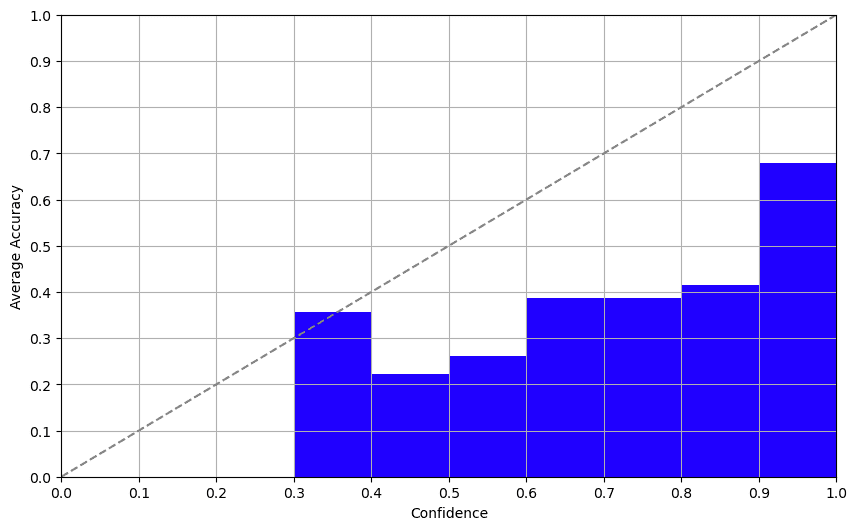
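For reference, a consistency score is usually computed along these lines. This is a sketch under assumptions: the majority-vote agreement rate below is one simple way to score consistency, and the post does not say exactly which measure was used. The sampled answers are assumed to have been collected already.

```python
from collections import Counter


def consistency_confidence(answers):
    """Return (majority_label, share of sampled answers that agree with it).

    answers: labels returned for the same item across several
    high-temperature runs.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    counts = Counter(answers)
    label, votes = counts.most_common(1)[0]
    return label, votes / len(answers)
```

With five samples such as `["spam", "spam", "ham", "spam", "ham"]`, the function returns the majority label `"spam"` with an agreement rate of 0.6. In our experiment, scores like this did not track accuracy well.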

Approach 3: Log Probabilities

Log probabilities offered a pleasant surprise. Davinci-003 returns logprobs of the tokens in the completion mode. Examining this output, we got a surprisingly decent confidence score that correlated well with accuracy. This method offers a promising approach to determining a reliable confidence score.
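One way to turn token logprobs into a confidence score is sketched below. It assumes you already have a dictionary of candidate first tokens and their log probabilities, and that each label fits into a single token. Both are simplifying assumptions for illustration, and the function name and input shape are hypothetical and not tied to any particular API response.

```python
import math


def label_confidence(top_logprobs, labels):
    """Pick the most likely label and normalize its probability.

    top_logprobs: dict mapping a candidate first token to its log probability.
    labels: allowed label strings, each assumed to be a single token.
    Returns (label, confidence), or None if no label token is present.
    """
    probs = {}
    for label in labels:
        # Tokens often carry a leading space, so strip before comparing.
        probs[label] = sum(
            math.exp(lp) for tok, lp in top_logprobs.items() if tok.strip() == label
        )
    mass = sum(probs.values())
    if mass == 0:
        return None
    best = max(probs, key=probs.get)
    # Renormalize over the allowed labels only.
    return best, probs[best] / mass
```

Renormalizing over the allowed labels ignores any probability the model puts on off-task tokens. Depending on your task, you may prefer to keep the raw probability instead.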

So, what did we learn? Here it is, no sugar-coating:

- Self-Confidence: Useful, but handle with care. Biases are widely reported.

- Consistency: Just don't. Unless you enjoy chaos.

- Log Probabilities: A surprisingly good bet for now if the model allows you to access them.

The exciting part? Log probabilities appear to be pretty robust even without fine-tuning the model, despite this paper reporting this method to be overconfident. There's room for further exploration.

A logical next step could be to find a golden formula that combines the best parts of each of these three approaches, or explores new ones. So, if you're up for a challenge, this could be your next weekend project!

Alright, ML aficionados and newbies, that's a wrap. Remember, whether you're working on data labeling or building the next big conversational agent, understanding model confidence is key. Don't take these confidence scores at face value, and make sure you do your homework!

Hope you found this insightful. Until next time, keep crunching those numbers and questioning those models.

Ivan Yamshchikov is a professor of Semantic Data Processing and Cognitive Computing at the Center for AI and Robotics, Technical University of Applied Sciences Würzburg-Schweinfurt. He also leads the Data Advocates team at Toloka AI. His research interests include computational creativity, semantic data processing and generative models.