Stanford researchers have released OpenJarvis, an open-source framework for building personal AI agents that run entirely on-device. The project comes from Stanford’s Scaling Intelligence Lab and is presented as both a research platform and deployment-ready infrastructure for local-first AI systems. Its focus is not only model execution, but also the broader software stack required to make on-device agents usable, measurable, and adaptable over time.

Why OpenJarvis?

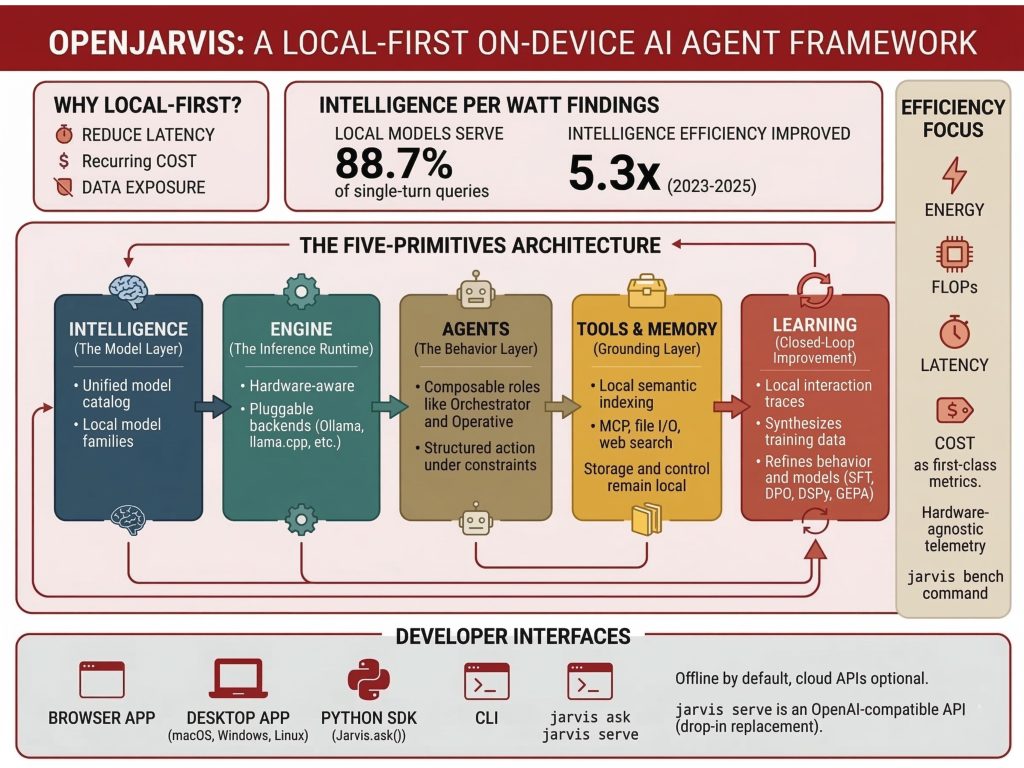

According to the Stanford research team, most current personal AI projects still keep the local component relatively thin while routing core reasoning through external cloud APIs. That design introduces latency, recurring cost, and data exposure concerns, especially for assistants and agents that operate over personal files, messages, and persistent user context. OpenJarvis is designed to shift that balance by making local execution the default and cloud usage optional.

The research team ties this release to its earlier Intelligence Per Watt research. In that work, they report that local language models and local accelerators can accurately serve 88.7% of single-turn chat and reasoning queries at interactive latencies, while intelligence efficiency improved 5.3× from 2023 to 2025. OpenJarvis is positioned as the software layer that follows from that result: if models and consumer hardware are becoming practical for more local workloads, then developers need a standard stack for building and evaluating these systems.

The Five-Primitives Architecture

At the architectural level, OpenJarvis is organized around five primitives: Intelligence, Engine, Agents, Tools & Memory, and Learning. The research team describes these as composable abstractions that can be benchmarked, substituted, and optimized independently or used together as an integrated system. This matters because local AI projects often mix inference, orchestration, tools, retrieval, and adaptation logic into a single hard-to-reproduce application. OpenJarvis instead tries to give each layer a more explicit role.

Intelligence: The Model Layer

The Intelligence primitive is the model layer. It sits above a changing set of local model families and provides a unified model catalog so developers do not have to manually track parameter counts, hardware fit, or memory tradeoffs for every release. The goal is to make model choice easier to study separately from other parts of the system, such as the inference backend or agent logic.
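To make the "hardware fit" idea concrete, here is a minimal sketch of the kind of arithmetic a model catalog could encode. The `ModelEntry` fields, the model names, and the 20% headroom factor are assumptions chosen for illustration, not the OpenJarvis schema; the estimate covers weights only and ignores the KV cache and activations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    """Hypothetical catalog entry; not the OpenJarvis schema."""
    name: str
    params_billion: float  # parameter count, in billions
    bits_per_weight: int   # e.g. 16 for fp16, 4 for 4-bit quantization


def weight_memory_gb(entry: ModelEntry) -> float:
    """Approximate memory for the weights alone (no KV cache, no activations)."""
    bytes_total = entry.params_billion * 1e9 * entry.bits_per_weight / 8
    return bytes_total / 1e9


def fits(entry: ModelEntry, available_gb: float, headroom: float = 1.2) -> bool:
    """True if the weights plus an assumed 20% overhead fit in available memory."""
    return weight_memory_gb(entry) * headroom <= available_gb


# Placeholder entries: the same 8B model at two precisions.
catalog = [
    ModelEntry("example-8b-fp16", 8.0, 16),
    ModelEntry("example-8b-q4", 8.0, 4),
]

# Names of the models that fit on a machine with 8 GB of free memory.
candidates = [m.name for m in catalog if fits(m, available_gb=8.0)]
print(candidates)
```

Under these assumptions, the fp16 variant needs roughly 16 GB for weights while the 4-bit variant needs roughly 4 GB, which is the tradeoff a catalog lets developers look up instead of recomputing.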

Engine: The Inference Runtime

The Engine primitive is the inference runtime. It is a common interface over backends such as Ollama, vLLM, SGLang, llama.cpp, and cloud APIs. The engine layer is framed more broadly as hardware-aware execution, where commands such as jarvis init detect available hardware and recommend a suitable engine and model configuration, while jarvis doctor helps maintain that setup. For developers, this is one of the more practical parts of the design: the framework does not assume a single runtime, but treats inference as a pluggable layer.
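A pluggable inference layer of this kind is often expressed as a small interface that every backend adapter satisfies. The sketch below is an illustration only: `Engine`, `EchoEngine`, `run`, and the `recommend_engine` heuristic are hypothetical names and rules, not the OpenJarvis API or the logic of its init command.

```python
from typing import Protocol


class Engine(Protocol):
    """Hypothetical interface that each backend adapter would satisfy."""
    name: str

    def generate(self, prompt: str) -> str: ...


class EchoEngine:
    """Stand-in backend; a real adapter would call a local runtime instead."""
    name = "echo"

    def generate(self, prompt: str) -> str:
        return f"[echo] {prompt}"


def recommend_engine(has_nvidia_gpu: bool, is_apple_silicon: bool) -> str:
    """Toy hardware heuristic, assumed purely for illustration."""
    if has_nvidia_gpu:
        return "vllm"
    if is_apple_silicon:
        return "ollama"
    return "llama.cpp"


def run(engine: Engine, prompt: str) -> str:
    """Agent code depends only on the interface, so backends can be swapped."""
    return engine.generate(prompt)


print(run(EchoEngine(), "hello"))
print(recommend_engine(has_nvidia_gpu=False, is_apple_silicon=True))
```

The design point is that calling code never imports a specific runtime, so replacing one backend with another does not touch agent logic.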

Agents: The Behavior Layer

The Agents primitive is the behavior layer. Stanford describes it as the part that turns model capability into structured action under real device constraints such as bounded context windows, limited working memory, and efficiency limits. Rather than relying on one general-purpose agent, OpenJarvis supports composable roles. The Stanford article specifically mentions roles such as the Orchestrator, which breaks complex tasks into subtasks, and the Operative, which is intended as a lightweight executor for recurring personal workflows. The docs also describe the agent harness as handling the system prompt, tools, context, retry logic, and exit logic.
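The retry and exit logic attributed to the harness can be sketched as a bounded loop. This is a generic pattern under stated assumptions, not the OpenJarvis harness: `run_agent`, its parameters, and the use of `RuntimeError` as the retryable failure are all hypothetical.

```python
from typing import Callable


def run_agent(
    step: Callable[[list[str]], str],
    is_done: Callable[[str], bool],
    max_steps: int = 5,
    max_retries: int = 2,
) -> list[str]:
    """Call `step` until `is_done` accepts an output or the step budget runs out."""
    history: list[str] = []
    for _ in range(max_steps):
        for attempt in range(max_retries + 1):
            try:
                output = step(history)
                break  # the step succeeded, stop retrying
            except RuntimeError:
                if attempt == max_retries:
                    raise  # retries exhausted, surface the error
        history.append(output)
        if is_done(output):
            break  # exit condition met
    return history


calls = {"n": 0}


def flaky_step(history: list[str]) -> str:
    """Fails once, then emits two subtasks followed by a final answer."""
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("transient backend error")
    return "FINAL" if len(history) == 2 else f"subtask {len(history) + 1}"


trace = run_agent(flaky_step, is_done=lambda out: out == "FINAL")
print(trace)
```

Bounding both the step count and the retry count keeps an on-device agent from looping indefinitely when a model or tool misbehaves.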

Tools & Memory: Grounding the Agent

The Tools & Memory primitive is the grounding layer. It includes support for MCP (Model Context Protocol) for standardized tool use, Google A2A for agent-to-agent communication, and semantic indexing for local retrieval over notes, documents, and papers. It also supports messaging platforms, webchat, and webhooks, and covers a narrower tools view that includes web search, calculator access, file I/O, code interpretation, retrieval, and external MCP servers. OpenJarvis is not just a local chat interface; it is intended to connect local models to tools and persistent personal context while keeping storage and control local by default.
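Local retrieval of this kind follows an index-then-search pattern. The sketch below is a deliberately simplified stand-in: it ranks documents by cosine similarity over word counts, whereas a semantic index would use a learned embedding model in place of `embed`. `LocalIndex` and its methods are hypothetical names, not the OpenJarvis memory API.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalIndex:
    """In-memory index; everything stays on the local machine."""

    def __init__(self) -> None:
        self._docs: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._docs.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self._docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


index = LocalIndex()
index.add("meeting notes about the quarterly budget")
index.add("paper on energy efficient inference")
print(index.search("energy inference"))
```

Swapping `embed` for a real local embedding model would change the ranking quality without changing the shape of the index or the search call.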

Learning: Closed-Loop Improvement

The fifth primitive, Learning, is what gives the framework a closed-loop improvement path. Stanford researchers describe it as a layer that uses local interaction traces to synthesize training data, refine agent behavior, and improve model selection over time. OpenJarvis supports optimization across four layers of the stack: model weights, LM prompts, agentic logic, and the inference engine. Examples listed by the research team include SFT, GRPO, DPO, prompt optimization with DSPy, agent optimization with GEPA, and engine-level tuning such as quantization selection and batch scheduling.
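One way to picture "traces to training data" is a pair of small transforms: accepted responses become supervised examples, and accepted/rejected responses to the same prompt become preference pairs of the kind DPO-style training consumes. The `Trace` record and its `accepted` flag are assumptions made for this sketch; the article does not specify how OpenJarvis stores traces or feedback.

```python
from dataclasses import dataclass


@dataclass
class Trace:
    """Hypothetical interaction record."""
    prompt: str
    response: str
    accepted: bool  # assumed feedback signal, e.g. the user kept the answer


def to_sft_examples(traces: list[Trace]) -> list[dict]:
    """Keep only accepted responses as prompt/completion pairs."""
    return [{"prompt": t.prompt, "completion": t.response} for t in traces if t.accepted]


def to_preference_pairs(traces: list[Trace]) -> list[dict]:
    """Pair accepted and rejected responses that share a prompt."""
    by_prompt: dict[str, dict[str, list[str]]] = {}
    for t in traces:
        group = by_prompt.setdefault(t.prompt, {"chosen": [], "rejected": []})
        group["chosen" if t.accepted else "rejected"].append(t.response)
    pairs = []
    for prompt, group in by_prompt.items():
        for chosen in group["chosen"]:
            for rejected in group["rejected"]:
                pairs.append({"prompt": prompt, "chosen": chosen, "rejected": rejected})
    return pairs


traces = [
    Trace("summarize my notes", "Here is a short summary.", True),
    Trace("summarize my notes", "I cannot do that.", False),
    Trace("draft a reply", "Sure, here is a draft.", True),
]
print(len(to_sft_examples(traces)), len(to_preference_pairs(traces)))
```

Because both transforms run over locally stored traces, the resulting datasets never have to leave the device.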

Efficiency as a First-Class Metric

A major technical point in OpenJarvis is its emphasis on efficiency-aware evaluation. The framework treats energy, FLOPs, latency, and dollar cost as first-class constraints alongside task quality. It also emphasizes a hardware-agnostic telemetry system for profiling energy on NVIDIA GPUs via NVML, AMD GPUs, and Apple Silicon via powermetrics, with 50 ms sampling intervals. The jarvis bench command is meant to standardize benchmarking for latency, throughput, and energy per query. This is important because local deployment is not only about whether a model can answer a question, but whether it can do so within real limits on power, memory, and response time.
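Energy per query can be derived from periodic power readings by integrating power over time. The sketch below assumes a list of wattage samples taken every 50 ms (matching the interval mentioned above) and uses trapezoidal integration; the function names and the sample values are illustrative, and this is not the telemetry code OpenJarvis ships.

```python
def energy_joules(power_watts: list[float], interval_s: float = 0.05) -> float:
    """Trapezoidal integration of power samples spaced `interval_s` seconds apart."""
    if len(power_watts) < 2:
        return 0.0
    pairs = zip(power_watts, power_watts[1:])
    return sum((a + b) / 2 * interval_s for a, b in pairs)


def energy_per_query(power_watts: list[float], num_queries: int,
                     interval_s: float = 0.05) -> float:
    """Average energy per query over a benchmark window."""
    if num_queries <= 0:
        raise ValueError("num_queries must be positive")
    return energy_joules(power_watts, interval_s) / num_queries


# Four made-up readings spanning 150 ms of a benchmark run.
samples = [10.0, 20.0, 20.0, 10.0]
total = energy_joules(samples)
print(total, energy_per_query(samples, num_queries=2))
```

A shorter sampling interval tracks short power spikes more faithfully, at the cost of more telemetry overhead.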

Developer Interfaces and Deployment Options

From a developer perspective, OpenJarvis exposes several entry points. The official docs describe a browser app, a desktop app, a Python SDK, and a CLI. The browser-based interface can be launched with ./scripts/quickstart.sh, which installs dependencies, starts Ollama and a local model, launches the backend and frontend, and opens the local UI. The desktop app is available for macOS, Windows, and Linux, with the backend still running on the user’s machine. The Python SDK exposes a Jarvis() object and methods such as ask() and ask_full(), while the CLI includes commands like jarvis ask, jarvis serve, jarvis memory index, and jarvis memory search.

The docs also state that all core functionality works without a network connection, while cloud APIs are optional. For dev teams building local applications, another practical feature is jarvis serve, which starts a FastAPI server with SSE streaming and is described as a drop-in replacement for OpenAI clients. That lowers the migration cost for developers who want to prototype against an API-shaped interface while still keeping inference local.
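For readers unfamiliar with SSE streaming in OpenAI-style APIs, the sketch below parses a stream of `data:` lines in the chat-completions chunk format and yields the text deltas. That the local server emits exactly this shape is an assumption inferred from the "drop-in replacement" description; `iter_sse_text` is a hypothetical helper, and the sample lines are hand-written rather than captured from OpenJarvis.

```python
import json
from typing import Iterable, Iterator


def iter_sse_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield text deltas from OpenAI-style chat completion stream lines."""
    for line in lines:
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and SSE comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        delta = chunk["choices"][0].get("delta", {})
        text = delta.get("content")
        if text:
            yield text


# Hand-written sample stream in the assumed format.
sample_stream = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "",
    "data: [DONE]",
]
reply = "".join(iter_sse_text(sample_stream))
print(reply)
```

In practice an existing OpenAI client library would handle this parsing once pointed at the local server's base URL, which is the migration benefit the docs describe.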
