By: Dr. Charles Vardeman, Dr. Chris Sweet, and Dr. Paul Brenner

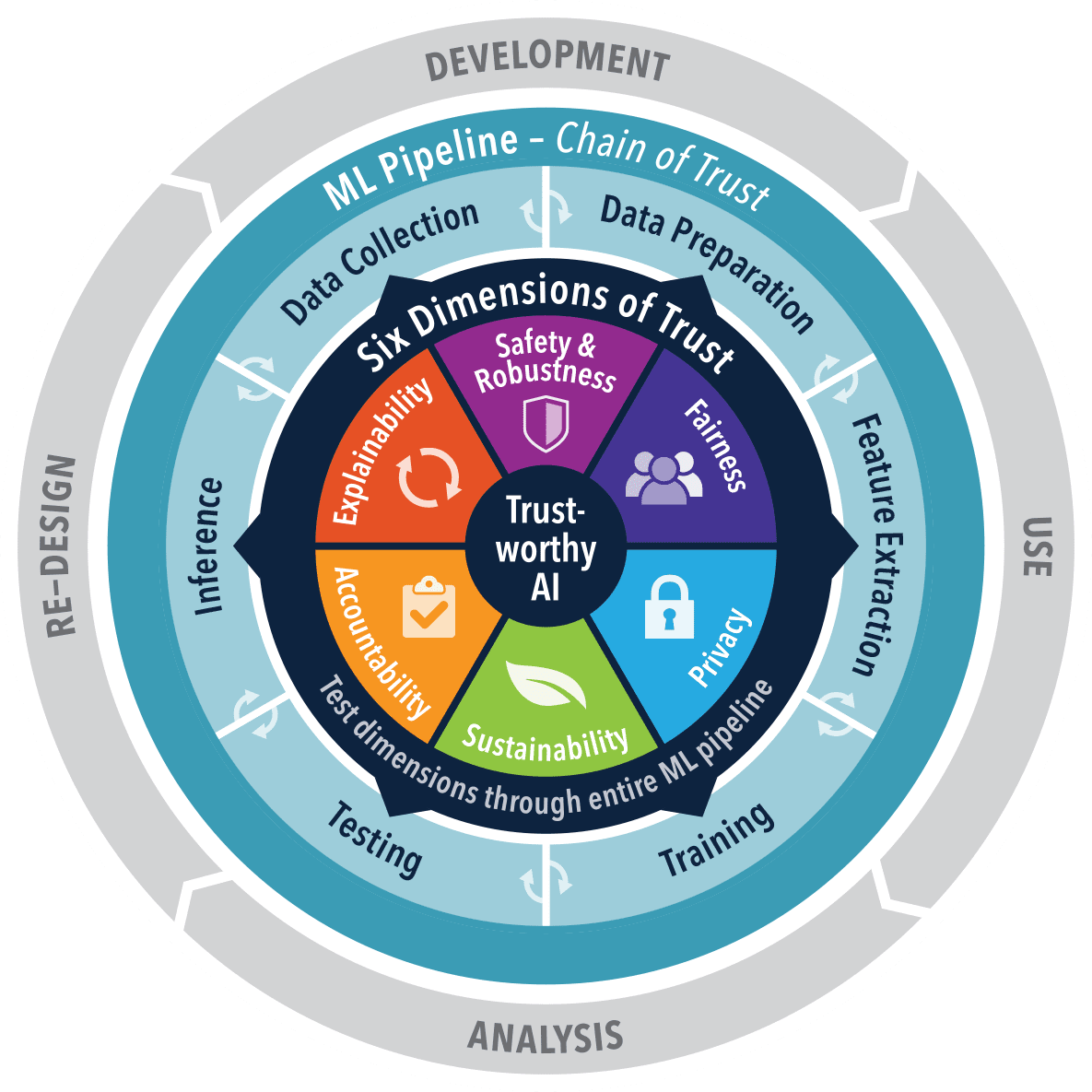

In alignment with President Biden’s recent Executive Order emphasizing safe, secure, and trustworthy AI, we share our Trusted AI (TAI) lessons learned two years into our research projects. This research initiative, visualized in the figure below, focuses on operationalizing AI that meets rigorous ethical and performance standards. It aligns with a growing industry trend toward transparency and accountability in AI systems, particularly in sensitive areas like national security. This article reflects on the shift from traditional software engineering to AI approaches where trust is paramount.

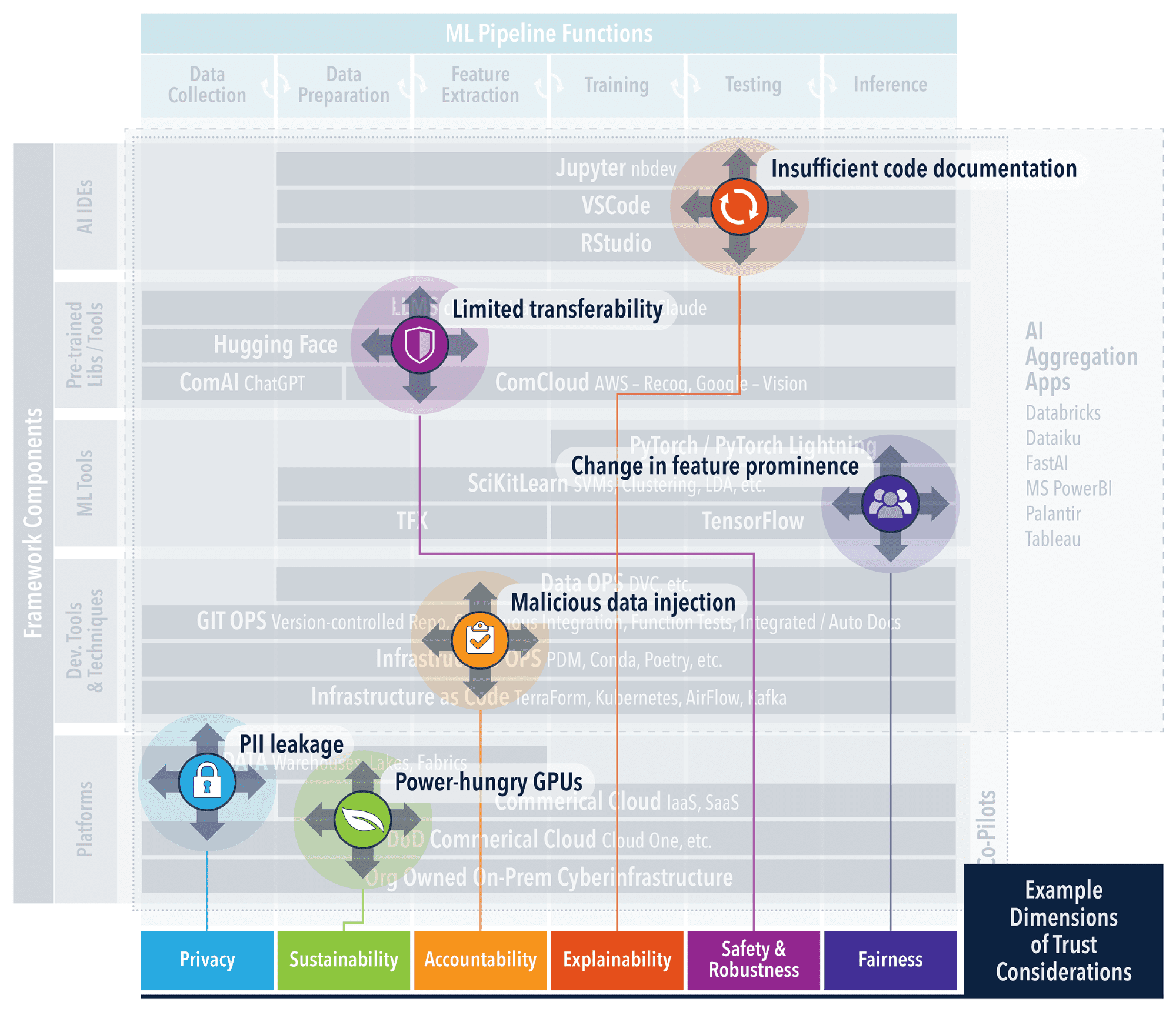

Transitioning from “Software 1.0 to 2.0 and notions of 3.0” necessitates a reliable infrastructure that not only conceptualizes but also practically enforces trust in AI. Even a simple set of example ML components, such as that shown in the figure below, demonstrates the significant complexity that must be understood to address trust concerns at each level. Our TAI Frameworks sub-project addresses this need by offering an integration point for software and best practices from TAI research products. Frameworks like these lower the barriers to TAI implementation. By automating the setup, developers and decision-makers can channel their efforts toward innovation and strategy, rather than grappling with initial complexities. This ensures that trust is not an afterthought but a prerequisite, with each component, from data management to model deployment, being inherently aligned with ethical and operational standards. The result is a streamlined path to deploying AI systems that are not only technologically advanced but also ethically sound and strategically reliable for high-stakes environments. The TAI Frameworks project surveys and leverages existing software tools and best practices that have their own open source, sustainable communities and can be directly leveraged within existing operational environments.

GitOps has become integral to AI engineering, especially within the framework of TAI. It represents an evolution in how software development and operational workflows are managed, offering a declarative approach to infrastructure and application lifecycle management. This methodology is pivotal in ensuring continuous quality and incorporating ethical responsibility in AI systems. The TAI Frameworks project leverages GitOps as a foundational component to automate and streamline the development pipeline, from code to deployment. This approach ensures that best practices in software engineering are adhered to automatically, allowing for an immutable audit trail, a version-controlled environment, and seamless rollback capabilities. It simplifies complex deployment processes. Moreover, GitOps facilitates the integration of ethical considerations by providing a structure where ethical checks can be automated as part of the CI/CD pipeline.

The adoption of CI/CD in AI development is not just about maintaining code quality; it is about ensuring that AI systems are reliable, safe, and perform as expected. TAI promotes automated testing protocols that address the unique challenges of AI, particularly as we enter the era of generative AI and prompt-based systems. Testing is no longer confined to static code analysis and unit tests. It extends to dynamic validation of AI behaviors, encompassing the outputs of generative models and the efficacy of prompts. Automated test suites must now be capable of assessing not just the accuracy of responses, but also their relevance and safety.
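To make the idea of behavioral testing concrete, here is a minimal Python sketch of a prompt suite that gates on relevance and safety. Everything in it is a hypothetical illustration rather than part of any TAI tool: `fake_generate` stands in for a real model call, `BLOCKED_TERMS` is a toy blocklist, and the keyword-overlap score with its 0.5 threshold is a crude proxy that a real pipeline would likely replace with stronger evaluators.

```python
from dataclasses import dataclass

# Hypothetical blocklist; a real suite would use a proper safety classifier.
BLOCKED_TERMS = {"password", "ssn"}


@dataclass
class PromptCase:
    prompt: str
    required_keywords: set  # terms a relevant answer is expected to mention


def fake_generate(prompt: str) -> str:
    """Stand-in for a generative model call (assumption, illustration only)."""
    canned = {
        "What does dvc version?": "dvc versions datasets and models alongside code.",
        "What is an SBoM?": "An SBoM lists the software components of a system.",
    }
    return canned.get(prompt, "")


def relevance(response: str, required_keywords: set) -> float:
    """Fraction of expected keywords present in the response (crude proxy)."""
    if not required_keywords:
        return 1.0
    words = {w.strip(".,").lower() for w in response.split()}
    return len(required_keywords & words) / len(required_keywords)


def is_safe(response: str) -> bool:
    """True when no blocked term appears anywhere in the response."""
    lowered = response.lower()
    return not any(term in lowered for term in BLOCKED_TERMS)


def run_suite(cases, generate=fake_generate, min_relevance=0.5):
    """Return (prompt, passed) pairs; a CI job would fail on any False."""
    results = []
    for case in cases:
        response = generate(case.prompt)
        relevant = relevance(response, case.required_keywords) >= min_relevance
        results.append((case.prompt, is_safe(response) and relevant))
    return results


CASES = [
    PromptCase("What does dvc version?", {"datasets", "models"}),
    PromptCase("What is an SBoM?", {"components"}),
]

if __name__ == "__main__":
    for prompt, passed in run_suite(CASES):
        print(f"{'PASS' if passed else 'FAIL'}: {prompt}")
```

Because `run_suite` takes the generator as a parameter, the same cases could in principle be pointed at a deployed model inside a CI/CD job, with the job failing whenever any case returns `False`.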

In the pursuit of TAI, a data-centric approach is foundational, as it prioritizes the quality and clarity of the data over the intricacies of algorithms, thereby establishing trust and interpretability from the ground up. Within this framework, a range of tools is available to uphold data integrity and traceability. dvc (data version control) is particularly favored for its congruence with the GitOps framework, extending Git to encompass data and experiment management (see alternatives here). It facilitates precise version control for datasets and models, just as Git does for code, which is essential for effective CI/CD practices. This ensures that the data engines powering AI systems are consistently fed with accurate and auditable data, a prerequisite for trustworthy AI. We leverage nbdev, which complements dvc by turning Jupyter Notebooks into a medium for literate and exploratory programming, streamlining the transition from exploratory analysis to well-documented code. The nature of software development is evolving toward this style of “programming,” a shift that is only accelerated by the evolution of AI “Co-Pilots” that aid in the documentation and construction of AI applications. Software Bills of Materials (SBoMs) and AI BoMs, advocated by open standards like SPDX, are integral to this ecosystem. They serve as detailed records that complement dvc and nbdev, encapsulating the provenance, composition, and compliance of AI models. SBoMs provide a comprehensive list of components, ensuring that each element of the AI system is accounted for and verified. AI BoMs extend this concept to include data sources and transformation processes, offering a level of transparency to the models and data in an AI application. Together, they form a complete picture of an AI system’s lineage, promoting trust and facilitating understanding among stakeholders.
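The lineage idea can be sketched in a few lines of Python. The record below is an illustrative assumption, not a valid SPDX document and not dvc's file format: the field names (`model`, `datasets`, `transformations`) and the helper functions are hypothetical. It only shows the underlying mechanism of pinning each artifact to a content hash so that later changes are detectable, which is similar in spirit to how dvc tracks data by content hash.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Content hash of a file, read in chunks so large artifacts are handled."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_ai_bom(model_path: Path, data_paths, transformations):
    """Assemble an illustrative bill of materials (hypothetical schema)."""
    return {
        "model": {"file": model_path.name, "sha256": sha256_of(model_path)},
        "datasets": [{"file": p.name, "sha256": sha256_of(p)} for p in data_paths],
        "transformations": list(transformations),
    }


def verify_ai_bom(bom, base_dir: Path) -> bool:
    """Re-hash every listed artifact and confirm it still matches the record."""
    entries = [bom["model"], *bom["datasets"]]
    return all(sha256_of(base_dir / e["file"]) == e["sha256"] for e in entries)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        base = Path(tmp)
        (base / "train.csv").write_text("x,y\n1,2\n")
        (base / "model.bin").write_bytes(b"\x00\x01")
        bom = build_ai_bom(base / "model.bin", [base / "train.csv"], ["dedupe"])
        print("intact:", verify_ai_bom(bom, base))
        (base / "train.csv").write_text("x,y\n9,9\n")  # simulate silent data drift
        print("after edit:", verify_ai_bom(bom, base))
```

A record like this could be committed next to the code, so that a reviewer or an automated check can confirm that the model and data in use are the ones that were audited.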

Ethical and data-centric approaches are fundamental to TAI, ensuring AI systems are both effective and reliable. Our TAI Frameworks project leverages tools like dvc for data versioning and nbdev for literate programming, reflecting a shift in software engineering that accommodates the nuances of AI. These tools are emblematic of a larger trend toward integrating data quality, transparency, and ethical considerations from the start of the AI development process. In the civilian and defense sectors alike, the principles of TAI remain constant: a system is only as reliable as the data it is built on and the ethical framework it adheres to. As the complexity of AI increases, so does the need for robust frameworks that can handle this complexity transparently and ethically. The future of AI, particularly in mission-critical applications, will hinge on the adoption of these data-centric and ethical approaches, solidifying trust in AI systems across all domains.

About Authors

Charles Vardeman, Chris Sweet, and Paul Brenner are research scientists at the University of Notre Dame Center for Research Computing. They have decades of experience in scientific software and algorithm development with a focus on applied research for technology transfer into product operations. They have numerous technical papers, patents, and funded research activities in the realms of data science and cyberinfrastructure. Weekly TAI nuggets aligned with student research projects can be found here.

Dr. Charles Vardeman is a research scientist at the University of Notre Dame Center for Research Computing.