Sponsored Content

This guide, “5 Essentials of Every Semantic Layer”, can help you understand the breadth of the modern semantic layer.

The AI-powered data experience

The evolution of front-end technologies made it possible to embed high-quality analytics experiences directly into many software products, further accelerating the proliferation of data products and experiences.

And now, with the arrival of large language models, we are living through another step change in technology that will enable many new features and even the creation of an entirely new class of products across multiple use cases and domains, including data.

LLMs are taking the data consumption layer to the next level with AI-powered data experiences, ranging from chatbots answering questions about your business data to AI agents taking actions based on the signals and anomalies in data.


Semantic layer gives context to LLMs

LLMs are indeed a step change, but inevitably, as with every technology, they come with limitations. LLMs hallucinate; the garbage in, garbage out problem has never been more of a problem. Think about it like this: when it’s hard for humans to understand inconsistent and disorganized data, an LLM will simply compound that confusion and produce wrong answers.

We can’t feed an LLM a database schema and expect it to generate the correct SQL. To operate correctly and execute trustworthy actions, it needs enough context and semantics about the data it consumes; it must understand the metrics, dimensions, entities, and relational aspects of the data that powers it. Basically, an LLM needs a semantic layer.

The semantic layer organizes data into meaningful business definitions and then allows for querying these definitions, rather than querying the database directly.

The ‘querying’ application is as important as the ‘definitions’ because it forces the LLM to query data through the semantic layer, ensuring the correctness of the queries and returned data. With that, the semantic layer solves the LLM hallucination problem.
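To make the idea of querying definitions rather than the database concrete, here is a minimal sketch. It is not any product’s actual implementation: the member names, the SQL fragments, the table name, and the `compile_query` helper are all hypothetical. The point is only that the caller speaks in business terms, and anything that is not a known definition is rejected before SQL is ever produced.

```python
# Hypothetical business definitions; names and SQL fragments are illustrative only.
MEASURES = {
    "orders.count": "COUNT(*)",
    "orders.total_revenue": "SUM(amount)",
}
DIMENSIONS = {
    "orders.status": "status",
    "orders.created_month": "DATE_TRUNC('month', created_at)",
}
TABLE = "public.orders"  # assumed source table


def compile_query(measures, dimensions):
    """Translate a request in business terms into SQL, rejecting unknown members."""
    unknown = [m for m in measures if m not in MEASURES]
    unknown += [d for d in dimensions if d not in DIMENSIONS]
    if unknown:
        raise ValueError(f"Unknown members: {unknown}")
    if not measures:
        raise ValueError("At least one measure is required")

    # Dimensions come first so they can be grouped by position.
    select = [f'{DIMENSIONS[d]} AS "{d}"' for d in dimensions]
    select += [f'{MEASURES[m]} AS "{m}"' for m in measures]
    sql = f"SELECT {', '.join(select)} FROM {TABLE}"
    if dimensions:
        positions = ", ".join(str(i + 1) for i in range(len(dimensions)))
        sql += f" GROUP BY {positions}"
    return sql


print(compile_query(["orders.count"], ["orders.status"]))
```

An LLM that can only emit member names such as `orders.count` cannot invent a column or a join; the worst it can do is ask for a member that does not exist, which fails loudly.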


Moreover, combining LLMs and semantic layers can enable a new generation of AI-powered data experiences. At Cube, we’ve already witnessed many organizations build custom in-house LLM-powered applications, and startups, like Delphi, build out-of-the-box solutions on top of Cube’s semantic layer (demo here).

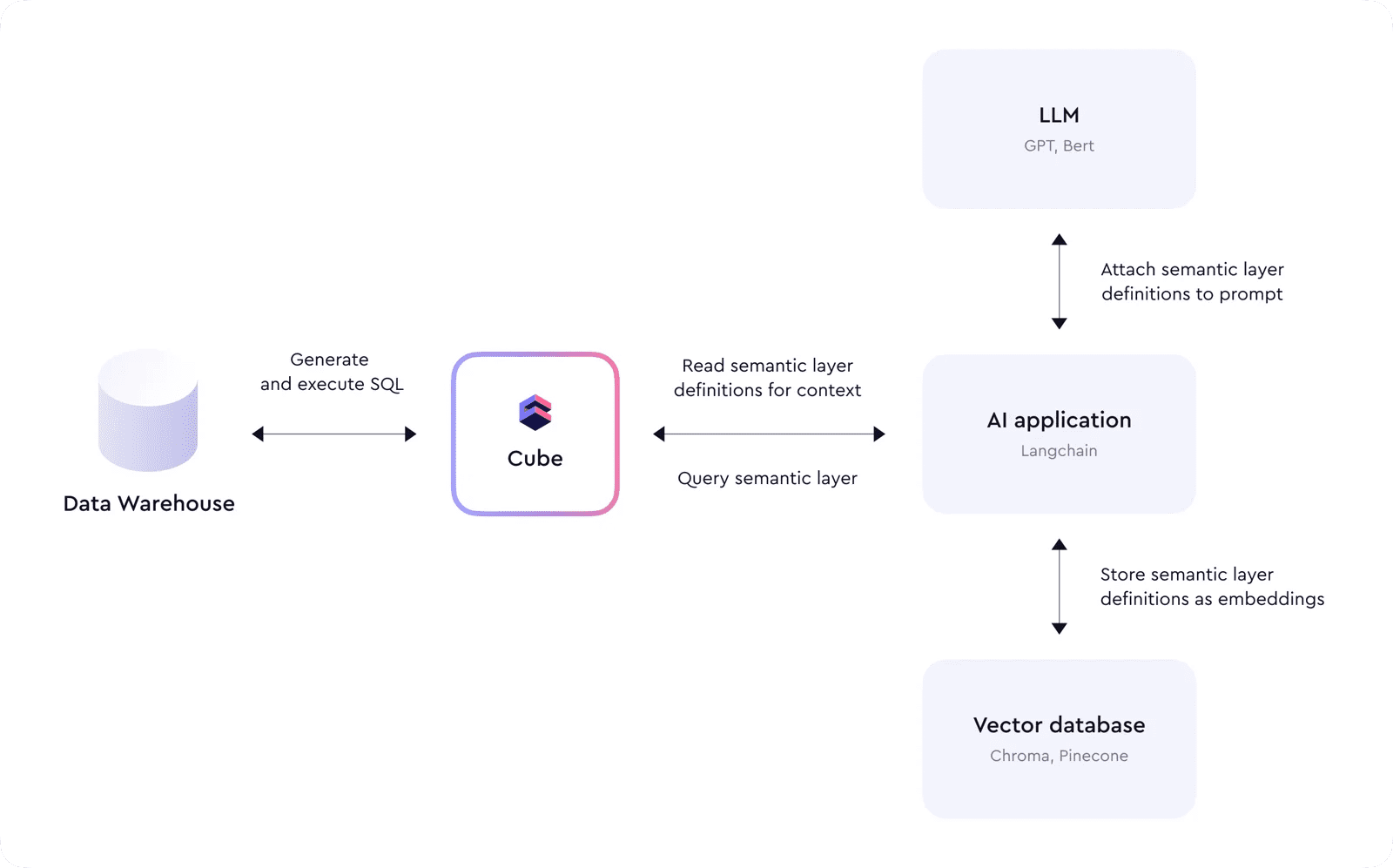

At the edge of this developmental forefront, we see Cube being an integral part of the modern AI tech stack as it sits on top of data warehouses, providing context to AI agents and acting as an interface to query data.

Cube’s data model provides structure and definitions used as context for the LLM to understand data and generate correct queries. The LLM doesn’t have to navigate complex joins and metrics calculations because Cube abstracts these and provides a simple interface that operates on business-level terminology instead of SQL table and column names. This simplification helps the LLM be less error-prone and avoid hallucinations.

For example, an AI-based application would first read Cube’s meta API endpoint, downloading all the definitions of the semantic layer and storing them as embeddings in a vector database. Later, when a user sends a query, these embeddings would be used in the prompt to the LLM to provide additional context. The LLM would then respond with a generated query to Cube, and the application would execute it. This process can be chained and repeated multiple times to answer complicated questions or create summary reports.
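The retrieval half of that flow can be sketched offline. Everything below is an assumption made for illustration: `META` is a simplified, hand-written payload standing in for whatever the meta endpoint returns, `embed` is a toy bag-of-words vector standing in for a real embedding model, and a plain list stands in for the vector database. The LLM call and the final query execution are deliberately left out.

```python
import math
import re
from collections import Counter

# Hypothetical, simplified metadata; a real application would download this.
META = {
    "cubes": [
        {
            "name": "orders",
            "measures": [
                {"name": "orders.count", "description": "number of orders placed"},
                {"name": "orders.total_revenue", "description": "sum of order amounts in USD"},
            ],
            "dimensions": [
                {"name": "orders.status", "description": "order status such as shipped or cancelled"},
            ],
        },
        {
            "name": "users",
            "measures": [{"name": "users.count", "description": "number of registered users"}],
            "dimensions": [{"name": "users.city", "description": "city where the user lives"}],
        },
    ]
}


def embed(text):
    """Toy embedding: word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(meta):
    """Embed every measure and dimension once, ahead of any user question."""
    index = []
    for cube in meta["cubes"]:
        for kind in ("measures", "dimensions"):
            for member in cube[kind]:
                text = f"{member['name']} {member['description']}"
                index.append((member["name"], kind, embed(text)))
    return index


def retrieve(index, question, k=3):
    """Return the k members whose descriptions are most similar to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[2]), reverse=True)
    return [(name, kind) for name, kind, _ in ranked[:k]]


def build_prompt(question, members):
    """Assemble the context that would be sent to the LLM."""
    lines = "\n".join(f"- {name} ({kind})" for name, kind in members)
    return (
        f"Available members:\n{lines}\n\n"
        f"Question: {question}\n"
        "Respond with a JSON query that uses only the members listed above."
    )


index = build_index(META)
question = "What is the total revenue of orders by status?"
print(build_prompt(question, retrieve(index, question)))
```

Because only the closest members are placed in the prompt, the context stays small even when the semantic layer holds many definitions. The same loop can be rerun with follow-up questions to chain several queries.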

Performance

Regarding response times: when working on complicated queries and tasks, the AI system may need to query the semantic layer multiple times, applying different filters.

So, to ensure reasonable performance, these queries need to be cached and not always pushed down to the underlying data warehouses. Cube provides a relational cache engine to build pre-aggregations on top of raw data and implements aggregate awareness to route queries to these aggregates when possible.
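Aggregate awareness can be illustrated with a small routing function. This is a sketch under stated assumptions, not the cache engine’s actual logic: the `Rollup` type, the table names, and the matching rule are hypothetical, and it assumes every measure is additive (counts and sums), so a coarser result can be re-aggregated from a finer rollup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rollup:
    """A hypothetical pre-aggregated table and the members it can serve."""
    table: str
    measures: frozenset
    dimensions: frozenset


ROLLUPS = [
    Rollup(
        "rollup_orders_by_status",
        frozenset({"orders.count"}),
        frozenset({"orders.status"}),
    ),
    Rollup(
        "rollup_orders_by_status_month",
        frozenset({"orders.count", "orders.total_revenue"}),
        frozenset({"orders.status", "orders.created_month"}),
    ),
]
RAW_TABLE = "public.orders"  # assumed fallback in the warehouse


def route(measures, dimensions):
    """Pick the smallest rollup that covers the query, else fall back to raw data."""
    wanted_measures, wanted_dimensions = set(measures), set(dimensions)
    candidates = [
        r for r in ROLLUPS
        if wanted_measures <= r.measures and wanted_dimensions <= r.dimensions
    ]
    if not candidates:
        return RAW_TABLE
    # Fewer dimensions means a coarser, and usually smaller, table.
    return min(candidates, key=lambda r: len(r.dimensions)).table


print(route(["orders.count"], ["orders.status"]))
print(route(["orders.count"], ["orders.city"]))
```

When an AI agent issues many similar queries with different filters, most of them can be answered from a rollup like this, and only queries that no rollup covers reach the warehouse.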

Security

And, finally, security and access control should never be an afterthought when building AI-based applications. As mentioned above, generating raw SQL and executing it in a data warehouse may lead to wrong results.

However, AI poses an additional risk: since it cannot be controlled and may generate arbitrary SQL, direct access between AI and raw data stores can also be a significant security vulnerability. Instead, generating SQL through the semantic layer can ensure granular access control policies are in place.
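One way to picture this is a policy check that sits between the LLM and query execution. The sketch below is purely illustrative: the roles, the policy table, and the `authorize` helper are hypothetical and do not describe a specific product’s access control mechanism. It shows why a structured query is easier to police than arbitrary SQL, since every requested member can be checked by name.

```python
# Hypothetical role-to-member policies; a real deployment would load these from config.
ROLE_POLICIES = {
    "analyst": {"orders.count", "orders.total_revenue", "orders.status"},
    "support": {"orders.count", "orders.status"},
}


class AccessDenied(Exception):
    """Raised when a generated query touches members the role may not see."""


def authorize(role, query):
    """Return the query unchanged if the role may access every requested member."""
    allowed = ROLE_POLICIES.get(role, set())
    requested = set(query.get("measures", [])) | set(query.get("dimensions", []))
    denied = sorted(requested - allowed)
    if denied:
        raise AccessDenied(f"Role '{role}' may not access: {', '.join(denied)}")
    return query


# A query as an LLM might generate it, expressed in semantic-layer members.
generated = {"measures": ["orders.total_revenue"], "dimensions": ["orders.status"]}

authorize("analyst", generated)  # passes
try:
    authorize("support", generated)
except AccessDenied as err:
    print(err)
```

Unknown roles get an empty policy, so the check fails closed rather than open.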

And extra…

We have lots of exciting integrations with the AI ecosystem in store and can’t wait to share them with you. Meanwhile, if you are working on an AI-powered application, consider testing Cube Cloud for free.

Download the guide “5 Essential Features of Every Semantic Layer” to learn more.