OPENAI

The researchers point out that the problem is hard to test because superhuman machines don’t exist. So they used stand-ins. Instead of looking at how humans could supervise superhuman machines, they looked at how GPT-2, a model that OpenAI released five years ago, could supervise GPT-4, OpenAI’s latest and most powerful model. “If you can do that, it might be evidence that you can use similar techniques to have humans supervise superhuman models,” says Collin Burns, another researcher on the superalignment team.

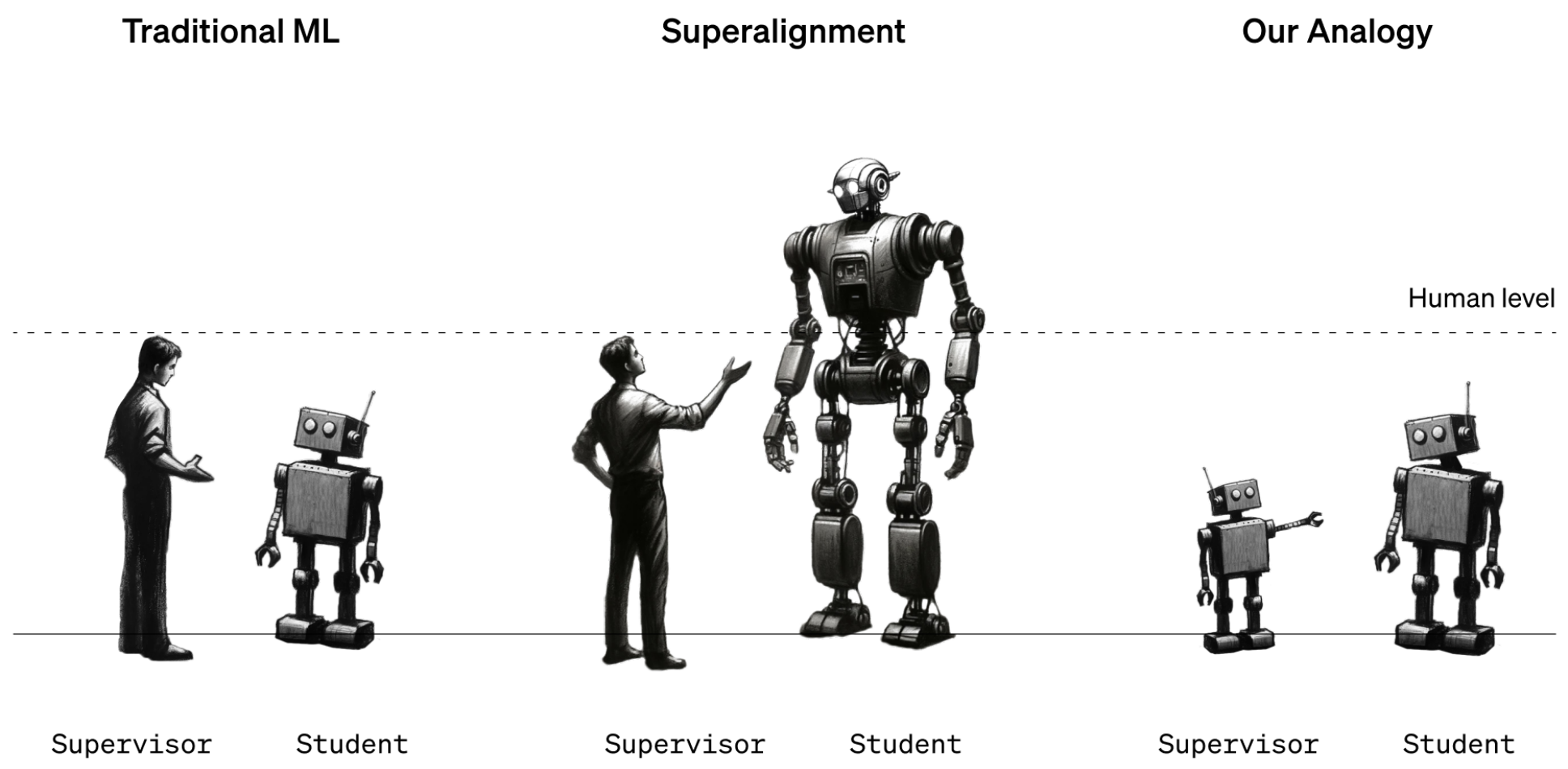

The team took GPT-2 and trained it to perform a handful of different tasks, including a set of chess puzzles and 22 common natural-language-processing tests that assess inference, sentiment analysis, and so on. They used GPT-2’s responses to those tests and puzzles to train GPT-4 to perform the same tasks. It’s as if a twelfth grader were taught how to do a task by a third grader. The trick was to do it without GPT-4 taking too big a hit in performance.
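To make the setup concrete, here is a toy sketch in Python. Everything in it is a hypothetical stand-in, not OpenAI’s method or code. The “task” is deciding whether an integer from 0 to 99 is at least 50. The weak teacher makes both a systematic error and scattered mistakes. The strong student is restricted to simple cutoff rules, which plays the role of a model whose pretraining already points it toward the right kind of answer. The student is trained once on the teacher’s guesses and once on correct answers.

```python
"""Toy weak-to-strong supervision: a hypothetical illustration, not OpenAI's code."""

# Inputs are the integers 0..99; the correct answer is "x >= 50".
XS = list(range(100))
TRUTH = [x >= 50 for x in XS]


def weak_teacher(x: int) -> bool:
    # Hypothetical weak model: a systematic error (it believes the cutoff is 60)
    # plus scattered mistakes on every multiple of 5.
    return (x >= 60) != (x % 5 == 0)


def fit_strong_student(labels: list[bool]) -> int:
    # Hypothetical strong model whose prior already says "the answer is a cutoff".
    # Training only picks the cutoff t that agrees with the most labels it is given.
    # max() keeps the first t on ties.
    return max(range(101), key=lambda t: sum((x >= t) == y for x, y in zip(XS, labels)))


def accuracy(predictions: list[bool]) -> float:
    # Fraction of predictions that match the correct answers.
    return sum(p == y for p, y in zip(predictions, TRUTH)) / len(TRUTH)


teacher_labels = [weak_teacher(x) for x in XS]
t_student = fit_strong_student(teacher_labels)  # trained on the teacher's guesses
t_ceiling = fit_strong_student(TRUTH)           # trained on correct answers

teacher_acc = accuracy(teacher_labels)
student_acc = accuracy([x >= t_student for x in XS])
ceiling_acc = accuracy([x >= t_ceiling for x in XS])
print(teacher_acc, student_acc, ceiling_acc)  # 0.74 0.89 1.0
```

The student cannot reproduce the teacher’s scattered mistakes, so it ends up more accurate than its teacher. It still inherits the teacher’s systematic error, however, and falls short of the same student trained on correct answers.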

The results were mixed. The team measured the gap in performance between GPT-4 trained on GPT-2’s best guesses and GPT-4 trained on correct answers. They found that GPT-4 trained by GPT-2 performed 20% to 70% better than GPT-2 on the language tasks but did less well on the chess puzzles.
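One way to turn that comparison into a single number is sketched below. Take how far the weakly supervised strong model moves beyond its weak teacher, then divide by how far a strong model trained on correct answers gets. OpenAI’s paper on this work reports a measure of this kind under the name “performance gap recovered.” The function name and the accuracies in the usage line are hypothetical and are not figures from the study.

```python
def performance_gap_recovered(weak: float, weak_to_strong: float, strong_ceiling: float) -> float:
    """Fraction of the weak-to-ceiling gap closed by the weakly supervised strong model.

    0.0 means the strong model is no better than its weak teacher;
    1.0 means it matches a strong model trained on correct answers.
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong_ceiling must exceed weak for the gap to be defined")
    return (weak_to_strong - weak) / gap


# Hypothetical accuracies, chosen only to show the arithmetic.
print(performance_gap_recovered(weak=0.5, weak_to_strong=0.625, strong_ceiling=0.75))  # 0.5
```

Here the strong model closes half of the distance between the weak teacher and the strong ceiling. The ratio is used instead of raw accuracy so that results on easy and hard tasks can be compared on the same scale.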

The fact that GPT-4 outdid its teacher at all is impressive, says team member Pavel Izmailov: “This is a really surprising and positive result.” But it fell far short of what it could do by itself, he says. They conclude that the approach is promising but needs more work.

“It’s an interesting idea,” says Thilo Hagendorff, an AI researcher at the University of Stuttgart in Germany who works on alignment. But he thinks that GPT-2 might be too dumb to be a good teacher. “GPT-2 tends to give nonsensical responses to any task that is slightly complex or requires reasoning,” he says. Hagendorff would like to know what would happen if GPT-3 were used instead.

He also notes that this approach does not address Sutskever’s hypothetical scenario in which a superintelligence hides its true behavior and pretends to be aligned when it isn’t. “Future superhuman models will likely possess emergent abilities which are unknown to researchers,” says Hagendorff. “How can alignment work in these cases?”

But it’s easy to point out shortcomings, he says. He’s pleased to see OpenAI moving from speculation to experiment: “I applaud OpenAI for their effort.”

OpenAI now wants to recruit others to its cause. Alongside this research update, the company announced a new $10 million money pot that it plans to use to fund people working on superalignment. It will offer grants of up to $2 million to university labs, nonprofits, and individual researchers, and one-year fellowships of $150,000 to graduate students. “We’re really excited about this,” says Aschenbrenner. “We really think there’s a lot that new researchers can contribute.”