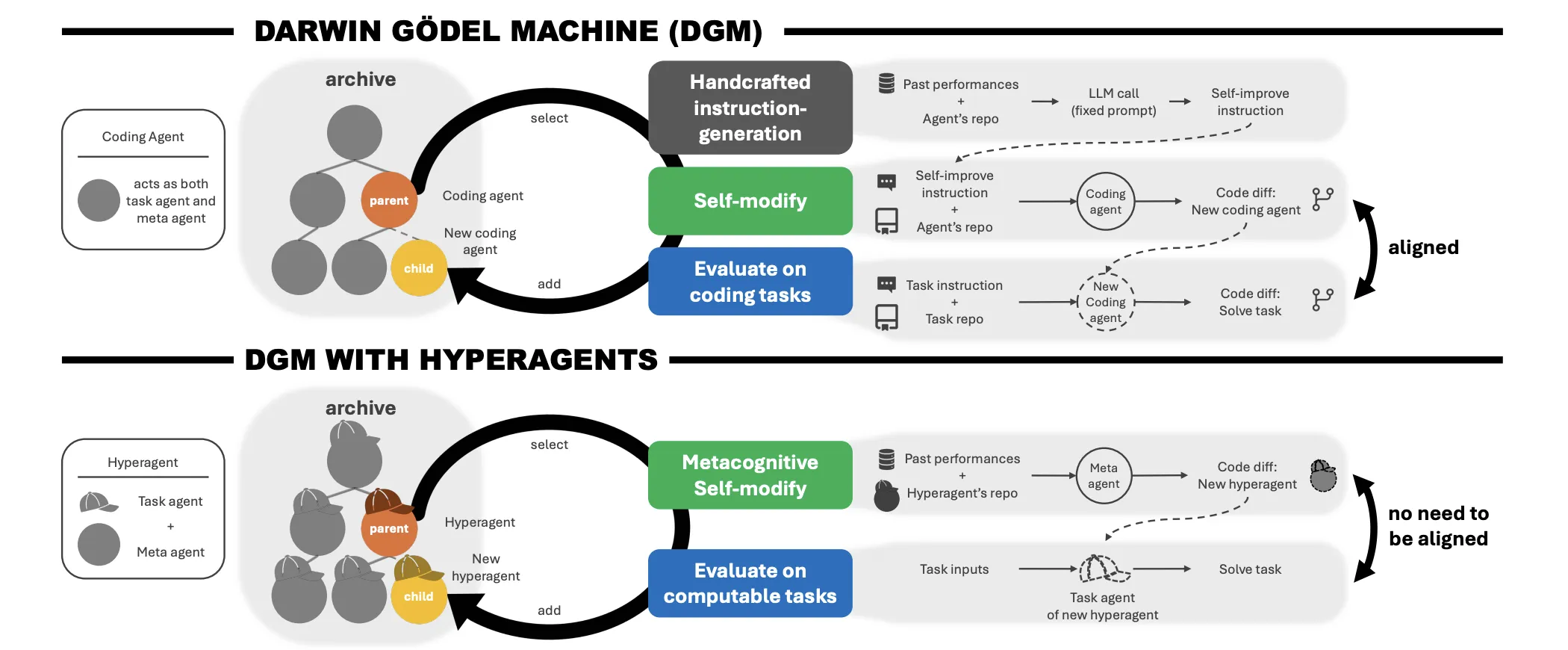

The dream of recursive self-improvement in AI, where a system doesn’t just get better at a task but gets better at learning, has long been the ‘holy grail’ of the field. While theoretical models like the Gödel Machine have existed for decades, they remained largely impractical in real-world settings. That changed with the Darwin Gödel Machine (DGM), which showed that open-ended self-improvement was achievable in coding.

However, DGM faced a significant hurdle: it relied on a fixed, handcrafted meta-level mechanism to generate improvement instructions. This capped the system’s growth at the limits of its human-designed meta agent. Researchers from the University of British Columbia, Vector Institute, University of Edinburgh, New York University, Canada CIFAR AI Chair, FAIR at Meta, and Meta Superintelligence Labs have introduced Hyperagents. This framework makes the meta-level modification procedure itself editable, removing the assumption that task performance and self-modification skill must be domain-aligned.

The Problem: The Infinite Regress of Meta-Levels

A recurring problem with current self-improving systems is ‘infinite regress’. If you have a task agent (the part that solves the problem) and a meta agent (the part that improves the task agent), who improves the meta agent? Adding a ‘meta-meta’ layer merely shifts the problem upward.

Furthermore, earlier systems relied on an alignment between the task and the improvement process. In coding, getting better at the task usually translates into getting better at self-modification, because both involve reading and editing code. But in non-coding domains, such as poetry or robotics, improving the task-solving skill doesn’t necessarily improve the ability to analyze and modify source code.

Hyperagents: One Editable Program

The DGM-Hyperagent (DGM-H) framework addresses this by integrating the task agent and the meta agent into a single, self-referential, and fully modifiable program. In this architecture, an agent is defined as any computable program, which may include foundation model (FM) calls and external tools.

Because the meta agent is part of the same editable codebase as the task agent, it can rewrite its own modification procedures. The research team calls this metacognitive self-modification. The hyperagent doesn’t just search for a better solution; it also improves the mechanism responsible for generating future improvements.
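To make the distinction concrete, here is a toy Python sketch of our own (not code from the paper or its repository). It assumes a drastically simplified agent whose task part is just an integer `guess` and whose meta part is an `improve` function stored on the same object; the names `Agent`, `fixed_meta`, and `make_accelerating_improver` are hypothetical. A DGM-style loop always applies the same handcrafted `fixed_meta`, while the hyperagent-style loop calls whatever `improve` procedure the agent currently carries, and that procedure is allowed to replace itself.

```python
from dataclasses import dataclass, replace
from typing import Callable


@dataclass(frozen=True)
class Agent:
    """Toy agent: `guess` stands in for the task agent, `improve` for the meta agent.

    Both live on the same object, so a modification step can rewrite either one.
    """
    guess: int
    improve: Callable[["Agent"], "Agent"]


def fixed_meta(agent: Agent) -> Agent:
    """DGM-style: a handcrafted rule that only ever edits the task part."""
    return replace(agent, guess=agent.guess + 1)


def make_accelerating_improver(step: int) -> Callable[[Agent], Agent]:
    """Hyperagent-style: edits the task part AND installs a new improve procedure."""
    def improve(agent: Agent) -> Agent:
        # Metacognitive step: the successor's improver uses a doubled step size.
        return Agent(guess=agent.guess + step,
                     improve=make_accelerating_improver(step * 2))
    return improve


def run_dgm(agent: Agent, steps: int) -> Agent:
    for _ in range(steps):
        agent = fixed_meta(agent)  # the meta level never changes
    return agent


def run_hyperagent(agent: Agent, steps: int) -> Agent:
    for _ in range(steps):
        agent = agent.improve(agent)  # uses the agent's own, possibly rewritten, improver
    return agent
```

Starting from `Agent(guess=0, improve=make_accelerating_improver(1))`, five DGM-style steps move the guess to 5, whereas five hyperagent steps reach 31, because each call also installs an improver with a doubled step size. In the real system, edits are made to source code through foundation-model calls rather than by a hand-written rule like this one.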

Comparison of Self-Improvement Architectures

| Component | Darwin Gödel Machine (DGM) | DGM with Hyperagents (DGM-H) |
|---|---|---|
| Meta-level mechanism | Fixed and handcrafted | Fully editable and modifiable |
| Domain alignment | Required (primarily coding) | Not required (any computable task) |
| Modification type | Task-level only | Metacognitive (task + meta) |

Results: Beyond Local Optima in Robotics and Paper Review

The research team tested DGM-H across diverse domains: coding, paper review, robotics reward design, and Olympiad-level math grading.

In robotics reward design, the hyperagent was tasked with writing Python reward functions to train a quadruped robot in the Genesis simulator. During the training phase, agents had to design rewards for walking forward. For held-out testing, the agents had to generate, zero-shot, reward functions for a different task: maximizing the robot’s torso height.

DGM-H significantly improved performance, rising from an initial score of 0.060 to 0.372 (CI: 0.355–0.436). It discovered non-myopic reward functions that induced jumping behavior, a better strategy for maximizing height than the local optimum of simply standing tall.
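The sketch below shows, in plain Python, what a reward function for the held-out torso-height task might look like. It is a hypothetical illustration, not one of the reward functions DGM-H generated. The function names, the `standing_z` placeholder, and the weights are all assumptions, and the function takes plain floats instead of Genesis simulator state so it runs on its own. It rewards only height above a nominal standing pose, plus upward torso velocity, so a policy gets nothing for standing still and something for pushing off. This is one way a reward can avoid the standing-tall local optimum.

```python
from typing import Sequence


def torso_height_reward(torso_z: float, torso_vz: float,
                        standing_z: float = 0.42,
                        height_weight: float = 10.0,
                        lift_weight: float = 1.0) -> float:
    """Hypothetical per-step reward for a 'maximize torso height' task.

    standing_z, height_weight, and lift_weight are placeholder values.
    """
    # Only height above the nominal standing pose is rewarded, so standing tall earns 0.
    height_term = height_weight * max(0.0, torso_z - standing_z)
    # Upward torso velocity is rewarded, encouraging push-off (jump-like) motions.
    lift_term = lift_weight * max(0.0, torso_vz)
    return height_term + lift_term


def episode_return(torso_zs: Sequence[float], torso_vzs: Sequence[float]) -> float:
    """Sum of per-step rewards over a trajectory of torso heights and vertical velocities."""
    return sum(torso_height_reward(z, vz) for z, vz in zip(torso_zs, torso_vzs))
```

With these placeholder values, a four-step standing trajectory scores 0.0. A crouch-and-jump trajectory with heights `[0.35, 0.45, 0.60, 0.45]` and vertical velocities `[0.0, 1.5, 0.5, -1.5]` scores about 4.4.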

In the paper review domain, DGM-H improved test-set performance from 0.0 to 0.710 (CI: 0.590–0.750), surpassing a representative static baseline. Its review agents moved beyond superficial behavioral instructions to multi-stage evaluation pipelines with explicit checklists and decision rules.
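As a rough picture of what a multi-stage evaluation pipeline with explicit checklists and decision rules means, here is a hypothetical Python sketch. The checklist items, the 0.75 acceptance threshold, and the `review` function are illustrative assumptions, not the pipeline DGM-H produced. In the actual system, each checklist answer would presumably come from a foundation-model call; here the answers are passed in directly.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical checklist; the items and the threshold below are illustrative placeholders.
CHECKLIST: List[str] = [
    "claims_supported_by_experiments",
    "baselines_are_appropriate",
    "method_is_reproducible",
    "related_work_is_adequate",
]
ACCEPT_THRESHOLD = 0.75


@dataclass
class ReviewDecision:
    decision: str
    score: float
    failed_items: List[str]


def review(checks: Dict[str, bool], fatal_flaw: bool) -> ReviewDecision:
    # Stage 1: hard gate. Any fatal flaw rejects before the checklist is scored.
    if fatal_flaw:
        return ReviewDecision("reject", 0.0, ["fatal_flaw"])
    # Stage 2: explicit checklist; a missing answer counts as a failure.
    failed = [item for item in CHECKLIST if not checks.get(item, False)]
    score = 1.0 - len(failed) / len(CHECKLIST)
    # Stage 3: explicit decision rule over the checklist score.
    decision = "accept" if score >= ACCEPT_THRESHOLD else "reject"
    return ReviewDecision(decision, score, failed)
```

A paper that passes three of four checklist items and has no fatal flaw scores 0.75 and is accepted. One that fails two items scores 0.5 and is rejected. Any fatal flaw short-circuits to rejection before the checklist runs.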

Transferring the ‘Ability to Improve’

A critical finding for AI researchers is that these meta-level improvements are general and transferable. To quantify this, the research team introduced the improvement@k (imp@k) metric, which measures the performance gain achieved by a fixed meta agent over k modification steps.
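One way to read imp@k is sketched below. The details are our assumption, not the paper's exact definition. `scores[0]` is the starting task agent's score, and `scores[i]` is the score after the i-th step taken by the same fixed meta agent. The gain is measured by the best agent found within k steps, so a meta agent that never produces a better agent scores 0.0. The paper may instead average over runs or use the final agent rather than the best one.

```python
from typing import Sequence


def imp_at_k(scores: Sequence[float], k: int) -> float:
    """Assumed reading of improvement@k (imp@k).

    scores[0]: score of the starting task agent.
    scores[i], i >= 1: score of the agent produced by the i-th modification step
    of one fixed meta agent.
    Returns the gain of the best agent found within k steps over the start, so a
    meta agent that never produces a better agent gets 0.0.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    if len(scores) < 2:
        raise ValueError("need an initial score plus at least one modified agent")
    window = scores[: k + 1]  # the start plus up to k modified agents
    return max(window) - scores[0]
```

For example, `imp_at_k([0.25, 0.125, 0.75, 0.5], 3)` returns 0.5. With `k=1`, the only modified agent is worse than the start, so the result is 0.0.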

Hyperagents optimized on paper review and robotics tasks were then transferred to the Olympiad-level math grading domain. The meta agents from human-customized DGM runs failed to generate improvements in this new setting (imp@50 = 0.0), while the transferred DGM-H hyperagents achieved an imp@50 of 0.630. This indicates that the system autonomously acquired self-improvement strategies that carry over to an unseen domain.

Emergent Infrastructure: Tracking and Memory

Without explicit instruction, hyperagents developed sophisticated engineering tools to support their own progress:

- Performance Tracking: They introduced classes to log metrics across generations, identifying which changes led to sustained gains and which caused regressions.

- Persistent Memory: They implemented timestamped storage for synthesized insights and causal hypotheses, allowing later generations to build on earlier discoveries.

- Compute-Aware Planning: They developed logic to adjust modification strategies based on the remaining experiment budget, prioritizing fundamental architectural changes early and conservative refinements late.
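The three behaviors listed above can be sketched as ordinary Python utilities. This is a hypothetical reconstruction for illustration only. The class and function names, the gain/regression labels (each generation is compared only with the one before it), the JSON serialization, and the 50% budget cutoff are all assumptions, not the code the hyperagents actually wrote.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class PerformanceTracker:
    """Performance tracking: one score per generation, each labeled against the previous one."""
    history: List[float] = field(default_factory=list)

    def log(self, score: float) -> str:
        if not self.history:
            label = "baseline"
        elif score > self.history[-1]:
            label = "gain"
        elif score < self.history[-1]:
            label = "regression"
        else:
            label = "flat"
        self.history.append(score)
        return label

    def best_generation(self) -> int:
        # Index of the highest-scoring generation so far (the earliest one wins ties).
        return max(range(len(self.history)), key=lambda i: self.history[i])


@dataclass
class InsightMemory:
    """Persistent memory: timestamped insights, serializable so a later generation can reload them."""
    entries: List[Dict[str, Any]] = field(default_factory=list)

    def add(self, insight: str, generation: int, timestamp: Optional[float] = None) -> None:
        self.entries.append({
            "timestamp": time.time() if timestamp is None else timestamp,
            "generation": generation,
            "insight": insight,
        })

    def recent(self, n: int = 3) -> List[str]:
        return [entry["insight"] for entry in self.entries[-n:]]

    def to_json(self) -> str:
        return json.dumps(self.entries)

    @classmethod
    def from_json(cls, text: str) -> "InsightMemory":
        return cls(entries=json.loads(text))


def plan_modification(used_budget: int, total_budget: int) -> str:
    """Compute-aware planning: bold architectural edits early, conservative refinements late."""
    if total_budget <= 0:
        raise ValueError("total_budget must be positive")
    remaining_fraction = 1.0 - used_budget / total_budget
    # Assumed cutoff: switch strategies once half the budget is spent.
    return "architectural_change" if remaining_fraction > 0.5 else "conservative_refinement"
```

Serializing the memory to JSON is one simple way to let it outlive a single generation. The string can be written to disk and reloaded with `InsightMemory.from_json` by the next variant.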

Key Takeaways

- Unification of Task and Meta Agents: Hyperagents end the ‘infinite regress’ of meta-levels by merging the task agent (which solves problems) and the meta agent (which improves the system) into a single, self-referential program.

- Metacognitive Self-Modification: Unlike prior systems with fixed improvement logic, DGM-H can edit its own ‘improvement procedure,’ essentially rewriting the rules for how it generates better versions of itself.

- Domain-Agnostic Scaling: By removing the requirement for domain alignment (which previously limited self-improvement mostly to coding), Hyperagents extend effective self-improvement to other computable tasks, including robotics reward design and academic paper review.

- Transferable ‘Learning’ Skills: Meta-level improvements generalize. Hyperagents optimized on robotics reward design and paper review carried their improvement strategies over to an entirely different domain, Olympiad-level math grading.

- Emergent Engineering Infrastructure: In pursuit of better performance, hyperagents autonomously developed sophisticated engineering tools, such as persistent memory, performance tracking, and compute-aware planning, without explicit human instructions.

Check out the Paper and Repo.