Picture by Creator. Base picture from pch-vector.

Pure Language Processing —or NLP-has developed enormously, and GPT fashions are on the forefront of this revolution.

At present LLM fashions can be utilized in all kinds of purposes.

To keep away from pointless duties and improve my workflow, I started exploring the potential for coaching GPT to formulate SQL queries for me.

And that is when a superb concept appeared:

Utilizing the ability of GPT fashions in deciphering pure language and remodeling it into structured SQL queries.

Might this be potential?

Let’s uncover all of it collectively!

So let’s begin from the start…

A few of you could be already aware of the idea of few shot prompting, whereas others may need not heard of it by no means earlier than.

So…What’s it?

The fundamental concept right here is to make use of some express examples-or shots-to information the LLM to reply in a particular manner.

For this reason it’s known as Few Shot prompting.

To place it merely, by showcasing a couple of examples of the consumer input-sample prompts-along with the specified LLM output, we are able to train the mannequin to ship some enhanced output that follows our preferences.

By doing so we’re increasing the information of the mannequin on some particular area to generate some output that aligns higher with our desired activity.

So let’s exemplify this!

All through this tutorial, I’ll be utilizing a predefined operate known as chatgpt_call() to immediate the GPT mannequin. If you wish to additional perceive it, you go test the next article.

Think about I would like ChatGPT to explain the time period optimism.

If I merely ask GPT to explain it, I’ll acquire a serious-and boring-description.

## Code Block

response = chatgpt_call("Train me about optimism. Maintain it brief.")

print(response)

With the corresponding output:

Screenshot of my Jupyter Pocket book. Prompting GPT.

Nonetheless, think about I’d fairly prefer to get one thing extra poetic. I can add to my immediate some extra element specifying that I need a poetic definition.

## Code Block

response = chatgpt_call("Train me about optimism. Maintain it brief. Attempt to create a poetic definition.")

print(response)

However this second output seems to be identical to a poem and has nothing to do with my desired output.

Screenshot of my Jupyter Pocket book. Prompting GPT.

What can I do?

I may element much more the immediate, and hold iterating till I obtain some good output. Nonetheless, this might take plenty of time.

As a substitute, I can present the mannequin what the form of poetic description I favor designing an instance and displaying it to the mannequin.

## Code Block

immediate = """

Your activity is to reply in a constant fashion aligned with the next fashion.

: Train me about resilience.

: Resilience is sort of a tree that bends with the wind however by no means breaks.

It's the potential to bounce again from adversity and hold shifting ahead.

: Train me about optimism.

"""

response = chatgpt_call(immediate)

print(response)

And the output is precisely what I used to be searching for.

Screenshot of my Jupyter Pocket book. Prompting GPT.

So… how can we translate this into our particular case of SQL queries?

ChatGPT is already able to producing SQL queries out of Pure Language prompts. We don’t even have to point out the mannequin any desk, simply formulate a hypothetical computation and it’ll do it for us.

## Code Block

user_input = """

Assuming I've each product and order tables, may you generate a single desk that contained all the data

of each product along with what number of occasions has it been offered?

"""

immediate = f"""

Given the next pure language immediate, generate a hypothetical question that fulfills the required activity in SQL.

{user_input}

"""

response = chatgpt_call(immediate)

print(response)

Nonetheless, and as you already know, the extra context we give to the mannequin, the higher outputs it would generate.

Screenshot of my Jupyter Pocket book. Prompting GPT.

All through this tutorial I’m splitting the enter prompts into the particular demand of the consumer and the high-level behaviour anticipated from the mannequin. It is a good observe to enhance our interplay with the LLM and be extra concise in our prompts. You’ll be able to be taught extra within the following article.

So let’s think about I’m working with two primary tables: PRODUCTS and ORDERS

Picture by Creator. Tables for use all through the tutorial.

If I ask GPT for a easy question, the mannequin will give an answer immediately, simply because it did at first, however with particular tables for my case.

## Code Block

user_input = """

What mannequin of TV has been offered probably the most within the retailer?

"""

immediate = f"""

Given the next SQL tables, your job is to offer the required SQL queries to fulfil any consumer request.

Tables: <{sql_tables}>

Person request: ```{user_input}```

"""

response = chatgpt_call(immediate)

print(response)

You could find the sql_tables ultimately of this text!

And the output seems to be like as follows!

Screenshot of my Jupyter Pocket book. Prompting GPT.

Nonetheless, we are able to observe some issues within the earlier output.

- The computation is partially fallacious, as it’s only contemplating these TVs which have been already delivered. And any issued order-be it delivered or not-should be thought-about as a sale.

- The question just isn’t formatted as I would love it to be.

So first let’s give attention to displaying the mannequin the right way to compute the required question.

#1. Fixing some misunderstandings of the mannequin

On this first case, the mannequin considers solely these merchandise which have been delivered as offered, however this isn’t true. We will merely repair this misunderstanding by displaying two totally different examples the place I compute related queries.

## Few_shot examples

fewshot_examples = """

-------------- FIRST EXAMPLE

Person: What mannequin of TV has been offered probably the most within the retailer when contemplating all issued orders.

System: You first want to hitch each orders and merchandise tables, filter solely these orders that correspond to TVs

and rely the variety of orders which have been issued:

SELECT P.product_name AS model_of_tv, COUNT(*) AS total_sold

FROM merchandise AS P

JOIN orders AS O ON P.product_id = O.product_id

WHERE P.product_type="TVs"

GROUP BY P.product_name

ORDER BY total_sold DESC

LIMIT 1;

-------------- SECOND EXAMPLE

Person: What is the offered product that has been already delivered probably the most?

System: You first want to hitch each orders and merchandise tables, rely the variety of orders which have

been already delivered and simply hold the primary one:

SELECT P.product_name AS model_of_tv, COUNT(*) AS total_sold

FROM merchandise AS P

JOIN orders AS O ON P.product_id = O.product_id

WHERE P.order_status="Delivered"

GROUP BY P.product_name

ORDER BY total_sold DESC

LIMIT 1;

"""

And now if we immediate once more the mannequin and embrace the earlier examples on it, one can see that the corresponding question won’t be solely correct-the earlier question was already working-but may also take into account gross sales as we would like it to!

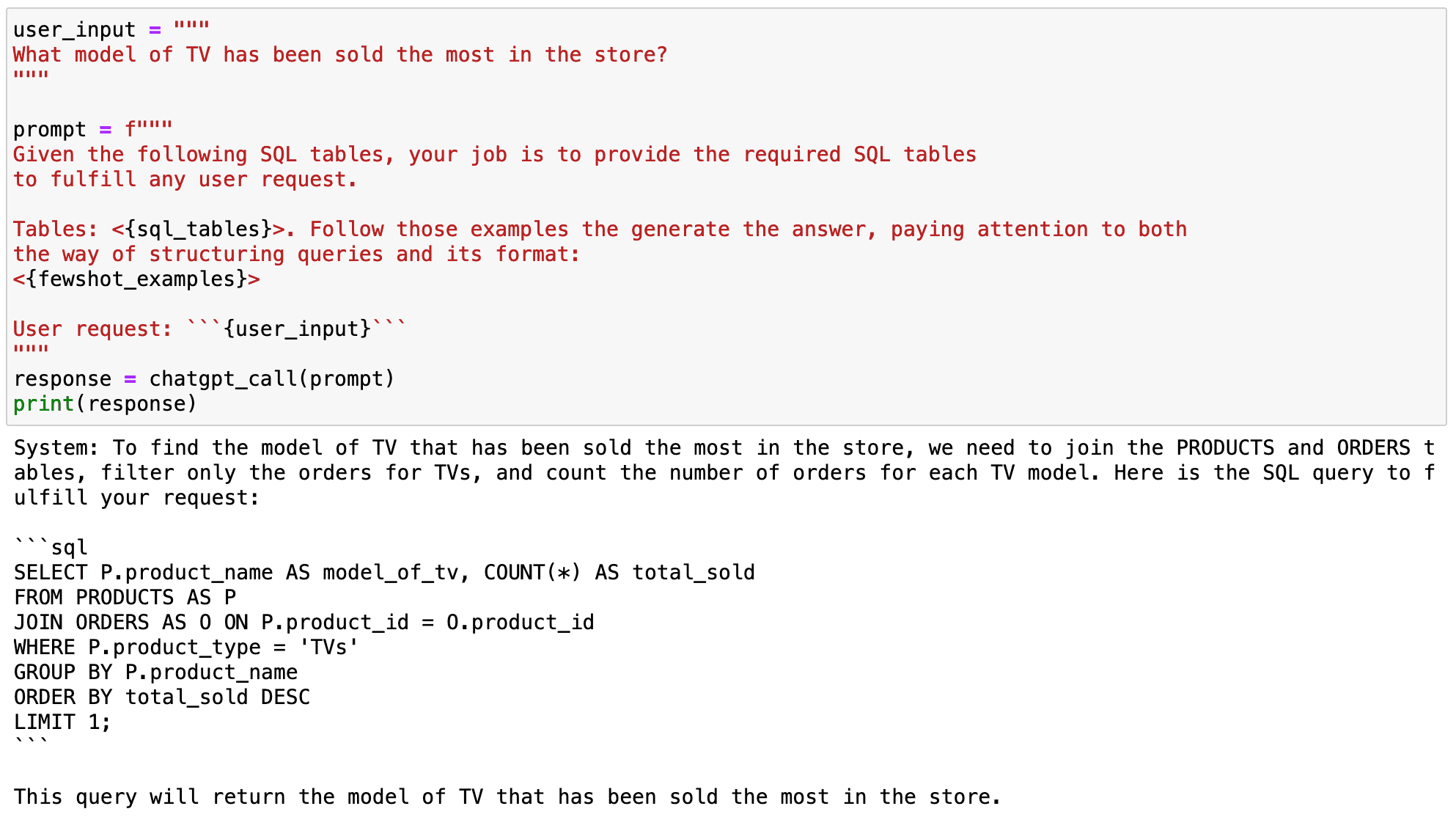

## Code Block

user_input = """

What mannequin of TV has been offered probably the most within the retailer?

"""

immediate = f"""

Given the next SQL tables, your job is to offer the required SQL tables

to meet any consumer request.

Tables: <{sql_tables}>. Observe these examples the generate the reply, listening to each

the best way of structuring queries and its format:

<{fewshot_examples}>

Person request: ```{user_input}```

"""

response = chatgpt_call(immediate)

print(response)

With the next output:

Screenshot of my Jupyter Pocket book. Prompting GPT.

Now if we test the corresponding question…

## Code Block

pysqldf("""

SELECT P.product_name AS model_of_tv, COUNT(*) AS total_sold

FROM PRODUCTS AS P

JOIN ORDERS AS O ON P.product_id = O.product_id

WHERE P.product_type="TVs"

GROUP BY P.product_name

ORDER BY total_sold DESC

LIMIT 1;

""")

It really works completely!

Screenshot of my Jupyter Pocket book. Prompting GPT.

#2. Formatting SQL Queries

Few-short prompting can be a option to customise the mannequin for our personal function or fashion.

If we return to the examples earlier than, the queries had no format in any respect. And everyone knows there are some good practices-together with some private oddities-that enable us to higher learn SQL queries.

For this reason we are able to use few-shot prompting to point out the mannequin the best way we like to question – with our good practices or simply our oddities-and prepare the mannequin to offer us our formatted desired SQL queries.

So, now I’ll put together the identical examples as earlier than however following my format preferences.

## Code Block

fewshot_examples = """

---- EXAMPLE 1

Person: What mannequin of TV has been offered probably the most within the retailer when contemplating all issued orders.

System: You first want to hitch each orders and merchandise tables, filter solely these orders that correspond to TVs

and rely the variety of orders which have been issued:

SELECT

P.product_name AS model_of_tv,

COUNT(*) AS total_sold

FROM merchandise AS P

JOIN orders AS O

ON P.product_id = O.product_id

WHERE P.product_type="TVs"

GROUP BY P.product_name

ORDER BY total_sold DESC

LIMIT 1;

---- EXAMPLE 2

Person: What's the newest order that has been issued?

System: You first want to hitch each orders and merchandise tables and filter by the newest order_creation datetime:

SELECT

P.product_name AS model_of_tv

FROM merchandise AS P

JOIN orders AS O

ON P.product_id = O.product_id

WHERE O.order_creation = (SELECT MAX(order_creation) FROM orders)

GROUP BY p.product_name

LIMIT 1;

"""

As soon as the examples have been outlined, we are able to enter them into the mannequin in order that it might probably mimic the fashion showcased.

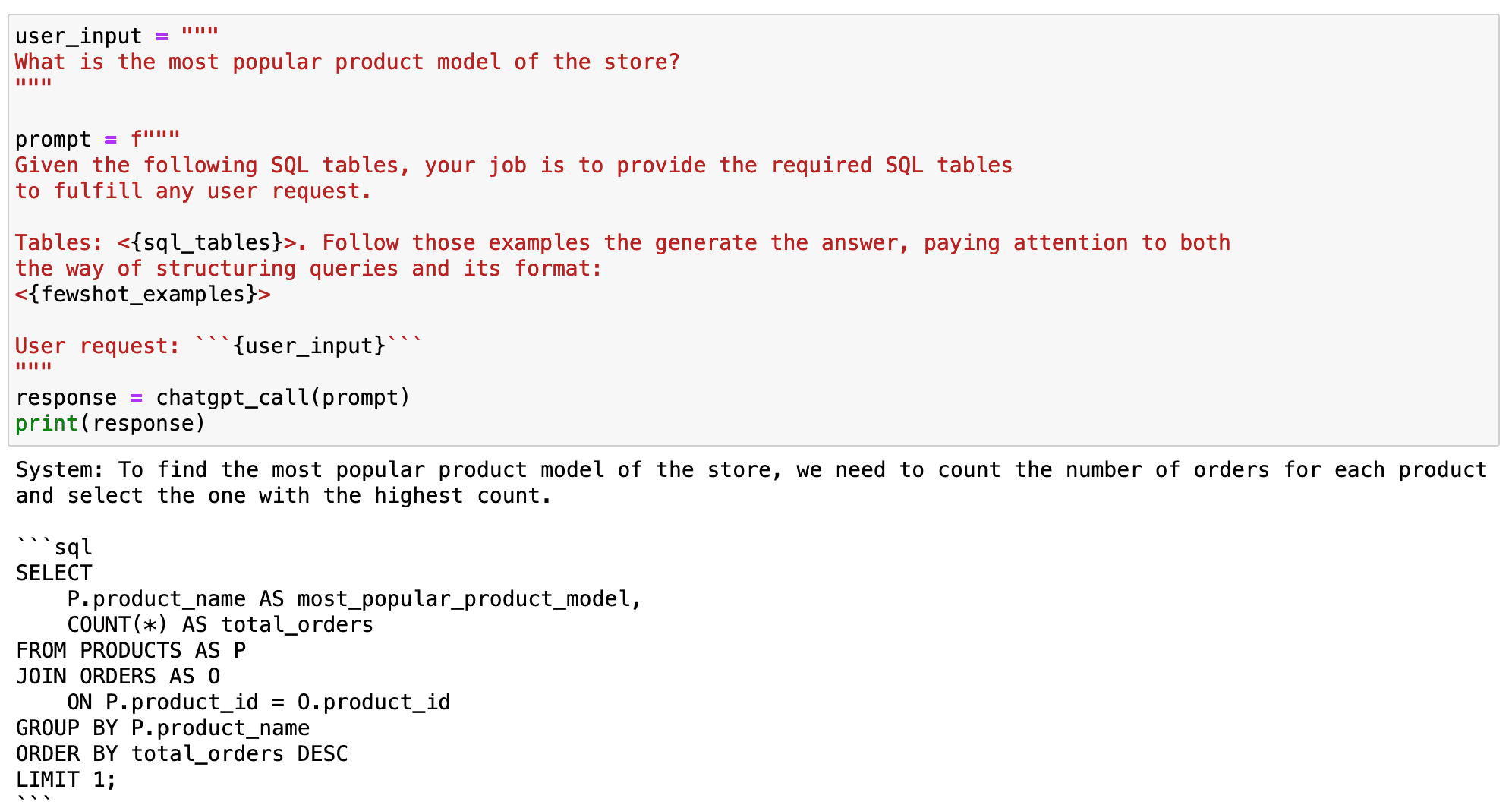

As you’ll be able to observe within the following code field, after displaying GPT what we anticipate from it, it replicates the fashion of the given examples to provide any new output accordingly.

## Code Block

user_input = """

What's the hottest product mannequin of the shop?

"""

immediate = f"""

Given the next SQL tables, your job is to offer the required SQL tables

to meet any consumer request.

Tables: <{sql_tables}>. Observe these examples the generate the reply, listening to each

the best way of structuring queries and its format:

<{fewshot_examples}>

Person request: ```{user_input}```

"""

response = chatgpt_call(immediate)

print(response)

And as you’ll be able to observe within the following output, it labored!

Screenshot of my Jupyter Pocket book. Prompting GPT.

#3. Coaching the mannequin to compute some particular variable.

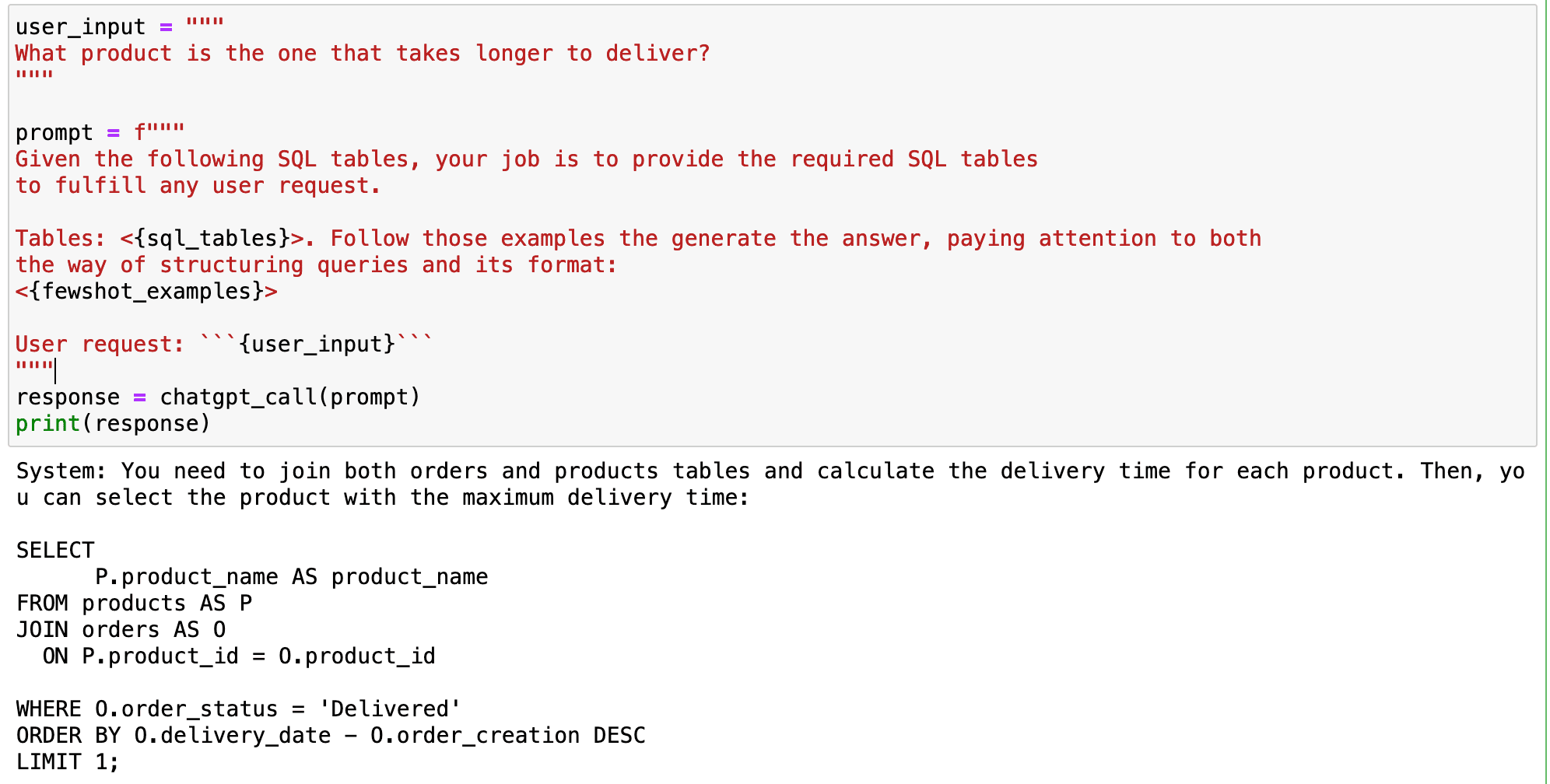

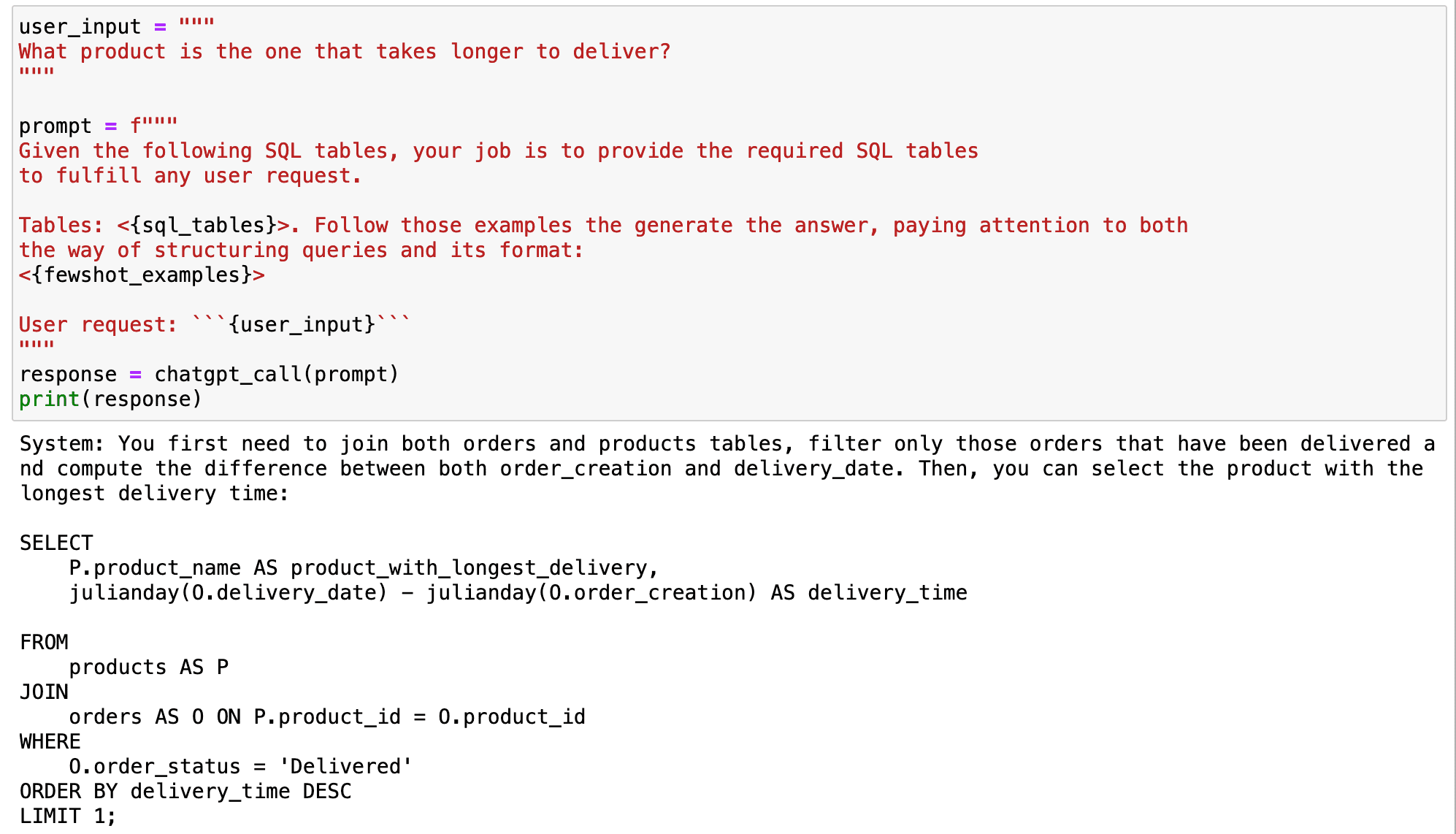

Let’s dive deeper into an illustrative situation. Suppose we goal to compute which product takes the longest to ship. We pose this query to the mannequin in pure language, anticipating an accurate SQL question.

## Code Block

user_input = """

What product is the one which takes longer to ship?

"""

immediate = f"""

Given the next SQL tables, your job is to offer the required SQL tables

to meet any consumer request.

Tables: <{sql_tables}>. Observe these examples the generate the reply, listening to each

the best way of structuring queries and its format:

<{fewshot_examples}>

Person request: ```{user_input}```

"""

response = chatgpt_call(immediate)

print(response)

But, the reply we obtain is much from right.

Screenshot of my Jupyter Pocket book. Prompting GPT.

What went fallacious?

The GPT mannequin makes an attempt to calculate the distinction between two datetime SQL variables straight. This computation is incompatible with most SQL variations, creating a difficulty, particularly for SQLite customers.

How will we rectify this downside?

The answer is true underneath our noses-we resort again to few-shot prompting.

By demonstrating to the mannequin how we usually compute time variables-in this case, the supply time-we prepare it to copy the method each time it encounters related variable sorts.

For instance, SQLite customers could use the julianday() operate. This operate converts any date into the variety of days which have elapsed for the reason that preliminary epoch within the Julian calendar.

This might assist GPT mannequin to deal with date variations in SQLite database higher.

## Including yet another instance

fewshot_examples += """

------ EXAMPLE 4

Person: Compute the time that it takes to supply each product?

System: You first want to hitch each orders and merchandise tables, filter solely these orders which have

been delivered and compute the distinction between each order_creation and delivery_date.:

SELECT

P.product_name AS product_with_longest_delivery,

julianday(O.delivery_date) - julianday(O.order_creation) AS TIME_DIFF

FROM

merchandise AS P

JOIN

orders AS O ON P.product_id = O.product_id

WHERE

O.order_status="Delivered";

"""

Once we use this methodology for example for the mannequin, it learns our most popular manner of computing the supply time. This makes the mannequin higher suited to generate useful SQL queries which might be customised to our particular atmosphere.

If we use the earlier instance as an enter, the mannequin will replicate the best way we compute the supply time and can present useful queries for our concrete atmosphere any longer.

## Code Block

user_input = """

What product is the one which takes longer to ship?

"""

immediate = f"""

Given the next SQL tables, your job is to offer the required SQL tables

to meet any consumer request.

Tables: <{sql_tables}>. Observe these examples the generate the reply, listening to each

the best way of structuring queries and its format:

<{fewshot_examples}>

Person request: ```{user_input}```

"""

response = chatgpt_call(immediate)

print(response)

Screenshot of my Jupyter Pocket book. Prompting GPT.

In conclusion, the GPT mannequin is a wonderful instrument for changing pure language into SQL queries.

Nonetheless, it’s not good.

The mannequin could not be capable to perceive context-aware queries or particular operations with out correct coaching.

Through the use of few-shot prompting, we are able to information the mannequin to know our question fashion and computing preferences.

This enables us to totally harness the ability of the GPT mannequin in our knowledge science workflows, turning the mannequin into a strong instrument that adapts to our distinctive wants.

From unformatted queries to completely customised SQL queries, GPT fashions carry the magic of personalization to our fingertips!

You’ll be able to go test my code straight in my GitHub.

## SQL TABLES

sql_tables = """

CREATE TABLE PRODUCTS (

product_name VARCHAR(100),

value DECIMAL(10, 2),

low cost DECIMAL(5, 2),

product_type VARCHAR(50),

score DECIMAL(3, 1),

product_id VARCHAR(100)

);

INSERT INTO PRODUCTS (product_name, value, low cost, product_type, score, product_id)

VALUES

('UltraView QLED TV', 2499.99, 15, 'TVs', 4.8, 'K5521'),

('ViewTech Android TV', 799.99, 10, 'TVs', 4.6, 'K5522'),

('SlimView OLED TV', 3499.99, 5, 'TVs', 4.9, 'K5523'),

('PixelMaster Professional DSLR', 1999.99, 20, 'Cameras and Camcorders', 4.7, 'K5524'),

('ActionX Waterproof Digicam', 299.99, 15, 'Cameras and Camcorders', 4.4, 'K5525'),

('SonicBlast Wi-fi Headphones', 149.99, 10, 'Audio and Headphones', 4.8, 'K5526'),

('FotoSnap DSLR Digicam', 599.99, 0, 'Cameras and Camcorders', 4.3, 'K5527'),

('CineView 4K TV', 599.99, 10, 'TVs', 4.5, 'K5528'),

('SoundMax House Theater', 399.99, 5, 'Audio and Headphones', 4.2, 'K5529'),

('GigaPhone 12X', 1199.99, 8, 'Smartphones and Equipment', 4.9, 'K5530');

CREATE TABLE ORDERS (

order_number INT PRIMARY KEY,

order_creation DATE,

order_status VARCHAR(50),

product_id VARCHAR(100)

);

INSERT INTO ORDERS (order_number, order_creation, order_status, delivery_date, product_id)

VALUES

(123456, '2023-07-01', 'Shipped','', 'K5521'),

(789012, '2023-07-02', 'Delivered','2023-07-06', 'K5524'),

(345678, '2023-07-03', 'Processing','', 'K5521'),

(901234, '2023-07-04', 'Shipped','', 'K5524'),

(567890, '2023-07-05', 'Delivered','2023-07-15', 'K5521'),

(123789, '2023-07-06', 'Processing','', 'K5526'),

(456123, '2023-07-07', 'Shipped','', 'K5529'),

(890567, '2023-07-08', 'Delivered','2023-07-12', 'K5522'),

(234901, '2023-07-09', 'Processing','', 'K5528'),

(678345, '2023-07-10', 'Shipped','', 'K5530');

"""

Josep Ferrer is an analytics engineer from Barcelona. He graduated in physics engineering and is at the moment working within the Information Science subject utilized to human mobility. He’s a part-time content material creator centered on knowledge science and expertise. You’ll be able to contact him on LinkedIn, Twitter or Medium.