Data analytics has experienced remarkable growth in recent years, driven by advancements in how data is used in key decision-making processes. The collection, storage, and analysis of data have also progressed significantly due to these advancements. Moreover, the demand for talent in data analytics has skyrocketed, turning the job market into a highly competitive arena for individuals possessing the necessary skills and experience.

The rapid expansion of data-driven technologies has correspondingly led to an increased demand for specialized roles, such as “data engineer.” This surge in demand extends beyond data engineering alone and encompasses related positions like data scientist and data analyst.

Recognizing the significance of these professions, our series of blog posts aims to collect real-world data from online job postings and analyze it to understand the nature of the demand for these jobs, as well as the diverse skill sets required within each of these categories.

In this blog, we introduce a browser-based “Data Analytics Job Trends” application for the visualization and analysis of job trends in the data analytics market. After scraping data from online job agencies, it uses NLP techniques to identify key skill sets required in the job posts. Figure 1 shows a snapshot of the data app, exploring trends in the data analytics job market.

Figure 1: Snapshot of the KNIME Data App “Data Analytics Job Trends”

For the implementation, we adopted the low-code data science platform KNIME Analytics Platform. This free and open-source platform for end-to-end data science is based on visual programming and offers an extensive range of functionalities, from pure ETL operations and a wide array of data source connectors for data blending through to machine learning algorithms, including deep learning.

The set of workflows underlying the application is available for free download from the KNIME Community Hub at “Data Analytics Job Trends”. A browser-based instance can be evaluated at “Data Analytics Job Trends”.

This application is generated by four workflows, shown in Figure 2, which are executed sequentially to carry out the following steps:

- Web scraping for data collection

- NLP parsing and data cleaning

- Topic modeling

- Analysis of job role and skill attribution

The workflows are available in the KNIME Community Hub public space “Data Analytics Job Trends”.

Figure 2: The KNIME Community Hub space “Data Analytics Job Trends” contains a set of four workflows used for building the application “Data Analytics Job Trends”

- “01_Web Scraping for Data Collection” workflow crawls through the online job postings and extracts the textual information into a structured format

- “02_NLP Parsing and cleaning” workflow performs the necessary cleaning steps and then parses the long texts into smaller sentences

- “03_Topic Modeling and Exploration Data App” uses the clean data to build a topic model and then to visualize its results within a data app

- “04_Job Skill Attribution” workflow evaluates the association of skills across job roles, like Data Scientist, Data Engineer, and Data Analyst, based on the LDA results.

Web scraping for data collection

To obtain an up-to-date understanding of the skills required in the job market, we chose to analyze job posts scraped from online job agencies. Given the regional variations and the diversity of languages, we focused on job postings in the United States. This ensures that a significant proportion of the job postings are written in English. We also focused on job postings from February 2023 to April 2023.

The KNIME workflow “01_Web Scraping for Data Collection” in Figure 3 crawls through a list of URLs of searches on job agencies’ websites.

To extract the relevant job postings pertaining to data analytics, we used searches with six keywords that together cover the field of data analytics, namely: “big data”, “data science”, “business intelligence”, “data mining”, “machine learning” and “data analytics”. Search keywords are stored in an Excel file and read via the Excel Reader node.

Figure 3: KNIME Workflow “01_Web Scraping for Data Collection” scrapes job postings according to a number of search URLs

The core node of this workflow is the Webpage Retriever node. It is used twice. The first time (outer loop), the node crawls the site according to the keyword provided as input and produces the related list of URLs for job postings published in the US within the last 24 hours. The second time (inner loop), the node retrieves the text content from each job posting URL. The XPath nodes following the Webpage Retriever nodes parse the extracted texts to reach the desired information, such as job title, required qualifications, job description, salary, and company ratings. Finally, the results are written to a local file for further analysis. Figure 4 shows a sample of the job postings scraped for February 2023.
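For readers who think in code rather than in nodes, the two-level crawl can be sketched roughly as follows. This is not the KNIME workflow itself, only a minimal Python analogue under stated assumptions: the page markup (the `job-link`, `title`, `description`, and `salary` class names) is hypothetical, the pages are assumed to be well-formed XML so that the standard library's limited XPath support can parse them, and `fetch` is a placeholder for whatever function downloads a page.

```python
import xml.etree.ElementTree as ET
from typing import Callable, Dict, List


def extract_posting_urls(search_page: str) -> List[str]:
    """Outer-loop step: collect job-posting links from one search-results page."""
    root = ET.fromstring(search_page)
    # Assumption: every result link is marked up as <a class="job-link" href="...">
    return [a.get("href") for a in root.findall(".//a[@class='job-link']")]


def extract_fields(posting_page: str) -> Dict[str, str]:
    """Inner-loop step: pull selected fields out of one posting via XPath."""
    root = ET.fromstring(posting_page)

    def text_of(xpath: str) -> str:
        node = root.find(xpath)
        # Join all nested text so inline tags do not split the value
        return "".join(node.itertext()).strip() if node is not None else ""

    # Assumption: these class names are illustrative, not taken from a real site
    return {
        "title": text_of(".//h1[@class='title']"),
        "description": text_of(".//div[@class='description']"),
        "salary": text_of(".//span[@class='salary']"),
    }


def scrape(search_urls: List[str], fetch: Callable[[str], str]) -> List[Dict[str, str]]:
    """Run the outer loop over searches and the inner loop over postings."""
    rows = []
    for search_url in search_urls:                      # one search per keyword
        for url in extract_posting_urls(fetch(search_url)):
            row = extract_fields(fetch(url))            # one row per posting
            row["url"] = url
            rows.append(row)
    return rows
```

Passing `fetch` as an argument keeps the sketch independent of any particular HTTP library and makes it easy to try out on saved pages.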

Figure 4: Sample of the Web Scraping Results for February 2023

NLP parsing and data cleaning

Figure 5: 02_NLP Parsing and cleaning Workflow for Text Extraction and Data Cleaning

Like all freshly collected data, our web scraping results needed some cleaning. We perform NLP parsing along with data cleaning and write the respective data files using the workflow 02_NLP Parsing and cleaning shown in Figure 5.

Several fields from the scraped data were saved in the form of a concatenation of string values. Here, we extracted the individual sections using a series of String Manipulation nodes within the metanode “Title-Location-Company Name Extraction”, and then we removed unnecessary columns and got rid of duplicate rows.

We then assigned a unique ID to each job posting text and fragmented the whole document into sentences via the Cell Splitter node. The meta information for each job (title, location, and company) was also extracted and saved along with the Job ID.
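As a rough illustration of this step outside KNIME, the snippet below assigns a running ID to each posting and splits its text into sentences. The splitting rule (break after `.`, `!`, or `?` followed by whitespace) is our own simplifying assumption and not necessarily the delimiter configured in the Cell Splitter node.

```python
import re
from typing import List, Tuple


def split_into_sentences(postings: List[str]) -> List[Tuple[int, str]]:
    """Return (job_id, sentence) pairs, one row per sentence."""
    rows = []
    for job_id, text in enumerate(postings, start=1):   # unique ID per posting
        # Assumption: a sentence ends at . ! or ? followed by whitespace
        for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
            if sentence:                                 # skip empty fragments
                rows.append((job_id, sentence))
    return rows


print(split_into_sentences(["We use SQL. Join us!", "Python required."]))
```

Keeping the job ID on every sentence row is what later allows sentences to be joined back to the title, location, and company metadata.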

The list of the 1000 most frequent words was extracted from all documents, so as to generate a stop-word list including words like “applicant”, “collaboration”, “employment”, and so on. These words are present in every job posting and therefore do not add any information for the subsequent NLP tasks.
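The candidate list for such a stop-word dictionary can be produced with a simple frequency count. The sketch below is only an approximation of the idea: the tokenization rule (lowercase alphabetic runs) is an assumption of ours, and the resulting list would still need a manual review before being used as a stop-word list.

```python
import re
from collections import Counter
from typing import List


def most_frequent_words(documents: List[str], n: int = 1000) -> List[str]:
    """Return the n most frequent words across all documents."""
    counts = Counter()
    for doc in documents:
        # Assumption: a word is any run of letters, compared case-insensitively
        counts.update(re.findall(r"[a-z]+", doc.lower()))
    return [word for word, _ in counts.most_common(n)]


docs = ["Applicant must know SQL", "The applicant values collaboration"]
print(most_frequent_words(docs, n=1))
```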

The result of this cleaning phase is a set of three files:

- A table containing the documents’ sentences;

- A table containing the job description metadata;

- A table containing the stop-word list.

Topic modeling and results exploration

Figure 6: 03_Topic Modeling and Exploration Data App workflow builds a topic model and allows the user to explore the results visually with the Topic Explorer View Component

The workflow 03_Topic Modeling and Exploration Data App (Figure 6) uses the cleaned data files from the previous workflow. In this stage, we aim to:

- Detect and remove common sentences (Stop Phrases) appearing in many job postings

- Perform standard text processing steps to prepare the data for topic modeling

- Build the topic model and visualize the results.

We discuss the above tasks in detail in the following subsections.

3.1 Remove stop phrases with N-grams

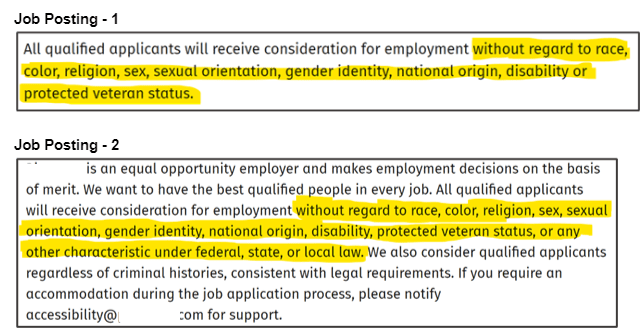

Many job postings include sentences that are commonly found in company policies or general agreements, such as “Non-Discrimination policy” or “Non-Disclosure Agreements.” Figure 7 provides an example where job postings 1 and 2 mention the “Non-Discrimination” policy. These sentences are not relevant to our analysis and therefore need to be removed from our text corpus. We refer to them as “Stop Phrases” and employ two methods to identify and filter them.

The first method is straightforward: we calculate the frequency of each sentence in our corpus and eliminate any sentences with a frequency greater than 10.
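In code, this first method amounts to a count followed by a filter. The sketch below uses the threshold of 10 stated above; comparing sentences after trimming whitespace and lowercasing is an assumption on our part, since exact-match rules are not specified here.

```python
from collections import Counter
from typing import List


def drop_frequent_sentences(sentences: List[str], max_count: int = 10) -> List[str]:
    """Keep only sentences that occur at most max_count times in the corpus."""
    # Assumption: sentences are compared after trimming and lowercasing
    counts = Counter(s.strip().lower() for s in sentences)
    return [s for s in sentences if counts[s.strip().lower()] <= max_count]


corpus = ["Equal opportunity employer."] * 11 + ["We need SQL."] * 10 + ["Python."]
kept = drop_frequent_sentences(corpus)
print(len(kept))
```

A sentence that appears exactly 10 times is kept, because only frequencies greater than 10 are eliminated.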

The second method involves an N-gram approach, where N can be in the range of values from 20 to 40. We select a value for N and assess the relevance of N-grams derived from the corpus by counting the number of N-grams that classify as Stop Phrases. We repeat this process for each value of N within the range. We chose N=35 as the best value for N to identify the highest number of Stop Phrases.

Figure 7: Example of Common Sentences in Job Postings that can be regarded as “Stop Phrases”

We used both methods to remove the “Stop Phrases”, as shown by the workflow depicted in Figure 7. First, we removed the most frequent sentences; then we created N-grams with N=35 and tagged them in every document with the Dictionary Tagger node; finally, we removed these N-grams using the Dictionary Replacer node.
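The tag-and-replace idea behind the second method can be approximated in a few lines of Python. This is a sketch under assumptions, not a reproduction of the Dictionary Tagger and Dictionary Replacer nodes: we treat N-grams as word-level, and we flag an N-gram as a Stop Phrase when it occurs in at least `min_docs` documents, which is a criterion we made up for illustration. The default N=35 is the value reported above; the demo uses a much smaller N so that it fits short strings.

```python
from collections import Counter
from typing import List, Set


def word_ngrams(text: str, n: int) -> List[str]:
    """All runs of n consecutive words in the text."""
    words = text.split()
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


def find_stop_phrases(documents: List[str], n: int = 35, min_docs: int = 2) -> Set[str]:
    """N-grams shared by at least min_docs documents (hypothetical criterion)."""
    doc_freq = Counter()
    for doc in documents:
        doc_freq.update(set(word_ngrams(doc, n)))   # count each N-gram once per doc
    return {gram for gram, count in doc_freq.items() if count >= min_docs}


def remove_stop_phrases(document: str, stop_phrases: Set[str]) -> str:
    """Delete every flagged N-gram from the document."""
    document = " ".join(document.split())           # normalize whitespace first
    for phrase in stop_phrases:
        document = document.replace(phrase, " ")
    return " ".join(document.split())


docs = [
    "we are an equal opportunity employer and need sql",
    "python skills we are an equal opportunity employer",
]
phrases = find_stop_phrases(docs, n=6)
print([remove_stop_phrases(d, phrases) for d in docs])
```

Note that overlapping N-grams are removed one after another, so a boilerplate passage longer than N words may leave a few trailing words behind in this simplified version.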

3.2 Prepare data for topic modeling with text preprocessing techniques

After removing the Stop Phrases, we perform the standard text preprocessing in order to prepare the data for topic modeling.

First, we eliminate numeric and alphanumeric values from the corpus. Then, we remove punctuation marks and common English stop words. Additionally, we use the custom stop-word list that we created earlier to filter out job domain-specific stop words. Finally, we convert all characters to lowercase.
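A compact Python analogue of these filtering steps might look like the sketch below. It rests on several assumptions: tokens are split on whitespace, the English stop-word set is a tiny illustrative subset rather than a full list, and lowercasing happens before the stop-word lookup so that capitalized words such as “The” are matched.

```python
import re
from typing import Iterable, List

# Assumption: a tiny illustrative subset, not a complete English stop-word list
ENGLISH_STOP_WORDS = {"the", "a", "an", "and", "or", "of", "to", "in", "with", "for", "is", "are"}


def preprocess(text: str, custom_stop_words: Iterable[str]) -> List[str]:
    """Apply number, punctuation, case, and stop-word filtering to one text."""
    tokens = text.split()
    # Drop numeric and alphanumeric tokens (anything containing a digit)
    tokens = [t for t in tokens if not any(ch.isdigit() for ch in t)]
    # Strip punctuation characters from the remaining tokens
    tokens = [re.sub(r"[^\w\s]", "", t) for t in tokens]
    # Lowercase and discard tokens that became empty
    tokens = [t.lower() for t in tokens if t]
    # Remove common English and job-domain-specific stop words
    stop_words = ENGLISH_STOP_WORDS | set(custom_stop_words)
    return [t for t in tokens if t not in stop_words]


print(preprocess("The applicant needs 5 years of SQL, Python3 and Spark.", {"applicant"}))
```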

We decided to focus on the words that carry significance, which is why we filtered the documents to keep only nouns and verbs. This can be done by assigning a Part of Speech (POS) tag to each word in the document. We use the POS Tagger node to assign these tags and filter the words based on their value, specifically keeping words with POS = Noun and POS = Verb.
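Once each word carries a tag, the filter itself is a one-liner. The sketch below assumes the tokens have already been tagged by some POS tagger and that the tags follow the Penn Treebank convention, where noun tags start with `NN` and verb tags start with `VB`; the tag set used by the POS Tagger node in the workflow may differ.

```python
from typing import List, Tuple


def keep_nouns_and_verbs(tagged_tokens: List[Tuple[str, str]]) -> List[str]:
    """Keep words whose tag marks them as a noun or a verb."""
    # Assumption: Penn Treebank style tags (NN, NNS, NNP, VB, VBD, VBG, ...)
    return [word for word, tag in tagged_tokens if tag.startswith(("NN", "VB"))]


tagged = [("build", "VB"), ("scalable", "JJ"), ("pipelines", "NNS"), ("quickly", "RB")]
print(keep_nouns_and_verbs(tagged))
```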

Finally, we apply Stanford lemmatization to ensure the corpus is ready for topic modeling. All of these preprocessing steps are carried out by the “Pre-processing” component shown in Figure 6.

3.3 Build topic model and visualize it

In the final stage of our implementation, we applied the Latent Dirichlet Allocation (LDA) algorithm to construct a topic model using the Topic Extractor (Parallel LDA) node shown in Figure 6. The LDA algorithm produces a number of topics (k), each topic described through a number (m) of keywords. The parameters (k, m) must be defined.

As a side note, k and m cannot be too large, since we want to visualize and interpret the topics (skill sets) by reviewing the keywords (skills) and their respective weights. We explored the range [1, 10] for k and fixed the value of m=15. After careful analysis, we found that k=7 led to the most diverse and distinct topics with minimal overlap in keywords. Thus, we determined k=7 to be the optimal value for our analysis.
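We do not spell out here how keyword overlap was judged when comparing values of k. One possible way to put a number on it, offered purely as an illustrative assumption, is the mean pairwise Jaccard similarity between the keyword sets of the topics: a lower value means more distinct topics. The function below expects each topic as a non-empty list of its top keywords, however those were obtained.

```python
from itertools import combinations
from typing import List


def mean_keyword_overlap(topics: List[List[str]]) -> float:
    """Average Jaccard similarity over all pairs of topic keyword lists."""
    # Assumption: Jaccard similarity is our stand-in measure of "overlap"
    pairs = list(combinations(topics, 2))
    if not pairs:                      # zero or one topic: nothing to compare
        return 0.0
    total = 0.0
    for a, b in pairs:
        set_a, set_b = set(a), set(b)
        total += len(set_a & set_b) / len(set_a | set_b)
    return total / len(pairs)


topics = [["sql", "python", "etl"], ["python", "ml", "model"]]
print(mean_keyword_overlap(topics))
```

Computing this score for the topic lists produced at each k would give one quantitative signal to set beside a visual inspection of the topics.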

Explore Topic Modeling Results with an Interactive Data App

To enable everyone to access the topic modeling results and have their own go at it, we deployed the workflow (in Figure 6) as a Data App on KNIME Business Hub and made it public, so that everyone can access it. You can check it out at: Data Analytics Job Trends.

The visual part of this data app comes from the Topic Explorer View component by Francesco Tuscolano and Paolo Tamagnini, available for free download from the KNIME Community Hub, and provides a number of interactive visualizations of topics by topic and document.

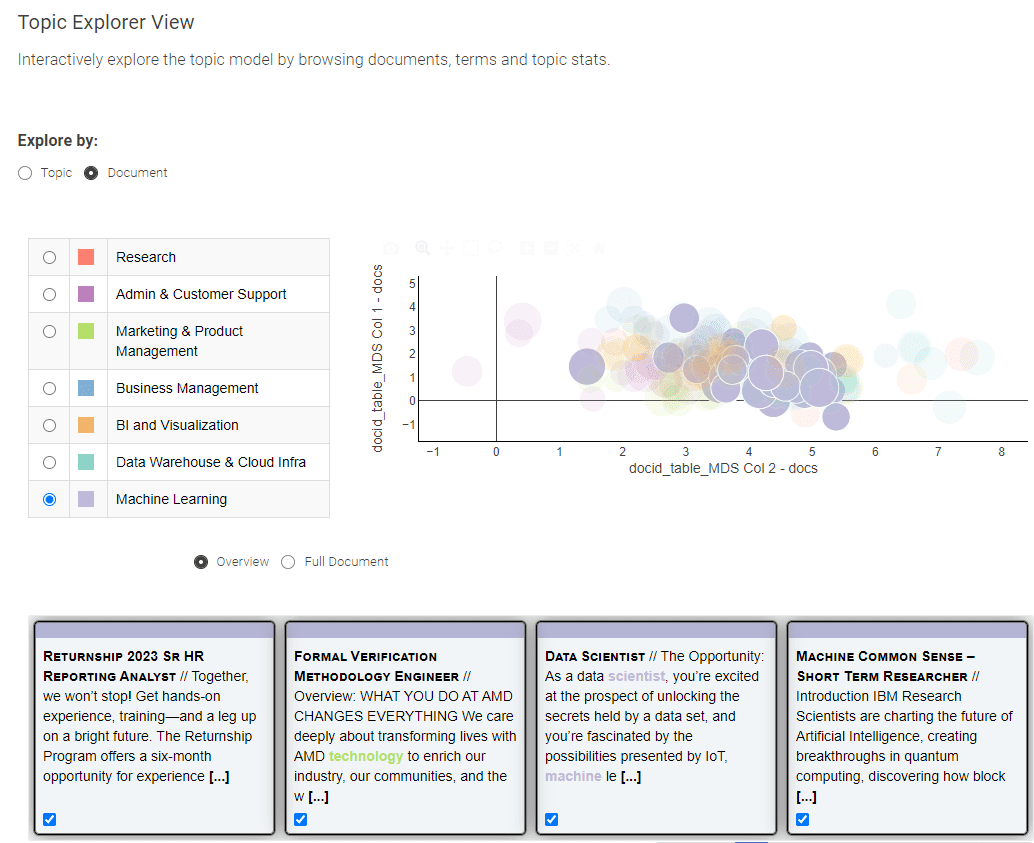

Figure 8: Data Analytics Job Trends for exploration of the topic modeling results

Presented in Figure 8, this Data App offers you a choice between two distinct views: the “Topic” and the “Document” view.

The “Topic” view employs a Multi-Dimensional Scaling algorithm to portray topics on a 2-dimensional plot, effectively illustrating the semantic relationships between them. On the left panel, you can conveniently select a topic of interest, prompting the display of its corresponding top keywords.

To explore individual job postings, simply opt for the “Document” view. The “Document” view presents a condensed portrayal of all documents across two dimensions. Use the box selection method to pinpoint documents of interest; an overview of your selected documents appears at the bottom.

We have provided here a summary of the “Data Analytics Job Trends” application, which was implemented and used to explore the most recent skill requirements and job roles in the data science job market. For this blog, we restricted our area of action to job descriptions for the US, written in English, from February to April 2023.

To understand the job trends and provide a review, the “Data Analytics Job Trends” application crawls job agency sites, extracts text from online job postings, extracts topics and keywords after performing a series of NLP tasks, and finally visualizes the results by topic and by document to discern the patterns in the data.

The application consists of a set of four KNIME workflows to run sequentially for web scraping, data processing, topic modeling, and then interactive visualizations to allow the user to spot the job trends.

We deployed the workflow on KNIME Business Hub and made it public, so everyone can access it. You can check it out at: Data Analytics Job Trends.

The full set of workflows is available and free to download from KNIME Community Hub at Data Analytics Job Trends. The workflows can easily be modified and adapted to discover trends in other fields of the job market. It is enough to change the list of search keywords in the Excel file, the website, and the time range for the search.

What about the results? Which are the skills and the professional roles most sought after in today’s data science job market? In our next blog post, we will guide you through the exploration of the results of this topic modeling. Together, we will closely examine the intriguing interplay between job roles and skills, gaining valuable insights about the data science job market along the way. Stay tuned for an enlightening exploration!

Resources

- A Systematic Review of Data Analytics Job Requirements and Online Courses by A. Mauro et al.

Andrea De Mauro has over 15 years of experience building business analytics and data science teams at multinational companies such as P&G and Vodafone. Apart from his corporate role, he enjoys teaching Marketing Analytics and Applied Machine Learning at several universities in Italy and Switzerland. Through his research and writing, he has explored the business and societal impact of Data and AI, convinced that a broader analytics literacy will make the world better. His latest book is ‘Data Analytics Made Easy’, published by Packt. He appeared in CDO magazine’s 2022 global ‘Forty Under 40’ list.

Mahantesh Pattadkal brings more than 6 years of experience in consulting on data science projects and products. With a Master’s Degree in Data Science, his expertise shines in Deep Learning, Natural Language Processing, and Explainable Machine Learning. Additionally, he actively engages with the KNIME Community for collaboration on data science-based projects.