The acceleration of text-to-video artificial intelligence throughout 2025 and 2026 marks a decisive shift in digital media production.

Rather than merely visualizing text, modern architectures demonstrate a convergence of video generation, audio synthesis, and physical simulation.

As platforms evolve from single-clip generators to complete production engines, the technical barrier to cinematic creation continues to fall.

For technology leaders, digital creators, and forward-looking professionals, mastering individual software interfaces is no longer an adequate strategy. Understanding the underlying agentic AI systems that drive these platforms has become an urgent professional requirement.

In this blog, we will dissect the current state of video generation models and explain why structured education in AI provides a critical competitive advantage.

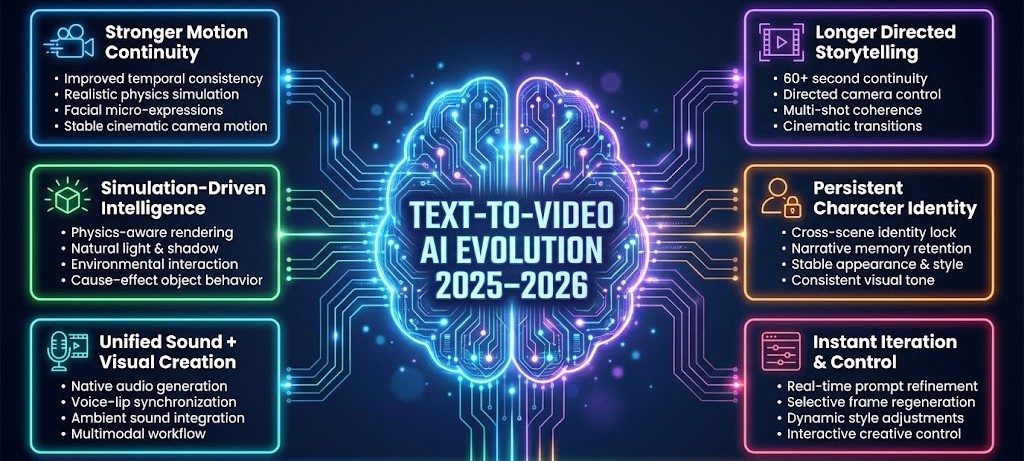

How Text-to-Video AI Is Developing

1. Stronger Motion Continuity & Realistic Output

Text-to-video AI in 2025–2026 is reaching unprecedented visual realism and motion stability through the following developments:

- Improved temporal consistency: Successive frames now maintain precise architectural and structural integrity, preventing the morphing artifacts that plagued earlier generations of models.

- Realistic physics simulation: Systems generate accurate gravitational reactions and material physics, so that falling debris, splashing liquids, and object collisions behave convincingly.

- Facial micro-expressions: Generation algorithms map subtle muscular shifts on human faces, delivering emotional authenticity instead of robotic stiffness.

- Reduced frame instability: Flickering backgrounds and jittery edges have been largely eliminated, enabling professional-grade visual stability suitable for commercial production.

- Cinematic-quality movement: Smooth camera tracking and intentional subject motion replace the chaotic movement patterns of earlier tools.

- Use case: A film studio can generate high-quality pre-visualization (previs) sequences for action scenes, complete with realistic explosions, facial reactions, and stable camera movement, before committing to expensive on-set production.

2. Simulation-Driven Intelligence

Modern systems are increasingly powered by simulation-based logic that grounds visuals in physical and environmental realism:

- Physics-aware modeling: Advanced architectures calculate how light, shadow, and mass interact in 3D space before rendering a 2D frame.

- Environmental interaction: Subjects displace water, cast proportionate shadows, and interact naturally with digital surroundings instead of appearing layered over static backgrounds.

- Context-aware scene generation: AI infers environmental details such as weather conditions or background activity without requiring explicit prompts for every element.

- Object behavior understanding: Generative AI models recognize cause and effect, such as a dropped glass shattering or footsteps creating ripples in water.

- Use case: An architecture firm can generate immersive walkthrough videos of proposed buildings, where lighting shifts realistically throughout the day and environmental elements respond naturally to weather simulations.

3. Unified Sound and Visual Creation

Multimodal integration is redefining content generation by merging audio and visual production into a single workflow:

- Native audio generation: Models synthesize soundscapes concurrently with video rendering, removing the need for separate audio engineering.

- Synchronized dialogue: Generated speech aligns precisely with facial movements and phonetic timing.

- Ambient sound integration: Contextual background noise, such as urban traffic, wind, and rustling leaves, is embedded naturally based on the visual setting.

- Voice–lip alignment: Spoken syllables and lip articulation stay in sync, transforming silent clips into complete audiovisual media.

- Use case: A marketing team can create fully produced product explainer videos, including narration, dialogue, and background ambiance, without hiring separate voice artists or sound designers.

As video generation evolves from simple task execution to intelligent, goal-driven behavior, the industry is shifting toward Agentic AI systems that can plan, adapt, and act with minimal oversight.

To lead in this new era of digital autonomy, professionals need more than creative intuition; they require a strong technical foundation to design systems that reason and operate independently.

Addressing this need, Johns Hopkins University offers a 16-week online Certificate Program in Agentic AI that bridges the gap between using AI tools and building autonomous AI ecosystems, equipping learners with the expertise to develop systems that drive real-world organizational outcomes.

Certificate Program in Agentic AI

Learn the architecture of intelligent agentic systems. Build agents that perceive, plan, learn, and act using Python-based projects and cutting-edge agentic architectures.

How This Program Empowers You

- Build Autonomous Systems: Learn to design agents capable of perceiving, reasoning, and acting independently to solve complex, multi-step challenges.

- Master Advanced Architectures: Gain expertise in symbolic reasoning, Belief-Desire-Intention (BDI) models, and Reinforcement Learning to enhance adaptability and decision-making.

- Coordinate Multi-Agent Ecosystems: Understand how multiple agents collaborate using frameworks such as the Model Context Protocol (MCP) and principles of Game Theory to scale intelligent operations.

- Apply Agentic RAG: Move beyond traditional retrieval methods by building systems that synthesize, refine, and validate information iteratively for higher accuracy.

- Navigate Ethics and Safety: Address alignment challenges and mitigate risks in autonomous systems through Responsible AI principles and governance frameworks.

For learners without a prior technical background, the program includes a structured Python pre-work module to build the necessary foundation, ensuring you’re fully prepared to succeed in an AI-powered future.

4. Longer, Directed Storytelling

Text-to-video AI is transitioning from short experimental clips to structured, cinematic narratives:

- Extended scene continuity: Continuous sequences exceeding 60 seconds maintain environmental coherence and character placement.

- Directed camera movement: Granular control over panning, tilting, tracking, and dolly zooms enables deliberate cinematographic framing.

- Multi-shot coherence: Smooth transitions between wide establishing shots and tight close-ups preserve visual consistency.

- Use case: Independent creators can produce short films or episodic web series entirely through AI, maintaining narrative consistency across multiple scenes without traditional production crews.

5. Persistent Character Identity

Character consistency across scenes has evolved into a core capability of modern text-to-video systems, eliminating one of the biggest limitations of earlier models:

- Cross-scene identity locking: Facial structure, body proportions, hairstyles, clothing, and defining attributes remain stable even as characters move across different environments, lighting conditions, or camera angles.

- Narrative memory retention: The model preserves contextual details established earlier in the storyline, such as accessories, injuries, emotional states, or objects being carried, ensuring continuity throughout scene transitions.

- Stylistic continuity: Lighting schemes, color grading, costume design, and overall directorial tone remain consistent across the project, preventing visual drift and maintaining a unified cinematic identity.

- Use case: Brands can create a recurring AI-generated mascot or spokesperson who appears consistently across commercials, social media campaigns, and explainer videos, building long-term brand recognition.

6. Instant Iteration & Interactive Control

The latest generation of platforms emphasizes creative agility, allowing creators to refine and direct outputs with precision rather than relying on static one-shot prompts:

- Real-time prompt refinement: Users can modify descriptive inputs during generation to immediately correct inconsistencies, adjust tone, or enhance visual detail without restarting the entire sequence.

- Style modification: Lighting conditions, textures, color palettes, and visual aesthetics can be altered dynamically while preserving the core scene composition and character positioning.

- Selective scene regeneration: Specific frames or segments can be re-rendered independently, ensuring targeted improvements without disrupting surrounding footage or narrative flow.

- User-driven direction: Interfaces increasingly resemble professional 3D production environments, offering interactive control over camera movement, framing, spatial layout, and environmental elements.

- Use case: Advertising agencies can rapidly test multiple creative variations of the same campaign, changing tone, lighting, or messaging in minutes before selecting the highest-performing version for launch.

This shift transforms text-to-video AI from a passive generation tool into an adaptive creative system that supports rapid experimentation and production-level workflows.
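To make selective scene regeneration concrete, here is a minimal Python sketch. It assumes a timeline is stored as a list of segments, and it uses a stand-in `fake_render` function in place of a real video model. The `Segment` structure and both function names are hypothetical and do not come from any platform's API.

```python
from dataclasses import dataclass, replace
from typing import Callable, List


@dataclass(frozen=True)
class Segment:
    """One contiguous span of the timeline (hypothetical structure)."""
    start_s: float
    end_s: float
    prompt: str
    clip_id: str  # identifier of the rendered footage for this span


def fake_render(prompt: str, start_s: float, end_s: float) -> str:
    """Stand-in for a real video model call; returns a deterministic id."""
    return f"clip[{start_s:g}-{end_s:g}]:{prompt}"


def regenerate_segment(
    timeline: List[Segment],
    index: int,
    new_prompt: str,
    render: Callable[[str, float, float], str] = fake_render,
) -> List[Segment]:
    """Re-render only timeline[index]; every other segment is reused as-is."""
    if not 0 <= index < len(timeline):
        raise IndexError(f"no segment at index {index}")
    old = timeline[index]
    updated = replace(
        old,
        prompt=new_prompt,
        clip_id=render(new_prompt, old.start_s, old.end_s),
    )
    # Build a new list so the original timeline stays available for undo.
    return timeline[:index] + [updated] + timeline[index + 1:]
```

Because the function returns a new list and leaves the input untouched, a caller could keep earlier timelines around to compare creative variations side by side.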

Leading Example

A defining example of recent progress in text-to-video AI is Seedance 2.0, launched by ByteDance in February 2026 as a major upgrade to its generative video model.

The platform is positioned as a strong competitor to leading Western systems such as OpenAI’s Sora 2 and Google’s Veo. Unlike earlier models that rely primarily on text prompts, Seedance 2.0 introduces multimodal generation with advanced creative controls:

- Multimodal Directional Control: Combines text prompts with up to 9 reference images, 3 choreography video clips, and MP3 files for synchronized audio-visual output.

- High-quality video output: Generates cinematic clips of 4–15 seconds at up to 2K resolution.

- Faster performance: Operates roughly 30% faster than its predecessor.

- Improved motion handling: Accurately renders complex physical movements, including martial arts sequences.

- Stronger character consistency: Maintains stable identity across multiple shots.

- Watermark-free output: Delivers clean, production-ready videos.

- Professional editing tools: Includes a Universal @-tag system for locking visual elements, Scene Extension for seamless shot additions, and Targeted Editing for modifying specific segments without regenerating the entire video.

- Current availability: Accessible to select beta users on Jimeng AI, with planned integration into Dreamina.

Overall, Seedance 2.0 highlights the rapid pace of AI video innovation in China, even as geopolitical and regulatory factors may influence its potential expansion into the US market.

How an AI Agent Program Helps You Build Job-Ready Expertise

This shift in AI platforms presents a stark reality: mastering software interfaces offers only a temporary advantage. To maintain professional relevance, technology leaders must pivot from operating applications to architecting autonomous solutions.

A structured learning path, such as the 8-week Certificate Program in Generative AI & Agents Fundamentals from Johns Hopkins University, bridges this gap by assuming no prior technical or programming background while providing a comprehensive foundation in applied AI.

Understanding agentic systems, where AI operates autonomously to achieve complex objectives, is the strategic differentiator that builds job-ready expertise and insulates careers against automated obsolescence. Here is how it helps:

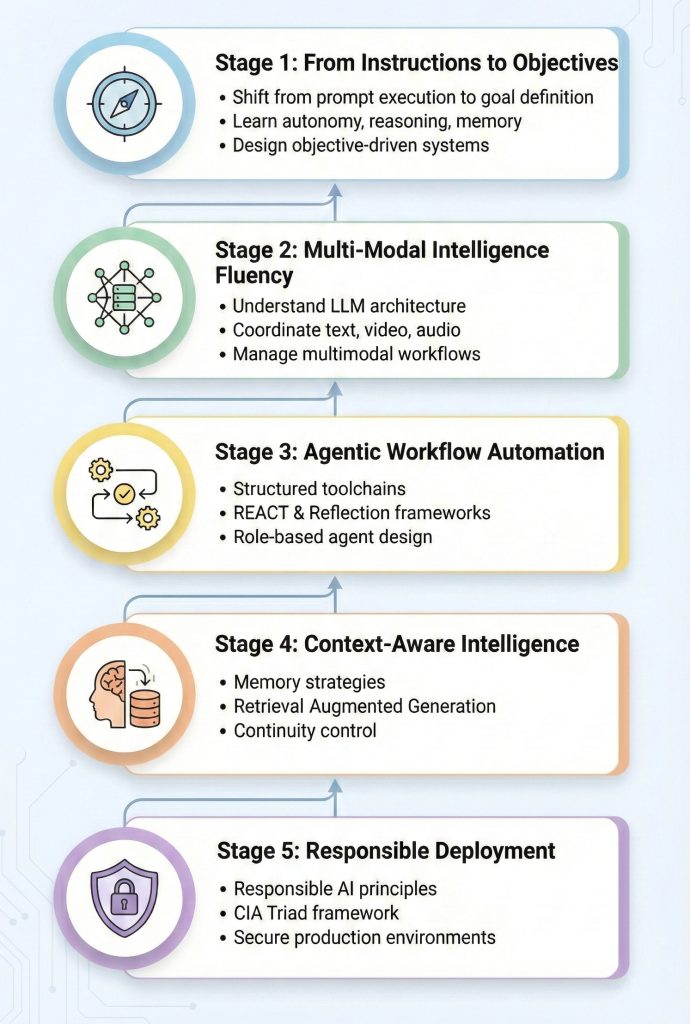

1. From Instructions to Objectives

Text-to-Video AI is shifting from executing single prompts to achieving complex creative goals. Instead of telling the system what to generate frame by frame, professionals must define objectives such as “Create a cinematic 30-second product launch sequence with emotional progression and synchronized narration.”

An AI Agent course teaches how agentic systems move from instruction-based interaction to goal-driven intelligence. Learners understand core components such as environment, autonomy, reasoning, memory, and tool usage.

2. Fluency in Multi-Modal Intelligence

Modern Text-to-Video systems combine text reasoning, video synthesis, audio generation, and contextual memory in a single workflow. To manage such systems, professionals must understand how generative AI and NLP function at a foundational level.

The program builds fluency in Large Language Model (LLM) architecture and generative mechanics, ensuring learners understand how multimodal systems coordinate different data types.

3. Automation with Integrated Toolchains

Text-to-Video production increasingly involves combining multiple AI tools, such as script generators, visual engines, sound models, and editing modules, into a unified workflow.

The course trains learners to design structured agentic workflows by defining agent roles, managing prompts, and controlling tool access. Modern frameworks such as ReAct and Reflection are introduced to improve task-specific agent design.
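The idea of an agent that reasons, picks a tool, and observes the result can be sketched in a few lines of Python. This is a minimal illustration, assuming the framework named above is the reason-then-act loop commonly called ReAct. The `scripted_policy` function stands in for a language model, and the tool names are hypothetical placeholders, not part of any real product.

```python
from typing import Callable, Dict, List, Set, Tuple

Step = Tuple[str, str, str]  # (thought, action, argument)


def scripted_policy(goal: str, observations: List[str]) -> Step:
    """Stand-in for an LLM: chooses the next step from what has been seen."""
    if len(observations) == 0:
        return ("I need a script first.", "write_script", goal)
    if len(observations) == 1:
        return ("Script is ready; render it.", "render_video", observations[-1])
    return ("Nothing left to do.", "finish", observations[-1])


# Hypothetical tools; each simply wraps its input so the flow is visible.
TOOLS: Dict[str, Callable[[str], str]] = {
    "write_script": lambda goal: f"script({goal})",
    "render_video": lambda script: f"video({script})",
}


def run_agent(
    goal: str,
    policy: Callable[[str, List[str]], Step],
    tools: Dict[str, Callable[[str], str]],
    allowed: Set[str],
    max_steps: int = 5,
) -> Tuple[str, List[str]]:
    """Alternate thought -> action -> observation until the policy finishes."""
    observations: List[str] = []
    trace: List[str] = []
    for _ in range(max_steps):
        thought, action, arg = policy(goal, observations)
        trace.append(f"thought: {thought}")
        if action == "finish":
            return arg, trace
        # Tool access control: only allow-listed, registered tools may run.
        if action in allowed and action in tools:
            observation = tools[action](arg)
        else:
            observation = f"error: tool '{action}' is not permitted"
        observations.append(observation)
        trace.append(f"action: {action} -> {observation}")
    raise RuntimeError("agent did not finish within max_steps")
```

The `allowed` set is where tool access is controlled: removing a tool name from it makes the loop return an error observation instead of running that tool, which the policy can then react to.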

4. Context-Aware Intelligence

Advanced Text-to-Video systems require memory and contextual awareness to maintain continuity across scenes. Without this, characters, lighting, or narrative tone may reset with each new input.

The program emphasizes memory techniques and advanced methods like Retrieval-Augmented Generation (RAG) to ensure outputs remain accurate, relevant, and consistent.
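As a rough illustration of how retrieval can support scene continuity, the Python sketch below stores continuity notes as plain strings and prepends the most relevant ones to each new scene request. It is a simplification: production RAG systems typically rank with embeddings and a vector index, whereas this sketch uses plain word overlap. The function names and the prompt layout are assumptions made for the example.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, memory: List[str], k: int = 2) -> List[str]:
    """Return up to k notes sharing the most words with the query."""
    query_words = tokenize(query)
    scored = [
        (len(query_words & tokenize(note)), position, note)
        for position, note in enumerate(memory)
    ]
    # Drop notes with no overlap; break ties by original order.
    relevant = [item for item in scored if item[0] > 0]
    relevant.sort(key=lambda item: (-item[0], item[1]))
    return [note for _, _, note in relevant[:k]]


def build_prompt(scene_request: str, memory: List[str], k: int = 2) -> str:
    """Prepend retrieved continuity notes to the scene request."""
    notes = retrieve(scene_request, memory, k)
    if not notes:
        return scene_request
    context = "\n".join(f"- {note}" for note in notes)
    return f"Continuity notes:\n{context}\n\nScene: {scene_request}"
```

Word overlap is crude (common words such as "the" count as matches), so treat the ranking only as a placeholder for a proper similarity measure.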

5. Industry-Ready and Responsible Deployment

As Text-to-Video AI becomes commercially viable, professionals must also understand responsible AI practices and security risks. Production environments require safe deployment, data protection, and ethical safeguards.

The curriculum covers Responsible AI principles, major LLM vulnerabilities, and security frameworks such as the CIA Triad (Confidentiality, Integrity, Availability).

Text-to-Video AI is no longer just about producing clips; it’s about managing intelligent systems that plan, create, adapt, and optimize content autonomously. An AI Agent course provides the structured foundation needed to design, control, and deploy these systems effectively.

Capabilities You Develop

1. Core Agentic Concepts

Professionals master the principles of autonomous decision-making, enabling AI systems to operate independently within complex video production pipelines rather than relying on constant human intervention.

2. Architecture & Modeling

Learners understand how to structure AI frameworks that ensure stable interaction between large language models and video diffusion models, reducing breakdowns in multimodal workflows.

3. Reasoning Techniques

The program teaches AI reasoning techniques that help systems logically determine event sequences essential for maintaining narrative flow in long-form Text-to-Video generation.

4. Data Integration

Practitioners learn to integrate external datasets and APIs into AI workflows, allowing generated videos to adapt dynamically to real-time information.

5. Machine Learning Paradigms

Understanding ML paradigms, such as supervised, unsupervised, and reinforcement learning, enables professionals to fine-tune enterprise AI systems for specific brand styles or visual aesthetics.

6. Advanced AI Systems

Learners gain the ability to manage complex frameworks where specialized AI components handle tasks such as color grading, dialogue generation, sound design, and visual rendering concurrently.

7. Ethics & Safety Implementation

The curriculum emphasizes responsible AI deployment by implementing safeguards against copyright violations, bias, misinformation, and malicious use in automated media generation.

8. Advanced Prompt Engineering

Learners develop the ability to craft structured, machine-readable instructions that consistently produce accurate visual and audio outputs across different AI models.

9. Agentic Workflow Design

The program trains professionals to build end-to-end automated pipelines that reduce manual editing while increasing scalability and efficiency.

10. Strategic AI Optimization

Beyond technical skills, learners develop strategic thinking to identify which production tasks can be optimized through AI agents to maximize operational efficiency.

By mastering these capabilities, professionals move beyond executing predefined tasks to designing intelligent systems that operate independently and at scale.

This shift positions them for the demands of the 2026 workforce, where value lies in building and optimizing AI-driven solutions.

As a result, they enhance their long-term career relevance and future-proof themselves in an increasingly automated economy.

Conclusion

Text-to-Video AI is evolving into a sophisticated, autonomous production ecosystem where success depends on more than creative prompting.

As multimodal intelligence, contextual memory, and system-level automation become standard, professionals must move beyond using tools to understand and design the AI systems behind them.

An AI Agent program provides the structured foundation to build this expertise, positioning individuals to stay relevant, competitive, and future-ready in the rapidly advancing AI-driven economy.