Introduction

In the generative-AI boom of recent years, large language models have dominated headlines, but they aren’t the only game in town. Small language models (SLMs), typically ranging from a few hundred million to about ten billion parameters, are rapidly emerging as a pragmatic choice for developers and enterprises who care about latency, cost and resource efficiency. Advances in distillation, quantization and inference-time optimizations mean these nimble models can handle many real-world tasks without the heavy GPU bills of their larger siblings. Meanwhile, providers and platforms are racing to offer low-cost, high-speed APIs so that teams can integrate SLMs into products quickly. Clarifai, a market leader in AI platforms, offers a unique edge with its Reasoning Engine, Compute Orchestration and Local Runners, enabling you to run models anywhere and save on cloud costs.

This article explores the growing ecosystem of small and efficient model APIs. We’ll dive into the why, cover selection criteria, compare top providers, discuss underlying optimization techniques, highlight real-world use cases, explore emerging trends and share practical steps to get started. Throughout, we’ll weave in expert insights, industry statistics and creative examples to enrich your understanding. Whether you’re a developer looking for an affordable API or a CTO evaluating a hybrid deployment strategy, this guide will help you make confident decisions.

Quick Digest

Before diving in, here’s a succinct overview to orient you:

- What are SLMs? Compact models (hundreds of millions to ~10 B parameters) designed for efficient inference on limited hardware.

- Why choose them? They deliver lower latency and reduced cost, and can run on-premise or on edge devices; the gap in reasoning ability is shrinking thanks to distillation and high-quality training.

- Key selection metrics: Cost per million tokens, latency and throughput, context window length, deployment flexibility (cloud vs. local), and data privacy.

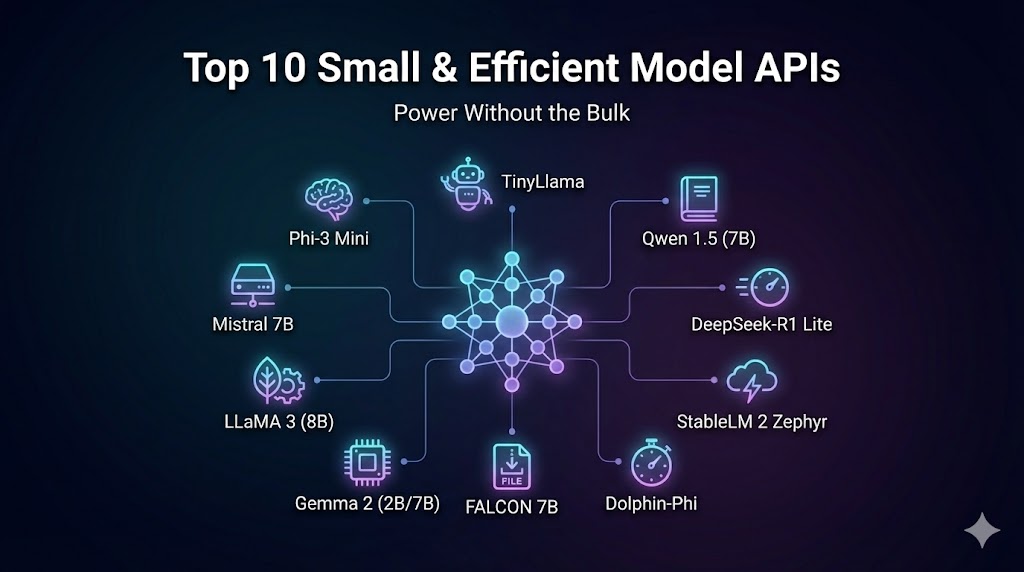

- Top providers: Clarifai, Together AI, Fireworks AI, Hyperbolic, Helicone (observability), enterprise SLM vendors (Personal AI, Arcee AI, Cohere), and open-source models such as Gemma, Phi-4, Qwen and MiniCPM4.

- Optimizations: Quantization, speculative decoding, LoRA/QLoRA, mixture-of-experts and edge deployment techniques.

- Use cases: Customer-service bots, document summarization, multimodal mobile apps, enterprise AI workers and educational experiments.

- Trends: Multimodal SLMs, ultra-long context windows, agentic workflows, decentralized inference and sustainability initiatives.

With this roadmap, let’s unpack the details.

Why Do Small & Efficient Models Matter?

Quick Summary: Why have small and efficient models become indispensable in today’s AI landscape?

Answer: Because they lower the barrier to entry for generative AI by reducing computational demands, latency and cost. They enable on-device and edge deployments, support privacy-sensitive workflows and are often good enough for many tasks thanks to advances in distillation and training data quality.

Understanding SLMs

Small language models are defined less by an exact parameter count than by deployability. In practice, the term includes models from a few hundred million to roughly 10 B parameters. Unlike their larger counterparts, SLMs are explicitly engineered to run on limited hardware, sometimes even on a laptop or mobile device. They leverage techniques like selective parameter activation, where only a subset of weights is used during inference, dramatically reducing memory usage. For example, Google DeepMind’s Gemma-3n E2B has a raw parameter count around 5 B but operates with the footprint of a 2 B model thanks to selective activation.

Benefits and Trade-offs

The primary allure of SLMs lies in cost efficiency and latency. Studies report that running large models such as 70 B-parameter LLMs can require hundreds of gigabytes of VRAM and expensive GPUs, while SLMs fit comfortably on a single GPU or even a CPU. Because they compute fewer parameters per token, SLMs can respond faster, making them suitable for real-time applications like chatbots, interactive agents and edge-deployed services. As a result, some providers claim sub-100 ms latency and up to 11× cost savings compared to deploying frontier models.
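To make the memory gap concrete, here is a rough back-of-the-envelope sketch. It counts weight storage only (parameters × bits per parameter) and ignores activations, KV cache and runtime overhead, so real requirements will be higher. The function name and the 3 B comparison model are illustrative choices, not figures from any vendor.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

# Compare a 70 B model with a hypothetical 3 B SLM at common precisions.
for name, params in [("70 B model", 70e9), ("3 B model", 3e9)]:
    for bits in (32, 16, 4):
        print(f"{name} @ {bits}-bit: {weight_memory_gb(params, bits):.1f} GB")
```

At 32-bit precision the 70 B model needs roughly 280 GB for weights alone, while the 3 B model needs about 12 GB, which is why the smaller model fits on a single consumer GPU.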

However, there has historically been a compromise: reduced reasoning depth and knowledge breadth. Many SLMs struggle with complex logic, long-range context or niche knowledge. Yet the gap is closing. Distillation from larger models transfers reasoning behaviours into smaller architectures, and high-quality training data boosts generalization. Some SLMs now achieve performance comparable to models 2–3× their size.

When Size Matters Less Than Experience

For many applications, speed, cost and control matter more than raw intelligence. Running AI on personal hardware may be a regulatory requirement (e.g. in healthcare or finance) or a tactical decision to cut inference costs. Clarifai’s Local Runners allow organizations to deploy models on their own laptops, servers or private clouds and expose them via a robust API. This hybrid approach preserves data privacy (sensitive information never leaves your environment) and leverages existing hardware, yielding significant savings on GPU rentals. The ability to use the same API for both local and cloud inference, with seamless MLOps features like monitoring, model chaining and versioning, blurs the line between small and large models: you choose the right size for the task and run it where it makes sense.

Expert Insights

- Resource-efficient AI is a research priority. A 2025 review of post-training quantization techniques notes that quantization can cut memory requirements and computational cost significantly without substantial accuracy loss.

- Inference serving challenges remain. A survey on LLM inference serving highlights that large models impose heavy memory and compute overhead, prompting innovations like request scheduling, KV-cache management and disaggregated architectures to achieve low latency.

- Industry shift: Reports show that by late 2025, major providers had released mini versions of their flagship models (e.g., GPT-5 Mini, Claude Haiku, Gemini Flash) that cut inference costs by an order of magnitude while retaining high benchmark scores.

- Product perspective: Clarifai engineers emphasize that SLMs enable users to test and deploy models quickly on personal hardware, making AI accessible to teams with limited resources.

How to Select the Right Small & Efficient Model API

Quick Summary: What factors should you consider when choosing a small model API?

Answer: Consider cost, latency, context window, multimodal capabilities, deployment flexibility and data privacy. Look for transparent pricing and support for monitoring and scaling.

Key Metrics

Choosing an API isn’t just about model quality; it’s about how the service meets your operational needs. Important metrics include:

- Cost per million tokens: The price difference between input and output tokens can be significant. A comparison table for DeepSeek R1 across providers shows input costs ranging from $0.55/M to $3/M and output costs from $2.19/M to $8/M. Some providers also offer free credits or free tiers for trial use.

- Latency and throughput: Time to first token (TTFT) and tokens per second (throughput) directly affect user experience. Providers like Together AI advertise sub-100 ms TTFT, while Clarifai’s Reasoning Engine has been benchmarked at 3.6 s TTFT and 544 tokens per second throughput. Inference serving surveys suggest evaluating metrics like TTFT, throughput, normalized latency and percentile latencies.

- Context window & modality: SLMs vary widely in context length, from 32K tokens for Qwen 0.6B to 1 M tokens for Gemini Flash and 10 M tokens for Llama 4 Scout. Determine how much context your application actually needs. Also consider whether the model supports multimodal input (text, images, audio, video), as in Gemma-3n E2B.

- Deployment flexibility: Are you locked into a single cloud, or can you run the model anywhere? Clarifai’s platform is hardware- and vendor-agnostic, supporting NVIDIA, AMD, Intel and even TPUs, and lets you deploy models on-premise or across clouds.

- Privacy & security: For regulated industries, on-premise or local inference may be mandatory. Local Runners ensure data never leaves your environment.
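As a rough illustration of how the per-million-token prices above translate into a budget, the sketch below estimates cost per request and per month. The workload (one million requests of 500 input and 200 output tokens) is an assumption, and pairing the lowest input price with the lowest output price is a simplification, since those figures may come from different providers.

```python
def request_cost_usd(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Cost of one request given per-million-token prices in USD."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000

# Assumed workload, for illustration only.
REQUESTS_PER_MONTH = 1_000_000
INPUT_TOKENS, OUTPUT_TOKENS = 500, 200

# Price pairs taken from the low and high ends of the ranges quoted above.
for label, in_price, out_price in [("low end", 0.55, 2.19), ("high end", 3.00, 8.00)]:
    per_request = request_cost_usd(INPUT_TOKENS, OUTPUT_TOKENS, in_price, out_price)
    monthly = per_request * REQUESTS_PER_MONTH
    print(f"{label}: ${per_request:.6f} per request, ${monthly:,.2f} per month")
```

Under these assumptions the same workload costs roughly $713 per month at the low end and $3,100 at the high end, so it pays to plug in your own token counts before choosing a provider.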

Practical Considerations

When evaluating providers, ask:

- Does the API support the frameworks you use? Many services offer REST and OpenAI-compatible endpoints. Clarifai’s API, for instance, is fully compatible with OpenAI’s client libraries.

- How easy is it to switch models? Together AI allows quick swapping among hundreds of open-source models, while Hyperbolic focuses on affordable GPU rental and flexible compute.

- What support and observability tools are available? Helicone adds monitoring for token usage, latency and cost.

Expert Insights

- Independent benchmarks validate vendor claims. Artificial Analysis ranked Clarifai’s Reasoning Engine in the “most attractive quadrant” for delivering both high throughput and competitive cost per token.

- Cost vs. performance trade-off: Research shows that SLMs can reach near state-of-the-art benchmarks for math and reasoning tasks while costing one-tenth of earlier models. Evaluate whether paying extra for slightly higher performance is worth it for your use case.

- Latency distribution matters: The inference survey recommends examining percentile latencies (P50, P90, P99) to ensure consistent performance.

- Hybrid deployment: Clarifai experts note that combining Local Runners for sensitive tasks with cloud inference for public features can balance privacy and scalability.
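To see why percentile latencies matter more than averages, here is a minimal sketch using the nearest-rank definition of a percentile. The latency samples are made up for illustration; in practice you would collect them from your own test traffic.

```python
def percentile(samples, p):
    """Nearest-rank percentile for an integer p in 1..100."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    # Integer ceiling of p * n / 100 avoids floating-point rounding surprises.
    rank = max(1, (p * len(ordered) + 99) // 100)
    return ordered[rank - 1]

# Hypothetical request latencies in milliseconds, including two slow outliers.
latencies_ms = [80, 85, 90, 95, 100, 105, 110, 120, 400, 1500]

for p in (50, 90, 99):
    print(f"P{p}: {percentile(latencies_ms, p)} ms")
```

In this sample the median looks healthy, yet the P90 and P99 values expose the slow tail that a subset of users would actually experience.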

Who Are the Top Providers of Small & Efficient Model APIs?

Quick Summary: Which platforms lead the pack for low-cost, high-speed model inference?

Answer: A mix of established AI platforms (Clarifai, Together AI, Fireworks AI, Hyperbolic) and specialized enterprise providers (Personal AI, Arcee AI, Cohere) offer compelling SLM APIs. Open-source models such as Gemma, Phi-4, Qwen and MiniCPM4 provide flexible options for self-hosting, while “mini” versions of frontier models from major labs deliver budget-friendly performance.

Below is a detailed comparison of the top services and model families. Each profile summarizes unique features, pricing highlights and how Clarifai integrates with or complements the offering.

Clarifai Reasoning Engine & Local Runners

Clarifai stands out by combining state-of-the-art performance with deployment flexibility. Its Reasoning Engine delivers 544 tokens per second throughput, 3.6 s time to first answer and $0.16 per million blended tokens in independent benchmarks. Unlike many cloud-only providers, Clarifai offers Compute Orchestration to run models across any hardware and Local Runners for self-hosting. This hybrid approach lets organizations save up to 90 % of compute by optimizing workloads across environments. Developers can also upload their own models or choose from trending open-source ones (GPT-OSS-120B, DeepSeek-V3.1, Llama-4 Scout, Qwen3 Next, MiniCPM4) and deploy them in minutes.

Clarifai Integration Tips:

- Use Local Runners when dealing with data-sensitive tasks or token-hungry models to keep data on-premise.

- Leverage Clarifai’s OpenAI-compatible API for easy migration from other services.

- Chain multiple models (e.g. extraction, summarization, reasoning) using Clarifai’s workflow tools for end-to-end pipelines.

Together AI

Together AI positions itself as a high-performance inference platform for open-source models. It offers sub-100 ms latency, automatic optimization and horizontal scaling across 200+ models. Token caching, model quantization and load balancing are built in, and pricing can be 11× cheaper than using proprietary services when running models like Llama 3. A free tier makes it easy to test.

Clarifai Perspective: Clarifai’s platform can complement Together AI by providing observability (via Helicone) or serving models locally. For example, you could run research experiments on Together AI and then deploy the final pipeline via Clarifai for production stability.

Fireworks AI

Fireworks AI specializes in serverless multimodal inference. Its proprietary FireAttention engine delivers sub-second latency and supports text, image and audio tasks with HIPAA and SOC2 compliance. It’s designed for easy integration of open-source models and offers pay-as-you-go pricing.

Clarifai Perspective: For teams requiring HIPAA compliance and multimodal processing, Fireworks can be integrated with Clarifai workflows. Alternatively, Clarifai’s Generative AI modules may handle similar tasks with less vendor lock-in.

Hyperbolic

Hyperbolic provides a unique combination of AI inference services and affordable GPU rental. It claims up to 80 % lower costs compared with large cloud providers and offers access to various base, text, image and audio models. The platform appeals to startups and researchers who need flexible compute without long-term contracts.

Clarifai Perspective: You can use Hyperbolic for prototype development or low-cost model training, then deploy via Clarifai’s compute orchestration for production. This split can reduce costs while gaining enterprise-grade MLOps.

Helicone (Observability Layer)

Helicone isn’t a model provider but an observability platform that integrates with multiple model APIs. It tracks token usage, latency and cost in real time, enabling teams to manage budgets and identify performance bottlenecks. Helicone can plug into Clarifai’s API or services like Together AI and Fireworks. For complex pipelines, it’s an essential tool for maintaining cost transparency.

Enterprise SLM Vendors – Personal AI, Arcee AI & Cohere

The rise of enterprise-focused SLM providers reflects the need for secure, customizable AI solutions.

- Personal AI: Offers a multi-memory, multimodal “MODEL-3” architecture where organizations can create AI personas (e.g., AI CFO, AI Legal Counsel). It boasts a zero-hallucination design and strong privacy assurances, making it ideal for regulated industries.

- Arcee AI: Routes tasks to specialized 7 B-parameter models using an orchestral platform, enabling no-code agent workflows with deep compliance controls.

- Cohere: While known for larger models, its Command R7B is a 7 B SLM with a 128K context window and enterprise-grade security; it’s trusted by major companies.

Clarifai Perspective: Clarifai’s compute orchestration can host or interoperate with these models, allowing enterprises to combine proprietary models with open-source or custom ones in unified workflows.

Open-Source SLM Families

Open-source models give developers the freedom to self-host and customize. Notable examples include:

- Gemma-3n E2B: A 5 B parameter multimodal model from Google DeepMind. It uses selective activation to run with a footprint similar to a 2 B model and supports text, image, audio and video inputs. Its mobile-first architecture and support for 140+ languages make it ideal for on-device experiences.

- Phi-4-mini instruct: A 3.8 B parameter model from Microsoft, trained on reasoning-dense data. It matches the performance of larger 7 B–9 B models and offers a 128K context window under an MIT license.

- Qwen3-0.6B: A 0.6 B model with a 32K context, supporting 100+ languages and hybrid reasoning behaviours. Despite its tiny size, it competes with bigger models and is ideal for global on-device products.

- MiniCPM4: Part of a series of efficient LLMs optimized for edge devices. Through innovations in architecture, data and training, these models deliver strong performance at low latency.

- SmolLM3 and other 3–4 B models: High-performance instruction models that outperform some 7 B and 4 B alternatives.

Clarifai Perspective: You can upload and deploy any of these open-source models via Clarifai’s Upload Your Own Model feature. The platform handles provisioning, scaling and monitoring, turning raw models into production services in minutes.

Budget & Speed Models from Major Providers

Major AI labs have released mini versions of their flagship models, shifting the cost-performance frontier.

- GPT-5 Mini: Offers nearly the same capabilities as GPT-5 with input costs around $0.25/M tokens and output costs around $2/M tokens, dramatically cheaper than earlier models. It maintains strong performance on math benchmarks, achieving 91.1 % on the AIME contest while being far more affordable.

- Claude 3.5 Haiku: Anthropic’s smallest model in the 3.5 series. It emphasises fast responses with a 200K token context and robust instruction following.

- Gemini 2.5 Flash: Google’s 1 M context hybrid model optimized for speed and cost.

- Grok 4 Fast: xAI’s budget variant of the Grok model, featuring 2 M context and modes for reasoning or direct answering.

- DeepSeek V3.2 Exp: An open-source experimental model featuring Mixture-of-Experts and sparse attention for efficiency.

Clarifai Perspective: Many of these models are available via Clarifai’s Reasoning Engine or can be uploaded through its compute orchestration. Because pricing can change rapidly, Clarifai monitors token costs and throughput to ensure competitive performance.

Expert Insights

- Hybrid strategy: A common pattern is to use a small draft model (e.g., Qwen 0.6B) for initial reasoning and call a larger model only for complex queries. This speculative or cascade approach reduces costs while maintaining quality.

- Observability matters: Cost, latency and performance vary across providers. Integrate observability tools such as Helicone to monitor usage and avoid budget surprises.

- Vendor lock-in: Platforms like Clarifai address lock-in by allowing you to run models on any hardware and switch providers with an OpenAI-compatible API.

- Enterprise AI teams: Personal AI’s ability to create specialized AI workers and maintain perfect memory across sessions demonstrates how SLMs can scale across departments.

What Techniques Make SLM Inference Efficient?

Quick Summary: Which underlying techniques enable small models to deliver low-cost, fast inference?

Answer: Efficiency comes from a combination of quantization, speculative decoding, LoRA/QLoRA adapters, mixture-of-experts, edge-optimized architectures and smart inference-serving strategies. Clarifai’s platform supports or complements many of these techniques.

Quantization

Quantization reduces the numerical precision of model weights and activations (e.g. from 32-bit to 8-bit or even 4-bit). A 2025 survey explains that quantization drastically reduces memory consumption and compute while largely maintaining accuracy. By shrinking the model’s memory footprint, quantization enables deployment on cheaper hardware and reduces energy usage. Post-training quantization (PTQ) techniques allow developers to quantize pre-trained models without retraining them, making them ideal for SLMs.
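The core idea can be sketched in a few lines. The example below applies simple symmetric per-tensor int8 quantization to a handful of weights and measures the round-trip error. It is a toy illustration of the principle, not the scheme used by any particular framework; production PTQ methods add calibration data, per-channel scales and outlier handling.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # fall back to 1.0 if all weights are zero
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the int8 values."""
    return [v * scale for v in q]

weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 values:", q)
print("max round-trip error:", max_error)
```

Each weight now occupies one byte instead of four, and the reconstruction error is bounded by half the scale step, which is the trade-off quantization makes.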

Speculative Decoding & Cascade Models

Speculative decoding accelerates autoregressive generation by using a small draft model to propose several future tokens, which the larger model then verifies. This technique can deliver 2–3× speed improvements and is increasingly available in inference frameworks. It pairs well with SLMs: you can use a tiny model like Qwen 0.6B as the drafter and a larger reasoning model for verification. Some research extends this idea to three-model speculative decoding, layering multiple draft models for further gains. Clarifai’s reasoning engine is optimized to support such speculative and cascade workflows.
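The draft-then-verify loop can be illustrated with a toy example. The sketch below uses deterministic stand-in functions instead of real models and the greedy variant of the algorithm, where a drafted token is accepted only if it matches the target model’s own next token. Real implementations verify all drafted tokens in one batched forward pass and use a probabilistic acceptance rule when sampling; `target_next` and `draft_next` are hypothetical stand-ins.

```python
def greedy_decode(next_token, prompt, max_new_tokens):
    """Baseline: one target-model call per generated token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(next_token(tokens))
    return tokens

def speculative_decode(target_next, draft_next, prompt, max_new_tokens, k=4):
    """Greedy speculative decoding: draft k tokens, keep the prefix the target agrees with."""
    tokens = list(prompt)
    verify_rounds = 0
    while len(tokens) - len(prompt) < max_new_tokens:
        # 1. The cheap draft model proposes k tokens.
        ctx, proposal = list(tokens), []
        for _ in range(k):
            tok = draft_next(ctx)
            proposal.append(tok)
            ctx.append(tok)
        # 2. The target model checks the proposal (one batched pass in a real system).
        verify_rounds += 1
        ctx, accepted = list(tokens), []
        for tok in proposal:
            expected = target_next(ctx)
            if expected != tok:
                accepted.append(expected)  # replace the first wrong token and stop
                break
            accepted.append(tok)
            ctx.append(tok)
        else:
            accepted.append(target_next(ctx))  # all accepted: target adds a bonus token
        tokens.extend(accepted)
    return tokens[: len(prompt) + max_new_tokens], verify_rounds

# Toy "models" over digit tokens: the draft is wrong whenever the last token is 4.
def target_next(ctx):
    return (ctx[-1] + 1) % 10

def draft_next(ctx):
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10

output, rounds = speculative_decode(target_next, draft_next, [3], max_new_tokens=8)
print(output, "verification rounds:", rounds)
```

The output is identical to what the target model would produce on its own, but it needs far fewer verification rounds than tokens generated, which is where the speed-up comes from.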

LoRA & QLoRA

Low-Rank Adaptation (LoRA) fine-tunes a model by training only small low-rank matrices injected alongside the frozen original weights. QLoRA combines LoRA with quantization to reduce memory usage even during fine-tuning. These techniques cut training costs by orders of magnitude and add little inference overhead. Developers can quickly adapt open-source SLMs for domain-specific tasks without retraining the entire model. Clarifai’s training modules support fine-tuning via adapters, enabling custom models to be deployed through its inference API.
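A quick calculation shows where the savings come from. For a weight matrix of shape d_out × d_in, LoRA trains two factors of shapes d_out × r and r × d_in instead of the full matrix. The layer size and rank below are illustrative assumptions, not values from a specific model.

```python
def full_params(d_out, d_in):
    """Trainable parameters when fine-tuning the full weight matrix."""
    return d_out * d_in

def lora_params(d_out, d_in, rank):
    """Trainable parameters for the two low-rank factors B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)

# Assumed example: a 4096 x 4096 projection layer adapted with rank 8.
d_out, d_in, rank = 4096, 4096, 8
full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full fine-tuning: {full:,} parameters")
print(f"LoRA (rank {rank}): {lora:,} parameters ({100 * lora / full:.2f}% of full)")
```

For this layer, the adapter trains well under one percent of the parameters that full fine-tuning would touch.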

Mixture-of-Experts (MoE)

MoE architectures allocate different “experts” to process specific tokens. Instead of using all parameters for every token, a router selects a subset of experts, allowing the model to have a very high parameter count but activate only a small portion during inference. This results in lower compute per token while retaining overall capacity. Models like Llama-4 Scout and Qwen3-Next leverage MoE for long-context reasoning. MoE models introduce challenges around load balancing and latency, but research proposes dynamic gating and expert buffering to mitigate these.
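The routing step can be sketched with a toy top-k gate. In the example below, the experts are plain scalar functions and the router logits are supplied directly; in a real model the logits come from a learned linear layer applied to each token’s hidden state, and the experts are feed-forward networks. All names here are illustrative.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_layer(x, router_logits, experts, top_k=2):
    """Run only the top_k experts and mix their outputs by renormalized gate weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    output = sum(probs[i] / norm * experts[i](x) for i in chosen)
    return output, chosen

# Four toy experts; only two of them are evaluated for this token.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: -x, lambda x: x * x]
output, chosen = moe_layer(3.0, [2.0, 0.1, 1.0, -1.0], experts)
print("experts used:", chosen, "output:", output)
```

Only the selected experts execute, so compute per token scales with top_k rather than with the total number of experts.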

Edge Deployment & KV‑Cache Optimizations

Running models at the edge offers privacy and cost benefits. However, resource constraints demand optimizations such as KV-cache management and request scheduling. The inference survey notes that instance-level techniques like prefill/decoding separation, dynamic batching and multiplexing can significantly reduce latency. Clarifai’s Local Runners incorporate these strategies automatically, enabling models to deliver production-grade performance on laptops or on-premise servers.
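To see why KV-cache management matters on constrained hardware, it helps to estimate the cache size. A standard transformer stores one key and one value vector per layer, per KV head and per token. The configuration below (32 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical example, not the published spec of any model named in this article.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch=1, bytes_per_value=2):
    """Size of the key/value cache: 2 tensors (K and V) per layer, head and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value

# Hypothetical small-model configuration at a 32K-token context.
size = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=32_768)
print(f"KV cache: {size / 2**30:.1f} GiB per sequence")
```

The cache grows linearly with context length and batch size, so long contexts or many concurrent requests can exhaust edge-device memory well before the weights do.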

Expert Insights

- Quantization trade-offs: Researchers caution that low-bit quantization can degrade accuracy in some tasks; use adaptive precision or mixed-precision strategies.

- Cascade design: Experts recommend building pipelines where a small model handles most requests and only escalates to larger models when necessary. This reduces average cost per request.

- MoE best practices: To avoid load imbalance, combine dynamic gating with load-balancing algorithms that distribute traffic evenly across experts.

- Edge vs. cloud: On-device inference reduces network latency and increases privacy but may limit access to large context windows. A hybrid approach (running summarization locally and long-context reasoning in the cloud) can deliver the best of both worlds.
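The cascade pattern mentioned above can be sketched as a simple router. This is a minimal illustration that assumes the small model returns some confidence signal alongside its answer; how you obtain that signal (log-probabilities, a classifier, heuristics) is left open. The two model functions are hypothetical stubs standing in for real API calls.

```python
def cascade_answer(prompt, small_model, large_model, min_confidence=0.8):
    """Try the small model first; escalate only when it is not confident."""
    answer, confidence = small_model(prompt)
    if confidence >= min_confidence:
        return answer, "small"
    return large_model(prompt), "large"

# Hypothetical stubs: the small model is "confident" only on short prompts.
def small_model(prompt):
    confident = len(prompt.split()) <= 8
    return f"[small] {prompt}", 0.95 if confident else 0.30

def large_model(prompt):
    return f"[large] {prompt}"

queries = [
    "Where is my order?",
    "Compare the warranty terms of these three laptops and explain the trade-offs",
]
for q in queries:
    print(cascade_answer(q, small_model, large_model))
```

Tuning `min_confidence` trades cost against quality: a higher threshold sends more traffic to the larger model.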

How Are Small & Efficient Models Used in the Real World?

Quick Summary: What practical applications benefit most from SLMs and low-cost inference?

Answer: SLMs power chatbots, document summarization services, multimodal mobile apps, enterprise AI teams and educational tools. Their low latency and cost make them ideal for high-volume, real-time and edge-based workloads.

Customer-Service & Conversational Agents

Businesses deploy SLMs to create responsive chatbots and AI agents that can handle large volumes of queries without ballooning costs. Because SLMs have shorter context windows and faster response times, they excel at transactional conversations, routing queries or providing basic support. For more complex requests, systems can seamlessly hand off to a larger reasoning model. Clarifai’s Reasoning Engine supports such agentic workflows, enabling multi-step reasoning with low latency.

Creative Example: Imagine an e-commerce platform using a 3 B SLM to answer product questions. For tough queries, it invokes a deeper reasoning model, but 95 % of interactions are served by the small model in under 100 ms, slashing costs.

Document Processing & Retrieval-Augmented Generation (RAG)

SLMs with long context windows (e.g., Phi-4 mini with 128K tokens or Llama 4 Scout with 10 M tokens) are well suited to document summarization, legal contract analysis and RAG systems. Combined with vector databases and search algorithms, they can quickly extract key information and generate accurate summaries. Clarifai’s compute orchestration supports chaining SLMs with vector search models for robust RAG pipelines.
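The retrieval half of a RAG pipeline can be sketched without any external services. The toy example below scores documents against a query using bag-of-words cosine similarity and assembles a grounded prompt. A production system would use learned embeddings and a vector database instead; the documents and prompt wording are illustrative assumptions.

```python
import math
from collections import Counter

def embed(text):
    """Toy 'embedding': a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, docs, k=2):
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def build_prompt(query, docs, k=2):
    """Assemble a prompt that asks the model to answer from retrieved context only."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"

docs = [
    "The warranty covers battery defects for two years.",
    "Shipping takes three to five business days.",
    "Returns are accepted within 30 days of delivery.",
]
print(build_prompt("how long is the warranty on the battery", docs, k=1))
```

The resulting prompt string is what you would pass to the SLM, keeping the context short enough for a small model’s window.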

Multimodal & Mobile Applications

Models like Gemma-3n E2B and MiniCPM4 accept text, images, audio and video inputs, enabling multimodal experiences on mobile devices. For instance, a news app could use such a model to generate audio summaries of articles or translate live speech to text. The small memory footprint means they can run on smartphones or low-power edge devices, where bandwidth and latency constraints make cloud-based inference impractical.

Enterprise AI Teams & Digital Co-Workers

Enterprises are moving beyond chatbots toward AI workforces. Solutions like Personal AI let companies train specialized SLMs (AI CFOs, AI lawyers, AI sales assistants) that maintain institutional memory and collaborate with humans. Clarifai’s platform can host such models locally for compliance and integrate them with other services. SLMs’ lower token costs allow organizations to scale the number of AI team members without incurring prohibitive expenses.

Research & Education

Universities and researchers use SLM APIs to prototype experiments quickly. SLMs’ lower resource requirements enable students to fine-tune models on personal GPUs or university clusters. Open-source models like Qwen and Phi encourage transparency and reproducibility. Clarifai offers academic credits and accessible pricing, making it a valuable partner for educational institutions.

Expert Insights

- Healthcare scenario: A hospital uses Clarifai’s Local Runners to deploy a multimodal model locally for radiology report summarization, ensuring HIPAA compliance while avoiding cloud costs.

- Support center success: A tech company replaced its LLM-based support bot with a 3 B SLM, reducing average response time by 70 % and cutting monthly inference costs by 80 %.

- On-device translation: A travel app leverages Gemma-3n’s multimodal capabilities to perform speech-to-text translation on smartphones, delivering offline translations even without connectivity.

What’s Next? Emerging & Trending Topics

Quick Summary: Which trends will shape the future of small model APIs?

Answer: Expect to see multimodal SLMs, ultra-long context windows, agentic workflows, decentralized inference, and sustainability-driven optimizations. Regulatory and ethical considerations will also influence deployment choices.

Multimodal & Cross-Domain Models

SLMs are expanding beyond pure text. Models like Gemma-3n accept text, images, audio and video, demonstrating how SLMs can serve as universal cross-domain engines. As training data becomes more diverse, expect models that can answer a written question, describe an image and translate speech all within the same small footprint.

Ultra-Long Context Windows & Memory Architectures

Recent releases show rapid progress in context length: 10 M tokens for Llama 4 Scout, 1 M tokens for Gemini Flash, and 32K tokens even for sub-1 B models like Qwen 0.6B. Research into segment routing, sliding windows and memory-efficient attention will allow SLMs to handle long documents without ballooning compute costs.

Agentic & Tool-Use Workflows

Agentic AI, where models plan, call tools and execute tasks, requires consistent reasoning and multi-step decision making. Many SLMs now integrate tool-use capabilities and are being optimized to interact with external APIs, databases and code. Clarifai’s Reasoning Engine, for instance, supports advanced tool invocation and can orchestrate chains of models for complex tasks.

Decentralized & Privacy-Preserving Inference

As privacy regulations tighten, the demand for on-device inference and self-hosted AI will grow. Platforms like Clarifai’s Local Runners exemplify this trend, enabling hybrid architectures where sensitive workloads run locally while less sensitive tasks leverage cloud scalability. Emerging research explores federated inference and distributed model serving to preserve user privacy without sacrificing performance.

Sustainability & Energy Efficiency

Energy consumption is a growing concern. Quantization and integer-only inference methods reduce power usage, while mixture-of-experts and sparse attention lower computation. Researchers are exploring transformer alternatives such as Mamba, Hyena and RWKV that may offer better scaling with fewer parameters. Sustainability will become a key selling point for AI platforms.

Expert Insights

- Regulatory foresight: Data protection laws like GDPR and HIPAA will increasingly favour local or hybrid inference, accelerating adoption of self-hosted SLMs.

- Benchmark evolution: New benchmarks that factor in energy consumption, latency consistency and total cost of ownership will guide model selection.

- Community involvement: Open-source collaborations (e.g., Hugging Face releases, academic consortia) will drive innovation in SLM architectures, ensuring that improvements remain accessible.

How to Get Started with Small & Efficient Model APIs

Quick Summary: What are the practical steps to integrate SLMs into your workflow?

Answer: Define your use case and budget, compare providers on key metrics, test models with free tiers, monitor usage with observability tools and deploy via flexible platforms like Clarifai for production. Use code samples and best practices to accelerate development.

Step-by-Step Guide

- Define the Task & Requirements: Identify whether your application needs chat, summarization, multimodal processing or complex reasoning. Estimate token volumes and latency requirements. For example, a support bot might tolerate 1–2 s latency but need low cost per million tokens.

- Compare Providers: Use the selection criteria described earlier (cost, latency, context window, deployment flexibility and privacy) to shortlist APIs. Pay attention to pricing tables, context windows, multimodality and deployment options. Clarifai’s Reasoning Engine, Together AI and Fireworks AI are good starting points.

- Signal Up & Get hold of API Keys: Most providers supply free tiers. Clarifai offers a Begin totally free plan and OpenAI‑suitable endpoints.

- Check Fashions: Ship pattern prompts and measure latency, high quality and value. Use Helicone or comparable instruments to observe token utilization. For area‑particular duties, strive high quality‑tuning with LoRA or QLoRA.

- Deploy Regionally or within the Cloud: If privateness or value is a priority, run fashions through Clarifai’s Native Runners. In any other case, deploy in Clarifai’s cloud for elasticity. You may combine each utilizing compute orchestration.

- Combine Observability & Management: Implement monitoring to trace prices, latency and error charges. Alter token budgets and select fallback fashions to keep up SLAs.

- Iterate & Scale: Analyze person suggestions, refine prompts and fashions, and scale up by including extra AI brokers or pipelines. Clarifai’s workflow builder can chain fashions to create complicated duties.
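The testing step in this guide (send sample prompts, measure latency, estimate cost) can be sketched with a small harness. This is a minimal illustration, not a provider tool: `measure_latency`, `estimate_monthly_cost` and `fake_model` are hypothetical names, the request volumes are made up, and the $0.16 per million tokens figure is the approximate Reasoning Engine price quoted in the FAQs of this article.

```python
import statistics
import time
from typing import Callable, Sequence


def measure_latency(call_model: Callable[[str], str], prompts: Sequence[str]) -> dict:
    """Time each call and return the median and slowest latency in seconds."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        call_model(prompt)
        latencies.append(time.perf_counter() - start)
    return {"median_s": statistics.median(latencies), "max_s": max(latencies)}


def estimate_monthly_cost(tokens_per_request: int, requests_per_month: int,
                          price_per_million_tokens: float) -> float:
    """Estimated monthly spend in dollars, assuming a flat per-token price."""
    total_tokens = tokens_per_request * requests_per_month
    return total_tokens / 1_000_000 * price_per_million_tokens


if __name__ == "__main__":
    # Stand-in for a real API call; swap in your provider's client here.
    def fake_model(prompt: str) -> str:
        time.sleep(0.01)
        return prompt.upper()

    print(measure_latency(fake_model, ["Hello", "Summarize this ticket"]))
    # Hypothetical workload: 800 tokens per request, 100,000 requests per month.
    print(estimate_monthly_cost(800, 100_000, 0.16))
```

Passing the model call in as a function keeps the harness independent of any one provider, so the same prompts can be replayed against each shortlisted API and compared against the latency and budget targets from the first step.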

Instance API Name

Below is a sample Python snippet showing how to use Clarifai’s OpenAI‑compatible API to interact with a model. Replace YOUR_PAT with your personal access token and select any Clarifai model URL (e.g., GPT‑OSS‑120B or your uploaded SLM):

from openai import OpenAI

# Change these two parameters to point to Clarifai
client = OpenAI(
    base_url="https://api.clarifai.com/v2/ext/openai/v1",
    api_key="YOUR_PAT",
)

# Send a single-turn chat request to the chosen model
response = client.chat.completions.create(
    model="https://clarifai.com/openai/chat-completion/models/gpt-oss-120b",
    messages=[
        {"role": "user", "content": "What is the capital of France?"}
    ],
)

# Print the text of the first returned choice
print(response.choices[0].message.content)

The same pattern works for other Clarifai models or your custom uploads.
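The step‑by‑step guide recommends choosing fallback models to maintain SLAs. One way to sketch that is a wrapper that tries a list of models in order. The function name `complete_with_fallback`, the `call_model` callable and the model names used in the usage note are hypothetical; in practice `call_model` would wrap whatever chat‑completion client you use.

```python
from typing import Callable, Sequence


def complete_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in order; return (model_used, reply) from the first success."""
    errors = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # in production, catch your client's specific error types
            errors.append(f"{model}: {exc}")
    # Every model failed: surface all collected errors to the caller.
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

For example, `complete_with_fallback("Hi", ["small-model", "backup-model"], call_model)` returns the reply from `small-model` if it succeeds and only calls `backup-model` when the first call raises. Returning the model name alongside the reply makes it easy to log how often the fallback is used.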

Best Practices & Tips

- Prompt Engineering: Small models can be sensitive to prompt formatting. Follow recommended formats (e.g., system/user/assistant roles for Phi‑4 mini).

- Caching: Use caching for repeated prompts to reduce costs. Clarifai automatically caches tokens when possible.

- Batching: Group multiple requests to improve throughput and reduce per‑token overhead.

- Budget Alerts: Set up cost thresholds and alerts in your observability layer to avoid unexpected bills.

- Ethical Deployment: Respect user data privacy. Use on‑device or local models for sensitive information and ensure compliance with regulations.
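The caching and budget‑alert tips above can be illustrated on the client side with two small helpers. This is a sketch under stated assumptions: `PromptCache` and `BudgetTracker` are hypothetical names, the cache is an in‑memory exact‑match cache (separate from any caching the provider does on its side), and the price passed to the tracker is whatever your provider charges.

```python
import hashlib
from typing import Callable


class PromptCache:
    """In-memory cache keyed by a hash of (model, prompt)."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0

    def get_or_call(self, model: str, prompt: str,
                    call_model: Callable[[str, str], str]) -> str:
        key = hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1  # repeated prompt: no API call, no token cost
            return self._store[key]
        reply = call_model(model, prompt)
        self._store[key] = reply
        return reply


class BudgetTracker:
    """Accumulates estimated spend and reports when a limit is reached."""

    def __init__(self, monthly_limit_usd: float, price_per_million_tokens: float) -> None:
        self.monthly_limit_usd = monthly_limit_usd
        self.price_per_million_tokens = price_per_million_tokens
        self.spent_usd = 0.0

    def record(self, tokens: int) -> bool:
        """Add usage; return True once spend has reached the limit."""
        self.spent_usd += tokens / 1_000_000 * self.price_per_million_tokens
        return self.spent_usd >= self.monthly_limit_usd
```

An exact‑match cache only helps when identical prompts recur (FAQ bots, templated queries), and it is best suited to deterministic settings where returning a stored reply is acceptable. The boolean returned by `record` can be wired to whatever alerting your observability layer provides.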

Expert Insights

- Pilot first: Start with non‑mission‑critical features to gauge cost and performance before scaling.

- Community resources: Participate in developer forums, attend webinars and watch videos on SLM integration to stay up to date. Leading AI educators emphasise the importance of sharing best practices to accelerate adoption.

- Long‑term vision: Plan for a hybrid architecture that can adjust as models evolve. You might start with a mini model and later upgrade to a reasoning engine or multi‑modal powerhouse as your needs grow.

Conclusion

Small and efficient models are reshaping the AI landscape. They enable fast, affordable and private inference, opening the door for startups, enterprises and researchers to build AI‑powered products without the heavy infrastructure of giant models. From chatbots and document summarizers to multimodal mobile apps and enterprise AI workers, SLMs unlock a wide range of possibilities. The ecosystem of providers, from Clarifai’s hybrid Reasoning Engine and Local Runners to open‑source gems like Gemma and Phi‑4, offers choices tailored to every need.

Moving forward, we expect to see multimodal SLMs, ultra‑long context windows, agentic workflows and decentralized inference become mainstream. Regulatory pressures and sustainability concerns will drive adoption of privacy‑preserving and energy‑efficient architectures. By staying informed, leveraging best practices and partnering with flexible platforms such as Clarifai, you can harness the power of small models to deliver big impact.

FAQs

What’s the difference between an SLM and a traditional LLM? Large language models have tens or hundreds of billions of parameters and require substantial compute. SLMs have far fewer parameters (often under 10 B) and are optimized for deployment on constrained hardware.

How much can I save by using a small model? Savings depend on provider and task, but case studies indicate up to 11× cheaper inference compared with using top‑tier large models. Clarifai’s Reasoning Engine costs about $0.16 per million tokens, highlighting the cost advantage.

Are SLMs good enough for complex reasoning? Distillation and better training data have narrowed the gap in reasoning ability. Models like Phi‑4 mini and Gemma‑3n deliver performance comparable to 7 B–9 B models, while mini versions of frontier models maintain high benchmark scores at lower cost. For the most demanding tasks, combining a small model for draft reasoning with a larger model for final verification (speculative decoding) is effective.

How do I run a model locally? Clarifai’s Local Runners let you deploy models on your hardware. Download the runner, connect it to your Clarifai account and expose an endpoint. Data stays on‑premise, reducing cloud costs and ensuring compliance.

Can I upload my own model? Yes. Clarifai’s platform allows you to upload any compatible model and receive a production‑ready API endpoint. You can then monitor and scale it using Clarifai’s compute orchestration.

What’s the future of small models? Expect multimodal, long‑context, energy‑efficient and agentic SLMs to become mainstream. Hybrid architectures that combine local and cloud inference will dominate as privacy and sustainability become paramount.