
AI applications possess unparalleled computational capabilities that can propel progress at an unprecedented pace. However, these tools rely heavily on energy-intensive data centers for their operations, resulting in a concerning lack of energy sensitivity that contributes significantly to their carbon footprint. Surprisingly, these AI applications already account for a substantial 2.5 to 3.7 percent of global greenhouse gas emissions, surpassing the emissions from the aviation industry.

And unfortunately, this carbon footprint is increasing at a fast pace.

The pressing need right now is to measure the carbon footprint of machine learning applications, as emphasized by Peter Drucker’s wisdom that “You can’t manage what you can’t measure.” Currently, there is a significant lack of clarity in quantifying the environmental impact of AI, with precise figures eluding us.

In addition to measuring the carbon footprint, the AI industry’s leaders must actively focus on optimizing it. This dual approach is vital to addressing the environmental concerns surrounding AI applications and ensuring a more sustainable path forward.

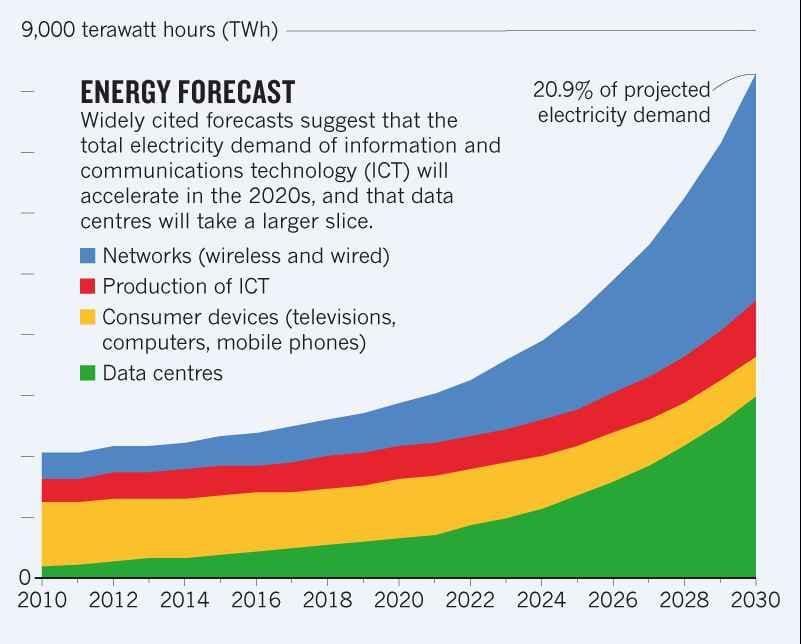

The increased use of machine learning requires more data centers, many of which are power hungry and thus have a significant carbon footprint. Data centers accounted for 0.9 to 1.3 percent of global electricity usage in 2021.

A 2021 study estimated that this usage can increase to 1.86 percent by 2030. This figure represents the increasing trend of energy demand due to data centers:

© Energy consumption trend and share of use for data centers

Notably, the higher the energy consumption, the higher the carbon footprint will be. Data centers heat up during processing and can become faulty or even stop functioning due to overheating. Hence, they need cooling, which requires additional energy. Around 40 percent of the electricity consumed by data centers is for air conditioning.

Given the increasing footprint of AI usage, these tools’ carbon intensity needs to be accounted for. Currently, the research on this subject is limited to analyses of a few models and does not adequately address the diversity of those models.

Here is an evolved methodology and a few effective tools to compute the carbon intensity of AI systems.

The Software Carbon Intensity (SCI) standard is an effective approach for estimating the carbon intensity of AI systems. Unlike conventional methodologies, which employ an attributional carbon accounting approach, it uses a consequential computing approach.

The consequential approach attempts to calculate the marginal change in emissions arising from an intervention or decision, such as the decision to generate an extra unit. Attribution, by contrast, refers to accounting with average intensity data or static inventories of emissions.

A paper on “Measuring the Carbon Intensity of AI in Cloud Instances” by Jesse Dodge et al. has employed this methodology to bring in more informed research. Since a significant amount of AI model training is conducted on cloud computing instances, it can be a valid framework to compute the carbon footprint of AI models. The paper refines the SCI formula for such estimations as:

$$SCI = \frac{(E \times I) + M}{R}$$

which is refined from:

$$SCI = \frac{O + M}{R}$$

that derives from

$$SCI = \frac{C}{R}$$

where:

E: Energy consumed by a software system, primarily by graphics processing units (GPUs), which are specialized ML hardware.

I: Location-based marginal carbon emissions of the grid powering the datacenter.

M: Embedded or embodied carbon, which is the carbon emitted during usage, creation, and disposal of hardware.

R: Functional unit, which in this case is one machine learning training task.

C = O + M, where O equals E*I
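As a rough illustration of how these definitions combine, the sketch below computes O = E*I, C = O + M, and then expresses C per functional unit R. The function names, units (kWh and grams of CO2-equivalent), and all input values are assumptions made for the example; none of them come from the paper.

```python
def operational_emissions(energy_kwh, intensity_g_per_kwh):
    """O = E * I, in grams of CO2-equivalent."""
    return energy_kwh * intensity_g_per_kwh


def sci(energy_kwh, intensity_g_per_kwh, embodied_g, functional_units):
    """SCI = ((E * I) + M) per R."""
    if functional_units <= 0:
        raise ValueError("functional_units (R) must be positive")
    # C = O + M: operational plus embodied emissions.
    total_g = operational_emissions(energy_kwh, intensity_g_per_kwh) + embodied_g
    return total_g / functional_units


# Hypothetical inputs for a single training task (R = 1).
example_sci = sci(
    energy_kwh=40.0,            # E: assumed energy drawn during training
    intensity_g_per_kwh=400.0,  # I: assumed marginal grid intensity
    embodied_g=2000.0,          # M: assumed share of embodied carbon
    functional_units=1,         # R: one training task
)
print(example_sci)
```

With these made-up numbers the operational term dominates, which is why the choice of grid (I) and the energy drawn (E) matter so much in practice.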

The paper uses the formula to estimate the electricity usage of a single cloud instance. In ML systems based on deep learning, most of the electricity consumption is attributable to the GPU, which is included in this formula. To test the application of this formula, the authors trained a BERT-base model using a single NVIDIA TITAN X GPU (12 GB) in a commodity server with two Intel Xeon E5-2630 v3 CPUs (2.4GHz) and 256GB RAM (16x16GB DIMMs). The following figure shows the results of this experiment:

© Energy consumption and split between components of a server

The GPU claims 74 percent of the energy consumption. Although the paper’s authors still consider this an underestimation, including the GPU is a step in the right direction. It is not the focus of conventional estimation techniques, which means that a major contributor to the carbon footprint is being overlooked in the estimations. Evidently, SCI offers a more complete and reliable computation of carbon intensity.

AI model training is often conducted on cloud compute instances, as the cloud makes it flexible, accessible, and cost-efficient. Cloud computing provides the infrastructure and resources to deploy and train AI models at scale. That’s why model training on cloud computing is steadily increasing.

It’s important to measure the real-time carbon intensity of cloud compute instances to identify areas suitable for mitigation efforts. Accounting for time-based and location-specific marginal emissions per unit of energy can help calculate operational carbon emissions, as done by a 2022 paper.
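One simple way to picture time-based accounting is to multiply the energy used in each interval by the marginal intensity of the grid in that same interval and sum the results. The sketch below does this under stated assumptions: the function name, the hourly granularity, and every number are hypothetical, and it is not the exact procedure of the paper.

```python
def time_based_emissions_g(energy_kwh_by_interval, intensity_g_per_kwh_by_interval):
    """Sum E_t * I_t over matching time intervals (grams of CO2-equivalent)."""
    if len(energy_kwh_by_interval) != len(intensity_g_per_kwh_by_interval):
        raise ValueError("both series must cover the same intervals")
    return sum(
        energy * intensity
        for energy, intensity in zip(energy_kwh_by_interval, intensity_g_per_kwh_by_interval)
    )


# Hypothetical hourly readings for one cloud instance in one region.
energy_kwh = [0.25, 0.75, 0.5, 0.5]
marginal_intensity = [300.0, 400.0, 200.0, 250.0]

time_based = time_based_emissions_g(energy_kwh, marginal_intensity)

# For comparison: the same energy multiplied by a flat average intensity.
flat_average = sum(energy_kwh) * (sum(marginal_intensity) / len(marginal_intensity))

print(time_based, flat_average)
```

The two totals differ whenever heavy usage coincides with dirtier or cleaner hours, which is the information a flat average hides.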

An open-source tool, Cloud Carbon Footprint (CCF), is also available to compute the impact of cloud instances.

Here are 7 ways to optimize the carbon intensity of AI systems.

1. Write better, more efficient code

Optimized code can reduce energy consumption by 30 percent through decreased memory and processor usage. Writing carbon-efficient code involves optimizing algorithms for faster execution, reducing unnecessary computations, and selecting energy-efficient hardware to perform tasks with less power.

Developers can use profiling tools to identify performance bottlenecks and areas for optimization in their code. This process can lead to more energy-efficient software. Also, consider implementing energy-aware programming techniques, where code is designed to adapt to the available resources and prioritize energy-efficient execution paths.
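As a minimal sketch of the profiling step, the example below uses Python’s built-in `cProfile` and `pstats` modules to inspect a deliberately wasteful function and compares it with a version that avoids the unnecessary work. The two functions are toy examples invented for illustration, and CPU time is used here only as a rough proxy for energy.

```python
import cProfile
import io
import pstats


def slow_sum_of_squares(n):
    """Deliberately wasteful: builds a full list, then sums it."""
    squares = []
    for i in range(n):
        squares.append(i * i)
    return sum(squares)


def closed_form_sum_of_squares(n):
    """Same result for 0..n-1 without any loop."""
    return (n - 1) * n * (2 * n - 1) // 6


def profile_call(func, *args):
    """Run func under cProfile; return its result and a text report."""
    profiler = cProfile.Profile()
    result = profiler.runcall(func, *args)
    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    stats.sort_stats("cumulative").print_stats(5)  # top 5 entries only
    return result, buffer.getvalue()


result, report = profile_call(slow_sum_of_squares, 200_000)
print(report)
print(result == closed_form_sum_of_squares(200_000))
```

The report lists where the time goes (here, the loop and the repeated `append` calls), which is the kind of hotspot worth rewriting first.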

2. Select a more efficient model

Choosing the right algorithms and data structures is crucial. Developers should opt for algorithms that minimize computational complexity and, consequently, energy consumption. If the more complex model yields only a 3-5% improvement but takes 2-3x more time to train, pick the simpler and faster model.

Model distillation is another technique for condensing large models into smaller versions to make them more efficient while retaining essential knowledge. It can be achieved by training a small model to mimic the large one. A related technique, pruning, removes unnecessary connections from a neural network.
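To make "training a small model to mimic the large one" more concrete, here is a minimal sketch of a soft-target loss of the kind commonly used for distillation: the student is penalized by the KL divergence between the teacher’s and its own temperature-softened output distributions. The article does not specify a loss, so the formulation, the temperature value, and the logits below are all assumptions for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return sum(
        p * math.log(p / q)
        for p, q in zip(teacher, student)
        if p > 0.0
    )


# Hypothetical logits for a 3-class problem.
teacher_logits = [4.0, 1.0, 0.5]
good_student = [3.8, 1.1, 0.4]   # close to the teacher
poor_student = [0.5, 4.0, 1.0]   # disagrees with the teacher

print(distillation_loss(good_student, teacher_logits))
print(distillation_loss(poor_student, teacher_logits))
```

In a real training loop this term would typically be combined with the ordinary loss on the true labels and minimized with a deep learning framework; that part is omitted here.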

3. Tune model parameters

Tune hyperparameters for the model using dual-objective optimization that balances model performance (e.g., accuracy) and energy consumption. This dual-objective approach ensures that you are not sacrificing one for the other, making your models more efficient.
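One possible way to treat accuracy and energy as joint objectives is to keep only the trials that are not beaten on both at once (the Pareto front) and then choose among those. The sketch below assumes each tuning trial has already been run and measured; the trial names, accuracies, and energy figures are hypothetical.

```python
from typing import NamedTuple


class Trial(NamedTuple):
    name: str
    accuracy: float    # higher is better
    energy_kwh: float  # lower is better


def dominates(a, b):
    """True if a is at least as good as b on both objectives and better on one."""
    no_worse = a.accuracy >= b.accuracy and a.energy_kwh <= b.energy_kwh
    strictly_better = a.accuracy > b.accuracy or a.energy_kwh < b.energy_kwh
    return no_worse and strictly_better


def pareto_front(trials):
    """Keep the trials that no other trial dominates."""
    return [t for t in trials if not any(dominates(other, t) for other in trials)]


# Hypothetical results from a hyperparameter search.
trials = [
    Trial("lr=1e-3, layers=2", 0.90, 1.0),
    Trial("lr=1e-3, layers=4", 0.92, 2.5),
    Trial("lr=1e-2, layers=4", 0.89, 2.4),
    Trial("lr=1e-4, layers=8", 0.93, 6.0),
]

front = pareto_front(trials)
for trial in front:
    print(trial)
```

Here the third configuration drops out because the first one is both more accurate and cheaper; picking among the remaining three is then an explicit trade-off rather than an accident of tuning for accuracy alone.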

Leverage techniques like Parameter-Efficient Fine-Tuning (PEFT), whose goal is to attain performance similar to traditional fine-tuning but with a reduced number of trainable parameters. This approach involves fine-tuning a small subset of model parameters while keeping the majority of the pre-trained Large Language Model (LLM) frozen, resulting in significant reductions in computational resources and energy consumption.
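A back-of-the-envelope calculation shows why the number of trainable parameters drops so sharply. The sketch below uses low-rank adaptation (LoRA) as the example; LoRA is one PEFT method that the article does not name, and the layer size and rank are assumed values chosen for illustration.

```python
def full_finetune_params(d_out, d_in):
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Trainable parameters for two low-rank factors (d_out x r) and (r x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical single projection layer of a large language model.
d_out, d_in, rank = 4096, 4096, 8

full = full_finetune_params(d_out, d_in)
adapted = lora_params(d_out, d_in, rank)
fraction = adapted / full

print(full, adapted, fraction)
```

Under these assumptions, the adapter trains well under one percent of the layer’s weights, while the original matrix stays frozen.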

4. Compress data and use low-energy storage

Implement data compression techniques to reduce the amount of data transmitted. Compressed data requires less energy to transfer and occupies less space on disk. During the model serving phase, using a cache can help reduce the calls made to the online storage layer.
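Both ideas can be sketched with the Python standard library: `gzip` for compressing a payload before it is stored or sent, and `functools.lru_cache` for avoiding repeated reads during serving. The in-memory dictionary stands in for an online storage layer, and the record layout and key names are hypothetical.

```python
import gzip
import json
from functools import lru_cache

# Compression: a repetitive JSON payload shrinks considerably.
records = [{"id": i, "label": "cat", "score": 0.5} for i in range(1000)]
raw = json.dumps(records).encode("utf-8")
compressed = gzip.compress(raw)
print(len(raw), len(compressed))

# Caching: a hypothetical stand-in for an online feature store.
FAKE_STORE = {"features:42": (0.1, 0.7, 0.2)}
store_reads = {"count": 0}


@lru_cache(maxsize=1024)
def fetch_features(key):
    """Read from the store only on a cache miss."""
    store_reads["count"] += 1
    return FAKE_STORE[key]


fetch_features("features:42")  # miss: goes to the store
fetch_features("features:42")  # hit: served from memory
print(store_reads["count"])
```

A cache like this only helps when the same keys are requested repeatedly and slightly stale values are acceptable, so the size and invalidation policy need to match the serving workload.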

Moreover, choosing the right storage technology can result in significant gains. For example, AWS Glacier is an efficient data archiving solution and can be a more sustainable approach than using S3 if the data does not need to be accessed frequently.

5. Train models on cleaner energy

If you are using a cloud service for model training, you can choose the region in which to run computations. Choose a region that employs renewable energy sources for this purpose, and you can reduce the emissions by up to 30 times. An AWS blog post outlines the balance between optimizing for business and sustainability goals.

Another option is to select the opportune time to run the model. At certain times of the day, the energy is cleaner. Such data can be acquired through a paid service such as Electricity Map, which offers access to real-time data and future predictions regarding the carbon intensity of electricity in different regions.
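Once carbon intensity figures are available, choosing where and when to train becomes a small search problem. The sketch below assumes the data has already been fetched from some provider; the region names, intensity values, and forecast are invented, and no real service API is called.

```python
def cleanest_region(intensity_by_region):
    """Return the region with the lowest carbon intensity."""
    return min(intensity_by_region, key=intensity_by_region.get)


def cleanest_window(forecast, duration_hours):
    """Return (start_hour, mean_intensity) of the cleanest contiguous window."""
    if not 0 < duration_hours <= len(forecast):
        raise ValueError("duration must fit inside the forecast")
    starts = range(len(forecast) - duration_hours + 1)
    best = min(starts, key=lambda s: sum(forecast[s:s + duration_hours]))
    mean = sum(forecast[best:best + duration_hours]) / duration_hours
    return best, mean


# Hypothetical grid intensities in gCO2e/kWh.
regions = {"region-a": 450.0, "region-b": 30.0, "region-c": 210.0}
hourly_forecast = [420.0, 390.0, 310.0, 180.0, 150.0, 170.0, 260.0, 400.0]

best_region = cleanest_region(regions)
start_hour, mean_intensity = cleanest_window(hourly_forecast, duration_hours=3)
print(best_region, start_hour, mean_intensity)
```

In practice the choice also has to respect data residency, latency, and cost constraints, so the cleanest option is a candidate rather than an automatic pick.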

6. Use specialized data centers and hardware for model training

Choosing more efficient data centers and hardware can make a huge difference in carbon intensity. ML-specific data centers and hardware can be 1.4-2 and 2-5 times more energy efficient, respectively, than general-purpose ones.

7. Use serverless deployments like AWS Lambda, Azure Functions

Traditional deployments require the server to be always on, which means 24×7 energy consumption. Serverless deployments like AWS Lambda and Azure Functions run only when invoked and work just fine with minimal carbon intensity.

The AI sector is experiencing exponential growth, permeating every facet of business and daily existence. However, this expansion comes at a cost: a burgeoning carbon footprint that threatens to steer us further away from the goal of limiting global temperature increases to just 1°C.

This carbon footprint is not just a present concern; its repercussions may extend across generations, affecting those who bear no responsibility for its creation. Therefore, it becomes imperative to take decisive actions to mitigate AI-related carbon emissions and explore sustainable avenues for harnessing its potential. It is crucial to ensure that AI’s benefits do not come at the expense of the environment and the well-being of future generations.

Ankur Gupta is an engineering leader with a decade of experience spanning the sustainability, transportation, telecommunication, and infrastructure domains; he currently holds the position of Engineering Manager at Uber. In this role, he plays a pivotal part in driving the advancement of Uber’s Vehicles Platform, leading the charge towards a zero-emissions future through the integration of cutting-edge electric and connected vehicles.