Google expanded its Gemini mannequin household with the discharge of Gemini Embedding 2. This second-generation mannequin succeeds the text-only gemini-embedding-001 and is designed particularly to handle the high-dimensional storage and cross-modal retrieval challenges confronted by AI builders constructing production-grade Retrieval-Augmented Era (RAG) techniques. The Gemini Embedding 2 launch marks a big technical shift in how embedding fashions are architected, transferring away from modality-specific pipelines towards a unified, natively multimodal latent house.

Native Multimodality and Interleaved Inputs

The first architectural development in Gemini Embedding 2 is its potential to map 5 distinct media varieties—Textual content, Picture, Video, Audio, and PDF—right into a single, high-dimensional vector house. This eliminates the necessity for advanced pipelines that beforehand required separate fashions for various information varieties, resembling CLIP for photographs and BERT-based fashions for textual content.

The mannequin helps interleaved inputs, permitting builders to mix completely different modalities in a single embedding request. That is notably related to be used circumstances the place textual content alone doesn’t present enough context. The technical limits for these inputs are outlined as:

- Textual content: As much as 8,192 tokens per request.

- Pictures: As much as 6 photographs (PNG, JPEG, WebP, HEIC/HEIF).

- Video: As much as 120 seconds of video (MP4, MOV, and so on.).

- Audio: As much as 80 seconds of native audio (MP3, WAV, and so on.) with out requiring a separate transcription step.

- Paperwork: As much as 6 pages of PDF recordsdata.

By processing these inputs natively, Gemini Embedding 2 captures the semantic relationships between a visible body in a video and the spoken dialogue in an audio observe, projecting them as a single vector that may be in contrast towards textual content queries utilizing customary distance metrics like Cosine Similarity.

Effectivity through Matryoshka Illustration Studying (MRL)

Storage and compute prices are sometimes the first bottlenecks in large-scale vector search. To mitigate this, Gemini Embedding 2 implements Matryoshka Illustration Studying (MRL).

Customary embedding fashions distribute semantic data evenly throughout all dimensions. If a developer truncates a 3,072-dimension vector to 768 dimensions, the accuracy usually collapses as a result of the data is misplaced. In distinction, Gemini Embedding 2 is skilled to pack essentially the most important semantic data into the earliest dimensions of the vector.

The mannequin defaults to 3,072 dimensions, however Google crew has optimized three particular tiers for manufacturing use:

- 3,072: Most precision for advanced authorized, medical, or technical datasets.

- 1,536: A steadiness of efficiency and storage effectivity.

- 768: Optimized for low-latency retrieval and lowered reminiscence footprint.

Matryoshka Illustration Studying (MRL) allows a ‘short-listing’ structure. A system can carry out a rough, high-speed search throughout thousands and thousands of things utilizing the 768-dimension sub-vectors, then carry out a exact re-ranking of the highest outcomes utilizing the complete 3,072-dimension embeddings. This reduces the computational overhead of the preliminary retrieval stage with out sacrificing the ultimate accuracy of the RAG pipeline.

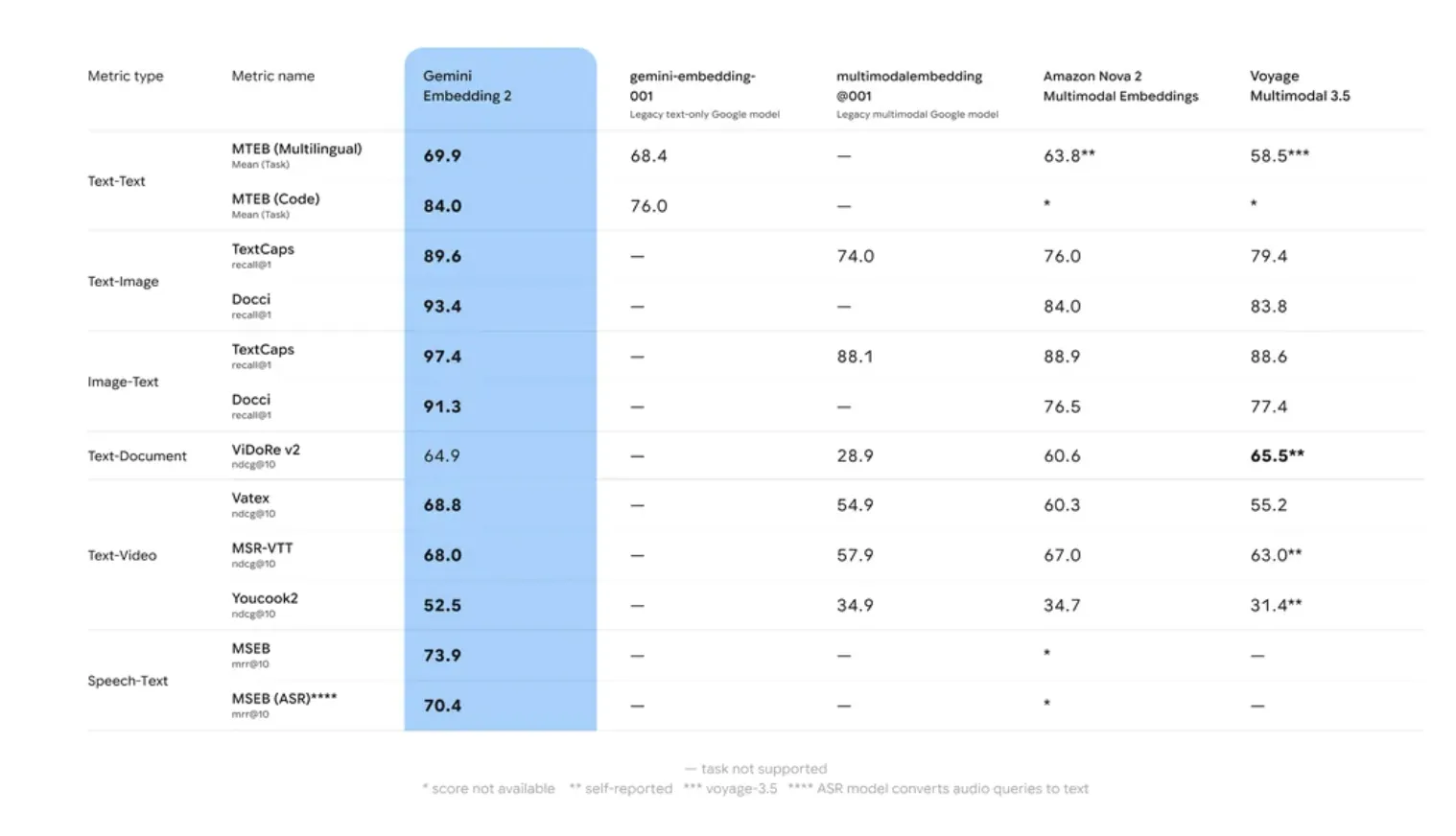

Benchmarking: MTEB and Lengthy-Context Retrieval

Google AI’s inner analysis and efficiency on the Large Textual content Embedding Benchmark (MTEB) point out that Gemini Embedding 2 outperforms its predecessor in two particular areas: Retrieval Accuracy and Robustness to Area Shift.

Many embedding fashions undergo from ‘area drift,’ the place accuracy drops when transferring from generic coaching information (like Wikipedia) to specialised domains (like proprietary codebases). Gemini Embedding 2 utilized a multi-stage coaching course of involving numerous datasets to make sure greater zero-shot efficiency throughout specialised duties.

The mannequin’s 8,192-token window is a important specification for RAG. It permits for the embedding of bigger ‘chunks’ of textual content, which preserves the context crucial for resolving coreferences and long-range dependencies inside a doc. This reduces the chance of ‘context fragmentation,’ a standard difficulty the place a retrieved chunk lacks the data wanted for the LLM to generate a coherent reply.

Key Takeaways

- Native Multimodality: Gemini Embedding 2 helps 5 distinct media varieties—Textual content, Picture, Video, Audio, and PDF—inside a unified vector house. This enables for interleaved inputs (e.g., a picture mixed with a textual content caption) to be processed as a single embedding with out separate mannequin pipelines.

- Matryoshka Illustration Studying (MRL): The mannequin is architected to retailer essentially the most important semantic data within the early dimensions of a vector. Whereas it defaults to 3,072 dimensions, it helps environment friendly truncation to 1,536 or 768 dimensions with minimal loss in accuracy, decreasing storage prices and growing retrieval velocity.

- Expanded Context and Efficiency: The mannequin options an 8,192-token enter window, permitting for bigger textual content ‘chunks’ in RAG pipelines. It exhibits vital efficiency enhancements on the Large Textual content Embedding Benchmark (MTEB), particularly in retrieval accuracy and dealing with specialised domains like code or technical documentation.

- Job-Particular Optimization: Builders can use

task_typeparameters (resemblingRETRIEVAL_QUERY,RETRIEVAL_DOCUMENT, orCLASSIFICATION) to supply hints to the mannequin. This optimizes the vector’s mathematical properties for the particular operation, bettering the “hit fee” in semantic search.

Take a look at Technical particulars, in Public Preview through the Gemini API and Vertex AI. Additionally, be happy to comply with us on Twitter and don’t overlook to affix our 120k+ ML SubReddit and Subscribe to our Publication. Wait! are you on telegram? now you may be a part of us on telegram as properly.