Context Engineering Defined in 3 Ranges of Problem | Picture by Creator

# Introduction

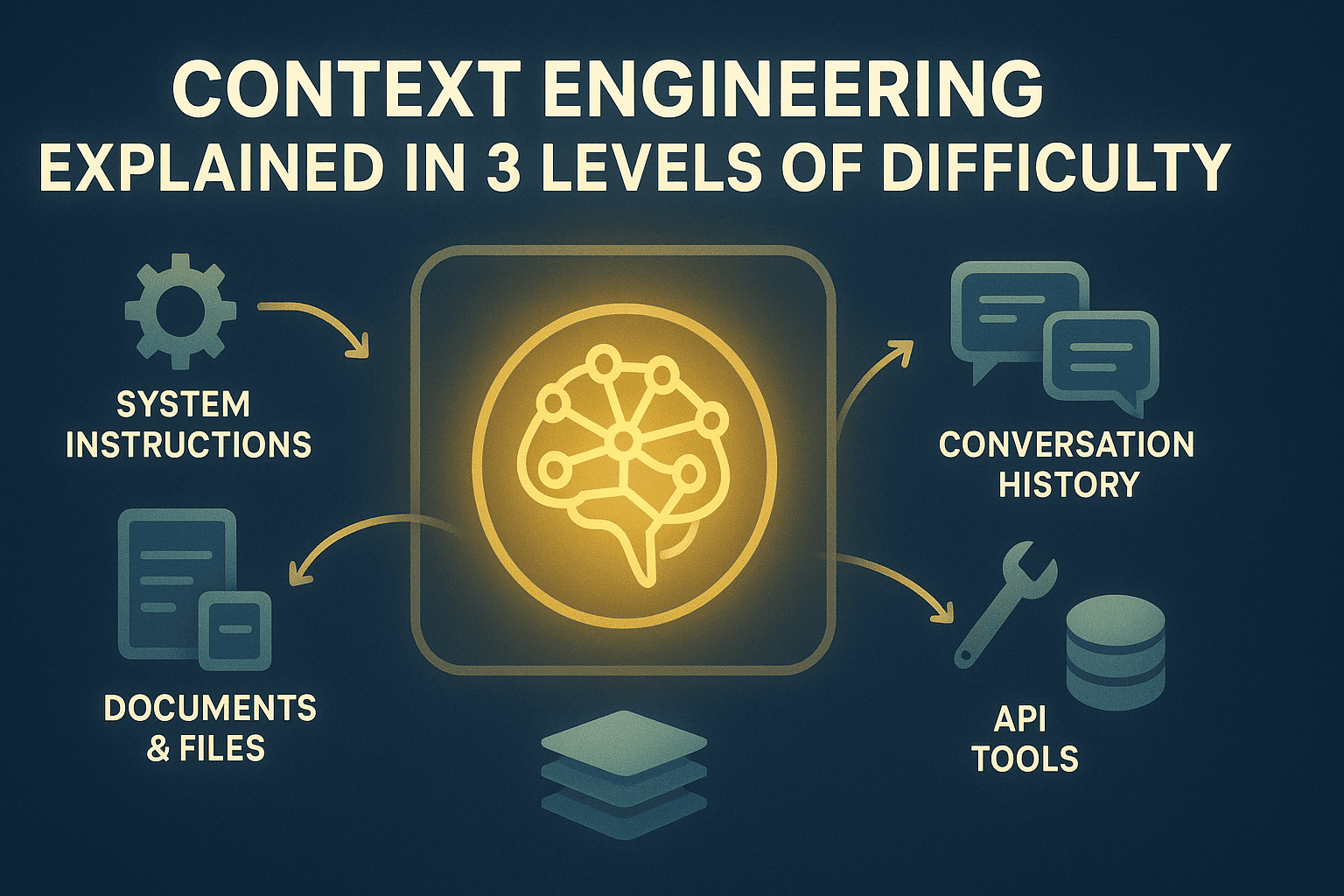

Massive language mannequin (LLM) functions hit context window limits continually. The mannequin forgets earlier directions, loses monitor of related data, or degrades in high quality as interactions lengthen. It’s because LLMs have mounted token budgets, however functions generate unbounded data — dialog historical past, retrieved paperwork, file uploads, utility programming interface (API) responses, and consumer information. With out administration, necessary data will get randomly truncated or by no means enters context in any respect.

Context engineering treats the context window as a managed useful resource with express allocation insurance policies and reminiscence methods. You determine what data enters context, when it enters, how lengthy it stays, and what will get compressed or archived to exterior reminiscence for retrieval. This orchestrates data movement throughout the applying’s runtime slightly than hoping every part matches or accepting degraded efficiency.

This text explains context engineering at three ranges:

- Understanding the basic necessity of context engineering

- Implementing sensible optimization methods in manufacturing methods

- Reviewing superior reminiscence architectures, retrieval methods, and optimization methods

The next sections discover these ranges intimately.

# Degree 1: Understanding The Context Bottleneck

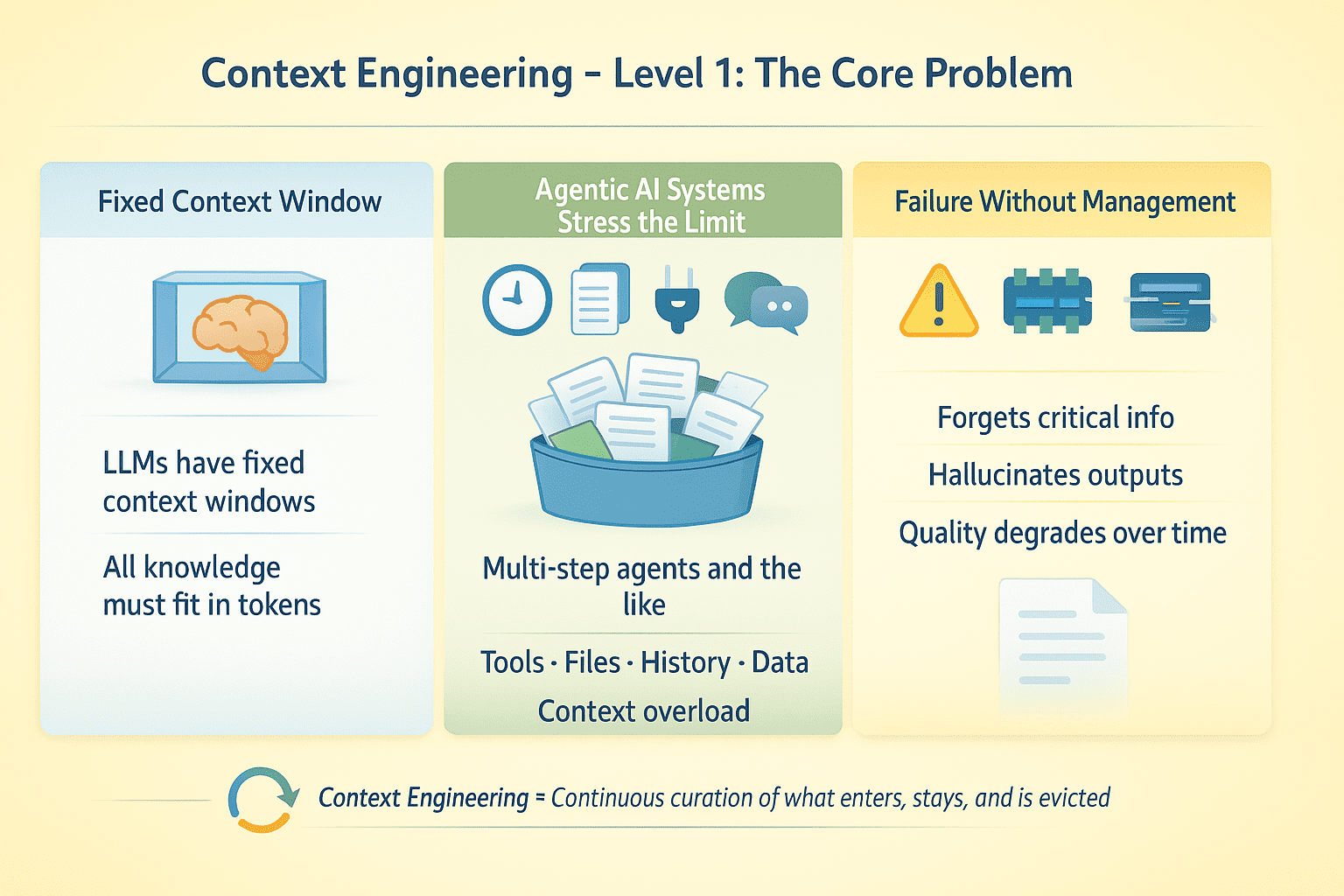

LLMs have mounted context home windows. The whole lot the mannequin is aware of at inference time should slot in these tokens. This isn’t a lot of an issue with single-turn completions. For retrieval-augmented era (RAG) functions and AI brokers working multi-step duties with device calls, file uploads, dialog historical past, and exterior information, this creates an optimization drawback: what data will get consideration and what will get discarded?

Say you may have an agent that runs for a number of steps, makes 50 API calls, and processes 10 paperwork. Such an agentic AI system will almost definitely fail with out express context administration. The mannequin forgets crucial data, hallucinates device outputs, or degrades in high quality because the dialog extends.

Context Engineering Degree 1 | Picture by Creator

Context engineering is about designing for steady curation of the data setting round an LLM all through its execution. This contains managing what enters context, when, for a way lengthy, and what will get evicted when area runs out.

# Degree 2: Optimizing Context In Observe

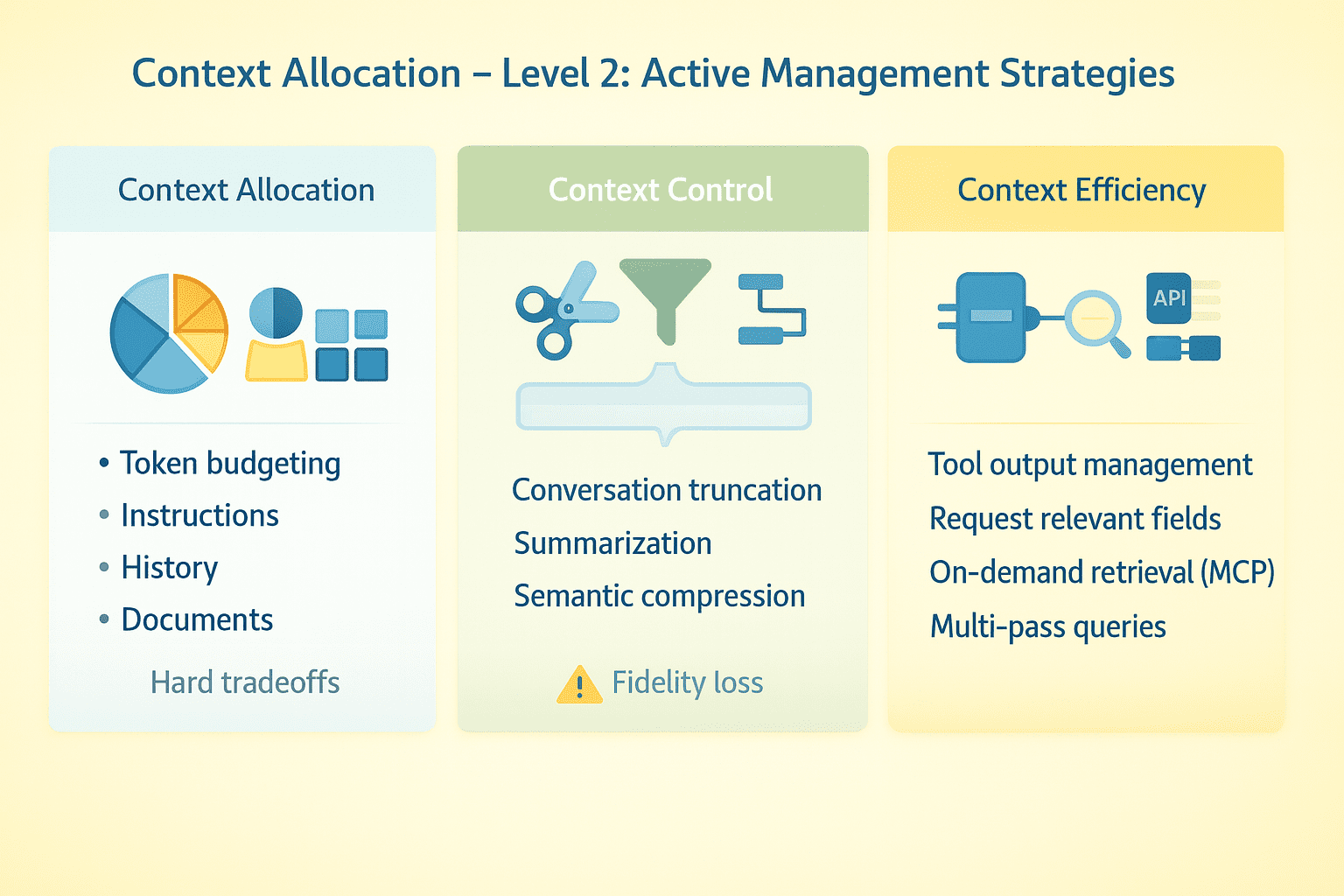

Efficient context engineering requires express methods throughout a number of dimensions.

// Budgeting Tokens

Allocate your context window intentionally. System directions would possibly take 2K tokens. Dialog historical past, device schemas, retrieved paperwork, and real-time information can all add up rapidly. With a really giant context window, there’s loads of headroom. With a a lot smaller window, you might be compelled to make laborious tradeoffs about what to maintain and what to drop.

// Truncating Conversations

Maintain current turns, drop center turns, and protect crucial early context. Summarization works however loses constancy. Some methods implement semantic compression — extracting key details slightly than preserving verbatim textual content. Take a look at the place your agent breaks as conversations lengthen.

// Managing Device Outputs

Massive API responses devour tokens quick. Request particular fields as an alternative of full payloads, truncate outcomes, summarize earlier than returning to the mannequin, or use multi-pass methods the place the agent first will get metadata then requests particulars for related gadgets solely.

// Utilizing The Mannequin Context Protocol And On-demand Retrieval

As a substitute of loading every part upfront, join the mannequin to exterior information sources it queries when wanted utilizing the mannequin context protocol (MCP). The agent decides what to fetch primarily based on activity necessities. This shifts the issue from “match every part in context” to “fetch the appropriate issues on the proper time.”

// Separating Structured States

Put steady directions in system messages. Put variable information in consumer messages the place it may be up to date or eliminated with out touching core directives. Deal with dialog historical past, device outputs, and retrieved paperwork as separate streams with impartial administration insurance policies.

Context Engineering Degree 2 | Picture by Creator

The sensible shift right here is to deal with context as a dynamic useful resource that wants energetic administration throughout an agent’s runtime, not a static factor you configure as soon as.

# Degree 3: Implementing Context Engineering In Manufacturing

Context engineering at scale requires refined reminiscence architectures, compression methods, and retrieval methods working in live performance. Right here is construct production-grade implementations.

// Designing Reminiscence Structure Patterns

Separate reminiscence in agentic AI methods into tiers:

- Working reminiscence (energetic context window)

- Episodic reminiscence (compressed dialog historical past and activity state)

- Semantic reminiscence (details, paperwork, data base)

- Procedural reminiscence (directions)

Working reminiscence is what the mannequin sees now, which is to be optimized for rapid activity wants. Episodic reminiscence shops what occurred. You may compress aggressively however protect temporal relationships and causal chains. For semantic reminiscence, retailer indexes by matter, entity, and relevance for quick retrieval.

// Making use of Compression Strategies

Naive summarization loses crucial particulars. A greater strategy is extractive compression, the place you determine and protect high-information-density sentences whereas discarding filler.

- For device outputs, extract structured information (entities, metrics, relationships) slightly than prose summaries.

- For conversations, protect consumer intents and agent commitments precisely whereas compressing reasoning chains.

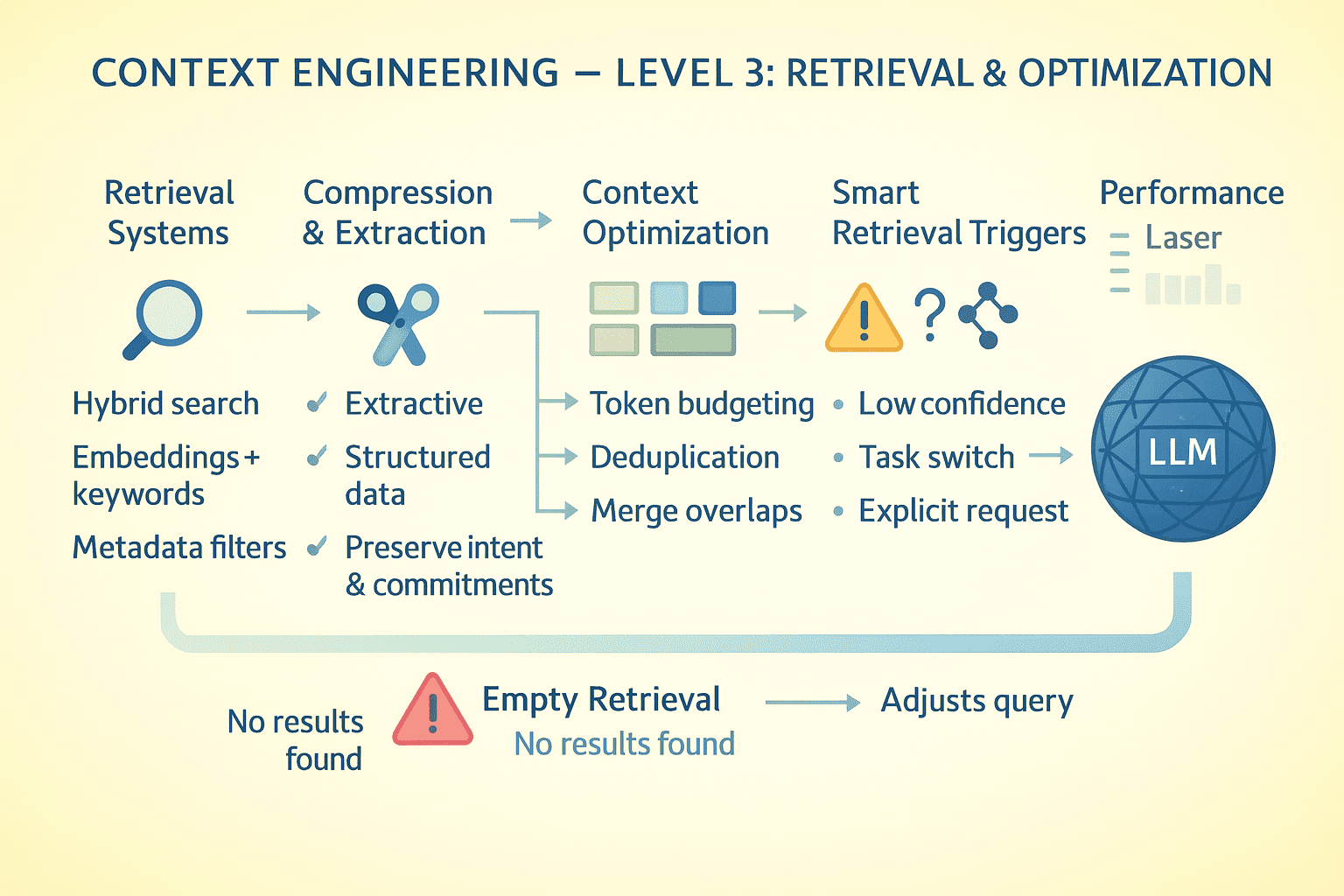

// Designing Retrieval Techniques

When the mannequin wants data not in context, retrieval high quality determines success. Implement hybrid search: dense embeddings for semantic similarity, BM25 for key phrase matching, and metadata filters for precision.

Rank outcomes by recency, relevance, and data density. Return prime Ok but additionally floor near-misses; the mannequin ought to know what virtually matched. Retrieval occurs in-context, so the mannequin sees question formulation and outcomes. Unhealthy queries produce dangerous outcomes; expose this to allow self-correction.

// Optimizing At The Token Degree

Profile your token utilization constantly.

- System directions consuming 5K tokens that could possibly be 1K? Rewrite them.

- Device schemas verbose? Use compact

JSONschemas as an alternative of fullOpenAPIspecs. - Dialog turns repeating related content material? Deduplicate.

- Retrieved paperwork overlapping? Merge earlier than including to context.

Each token saved is a token obtainable for task-critical data.

// Triggering Reminiscence Retrieval

The mannequin mustn’t retrieve continually; it’s costly and provides latency. Implement sensible triggers: retrieve when the mannequin explicitly requests data, when detecting data gaps, when activity switches happen, or when consumer references previous context.

When retrieval returns nothing helpful, the mannequin ought to know this explicitly slightly than hallucinating. Return empty outcomes with metadata: “No paperwork discovered matching question X in data base Y.” This lets the mannequin regulate technique by reformulating the question, looking out a unique supply, or informing the consumer the data shouldn’t be obtainable.

Context Engineering Degree 3 | Picture by Creator

// Synthesizing Multi-document Info

When reasoning requires a number of sources, course of hierarchically.

- First go: extract key details from every doc independently (parallelizable).

- Second go: load extracted details into context and synthesize.

This avoids context exhaustion from loading 10 full paperwork whereas preserving multi-source reasoning functionality. For contradictory sources, protect the contradiction. Let the mannequin see conflicting data and resolve it or flag it for consumer consideration.

// Persisting Dialog State

For brokers that pause and resume, serialize context state to exterior storage. Save compressed dialog historical past, present activity graph, device outputs, and retrieval cache. On resume, reconstruct minimal crucial context; don’t reload every part.

// Evaluating And Measuring Efficiency

Monitor key metrics to grasp how your context engineering technique is performing. Monitor context utilization to see the typical share of the window getting used, and eviction frequency to grasp how typically you might be hitting context limits. Measure retrieval precision by checking what fraction of retrieved paperwork are literally related and used. Lastly, monitor data persistence to see what number of turns necessary details survive earlier than being misplaced.

# Wrapping Up

Context engineering is finally about data structure. You’re constructing a system the place the mannequin has entry to every part in its context window and no entry to what’s not. Each design choice — what to compress, what to retrieve, what to cache, and what to discard — creates the data setting your utility operates in.

If you don’t give attention to context engineering, your system might hallucinate, neglect necessary particulars, or break down over time. Get it proper and also you get an LLM utility that stays coherent, dependable, and efficient throughout advanced, prolonged interactions regardless of its underlying architectural limits.

Comfortable context engineering!

# References And Additional Studying

Bala Priya C is a developer and technical author from India. She likes working on the intersection of math, programming, information science, and content material creation. Her areas of curiosity and experience embody DevOps, information science, and pure language processing. She enjoys studying, writing, coding, and occasional! At the moment, she’s engaged on studying and sharing her data with the developer group by authoring tutorials, how-to guides, opinion items, and extra. Bala additionally creates participating useful resource overviews and coding tutorials.