Image by Author

# Introduction

For years, artificial intelligence music generation was a complex research field, restricted to papers and prototypes. Today, that technology has stepped into the consumer spotlight. Leading this development is Google’s MusicFX DJ, a web-based application that translates text prompts into a continuous, controllable stream of music in real time. In this article, we look at MusicFX DJ from a technical perspective, exploring its user-facing features, the technology powering it, and what its development means for the field of data science.

# What Is MusicFX DJ?

MusicFX DJ is an experimental, web-based application developed by Google DeepMind in partnership with Google Labs. It represents a significant shift from single-output artificial intelligence music generators to an interactive, performance-oriented experience. The tool is designed to be accessible, requiring no prior music theory knowledge or digital audio workstation (DAW) experience.

At its core, MusicFX DJ functions like a generative mixing deck. Users can enter multiple text prompts like “funky bassline,” “ethereal synth pads,” and “driving hip-hop beat” and layer them simultaneously. The interface provides real-time fader-like controls for parameters such as intensity, “chaos,” and density, allowing users to shape the music as it plays. This real-time interactivity and high-quality 48 kHz stereo output differentiate it from earlier static generation tools.

AI Music Generation Goes Consumer with Google’s MusicFX DJ

# The Technology Behind the Beats: Lyria and Real-Time Diffusion

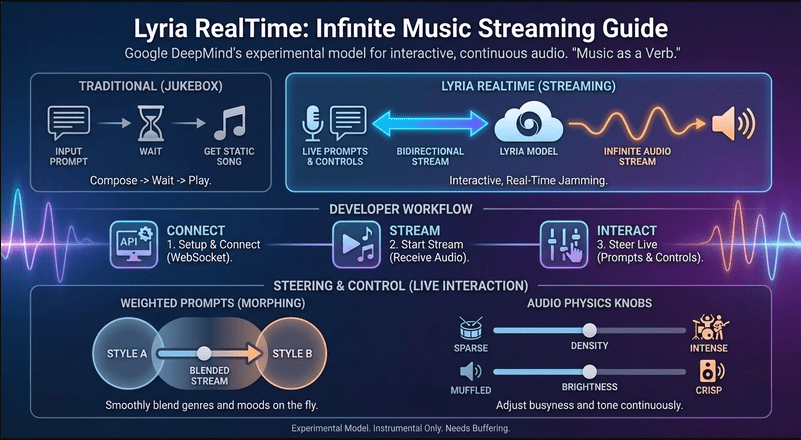

While Google has not released a full whitepaper on MusicFX DJ’s specific model, it is publicly known to be powered by the Lyria family of models, specifically Lyria RealTime. Understanding Lyria provides the key to the tool’s capabilities.

Lyria is Google DeepMind’s state-of-the-art music generation model. It is built on a diffusion model, which has become the leading approach for high-fidelity audio and image generation. Here is a simplified breakdown of how this technology likely works inside MusicFX DJ:

- Training Process: The model is trained on a massive dataset of music audio paired with text descriptions. It learns to associate patterns in the audio waveform (melody, harmony, timbre, rhythm) with semantic concepts from the text.

- Diffusion Process: Instead of generating music in a single step, a diffusion model works through a process of iterative refinement. It starts with pure noise (static) and gradually “denoises” it over many steps, transforming it into coherent music that matches the input text prompt.

- Real-Time Adaptation (Lyria RealTime): The standard Lyria model generates a full clip from a prompt. Lyria RealTime modifies this process for streaming. It likely generates short, overlapping segments of audio in a continuous loop, while a separate control process dynamically adjusts the generation parameters based on the user’s real-time input (changing prompts, sliders). This allows for seamless transitions and live remixing.

- Conditioning and Control: The “magic” of MusicFX DJ’s layering comes from conditional generation. The model is conditioned not on a single prompt but on a weighted combination of multiple prompts. When you adjust a fader for “funky bassline,” you are adjusting the weight of that condition in the model’s generation process, making that element more or less dominant in the output audio stream.


This architecture explains the tool’s professional-grade audio quality and its unique interactive feel; it is not just playing back pre-made clips but generating music on the fly in response to your commands.
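
One way to picture the short, overlapping segments mentioned earlier is a crossfade between consecutive chunks. The following is a minimal sketch under that assumption, not a description of how Lyria RealTime actually stitches audio; `crossfade_chunks` is a hypothetical helper, and the chunks are plain lists of samples.

```python
def crossfade_chunks(chunks, overlap):
    """Join audio chunks, blending `overlap` samples with a linear crossfade."""
    if not chunks:
        return []
    out = list(chunks[0])
    for chunk in chunks[1:]:
        if overlap > len(chunk) or overlap > len(out):
            raise ValueError("overlap is longer than a chunk")
        tail_start = len(out) - overlap
        for i in range(overlap):
            # Fade the old chunk out and the new chunk in.
            fade_in = (i + 1) / (overlap + 1)
            out[tail_start + i] = (
                (1.0 - fade_in) * out[tail_start + i] + fade_in * chunk[i]
            )
        out.extend(chunk[overlap:])
    return out

# Two constant "segments" so the blend is easy to inspect.
first = [1.0] * 6
second = [0.0] * 6
stream = crossfade_chunks([first, second], overlap=3)
# stream == [1.0, 1.0, 1.0, 0.75, 0.5, 0.25, 0.0, 0.0, 0.0]
```

Because each new chunk only needs to agree with the tail of the previous one, the generation settings can change between chunks without an audible cut.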

# How MusicFX DJ Works

Using MusicFX DJ feels less like programming an AI and more like conducting an orchestra or DJing a set. The workflow is intuitive:

- Prompt Layering: The first step involves adding up to ten different text prompts into separate tracks.

- Real-Time Generation: Upon starting, the tool immediately begins generating a continuous piece of music that incorporates elements from all active prompts.

- Interactive Mixing: Each prompt track has its own volume fader and specialized controls (e.g., “chaos” to add unpredictability, “density” to fill out the sound). Adjusting these in real time changes the music without stopping the flow.

- Dynamic Evolution: The music is not on a fixed loop. The machine learning model continuously evolves the composition, introducing variations and ensuring it does not become repetitive, all while respecting the user’s guiding prompts and slider positions.
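
The workflow above can be modeled as a small piece of state that the interface updates while audio streams. The sketch below is purely illustrative: `Deck`, `Track`, the 0-to-1 ranges, and the normalization step are assumptions, not MusicFX DJ’s actual internals. Only the ten-prompt limit and the control names come from the description above.

```python
from dataclasses import dataclass, field

MAX_TRACKS = 10  # the ten-prompt limit described above

def _clamp(value, low=0.0, high=1.0):
    return max(low, min(high, value))

@dataclass
class Track:
    prompt: str
    volume: float = 0.5   # fader position, assumed range 0..1
    chaos: float = 0.0    # assumed range 0..1
    density: float = 0.5  # assumed range 0..1

@dataclass
class Deck:
    tracks: list = field(default_factory=list)

    def add_track(self, prompt, volume=0.5):
        if len(self.tracks) >= MAX_TRACKS:
            raise ValueError(f"a deck holds at most {MAX_TRACKS} prompts")
        track = Track(prompt, _clamp(volume))
        self.tracks.append(track)
        return track

    def set_volume(self, prompt, volume):
        for track in self.tracks:
            if track.prompt == prompt:
                track.volume = _clamp(volume)
                return
        raise KeyError(prompt)

    def prompt_weights(self):
        """Normalize fader positions into weights that sum to 1."""
        total = sum(t.volume for t in self.tracks)
        if total == 0:
            return {t.prompt: 0.0 for t in self.tracks}
        return {t.prompt: t.volume / total for t in self.tracks}

deck = Deck()
deck.add_track("funky bassline", volume=0.75)
deck.add_track("driving hip-hop beat", volume=0.25)
weights = deck.prompt_weights()

deck.set_volume("driving hip-hop beat", 0.0)  # pull one fader down live
weights_after = deck.prompt_weights()
```

In a design like this, the generator would read `prompt_weights()` before producing each new stretch of audio, so a fader move takes effect on the next stretch without stopping playback.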

This design philosophy lowers the barrier to creative music exploration, making it a powerful tool for brainstorming, prototyping song ideas, or simply enjoying the process of guided musical discovery.

# Implications for Data Scientists and the AI Community

The launch of MusicFX DJ is more than a cool demo; it signals several important trends in applied AI.

- Consumerization of Complex Models: This demonstrates how cutting-edge research (diffusion models, large-scale audio training) can be packaged into intuitive applications. For data scientists, it highlights the importance of user experience (UX) design and real-time systems thinking in bringing artificial intelligence to a broad audience.

- Real-Time Controllable Generation: Moving from batch inference to real-time, interactive generation is a major technical challenge. MusicFX DJ shows that this is now possible for high-dimensional data like audio. This paves the way for similar interactive artificial intelligence in video, 3D design, and beyond.

- APIs and Democratization of Capability: Google has made the underlying Lyria RealTime model available via an application programming interface (API), initially through the Gemini API and AI Studio. This allows developers and data scientists to build their own applications on top of this powerful music generation engine, encouraging innovation in gaming, content creation, and interactive media.

- Ethical and Creative Considerations: The tool also brings pressing questions to center stage. How are the training datasets collected and curated? What are the copyright implications of AI-generated music? How do we ensure artists are compensated? By collaborating with musicians like Jacob Collier during development, Google highlighted a path where artificial intelligence augments rather than replaces human creativity.

# Conclusion

Google’s MusicFX DJ is a landmark application that successfully closes the gap between advanced artificial intelligence research and consumer-friendly creativity. By using the Lyria RealTime diffusion model, it delivers a unique, interactive music generation experience that feels both powerful and playful.

For data scientists, it serves as a compelling case study in real-time artificial intelligence system design, model conditioning, and the commercialization of generative technology. As the underlying models become accessible via API, we can expect a wave of new applications that further blur the line between human and machine-assisted art. The era of interactive, generative media is not in the future; it is here, and tools like MusicFX DJ are leading the way.


Shittu Olumide is a software engineer and technical writer passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.