Question:

Imagine your company's LLM API costs suddenly doubled last month. A deeper analysis shows that while user inputs look different at the text level, many of them are semantically similar. As an engineer, how would you identify and reduce this redundancy without impacting response quality?

What Is Prompt Caching?

Prompt caching is an optimization technique used in AI systems to improve speed and reduce cost. Instead of having the model reprocess the same long instructions, documents, or examples on every request, the system reuses previously processed prompt content such as static instructions, prompt prefixes, or shared context. This cuts the cost and latency of handling repeated input tokens while keeping responses consistent.

Consider a travel planning assistant where users frequently ask questions like “Create a 5-day itinerary for Paris focused on museums and food.” Even when different users phrase it slightly differently, the core intent and structure of the request remain the same. Without any optimization, the model has to read and process the full prompt every time, repeating the same computation and increasing both latency and cost.

With prompt caching, once the assistant processes this request the first time, the repeated parts of the prompt, such as the itinerary structure, constraints, and common instructions, are stored. When a later request shares that content, the system reuses the previously processed content instead of starting from scratch. This results in faster responses and lower API costs, while still delivering accurate and consistent outputs.

What Will get Cached and The place It’s Saved

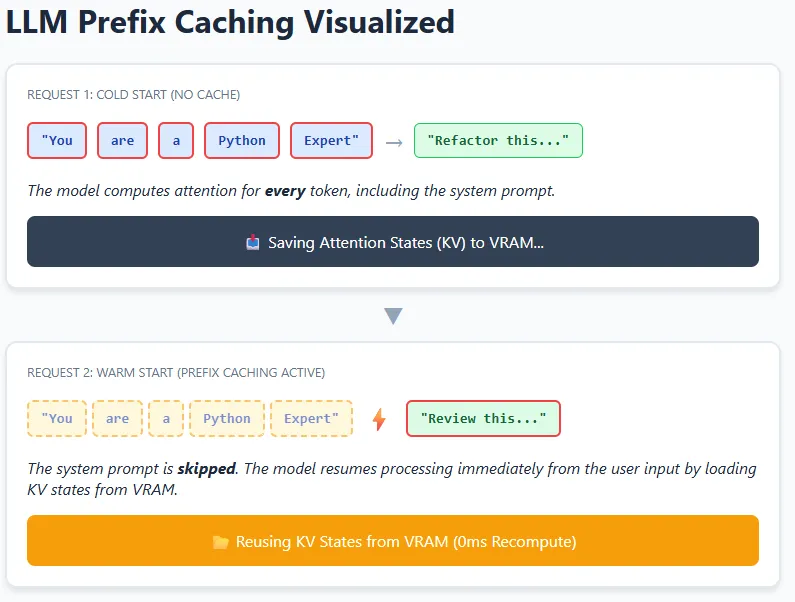

At a high level, caching in LLM systems can happen at different layers, ranging from simple token-level reuse to more advanced reuse of internal model states. In practice, modern LLMs primarily rely on Key-Value (KV) caching, where the model stores intermediate attention states in GPU memory (VRAM) so it does not have to recompute them.

Think of a coding assistant with a fixed system instruction like “You are an expert Python code reviewer.” This instruction appears in every request. Once the model has processed it, the attention keys and values computed for its tokens are stored. For future requests, the model can reuse these stored KV states and only compute attention for the new user input, such as the actual code snippet.
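To get a feel for how much GPU memory those stored keys and values occupy, a rough back-of-the-envelope estimate can help. The sketch below uses the standard sizing rule (one key and one value vector per token, per layer, per KV head); the model dimensions are hypothetical and chosen only for illustration.

```python
def kv_cache_bytes(n_tokens: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Estimate the KV cache size in bytes for n_tokens of context.

    The leading factor of 2 accounts for storing both a key and a value
    vector per token, per layer, per KV head. bytes_per_elem=2 assumes
    16-bit (fp16/bf16) storage.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * n_tokens


# Hypothetical model shape (an assumption, not a specific real model).
LAYERS, KV_HEADS, HEAD_DIM = 32, 32, 128

per_token = kv_cache_bytes(1, LAYERS, KV_HEADS, HEAD_DIM)
prompt_mib = kv_cache_bytes(2000, LAYERS, KV_HEADS, HEAD_DIM) / 2**20

print(f"{per_token} bytes per token")
print(f"{prompt_mib:.0f} MiB for a 2000-token cached prefix")
```

Under these assumed dimensions, a long system prompt costs on the order of a gigabyte of VRAM to keep cached, which is why the amount of reusable prefix and the number of distinct prefixes both matter.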

Prefix caching extends this KV reuse across requests. If multiple prompts start with the exact same prefix (same text, formatting, and spacing), the model can skip recomputing that entire prefix and resume from the cached point. This is especially effective in chatbots, agents, and RAG pipelines where system prompts and long instructions rarely change. The result is lower latency and reduced compute cost, while still allowing the model to fully understand and respond to new context.
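The exact-match requirement can be illustrated with a toy comparison. The sketch below is not how any serving engine is implemented; it uses a whitespace split as a stand-in for the model's tokenizer (an assumption) and simply counts how many leading tokens two prompts share, which is the portion a prefix cache could reuse.

```python
from typing import List, Sequence


def tokenize(text: str) -> List[str]:
    # Stand-in tokenizer: real systems use the model's own tokenizer.
    return text.split(" ")


def shared_prefix_len(a: Sequence[str], b: Sequence[str]) -> int:
    """Return the number of leading tokens that a and b have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


system = "You are an expert Python code reviewer."
cached = tokenize(system + " Review: def add(a, b): return a + b")
same_prefix = tokenize(system + " Review: def sub(a, b): return a - b")
# A single stray leading space changes the very first token.
extra_space = tokenize(" " + system + " Review: def sub(a, b): return a - b")

reusable = shared_prefix_len(cached, same_prefix)
broken = shared_prefix_len(cached, extra_space)
print(reusable, broken)
```

The second comparison shows why formatting and spacing matter: one extra character at the start leaves nothing to reuse, even though the rest of the prompt is identical.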

Structuring Prompts for High Cache Efficiency

- Place system instructions, roles, and shared context at the beginning of the prompt, and move user-specific or changing content to the end.

- Avoid adding dynamic elements like timestamps, request IDs, or random formatting in the prefix, as even small changes reduce reuse.

- Ensure structured data (for example, JSON context) is serialized in a consistent order and format to prevent unnecessary cache misses.

- Regularly monitor cache hit rates and group similar requests together to maximize efficiency at scale.
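The guidelines above can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: `SYSTEM_PROMPT`, `build_prompt`, and `HitRateTracker` are hypothetical names, and the prompt layout is an assumption. It keeps stable content first, serializes JSON context deterministically, and tracks a simple hit rate.

```python
import json
from typing import Any, Dict

# Hypothetical static instruction; it never contains timestamps or request IDs.
SYSTEM_PROMPT = "You are a travel planning assistant. Answer with a day-by-day itinerary."


def build_prompt(shared_context: Dict[str, Any], user_message: str) -> str:
    """Put stable content first and the changing user message last."""
    # sort_keys plus fixed separators make the serialized context identical
    # regardless of the order in which the dict was populated.
    context_json = json.dumps(shared_context, sort_keys=True, separators=(",", ":"))
    return f"{SYSTEM_PROMPT}\n\nContext:\n{context_json}\n\nUser:\n{user_message}"


class HitRateTracker:
    """Counts cache hits and misses reported by the caller."""

    def __init__(self) -> None:
        self.hits = 0
        self.total = 0

    def record(self, hit: bool) -> None:
        self.total += 1
        if hit:
            self.hits += 1

    @property
    def rate(self) -> float:
        return self.hits / self.total if self.total else 0.0


# Same context built in a different key order still yields the same prefix.
p1 = build_prompt({"city": "Paris", "days": 5}, "Focus on museums and food.")
p2 = build_prompt({"days": 5, "city": "Paris"}, "Focus on parks and cafes.")
prefix1 = p1.split("\n\nUser:\n")[0]
prefix2 = p2.split("\n\nUser:\n")[0]

tracker = HitRateTracker()
for hit in (True, True, True, False):
    tracker.record(hit)
print(prefix1 == prefix2, tracker.rate)
```

How a hit is detected depends on the provider or serving stack, so the tracker only records what the caller reports.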

Conclusion

In this scenario, the goal is to reduce repeated computation while preserving response quality. An effective approach is to analyze incoming requests to identify shared structure, intent, or common prefixes, and then restructure prompts so that reusable context stays consistent across calls. This allows the system to avoid reprocessing the same information repeatedly, leading to lower latency and reduced API costs without changing the final output.
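As a first step in that analysis, one rough way to quantify redundancy is to measure how much of a batch of logged prompts is covered by a prefix they all share. The sketch below is a character-level approximation using the standard library's `os.path.commonprefix`; the function name `reusable_fraction` and the sample prompts are illustrative assumptions.

```python
import os
from typing import List


def reusable_fraction(prompts: List[str]) -> float:
    """Fraction of all prompt characters covered by the prefix shared by every prompt."""
    if not prompts:
        return 0.0
    # commonprefix compares character by character across all strings.
    prefix = os.path.commonprefix(prompts)
    total = sum(len(p) for p in prompts)
    return len(prefix) * len(prompts) / total if total else 0.0


logged = [
    "SYS: be brief.\nQ: a",
    "SYS: be brief.\nQ: b",
]
fraction = reusable_fraction(logged)
print(f"{fraction:.1%} of prompt characters sit in a shared prefix")
```

A low fraction on real traffic suggests that dynamic content appears too early in the prompt; a high one suggests prefix reuse is likely to pay off.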

For applications with long and repetitive prompts, prefix-based reuse can deliver significant savings, but it also introduces practical constraints: KV caches consume GPU memory, which is finite. As usage scales, cache eviction strategies or memory tiering become essential to balance performance gains with resource limits.
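One common eviction strategy is least-recently-used (LRU). The sketch below is a toy bookkeeping model, not a real KV cache: `PrefixLRU` is a hypothetical class that tracks only token counts per prefix and evicts the least recently used entries once an assumed token budget is exceeded.

```python
from collections import OrderedDict
from typing import Optional


class PrefixLRU:
    """Toy LRU cache keyed by prefix text, bounded by a total token budget."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.used = 0
        # Maps prefix -> token count; insertion order tracks recency.
        self._entries: "OrderedDict[str, int]" = OrderedDict()

    def get(self, prefix: str) -> Optional[int]:
        if prefix not in self._entries:
            return None
        self._entries.move_to_end(prefix)  # mark as most recently used
        return self._entries[prefix]

    def put(self, prefix: str, n_tokens: int) -> None:
        if n_tokens > self.max_tokens:
            return  # too large to ever fit, so it is not cached
        if prefix in self._entries:
            self.used -= self._entries.pop(prefix)
        # Evict least recently used entries until the new one fits.
        while self.used + n_tokens > self.max_tokens:
            _, freed = self._entries.popitem(last=False)
            self.used -= freed
        self._entries[prefix] = n_tokens
        self.used += n_tokens


cache = PrefixLRU(max_tokens=100)
cache.put("system-prompt-a", 60)
cache.put("system-prompt-b", 30)
cache.get("system-prompt-a")       # touch A so that B becomes the oldest entry
cache.put("system-prompt-c", 40)   # does not fit until B is evicted
print(cache.get("system-prompt-b"), cache.used)
```

Memory tiering would follow the same pattern, except that evicted entries move to a slower store such as CPU memory instead of being dropped.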