Recent advances in the development of LLMs have popularized their use for various NLP tasks that were previously tackled with older machine learning methods. Large language models can solve a variety of language problems such as classification, summarization, information retrieval, content creation, question answering, and maintaining a conversation, all with a single model. But how do we know they’re doing a good job on all these different tasks?

The rise of LLMs has brought to light an unresolved problem: we don’t have a reliable standard for evaluating them. What makes evaluation harder is that they are used for highly diverse tasks and we lack a clear definition of what counts as a good answer for each use case.

This article discusses current approaches to evaluating LLMs and introduces a new LLM leaderboard that leverages human evaluation and improves upon existing evaluation techniques.

The first and most common form of evaluation is to run the model on several curated datasets and examine its performance. HuggingFace created an Open LLM Leaderboard where open-access large models are evaluated using four well-known datasets (AI2 Reasoning Challenge, HellaSwag, MMLU, TruthfulQA). This corresponds to automatic evaluation and checks the model’s ability to get the facts right for some specific questions.

This is an example of a question from the MMLU dataset.

Subject: college_medicine

Question: An expected side effect of creatine supplementation is.

- A) muscle weakness

- B) gain in body mass

- C) muscle cramps

- D) loss of electrolytes

Answer: (B)

Scoring the model on this type of question is an important metric and serves well for fact-checking, but it does not test the generative ability of the model. This is probably the biggest disadvantage of this evaluation method, because generating free text is one of the most important features of LLMs.
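To make the idea of automatic scoring concrete, here is a minimal sketch of how accuracy on multiple-choice questions like the one above could be computed. The `MultipleChoiceItem` class and the `accuracy` function are hypothetical names chosen for illustration, and the sketch assumes the model's output has already been reduced to a single letter; it is not the code used by the Open LLM Leaderboard.

```python
from dataclasses import dataclass


@dataclass
class MultipleChoiceItem:
    """One benchmark question (hypothetical structure for illustration)."""
    subject: str
    question: str
    choices: dict[str, str]  # maps a letter such as "A" to the answer text
    answer: str              # the letter of the correct choice


def accuracy(items: list[MultipleChoiceItem], predictions: list[str]) -> float:
    """Return the fraction of items whose predicted letter matches the key."""
    if len(items) != len(predictions):
        raise ValueError("items and predictions must have the same length")
    if not items:
        return 0.0
    correct = sum(
        1
        for item, predicted in zip(items, predictions)
        if predicted.strip().upper() == item.answer
    )
    return correct / len(items)


creatine = MultipleChoiceItem(
    subject="college_medicine",
    question="An expected side effect of creatine supplementation is.",
    choices={
        "A": "muscle weakness",
        "B": "gain in body mass",
        "C": "muscle cramps",
        "D": "loss of electrolytes",
    },
    answer="B",
)
```

Because the score only compares letters, it says nothing about how well the model would explain or elaborate on its answer, which is the limitation discussed above.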

There seems to be a consensus within the community that to evaluate a model properly we need human evaluation. This is typically done by comparing the responses from different models.

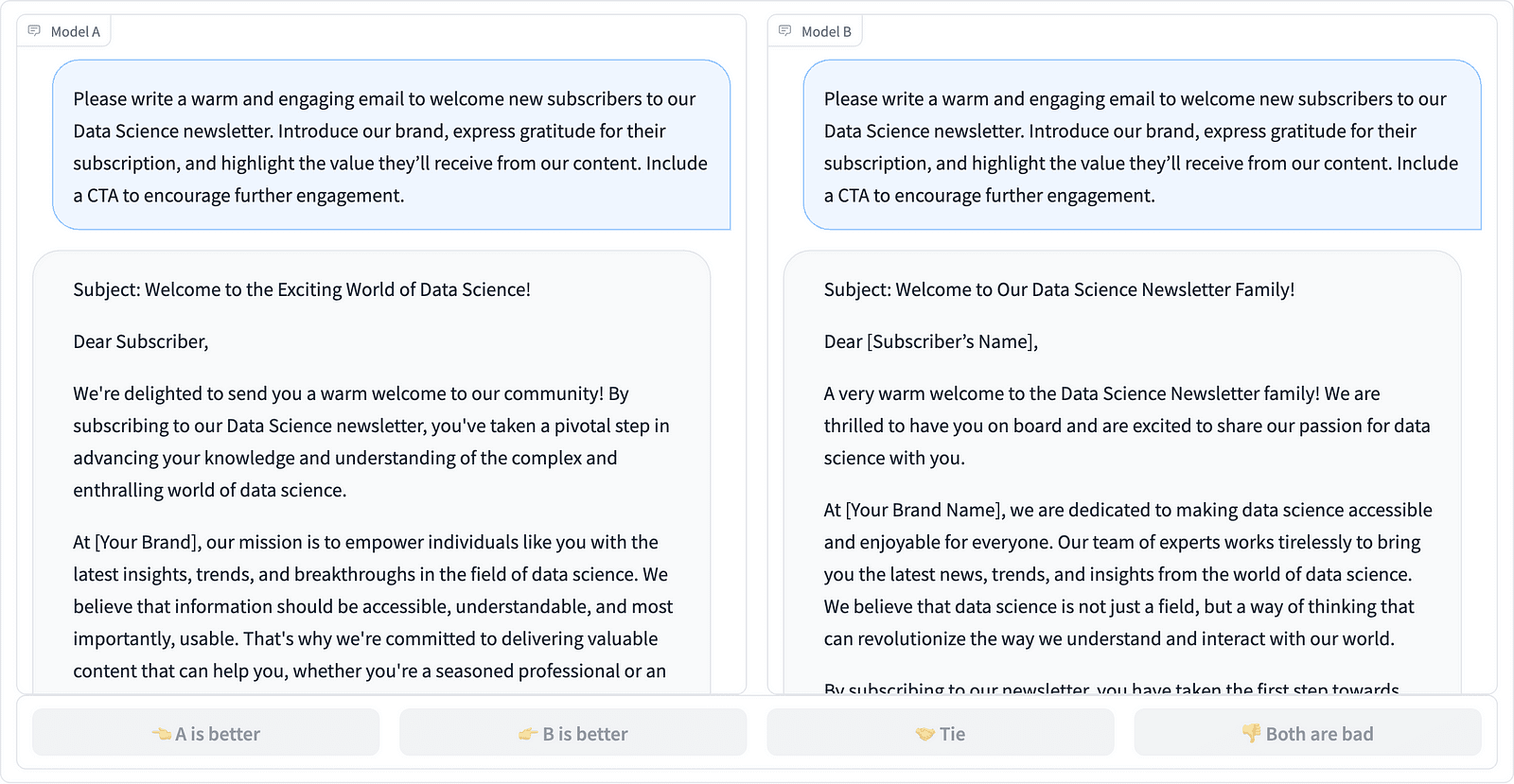

Comparing two prompt completions in the LMSYS project – screenshot by the Author

Annotators decide which response is better, as seen in the example above, and sometimes quantify the difference in quality between the prompt completions. LMSYS Org has created a leaderboard that uses this type of human evaluation and compares 17 different models, reporting the Elo rating for each model.
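For readers unfamiliar with Elo ratings, the sketch below shows the standard Elo update applied to a single pairwise comparison. The K-factor of 32 and the starting rating of 1000 are assumptions made for illustration, and the function names are hypothetical; the exact settings and aggregation used by the LMSYS leaderboard may differ.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update_elo(
    rating_a: float, rating_b: float, score_a: float, k: float = 32.0
) -> tuple[float, float]:
    """Return updated ratings after one comparison.

    score_a is 1.0 if A's response was preferred, 0.0 if B's was,
    and 0.5 for a tie. k (assumed value) controls the step size.
    """
    exp_a = expected_score(rating_a, rating_b)
    exp_b = 1.0 - exp_a
    new_a = rating_a + k * (score_a - exp_a)
    new_b = rating_b + k * ((1.0 - score_a) - exp_b)
    return new_a, new_b


# Two models start at the same (assumed) rating; annotators prefer model A.
model_a, model_b = update_elo(1000.0, 1000.0, score_a=1.0)
```

A win against an equally rated opponent moves each rating by half the K-factor, while a win against a much weaker opponent moves it only slightly.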

Because human evaluation can be hard to scale, there have been efforts to speed up the evaluation process, which resulted in an interesting project called AlpacaEval. Here each model is compared to a baseline (OpenAI’s text-davinci-003) and human evaluation is replaced with GPT-4 judgment. This is indeed fast and scalable, but can we trust the model to perform the scoring? We need to be aware of model biases. The project has actually shown that GPT-4 may favor longer answers.
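One simple way to look for this kind of length bias in a set of judgments is to measure how often the longer completion wins. The sketch below is only an illustration under stated assumptions: the function name and input format are hypothetical, length is measured in characters, and this is not the analysis performed by the AlpacaEval project.

```python
from typing import Iterable, Optional


def longer_answer_win_rate(
    comparisons: Iterable[tuple[str, str, str]]
) -> Optional[float]:
    """Fraction of comparisons in which the longer completion was preferred.

    Each comparison is (completion_a, completion_b, winner), where winner
    is "a" or "b". Pairs of equal length are skipped. Returns None when
    no pair differs in length.
    """
    longer_wins = 0
    counted = 0
    for completion_a, completion_b, winner in comparisons:
        if len(completion_a) == len(completion_b):
            continue
        counted += 1
        longer = "a" if len(completion_a) > len(completion_b) else "b"
        if winner == longer:
            longer_wins += 1
    return longer_wins / counted if counted else None
```

A value well above 0.5 would suggest that the judge tends to prefer longer answers, although longer answers can also be genuinely better, so the number alone does not prove bias.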

LLM evaluation methods are continuing to evolve as the AI community searches for easy, fair, and scalable approaches. The latest development comes from the team at Toloka, with a new leaderboard to further advance current evaluation standards.

The new leaderboard compares model responses to real-world user prompts that are categorized by useful NLP tasks as outlined in the InstructGPT paper. It also shows each model’s overall win rate across all categories.

Toloka leaderboard – screenshot by the Author

The evaluation used for this project is similar to the one performed in AlpacaEval. The scores on the leaderboard represent the win rate of the respective model in comparison to the Guanaco 13B model, which serves here as the baseline. The choice of Guanaco 13B is an improvement on the AlpacaEval method, which uses the soon-to-be outdated text-davinci-003 model as the baseline.
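As a rough illustration of what a win rate against a baseline means, the sketch below computes it per category and overall from a list of pairwise judgments. The function name, the input format, and the category names in the example are hypothetical, and pooling all judgments for the overall score is an assumption; the leaderboard's actual aggregation and handling of ties may differ.

```python
from collections import defaultdict
from typing import Iterable


def win_rates(
    judgments: Iterable[tuple[str, str]]
) -> tuple[dict[str, float], float]:
    """Compute per-category and overall win rates against a baseline.

    Each judgment is (category, winner), where winner is "model" when the
    evaluated model's completion was preferred and "baseline" otherwise.
    """
    wins: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for category, winner in judgments:
        totals[category] += 1
        if winner == "model":
            wins[category] += 1
    per_category = {c: wins[c] / totals[c] for c in totals}
    total = sum(totals.values())
    # Assumption: the overall rate pools every judgment across categories.
    overall = sum(wins.values()) / total if total else 0.0
    return per_category, overall


# Hypothetical judgments for one model compared with the baseline.
example = [
    ("brainstorming", "model"),
    ("brainstorming", "baseline"),
    ("open_qa", "model"),
    ("open_qa", "model"),
]
per_category, overall = win_rates(example)
```

A win rate of 0.5 means the model is preferred as often as the baseline, so values above 0.5 indicate that annotators favor the model over the baseline.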

The actual evaluation is done by human expert annotators on a set of real-world prompts. For each prompt, annotators are given two completions and asked which one they prefer. You can find details about the methodology here.

This type of human evaluation is more useful than any automatic evaluation method and should improve on the human evaluation used for the LMSYS leaderboard. The downside of the LMSYS method is that anybody with the link can take part in the evaluation, raising serious questions about the quality of data gathered in this manner. A closed crowd of expert annotators has better potential for reliable results, and Toloka applies additional quality control techniques to ensure data quality.

In this article, we have introduced a promising new solution for evaluating LLMs: the Toloka Leaderboard. The approach is innovative, combines the strengths of existing methods, adds task-specific granularity, and uses reliable human annotation techniques to compare the models.

Explore the board, and share your opinions and suggestions for improvements with us.

Magdalena Konkiewicz is a Data Evangelist at Toloka, a global company supporting fast and scalable AI development. She holds a Master’s degree in Artificial Intelligence from Edinburgh University and has worked as an NLP Engineer, Developer, and Data Scientist for businesses in Europe and America. She has also been involved in teaching and mentoring Data Scientists and regularly contributes to Data Science and Machine Learning publications.