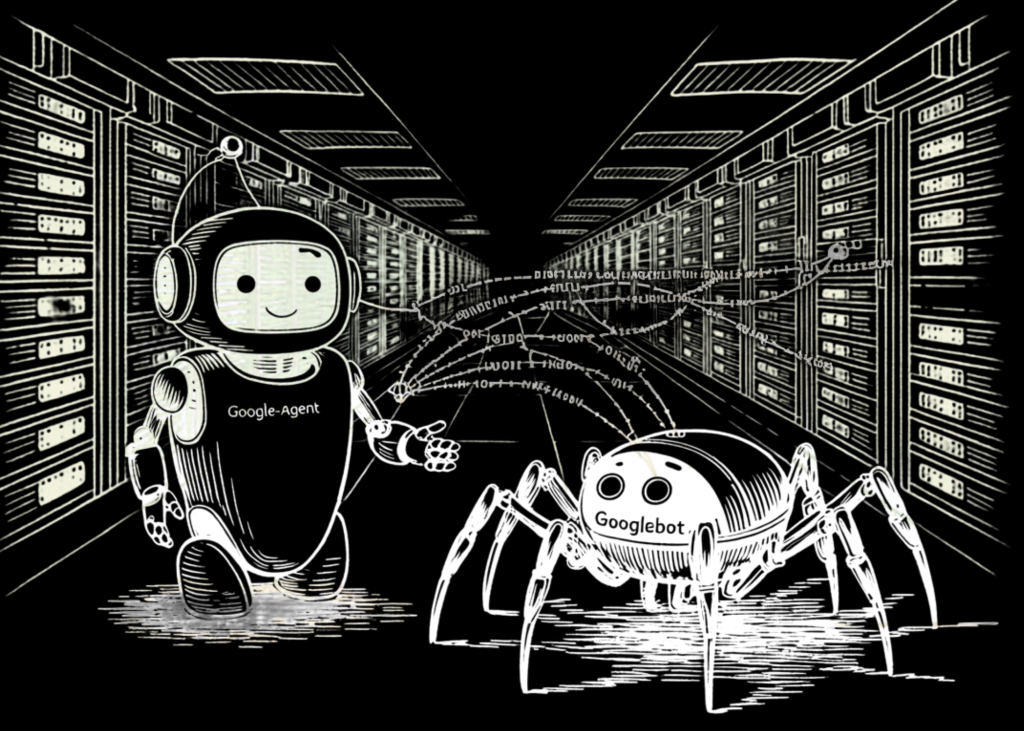

As Google integrates AI capabilities throughout its product suite, a new technical entity has surfaced in server logs: Google-Agent. For software developers, understanding this entity is crucial for distinguishing between automated indexers and real-time, user-initiated requests.

Unlike the autonomous crawlers that have defined the web for decades, Google-Agent operates under a different set of rules and protocols.

The Core Distinction: Fetchers vs. Crawlers

The fundamental technical difference between Google’s legacy bots and Google-Agent lies in the trigger mechanism.

- Autonomous Crawlers (e.g., Googlebot): These discover and index pages on a schedule determined by Google’s algorithms to maintain the Search index.

- User-Triggered Fetchers (e.g., Google-Agent): These tools act only when a user performs a specific action. According to Google’s developer documentation, Google-Agent is used by Google AI products to fetch content from the web in response to a direct user prompt.

Because these fetchers are reactive rather than proactive, they don’t ‘crawl’ the web by following links to discover new content. Instead, they act as a proxy for the user, retrieving specific URLs as requested.

The Robots.txt Exception

One of the most important technical nuances of Google-Agent is its relationship with robots.txt. While autonomous crawlers like Googlebot strictly adhere to robots.txt directives to determine which parts of a site to index, user-triggered fetchers generally operate under a different protocol.

Google’s documentation explicitly states that user-triggered fetchers ignore robots.txt.

The logic behind this bypass is rooted in the ‘proxy’ nature of the agent. Because the fetch is initiated by a human user asking to interact with a specific piece of content, the fetcher behaves more like a standard web browser than a search crawler. If a site owner blocks Google-Agent via robots.txt, the instruction will generally be ignored because the request is treated as a manual action on behalf of the user rather than an automated mass-collection effort.
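To make the difference concrete, here is a minimal sketch using Python’s standard `urllib.robotparser`. It shows what a robots.txt-compliant client would decide for a rule aimed at Google-Agent. The rule set, the `example.com` URLs, and the helper name `compliant_client_may_fetch` are made up for illustration. The check runs on the client side, so a fetcher that does not consult robots.txt is never stopped by it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that tries to keep Google-Agent out of /private/.
ROBOTS_TXT = """\
User-agent: Google-Agent
Disallow: /private/

User-agent: *
Allow: /
"""


def compliant_client_may_fetch(user_agent: str, url: str) -> bool:
    """Return the decision a robots.txt-honoring client would reach."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.com/private/report.html"
    # A compliant client identifying as Google-Agent would back off here...
    print(compliant_client_may_fetch("Google-Agent", url))   # False
    # ...while a client matched only by the wildcard group may proceed.
    print(compliant_client_may_fetch("SomeOtherBot", url))   # True
```

The `False` result is only advice to the client. Nothing in this check runs on your server, which is why a disallow rule cannot by itself keep a user-triggered fetch away from a URL.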

Identification and User-Agent Strings

Developers must be able to accurately identify this traffic to prevent it from being flagged as malicious or unauthorized scraping. Google-Agent identifies itself through specific User-Agent strings.

The primary string for this fetcher is:

Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 (compatible; Google-Agent)

In some cases, the simplified token Google-Agent is used.
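A log parser could separate this traffic from crawler traffic with a simple token match. The sketch below is one possible approach, not an official method: the function name `classify_user_agent` and the three category labels are hypothetical, and Googlebot appears only as an example of an autonomous crawler token. A User-Agent header can be spoofed, so the result is a claimed identity, not a verified one.

```python
import re

# Matches the Google-Agent token, whether it appears inside the full
# "(compatible; Google-Agent)" form or as a bare string. The lookarounds
# reject longer tokens such as "NotGoogle-Agent" or "Google-AgentX".
GOOGLE_AGENT_RE = re.compile(r"(?<![\w-])Google-Agent(?![\w-])")


def classify_user_agent(user_agent: str) -> str:
    """Label a request by the identity its User-Agent header claims."""
    if GOOGLE_AGENT_RE.search(user_agent):
        return "user-triggered-fetcher"
    if "Googlebot" in user_agent:
        return "autonomous-crawler"
    return "other"


if __name__ == "__main__":
    full = (
        "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) "
        "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile "
        "Safari/537.36 (compatible; Google-Agent)"
    )
    print(classify_user_agent(full))
    print(classify_user_agent("Google-Agent"))
```

Both calls in the usage example return the fetcher label, covering the full string and the simplified token.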

For security and monitoring, it is important to note that because these requests are user-triggered, they may not originate from the same predictable IP blocks as Google’s primary search crawlers. Google recommends using its published JSON IP ranges to verify that requests appearing under this User-Agent are legitimate.
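The verification step could look roughly like the following sketch, which checks a client IP against a list of CIDR prefixes with Python’s standard `ipaddress` module. It assumes the published file has a top-level `prefixes` list whose items carry an `ipv4Prefix` or `ipv6Prefix` key; confirm that shape against the actual file before relying on it. The sample prefixes are reserved documentation ranges, not real Google ranges, and the function names are hypothetical.

```python
import ipaddress
import json

# Stand-in for a downloaded IP range file. The structure is an assumption,
# and the prefixes are reserved documentation ranges, NOT Google's.
SAMPLE_RANGES_JSON = """
{
  "creationTime": "2024-01-01T00:00:00.000000",
  "prefixes": [
    {"ipv4Prefix": "192.0.2.0/24"},
    {"ipv6Prefix": "2001:db8::/32"}
  ]
}
"""


def load_networks(raw_json: str) -> list:
    """Parse the range file into a list of ip_network objects."""
    doc = json.loads(raw_json)
    networks = []
    for item in doc.get("prefixes", []):
        prefix = item.get("ipv4Prefix") or item.get("ipv6Prefix")
        if prefix:
            networks.append(ipaddress.ip_network(prefix))
    return networks


def ip_in_ranges(ip: str, networks: list) -> bool:
    """Return True if the address falls inside any listed network."""
    address = ipaddress.ip_address(ip)
    return any(
        address.version == net.version and address in net
        for net in networks
    )


if __name__ == "__main__":
    nets = load_networks(SAMPLE_RANGES_JSON)
    print(ip_in_ranges("192.0.2.17", nets))    # True: inside 192.0.2.0/24
    print(ip_in_ranges("198.51.100.1", nets))  # False: outside every prefix
```

In practice the range file would be fetched periodically and cached, and the check would only run for requests whose User-Agent claims to be Google-Agent.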

Why the Distinction Matters for Developers

For software engineers managing web infrastructure, the rise of Google-Agent shifts the focus from SEO-centric ‘crawl budgets’ to real-time request management.

- Observability: Modern log parsing should treat Google-Agent as a legitimate user-driven request. If your WAF (Web Application Firewall) or rate-limiting software treats all ‘bots’ the same, you may inadvertently block users from using Google’s AI tools to interact with your site.

- Privacy and Access: Since robots.txt doesn’t govern Google-Agent, developers can’t rely on it to hide sensitive or personal data from AI fetchers. Access control for these fetchers must be handled via standard authentication or server-side permissions, just as it would be for a human visitor.

- Infrastructure Load: Because these requests are ‘bursty’ and tied to human usage, the traffic volume of Google-Agent will scale with the popularity of your content among AI users, rather than the frequency of Google’s indexing cycles.
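Because this traffic is bursty, a limiter that tolerates short spikes may suit it better than a flat per-bot block. The token bucket below is a generic sketch of that idea, not something Google prescribes. The class name, capacity, and refill rate are arbitrary illustrative choices, and the clock is injectable so the behavior can be checked deterministically.

```python
import time
from typing import Optional


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity: float, refill_per_second: float,
                 now: Optional[float] = None) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = capacity  # start full so an initial burst is allowed
        self.updated_at = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        """Spend one token if available; return whether the request passes."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated_at)
        # Refill for the time that passed, never exceeding capacity.
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.refill_per_second)
        self.updated_at = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(capacity=3, refill_per_second=1.0, now=0.0)
    # Three requests in the same instant pass; the fourth is rejected.
    print([bucket.allow(now=0.0) for _ in range(4)])
    # One second later a single token has been refilled.
    print(bucket.allow(now=1.0))
```

One way to apply this is to keep a separate bucket per traffic class, so that user-triggered fetches are not throttled under the same budget as autonomous crawlers.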

Conclusion

Google-Agent represents a shift in how Google interacts with the web. By moving from autonomous crawling to user-triggered fetching, Google is creating a more direct link between the user’s intent and live web content. The takeaway is clear: the protocols of the past, robots.txt in particular, are no longer the primary tool for managing AI interactions. Accurate identification via User-Agent strings and a clear understanding of the ‘user-triggered’ designation are the new requirements for maintaining a modern web presence.
