In the world of Large Language Models (LLMs), speed is the only feature that matters once accuracy is solved. For a human, waiting 1 second for a search result is fine. For an AI agent performing 10 sequential searches to solve a complex task, a 1-second delay per search creates a 10-second lag. This latency kills the user experience.

Exa, the search engine startup formerly known as Metaphor, just released Exa Instant. It’s a search model designed to provide the world’s web data to AI agents in under 200ms. For software engineers and data scientists building Retrieval-Augmented Generation (RAG) pipelines, this removes the biggest bottleneck in agentic workflows.

Why Latency is the Enemy of RAG

When you build a RAG application, your system follows a loop: the user asks a question, your system searches the web for context, and the LLM processes that context. If the search step takes 700ms to 1000ms, the overall ‘time to first token’ becomes sluggish.

Exa Instant delivers results with a latency between 100ms and 200ms. In tests conducted from the us-west-1 (Northern California) region, the network latency was roughly 50ms. This speed allows agents to perform multiple searches in a single ‘thought’ process without the user feeling a delay.
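To make the arithmetic concrete, here is a minimal sketch of how per-search latency adds up when an agent runs its searches one after another. The function name is hypothetical, and the figures are the ones quoted above: 10 searches at 1 second each versus 10 searches at the 200ms upper bound.

```python
def sequential_search_latency_ms(num_searches: int, per_search_ms: float) -> float:
    """Total wait when each search must finish before the next one starts."""
    return num_searches * per_search_ms


# 10 sequential searches at 1 second each (the slow case from the introduction)
slow = sequential_search_latency_ms(10, 1000)

# 10 sequential searches at 200ms each (the upper bound quoted for Exa Instant)
fast = sequential_search_latency_ms(10, 200)

print(f"slow: {slow / 1000:.1f}s, fast: {fast / 1000:.1f}s")
# prints "slow: 10.0s, fast: 2.0s"
```

This counts search time only; LLM inference time between searches would be added on top in a real agent loop.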

No More ‘Wrapping’ Google

Most search APIs available today are ‘wrappers.’ They send a query to a traditional search engine like Google or Bing, scrape the results, and send them back to you. This adds layers of overhead.

Exa Instant is different. It’s built on a proprietary, end-to-end neural search and retrieval stack. Instead of matching keywords, Exa uses embeddings and transformers to understand the meaning of a query. This neural approach aims to return results that are relevant to the AI’s intent, not just the specific words used. By owning the entire stack from the crawler to the inference engine, Exa can optimize for speed in ways that ‘wrapper’ APIs cannot.
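The general idea of embedding-based retrieval can be illustrated with a toy example. This is not Exa’s model or API: the three-dimensional vectors below are hand-assigned for illustration, whereas a real system would get them from a trained transformer encoder. The sketch only shows how ranking by cosine similarity can surface a document that shares no keywords with the query.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Hypothetical embeddings, hand-assigned for illustration only.
docs = {
    "How to speed up a slow laptop": [0.9, 0.1, 0.0],
    "Laptop bag buying guide": [0.1, 0.9, 0.1],
}

# Hypothetical embedding for the query "my computer is sluggish".
# The query shares no keywords with the first document title.
query_vec = [0.8, 0.2, 0.1]

# Rank documents by similarity to the query, most similar first.
ranked = sorted(docs, key=lambda title: cosine(query_vec, docs[title]), reverse=True)
print(ranked[0])  # prints "How to speed up a slow laptop"
```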

Benchmarking the Speed

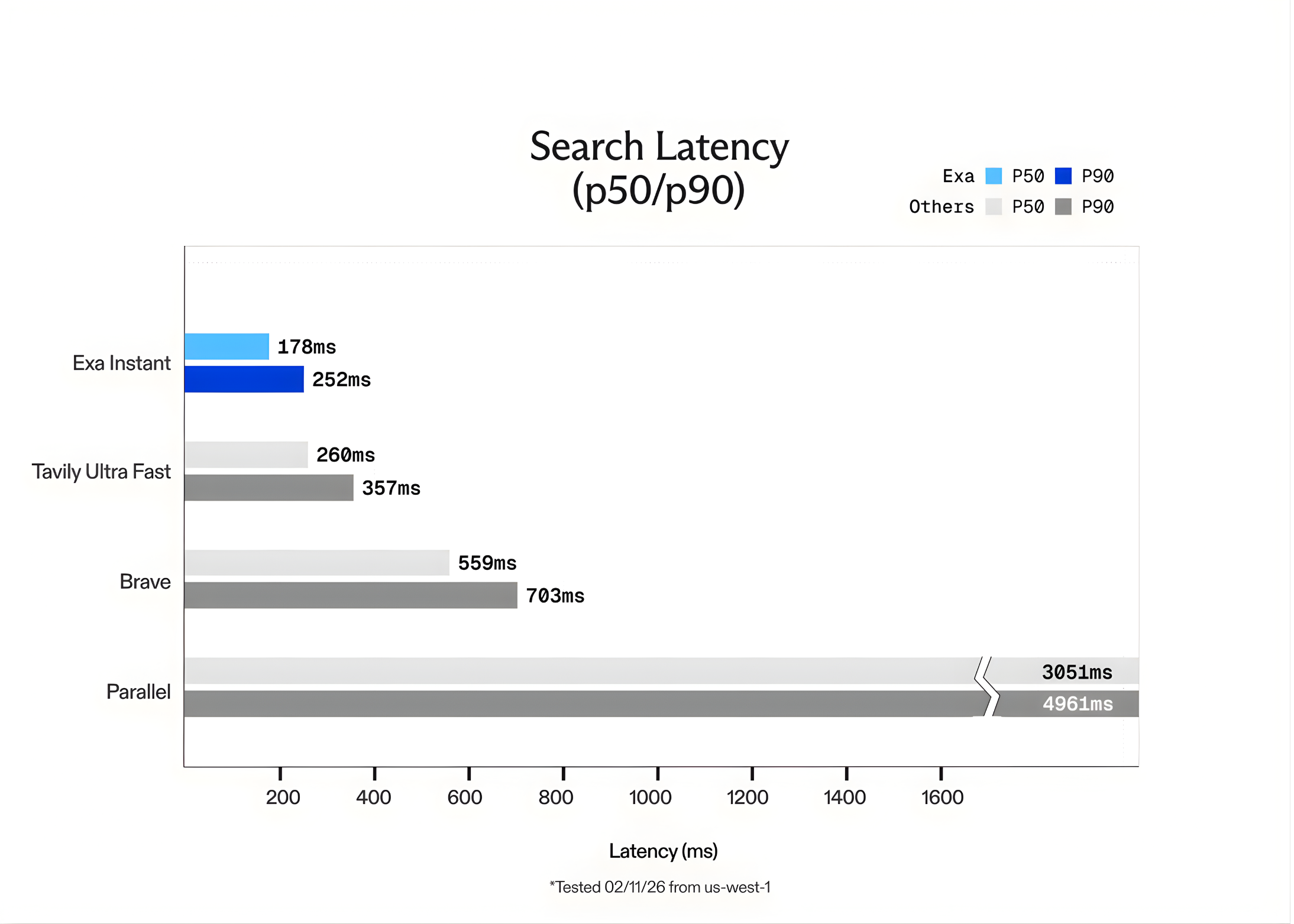

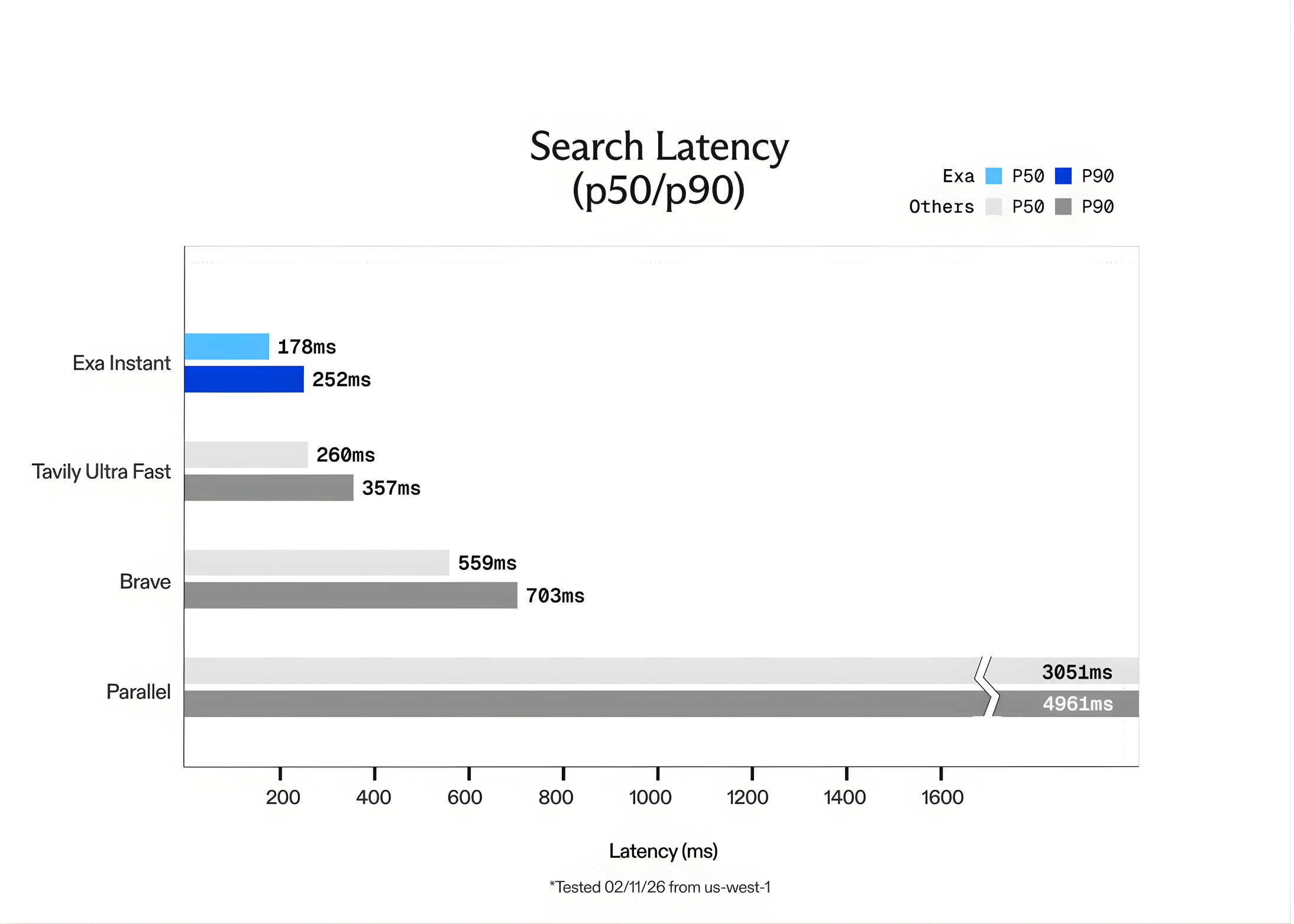

The Exa team benchmarked Exa Instant against other popular options like Tavily Ultra Fast and Brave. To ensure the tests were fair and avoided ‘cached’ results, the team used the SealQA query dataset. They also added random words generated by GPT-5 to each query to force the engine to perform a fresh search each time.

The results showed that Exa Instant is up to 15x faster than competitors. While Exa offers other models like Exa Fast and Exa Auto for higher-quality reasoning, Exa Instant is the clear choice for real-time applications where every millisecond counts.
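The cache-busting approach described above can be sketched roughly as follows. This is not Exa’s benchmark harness: `fake_search` is a hypothetical placeholder for a real search client, the queries are made up, and the fixed word list stands in for the GPT-5 generated words.

```python
import random
import statistics
import time

# Stand-in for the randomly generated words; an assumption for this sketch.
FILLER_WORDS = ["amber", "quartz", "lantern", "orbit", "velvet"]


def bust_cache(query: str, rng: random.Random) -> str:
    """Append a random word so a repeated query is less likely to hit a cache."""
    return f"{query} {rng.choice(FILLER_WORDS)}"


def fake_search(query: str) -> list[str]:
    """Hypothetical placeholder for a real search API call."""
    time.sleep(0.01)  # simulate network and engine time
    return [f"result for {query}"]


def measure_latencies_ms(search_fn, queries, seed=0):
    """Time each cache-busted query and return latencies in milliseconds."""
    rng = random.Random(seed)
    latencies = []
    for query in queries:
        start = time.perf_counter()
        search_fn(bust_cache(query, rng))
        latencies.append((time.perf_counter() - start) * 1000)
    return latencies


latencies = measure_latencies_ms(fake_search, ["what is RAG", "latest GPU prices"])
print(f"median latency: {statistics.median(latencies):.1f} ms")
```

To compare providers, the same `measure_latencies_ms` call would be repeated with each provider’s client function in place of `fake_search`, using the same query set.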

Pricing and Developer Integration

The transition to Exa Instant is simple. The API is accessible through the dashboard.exa.ai platform.

- Cost: Exa Instant is priced at $5 per 1,000 requests.

- Capacity: It searches the same massive index of the web as Exa’s more powerful models.

- Accuracy: While designed for speed, it maintains high relevance. For specialized entity searches, Exa’s Websets product remains the gold standard, proving to be 20x more correct than Google for complex queries.

The API returns clean content ready for LLMs, removing the need for developers to write custom scraping or HTML cleaning code.
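For budgeting, the quoted price works out to half a cent per request. A minimal sketch of the calculation follows; the workload of 1,000 agent tasks with 10 searches each is a hypothetical example, not a figure from Exa.

```python
PRICE_PER_1000_REQUESTS_USD = 5.0  # price quoted above


def search_cost_usd(num_requests: int) -> float:
    """Cost in USD for a given number of search requests."""
    return num_requests * PRICE_PER_1000_REQUESTS_USD / 1000


# Hypothetical workload: 1,000 agent tasks, 10 searches per task.
cost = search_cost_usd(1000 * 10)
print(f"${cost:.2f}")  # prints "$50.00"
```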

Key Takeaways

- Sub-200ms Latency for Real-Time Agents: Exa Instant is optimized for ‘agentic’ workflows where speed is a bottleneck. By delivering results in under 200ms (and network latency as low as 50ms), it allows AI agents to perform multi-step reasoning and parallel searches without the lag associated with traditional search engines.

- Proprietary Neural Stack vs. ‘Wrappers’: Unlike many search APIs that simply ‘wrap’ Google or Bing (adding 700ms+ of overhead), Exa Instant is built on a proprietary, end-to-end neural search engine. It uses a custom transformer-based architecture to index and retrieve web data, offering up to 15x faster performance than existing alternatives like Tavily or Brave.

- Cost-Efficient Scaling: The model is designed to make search a ‘primitive’ rather than an expensive luxury. It’s priced at $5 per 1,000 requests, allowing developers to integrate real-time web lookups at every step of an agent’s thought process without breaking the budget.

- Semantic Intent over Keywords: Exa Instant leverages embeddings to prioritize the ‘meaning’ of a query rather than exact word matches. This is particularly effective for RAG applications, where finding ‘link-worthy’ content that matches an LLM’s context is more valuable than simple keyword hits.

- Optimized for LLM Consumption: The API provides more than just URLs; it offers clean, parsed HTML, Markdown, and token-efficient highlights. This reduces the need for custom scraping scripts and minimizes the number of tokens the LLM needs to process, further speeding up the entire pipeline.
