NVIDIA has released VIBETENSOR, an open-source research system software stack for deep learning. VIBETENSOR is generated by LLM-powered coding agents under high-level human guidance.

The system asks a concrete question: can coding agents generate a coherent deep learning runtime that spans Python and JavaScript APIs down to C++ runtime components and CUDA memory management, and validate it only through tools?

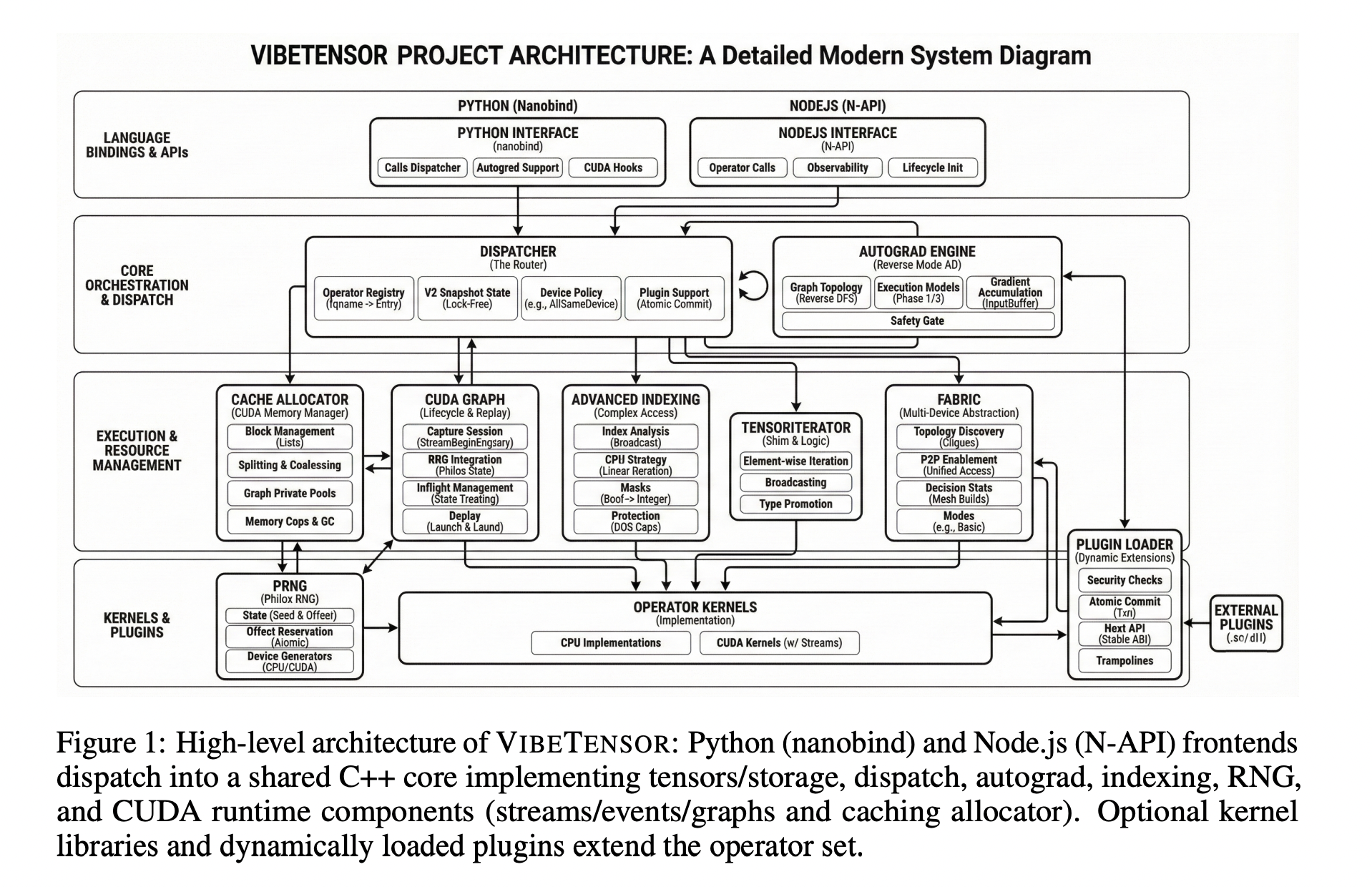

Architecture from frontends to CUDA runtime

VIBETENSOR implements a PyTorch-style eager tensor library with a C++20 core for CPU and CUDA, a torch-like Python overlay via nanobind, and an experimental Node.js / TypeScript interface. It targets Linux x86_64 and NVIDIA GPUs via CUDA, and builds without CUDA are intentionally disabled.

The core stack includes its own tensor and storage system, a schema-lite dispatcher, a reverse-mode autograd engine, a CUDA subsystem with streams, events, and CUDA graphs, a stream-ordered caching allocator with diagnostics, and a stable C ABI for dynamically loaded operator plugins. Frontends in Python and Node.js share a C++ dispatcher, tensor implementation, autograd engine, and CUDA runtime.

The Python overlay exposes a vibetensor.torch namespace with tensor factories, operator dispatch, and CUDA utilities. The Node.js frontend is built on Node-API and focuses on async execution, using worker scheduling with bounds on concurrent inflight work as described in the implementation sections.
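Bounding concurrent inflight work is a general scheduling pattern. The sketch below illustrates it in Python with an asyncio semaphore. It is not VIBETENSOR's Node.js code, and `run_bounded`, `max_inflight`, and `_square` are hypothetical names chosen for this example.

```python
import asyncio


async def run_bounded(jobs, max_inflight):
    """Run zero-argument coroutine factories with at most max_inflight active."""
    sem = asyncio.Semaphore(max_inflight)
    inflight = 0
    peak = 0

    async def run(job):
        nonlocal inflight, peak
        async with sem:                      # blocks once the bound is reached
            inflight += 1
            peak = max(peak, inflight)       # track the highest concurrency seen
            try:
                return await job()
            finally:
                inflight -= 1

    results = await asyncio.gather(*(run(j) for j in jobs))
    return results, peak


async def _square(x):
    await asyncio.sleep(0.01)                # stand-in for asynchronous device work
    return x * x


results, peak = asyncio.run(
    run_bounded([lambda x=x: _square(x) for x in range(8)], max_inflight=3)
)
```

Results come back in submission order, while `peak` never exceeds the configured bound.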

At the runtime level, TensorImpl represents a view over reference-counted Storage, with sizes, strides, storage offsets, dtype, device metadata, and a shared version counter. This supports non-contiguous views and aliasing. A TensorIterator subsystem computes iteration shapes and per-operand strides for elementwise and reduction operators, and the same logic is exposed through the plugin ABI so external kernels follow the same aliasing and iteration rules.
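To make the view-over-storage idea concrete, here is a minimal Python sketch of strided indexing. The `Storage` and `View` classes are hypothetical stand-ins, not VIBETENSOR's C++ types, and they model only sizes, strides, an offset, and a version counter that is bumped on writes.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Storage:
    """Flat buffer shared by every view (hypothetical stand-in)."""
    data: List[float]
    version: int = 0          # bumped on every in-place write


@dataclass
class View:
    """Hypothetical strided view: sizes, strides, and an offset into Storage."""
    storage: Storage
    sizes: Tuple[int, ...]
    strides: Tuple[int, ...]
    offset: int = 0

    def _flat_index(self, index: Tuple[int, ...]) -> int:
        if len(index) != len(self.sizes):
            raise IndexError("wrong number of indices")
        flat = self.offset
        for i, size, stride in zip(index, self.sizes, self.strides):
            if not 0 <= i < size:
                raise IndexError("index out of range")
            flat += i * stride
        return flat

    def get(self, *index: int) -> float:
        return self.storage.data[self._flat_index(index)]

    def set(self, value: float, *index: int) -> None:
        self.storage.data[self._flat_index(index)] = value
        self.storage.version += 1

    def transpose(self) -> "View":
        # Reverses sizes and strides; no data is copied.
        return View(self.storage, self.sizes[::-1], self.strides[::-1], self.offset)


base = View(Storage([0.0, 1.0, 2.0, 3.0, 4.0, 5.0]), sizes=(2, 3), strides=(3, 1))
t = base.transpose()   # sizes (3, 2), strides (1, 3): a non-contiguous alias
t.set(42.0, 2, 1)      # the write is visible through base as well
```

Because `t` and `base` share one `Storage`, writing through the transposed view changes what `base.get(1, 2)` returns, which is the aliasing behavior the paragraph describes.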

The dispatcher is schema-lite. It maps operator names to implementations across CPU and CUDA dispatch keys and allows wrapper layers for autograd and Python overrides. Device policies enforce invariants such as “all tensor inputs on the same device,” while leaving room for specialized multi-device policies.
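A name-keyed dispatcher with a same-device check can be sketched in a few lines of Python. This is an illustration under assumptions: the `Tensor` and `Dispatcher` classes and their methods are hypothetical, and the real dispatcher is C++ with wrapper layers that are omitted here.

```python
from typing import Callable, Dict, Tuple


class Tensor:
    """Minimal stand-in carrying only a payload and a device tag."""
    def __init__(self, values, device="cpu"):
        self.values = list(values)
        self.device = device


class Dispatcher:
    """Maps (operator name, dispatch key) to a kernel; no schemas involved."""
    def __init__(self):
        self._table: Dict[Tuple[str, str], Callable] = {}

    def register(self, op: str, key: str, fn: Callable) -> None:
        self._table[(op, key)] = fn

    def call(self, op: str, *tensors: Tensor):
        # Device policy: every tensor input must live on one device.
        devices = {t.device for t in tensors}
        if len(devices) != 1:
            raise RuntimeError(
                f"{op}: all tensor inputs must be on the same device, got {sorted(devices)}"
            )
        key = devices.pop()
        try:
            fn = self._table[(op, key)]
        except KeyError:
            raise NotImplementedError(f"{op} has no kernel for key {key!r}") from None
        return fn(*tensors)


dispatcher = Dispatcher()
dispatcher.register(
    "add", "cpu",
    lambda a, b: Tensor([x + y for x, y in zip(a.values, b.values)], "cpu"),
)
out = dispatcher.call("add", Tensor([1, 2]), Tensor([3, 4]))
```

Calling `add` with one CPU tensor and one tensor tagged `"cuda"` raises `RuntimeError` before any kernel lookup, mirroring the invariant quoted above.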

Autograd, CUDA subsystem, and multi-GPU Fabric

Reverse-mode autograd uses Node and Edge graph objects and per-tensor AutogradMeta. During backward, the engine maintains dependency counts, per-input gradient buffers, and a ready queue. For CUDA tensors, it records and waits on CUDA events to synchronize cross-stream gradient flows. The system also contains an experimental multi-device autograd mode for research on cross-device execution.
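The interplay of dependency counts, gradient buffers, and a ready queue can be shown with a small CPU-only Python sketch. The `Node` class and `backward` function here are hypothetical simplifications; CUDA event synchronization and AutogradMeta are left out.

```python
from collections import deque


class Node:
    """Hypothetical backward node: maps an incoming gradient to input gradients."""
    def __init__(self, name, backward_fn, inputs=()):
        self.name = name
        self.backward_fn = backward_fn   # grad_out -> tuple of grads, one per input
        self.inputs = tuple(inputs)      # edges to producer nodes


def backward(root, seed=1.0):
    # Pass 1: count how many edges feed each node (its dependency count).
    deps = {}
    stack, seen = [root], {root}
    while stack:
        node = stack.pop()
        for parent in node.inputs:
            deps[parent] = deps.get(parent, 0) + 1
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)

    # Pass 2: accumulate gradients; a node runs only after all its consumers have.
    buffers = {root: seed}
    ready = deque([root])
    while ready:
        node = ready.popleft()
        grads = node.backward_fn(buffers[node])
        for parent, g in zip(node.inputs, grads):
            buffers[parent] = buffers.get(parent, 0.0) + g
            deps[parent] -= 1
            if deps[parent] == 0:
                ready.append(parent)
    return {n.name: g for n, g in buffers.items()}


# y = x * x + x evaluated at x = 3, so dy/dx = 2 * 3 + 1 = 7.
x = Node("x", lambda g: ())
mul = Node("mul", lambda g: (g * 3.0, g * 3.0), inputs=(x, x))
add = Node("add", lambda g: (g, g), inputs=(mul, x))
grads = backward(add)
```

The leaf `x` has three incoming edges, so it is only enqueued after `add` and both operands of `mul` have contributed to its buffer.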

The CUDA subsystem provides C++ wrappers for CUDA streams and events, a caching allocator with stream-ordered semantics, and CUDA graph capture and replay. The allocator includes diagnostics such as snapshots, statistics, memory-fraction caps, and GC ladders to make memory behavior observable in tests and debugging. CUDA graphs integrate with allocator “graph pools” to manage memory lifetime across capture and replay.
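As a rough illustration of how a caching allocator reuses freed blocks per stream and exposes statistics, consider the toy Python model below. It touches no device memory, and the class name, the 512-byte granularity, and the statistic names are assumptions made for this sketch rather than details from the project.

```python
from collections import defaultdict


class CachingAllocator:
    """Toy model: blocks are integer ids; no real device memory is touched."""
    def __init__(self, granularity=512):
        self.granularity = granularity
        self._free = defaultdict(list)   # (stream, rounded size) -> cached block ids
        self._live = {}                  # block id -> (stream, rounded size)
        self._next_id = 0
        self.stats = {"hits": 0, "misses": 0, "bytes_reserved": 0}

    def _round(self, nbytes):
        g = self.granularity
        return max(g, (nbytes + g - 1) // g * g)

    def malloc(self, nbytes, stream=0):
        key = (stream, self._round(nbytes))
        if self._free[key]:
            block = self._free[key].pop()        # reuse a cached block
            self.stats["hits"] += 1
        else:
            block = self._next_id                # simulate a fresh reservation
            self._next_id += 1
            self.stats["misses"] += 1
            self.stats["bytes_reserved"] += key[1]
        self._live[block] = key
        return block

    def free(self, block):
        # Return the block to the cache of the stream it was allocated on.
        key = self._live.pop(block)
        self._free[key].append(block)

    def snapshot(self):
        cached = {k: list(v) for k, v in self._free.items() if v}
        return {"live": dict(self._live), "cached": cached, **self.stats}


alloc = CachingAllocator()
a = alloc.malloc(1000)            # rounds up to 1024 bytes, cache miss
alloc.free(a)
b = alloc.malloc(700)             # same stream and size class, cache hit
c = alloc.malloc(700, stream=1)   # other stream, so the cache is not shared
snap = alloc.snapshot()
```

Keying the free lists by stream is the simplest way to keep reuse stream-ordered in a model like this; a real allocator would also need events to hand blocks across streams safely.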

The Fabric subsystem is an experimental multi-GPU layer. It exposes explicit peer-to-peer GPU access via CUDA P2P and unified virtual addressing when the topology supports it. Fabric focuses on single-process multi-GPU execution and provides observability primitives such as statistics and event snapshots rather than a full distributed training stack.

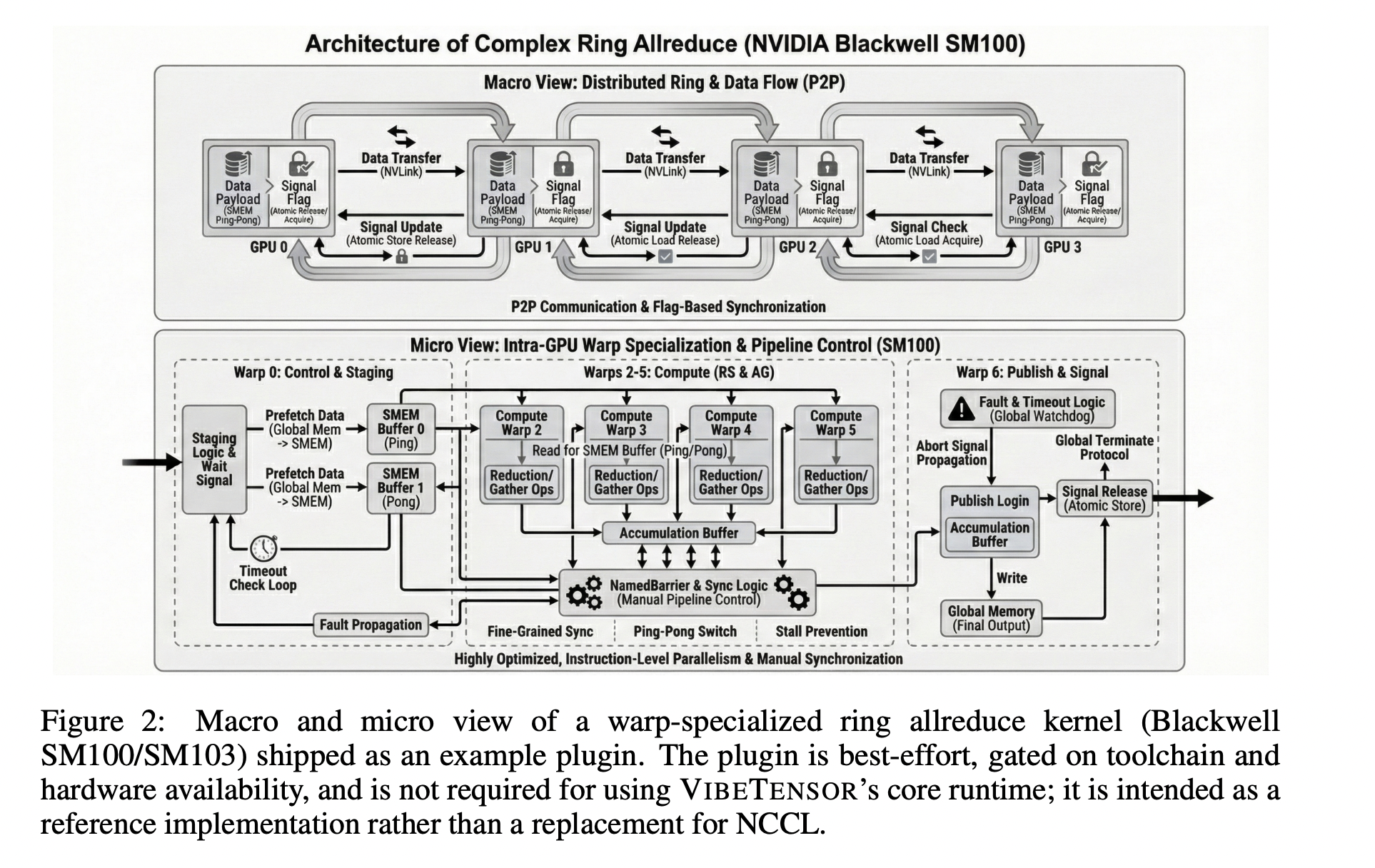

As a reference extension, VIBETENSOR ships a best-effort CUTLASS-based ring allreduce plugin for NVIDIA Blackwell-class GPUs. This plugin binds experimental ring-allreduce kernels, does not call NCCL, and is positioned as an illustrative example, not as an NCCL replacement. Multi-GPU results in the paper rely on Fabric plus this optional plugin, and they are reported only for Blackwell GPUs.
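For readers unfamiliar with ring allreduce, the following Python simulation shows the textbook two-phase algorithm (reduce-scatter, then all-gather) on plain lists. It does not reflect the plugin's CUTLASS kernels, and `ring_allreduce` is a hypothetical helper that assumes equal-length buffers divisible by the number of ranks.

```python
def ring_allreduce(buffers):
    """Simulate a sum allreduce over a ring of len(buffers) ranks."""
    n = len(buffers)
    if n == 0:
        raise ValueError("need at least one rank")
    length = len(buffers[0])
    if any(len(b) != length for b in buffers) or length % n != 0:
        raise ValueError("buffers must share a length divisible by the rank count")
    chunk = length // n
    data = [list(b) for b in buffers]

    def span(c):
        return range(c * chunk, (c + 1) * chunk)

    # Phase 1, reduce-scatter: at each step rank r sends chunk (r - step) mod n
    # to rank r + 1, which adds it into its own copy of that chunk.
    for step in range(n - 1):
        # Snapshot outgoing chunks so all sends in a step happen "at once".
        sends = [[data[r][i] for i in span((r - step) % n)] for r in range(n)]
        for r in range(n):
            dst = (r + 1) % n
            for i, v in zip(span((r - step) % n), sends[r]):
                data[dst][i] += v

    # Phase 2, all-gather: rank r now owns the fully reduced chunk (r + 1) mod n
    # and forwards completed chunks around the ring, overwriting stale copies.
    for step in range(n - 1):
        sends = [[data[r][i] for i in span((r + 1 - step) % n)] for r in range(n)]
        for r in range(n):
            dst = (r + 1) % n
            for i, v in zip(span((r + 1 - step) % n), sends[r]):
                data[dst][i] = v
    return data


reduced = ring_allreduce([[1, 2, 3, 4], [10, 20, 30, 40]])
```

Each rank sends and receives only one chunk per step, which is why the pattern keeps per-link traffic roughly constant as the number of GPUs grows.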

Interoperability and extension points

VIBETENSOR supports DLPack import and export for CPU and CUDA tensors and provides a C++20 Safetensors loader and saver for serialization. Extensibility mechanisms include Python-level overrides inspired by torch.library, a versioned C plugin ABI, and hooks for custom GPU kernels authored in Triton and CUDA template libraries such as CUTLASS. The plugin ABI exposes DLPack-based dtype and device metadata and TensorIterator helpers so external kernels integrate with the same iteration and aliasing rules as built-in operators.

AI-assisted development

VIBETENSOR was built using LLM-powered coding agents as the main code authors, guided only by high-level human specifications. Over roughly 2 months, humans defined goals and constraints, then agents proposed code diffs and executed builds and tests to validate them. The work does not introduce a new agent framework; it treats agents as black-box tools that modify the codebase under tool-based checks. Validation relies on C++ tests (CTest), Python tests via pytest, and differential checks against reference implementations such as PyTorch for selected operators. The research team also includes longer training regressions and allocator and CUDA diagnostics to catch stateful bugs and performance pathologies that do not show up in unit tests.
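A differential check compares an implementation under test with a trusted reference on many random inputs. The Python sketch below shows the idea with two pure-Python softmax functions. It is an assumption-laden stand-in: the project checks against PyTorch, while `reference_softmax`, `candidate_softmax`, `differential_check`, and the tolerances here are hypothetical.

```python
import math
import random


def reference_softmax(xs):
    """Trusted reference: max-shifted exponentials divided by their sum."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def candidate_softmax(xs):
    """Stand-in for the implementation under test: log-sum-exp formulation."""
    m = max(xs)
    log_z = m + math.log(sum(math.exp(x - m) for x in xs))
    return [math.exp(x - log_z) for x in xs]


def differential_check(candidate, reference, trials=100, size=16,
                       rtol=1e-9, atol=1e-12, seed=0):
    """Return (True, None) on agreement, else (False, first failing input)."""
    rng = random.Random(seed)            # fixed seed keeps failures reproducible
    for _ in range(trials):
        xs = [rng.uniform(-50.0, 50.0) for _ in range(size)]
        got, want = candidate(xs), reference(xs)
        for g, w in zip(got, want):
            if not math.isclose(g, w, rel_tol=rtol, abs_tol=atol):
                return False, xs
    return True, None


ok, failing_input = differential_check(candidate_softmax, reference_softmax)
```

Returning the first failing input gives an agent, or a human, a concrete reproducer instead of a bare pass or fail signal.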

Key Takeaways

- AI-generated, CUDA-first deep learning stack: VIBETENSOR is an Apache 2.0, open-source PyTorch-style eager runtime whose implementation changes were generated by LLM coding agents, targeting Linux x86_64 with NVIDIA GPUs and CUDA as a hard requirement.

- Full runtime architecture, not just kernels: The system includes a C++20 tensor core (TensorImpl/Storage/TensorIterator), a schema-lite dispatcher, reverse-mode autograd, a CUDA subsystem with streams, events, and graphs, a stream-ordered caching allocator, and a versioned C plugin ABI, exposed through Python (vibetensor.torch) and experimental Node.js frontends.

- Tool-driven, agent-centric development workflow: Over ~2 months, humans specified high-level goals, while agents proposed diffs and validated them via CTest, pytest, differential checks against PyTorch, allocator diagnostics, and long-horizon training regressions, without per-diff manual code review.

- Strong microkernel speedups, slower end-to-end training: AI-generated kernels in Triton/CuTeDSL achieve up to ~5–6× speedups over PyTorch baselines in isolated benchmarks, but full training workloads (Transformer toy tasks, CIFAR-10 ViT, miniGPT-style LM) run 1.7× to 6.2× slower than PyTorch, emphasizing the gap between kernel and system-level performance.
