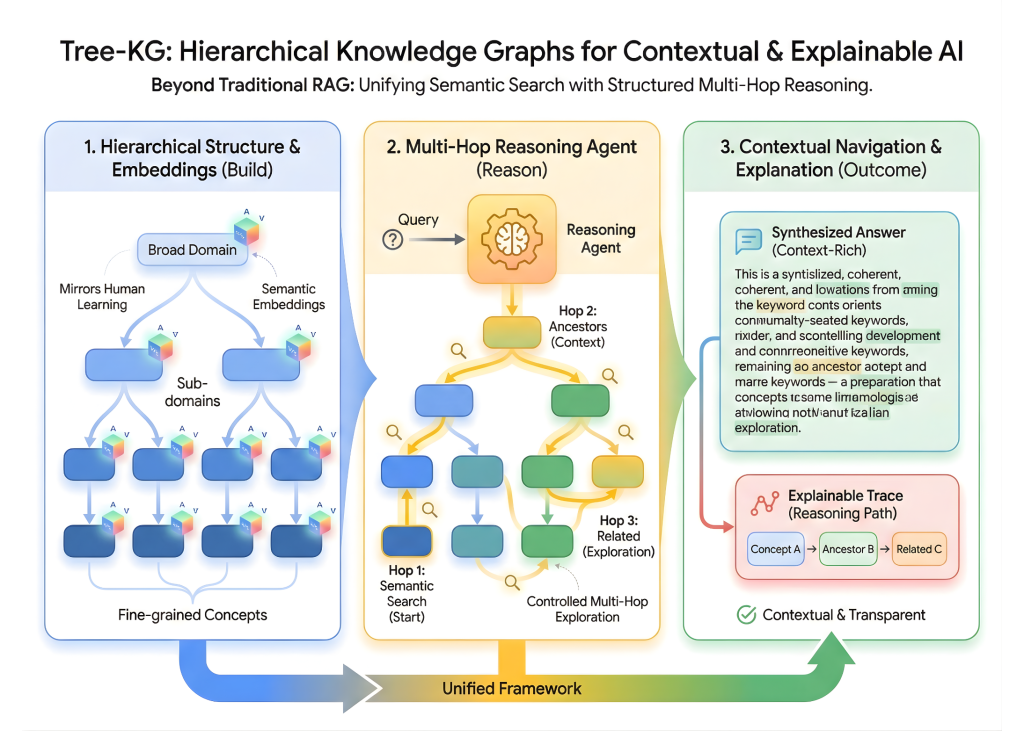

In this tutorial, we implement Tree-KG, an advanced hierarchical knowledge graph system that goes beyond conventional retrieval-augmented generation by combining semantic embeddings with explicit graph structure. We show how to organize knowledge in a tree-like hierarchy that mirrors how people learn, from broad domains to fine-grained concepts, and then reason across this structure using controlled multi-hop exploration. We build the graph from scratch, enrich nodes with embeddings, and design a reasoning agent that navigates ancestors, descendants, and related concepts. Together, these show how to achieve contextual navigation and explainable reasoning rather than flat, chunk-based retrieval. Check out the FULL CODES here.
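To make the tree-like organization concrete, here is a minimal, library-free sketch that is not part of the tutorial's code. It stores a small slice of a hierarchy as a child-to-parent map and recovers a concept's root-to-leaf "breadcrumb", which is the kind of hierarchical context that a flat text chunk lacks. The node names mirror the knowledge base built later. The `PARENT` map and the `breadcrumb` helper are illustrative assumptions, and the sketch assumes each node has one parent and there are no cycles.

```python
from typing import Dict, List

# Hypothetical child -> parent map for a slice of the hierarchy (one parent per node)
PARENT: Dict[str, str] = {
    "programming": "root",
    "python": "programming",
    "python_performance": "python",
    "async_io": "python_performance",
    "event_loop": "async_io",
}

def breadcrumb(node: str, parent: Dict[str, str]) -> List[str]:
    """Return the path from the root down to `node` (broad domain -> fine-grained concept)."""
    path = [node]
    while path[-1] in parent:      # climb until we reach a node with no parent
        path.append(parent[path[-1]])
    return path[::-1]              # reverse so the root comes first

print(" > ".join(breadcrumb("event_loop", PARENT)))
# root > programming > python > python_performance > async_io > event_loop
```

A flat retriever that returned only the `event_loop` text would lose the fact that it sits under async I/O and Python performance. The breadcrumb restores that context.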

```python
# Notebook (Jupyter/Colab) cell; in a terminal, run the same command without the leading "!"
!pip install networkx matplotlib anthropic sentence-transformers scikit-learn numpy

import networkx as nx
import matplotlib.pyplot as plt
from typing import List, Dict, Tuple, Optional, Set
import numpy as np
from sklearn.metrics.pairwise import cosine_similarity
from sentence_transformers import SentenceTransformer
from collections import defaultdict, deque
import json
```

We install and import all the core libraries required to build and reason over the Tree-KG system. These cover graph construction and visualization, semantic embedding and similarity search, and efficient data handling for traversal and scoring.
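Similarity search throughout this tutorial relies on cosine similarity between embedding vectors. The sketch below writes out, library-free, what `sklearn.metrics.pairwise.cosine_similarity` computes for a single pair of vectors. In the tutorial, the `.reshape(1, -1)` calls simply turn each 1-D embedding into a one-row matrix, which is the input shape that function expects. The toy 3-dimensional vectors are made up and are not real MiniLM embeddings.

```python
import math
from typing import Sequence

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """cos(a, b) = (a . b) / (||a|| * ||b||); 1.0 means same direction, 0.0 orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen here to avoid dividing by zero
    return dot / (norm_a * norm_b)

# Toy 3-d "embeddings" (all-MiniLM-L6-v2 actually produces 384-dimensional vectors)
query = [1.0, 2.0, 0.0]
doc_close = [2.0, 4.0, 0.0]   # same direction as the query, different length
doc_far = [0.0, 0.0, 5.0]     # orthogonal to the query
print(round(cosine(query, doc_close), 3), round(cosine(query, doc_far), 3))  # 1.0 0.0
```

Because cosine similarity ignores vector length, `doc_close` scores a perfect 1.0 even though it is twice as long as the query.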

```python
class TreeKnowledgeGraph:
    """
    Hierarchical knowledge graph that mimics human learning patterns.
    Supports multi-hop reasoning and contextual navigation.
    """

    def __init__(self, embedding_model: str = "all-MiniLM-L6-v2"):
        self.graph = nx.DiGraph()
        self.embedder = SentenceTransformer(embedding_model)
        self.node_embeddings = {}  # node_id -> dense embedding (np.ndarray)
        self.node_metadata = {}    # node_id -> {'content', 'type', 'metadata'}

    def add_node(self,
                 node_id: str,
                 content: str,
                 node_type: str = "concept",
                 metadata: Optional[Dict] = None):
        """Add a node with semantic embedding and metadata."""
        embedding = self.embedder.encode(content, convert_to_tensor=False)
        self.graph.add_node(node_id,
                            content=content,
                            node_type=node_type,
                            metadata=metadata or {})
        self.node_embeddings[node_id] = embedding
        self.node_metadata[node_id] = {
            'content': content,
            'type': node_type,
            'metadata': metadata or {}
        }

    def add_edge(self,
                 parent: str,
                 child: str,
                 relationship: str = "contains",
                 weight: float = 1.0):
        """Add hierarchical or associative edge between nodes."""
        self.graph.add_edge(parent, child,
                            relationship=relationship,
                            weight=weight)

    def get_ancestors(self, node_id: str, max_depth: int = 5) -> List[str]:
        """Get ancestor nodes (hierarchical context), nearest first.

        Only the first predecessor is followed at each level, so a node with
        several parents yields a single ancestor chain.
        """
        ancestors = []
        current = node_id
        depth = 0
        while depth < max_depth:
            predecessors = list(self.graph.predecessors(current))
            if not predecessors:
                break
            current = predecessors[0]
            ancestors.append(current)
            depth += 1
        return ancestors

    def get_descendants(self, node_id: str, max_depth: int = 2) -> List[str]:
        """Get all descendants up to max_depth levels below node_id (breadth-first)."""
        descendants = []
        queue = deque([(node_id, 0)])
        visited = {node_id}
        while queue:
            current, depth = queue.popleft()
            if depth >= max_depth:
                continue
            for child in self.graph.successors(current):
                if child not in visited:
                    visited.add(child)
                    descendants.append(child)
                    queue.append((child, depth + 1))
        return descendants

    def semantic_search(self, query: str, top_k: int = 5) -> List[Tuple[str, float]]:
        """Find the nodes most semantically similar to the query."""
        query_embedding = self.embedder.encode(query, convert_to_tensor=False)
        similarities = []
        for node_id, embedding in self.node_embeddings.items():
            sim = cosine_similarity(
                query_embedding.reshape(1, -1),
                embedding.reshape(1, -1)
            )[0][0]
            similarities.append((node_id, float(sim)))
        similarities.sort(key=lambda x: x[1], reverse=True)
        return similarities[:top_k]

    def get_subgraph_context(self, node_id: str, depth: int = 2) -> Dict:
        """Get rich contextual information around a node."""
        context = {
            'node': self.node_metadata.get(node_id, {}),
            'ancestors': [],
            'descendants': [],
            'siblings': [],
            'related': []  # not populated by this method
        }
        ancestors = self.get_ancestors(node_id)
        context['ancestors'] = [
            self.node_metadata.get(a, {}) for a in ancestors
        ]
        descendants = self.get_descendants(node_id, depth)
        context['descendants'] = [
            self.node_metadata.get(d, {}) for d in descendants
        ]
        # Siblings are the other children of the first parent
        parents = list(self.graph.predecessors(node_id))
        if parents:
            siblings = list(self.graph.successors(parents[0]))
            siblings = [s for s in siblings if s != node_id]
            context['siblings'] = [
                self.node_metadata.get(s, {}) for s in siblings
            ]
        return context
```

We define the core `TreeKnowledgeGraph` class, which structures knowledge as a directed hierarchy enriched with semantic embeddings. We store both graph relationships and dense representations, so we can navigate concepts structurally while also performing similarity-based retrieval.
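The three structural lookups in `TreeKnowledgeGraph` can be mirrored on a plain adjacency dict, which makes their behavior easy to check without loading an embedding model. This sketch follows the same rules as the class:

- ancestors follow only the first parent at each level;
- descendants use a depth-limited breadth-first search;
- siblings are the other children of the first parent.

The `CHILDREN` data and the helper names are illustrative assumptions. The order in which parents appear in `CHILDREN` stands in for the edge-insertion order that `nx.DiGraph.predecessors` reports. Note the consequence for a node with several parents, such as `microservices` (children of both `architecture` and `async_io` in the knowledge base): whichever parent comes first decides its ancestor chain and siblings.

```python
from collections import deque
from typing import Dict, List

# Hypothetical parent -> children adjacency; dict order stands in for edge-insertion order
CHILDREN: Dict[str, List[str]] = {
    "root": ["programming", "architecture"],
    "programming": ["python"],
    "architecture": ["microservices"],
    "python": ["python_performance"],
    "python_performance": ["async_io", "profiling"],
    "async_io": ["event_loop", "microservices"],
}

def parents_of(node: str) -> List[str]:
    """All predecessors of `node`, in the order they appear in CHILDREN."""
    return [p for p, kids in CHILDREN.items() if node in kids]

def ancestors(node: str, max_depth: int = 5) -> List[str]:
    """Nearest-first ancestor chain, following only the first parent at each level."""
    chain, current = [], node
    for _ in range(max_depth):
        ps = parents_of(current)
        if not ps:
            break
        current = ps[0]
        chain.append(current)
    return chain

def descendants(node: str, max_depth: int = 2) -> List[str]:
    """Breadth-first descendants up to `max_depth` levels below `node`."""
    found, seen, queue = [], {node}, deque([(node, 0)])
    while queue:
        current, depth = queue.popleft()
        if depth >= max_depth:
            continue
        for child in CHILDREN.get(current, []):
            if child not in seen:
                seen.add(child)
                found.append(child)
                queue.append((child, depth + 1))
    return found

def siblings(node: str) -> List[str]:
    """Other children of the first parent (empty for the root)."""
    ps = parents_of(node)
    return [s for s in CHILDREN.get(ps[0], []) if s != node] if ps else []

print(ancestors("event_loop"))     # ['async_io', 'python_performance', 'python', 'programming', 'root']
print(descendants("python", 2))    # ['python_performance', 'async_io', 'profiling']
print(ancestors("microservices"))  # ['architecture', 'root']: the async_io branch is not followed
print(siblings("async_io"))        # ['profiling']
```

If every parent matters for your use case, collecting all predecessors at each level (a breadth-first walk over `predecessors`) would be the natural extension.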

```python
class MultiHopReasoningAgent:
    """
    Agent that performs multi-hop reasoning across the knowledge graph.
    """

    def __init__(self, kg: TreeKnowledgeGraph):
        self.kg = kg
        self.reasoning_history = []

    def reason(self,
               query: str,
               max_hops: int = 3,
               exploration_width: int = 3) -> Dict:
        """
        Perform multi-hop reasoning to answer a query.

        Strategy:
        1. Find initial relevant nodes (semantic search)
        2. Explore graph context around these nodes
        3. Perform breadth-first exploration with relevance scoring
        4. Aggregate information from multiple hops
        """
        reasoning_trace = {
            'query': query,
            'hops': [],
            'final_context': {},
            'reasoning_path': []
        }

        initial_nodes = self.kg.semantic_search(query, top_k=exploration_width)
        reasoning_trace['hops'].append({
            'hop_number': 0,
            'action': 'semantic_search',
            'nodes_found': initial_nodes
        })

        visited = set()
        current_frontier = [node_id for node_id, _ in initial_nodes]
        all_relevant_nodes = set(current_frontier)

        for hop in range(1, max_hops + 1):
            next_frontier = []
            hop_info = {
                'hop_number': hop,
                'explored_nodes': [],
                'new_discoveries': []
            }

            for node_id in current_frontier:
                if node_id in visited:
                    continue
                visited.add(node_id)

                # Candidate neighbours: ancestors, direct children, and siblings
                context = self.kg.get_subgraph_context(node_id, depth=1)
                connected_nodes = []
                for ancestor in context['ancestors']:
                    if 'content' in ancestor:
                        connected_nodes.append(ancestor)
                for descendant in context['descendants']:
                    if 'content' in descendant:
                        connected_nodes.append(descendant)
                for sibling in context['siblings']:
                    if 'content' in sibling:
                        connected_nodes.append(sibling)

                relevant_connections = self._score_relevance(
                    query, connected_nodes, top_k=exploration_width
                )

                hop_info['explored_nodes'].append({
                    'node_id': node_id,
                    'content': self.kg.node_metadata[node_id]['content'][:100],
                    'connections_found': len(relevant_connections)
                })

                # Map each scored content string back to the first unvisited node with that content
                for conn_content, score in relevant_connections:
                    for nid, meta in self.kg.node_metadata.items():
                        if meta['content'] == conn_content and nid not in visited:
                            next_frontier.append(nid)
                            all_relevant_nodes.add(nid)
                            hop_info['new_discoveries'].append({
                                'node_id': nid,
                                'relevance_score': score
                            })
                            break

            reasoning_trace['hops'].append(hop_info)
            current_frontier = next_frontier
            if not current_frontier:
                break

        final_context = self._aggregate_context(query, all_relevant_nodes)
        reasoning_trace['final_context'] = final_context
        reasoning_trace['reasoning_path'] = list(all_relevant_nodes)
        self.reasoning_history.append(reasoning_trace)
        return reasoning_trace

    def _score_relevance(self,
                         query: str,
                         candidates: List[Dict],
                         top_k: int = 3) -> List[Tuple[str, float]]:
        """Score candidate nodes by relevance to the query."""
        if not candidates:
            return []
        query_embedding = self.kg.embedder.encode(query)
        scores = []
        for candidate in candidates:
            content = candidate.get('content', '')
            if not content:
                continue
            candidate_embedding = self.kg.embedder.encode(content)
            similarity = cosine_similarity(
                query_embedding.reshape(1, -1),
                candidate_embedding.reshape(1, -1)
            )[0][0]
            scores.append((content, float(similarity)))
        scores.sort(key=lambda x: x[1], reverse=True)
        return scores[:top_k]

    def _aggregate_context(self, query: str, node_ids: Set[str]) -> Dict:
        """Aggregate information from all discovered nodes (no ranking is applied)."""
        aggregated = {
            'total_nodes': len(node_ids),
            'hierarchical_paths': [],
            'key_concepts': [],
            'synthesized_answer': []
        }
        for node_id in node_ids:
            ancestors = self.kg.get_ancestors(node_id)
            if ancestors:
                # Ancestors are nearest-first, so reverse them to get a root-to-node path
                path = ancestors[::-1] + [node_id]
                path_contents = [
                    self.kg.node_metadata[n]['content']
                    for n in path if n in self.kg.node_metadata
                ]
                aggregated['hierarchical_paths'].append(path_contents)
        for node_id in node_ids:
            meta = self.kg.node_metadata.get(node_id, {})
            aggregated['key_concepts'].append({
                'id': node_id,
                'content': meta.get('content', ''),
                'type': meta.get('type', 'unknown')
            })
        for node_id in node_ids:
            content = self.kg.node_metadata.get(node_id, {}).get('content', '')
            if content:
                aggregated['synthesized_answer'].append(content)
        return aggregated

    def explain_reasoning(self, trace: Dict) -> str:
        """Generate a human-readable explanation of the reasoning process."""
        explanation = [f"Query: {trace['query']}\n"]
        explanation.append(f"Total hops performed: {len(trace['hops']) - 1}\n")
        explanation.append(f"Total relevant nodes discovered: {len(trace['reasoning_path'])}\n\n")
        for hop_info in trace['hops']:
            hop_num = hop_info['hop_number']
            explanation.append(f"--- Hop {hop_num} ---")
            if hop_num == 0:
                explanation.append("Action: Initial semantic search")
                explanation.append(f"Found {len(hop_info['nodes_found'])} candidate nodes")
                for node_id, score in hop_info['nodes_found'][:3]:
                    explanation.append(f"  - {node_id} (relevance: {score:.3f})")
            else:
                explanation.append(f"Explored {len(hop_info['explored_nodes'])} nodes")
                explanation.append(f"Discovered {len(hop_info['new_discoveries'])} new relevant nodes")
            explanation.append("")
        explanation.append("\n--- Final Aggregated Context ---")
        context = trace['final_context']
        explanation.append(f"Total concepts integrated: {context['total_nodes']}")
        explanation.append(f"Hierarchical paths found: {len(context['hierarchical_paths'])}")
        return "\n".join(explanation)
```

We implement a multi-hop reasoning agent that actively navigates the knowledge graph instead of passively retrieving nodes. It starts from semantically relevant concepts, expands through ancestors, descendants, and siblings, and scores connections at each hop to guide exploration. It then aggregates hierarchical paths and collects the content of every discovered node. The result is both an explainable reasoning trace and a context-rich set of passages from which an answer can be assembled.
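The hop loop reduces to three steps:

1. score each frontier node's neighbours against the query;
2. keep the top `width`;
3. make the unvisited ones the next frontier.

The sketch below is a simplified stand-in, not the tutorial's agent, and differs from it in three ways:

- it swaps the sentence-transformer for a toy bag-of-words cosine score (an assumption made so it runs without a model);
- it uses a hypothetical undirected neighbour map instead of ancestors, descendants, and siblings;
- it carries node ids alongside their scores, which avoids the content-string-to-node-id scan over `node_metadata` that `reason()` performs (a scan that is linear per match and ambiguous if two nodes share the same text).

```python
import math
from collections import Counter
from typing import Dict, List, Set, Tuple

# Hypothetical node texts and neighbour lists (undirected for simplicity)
TEXT: Dict[str, str] = {
    "python_performance": "python performance optimization profiling async",
    "async_io": "async io non blocking await asyncio concurrent tasks",
    "multiprocessing": "processes parallel cpu bound tasks",
    "event_loop": "event loop schedules asyncio tasks",
    "coroutines": "coroutines async def await",
}
NEIGHBOURS: Dict[str, List[str]] = {
    "python_performance": ["async_io", "multiprocessing"],
    "async_io": ["python_performance", "event_loop", "coroutines"],
    "multiprocessing": ["python_performance"],
    "event_loop": ["async_io"],
    "coroutines": ["async_io"],
}

def bow_cosine(a: str, b: str) -> float:
    """Cosine similarity of word-count vectors: a toy stand-in for embedding similarity."""
    ca, cb = Counter(a.split()), Counter(b.split())
    dot = sum(ca[w] * cb[w] for w in ca)
    na = math.sqrt(sum(v * v for v in ca.values()))
    nb = math.sqrt(sum(v * v for v in cb.values()))
    return dot / (na * nb) if na and nb else 0.0

def multi_hop(query: str, seeds: List[str], max_hops: int = 2, width: int = 2) -> List[Set[str]]:
    """Return the set of newly discovered node ids at each hop."""
    visited: Set[str] = set()
    frontier = list(seeds)
    discovered_per_hop: List[Set[str]] = []
    for _ in range(max_hops):
        new_nodes: Set[str] = set()
        for node in frontier:
            if node in visited:
                continue
            visited.add(node)
            scored: List[Tuple[str, float]] = [
                (nb, bow_cosine(query, TEXT[nb])) for nb in NEIGHBOURS.get(node, [])
            ]
            scored.sort(key=lambda item: item[1], reverse=True)
            for nb, _score in scored[:width]:  # ids travel with scores; no reverse lookup
                if nb not in visited:
                    new_nodes.add(nb)
        discovered_per_hop.append(new_nodes)
        frontier = sorted(new_nodes)
        if not frontier:
            break
    return discovered_per_hop

print([sorted(s) for s in multi_hop("async event loop tasks", ["python_performance"])])
# [['async_io', 'multiprocessing'], ['coroutines', 'event_loop']]
```

As in the tutorial, the top `width` candidates are chosen before already-visited nodes are filtered out. A visited neighbour can therefore use up one of the slots.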

```python
def build_software_development_kb() -> TreeKnowledgeGraph:
    """Build a comprehensive software development knowledge graph."""
    kg = TreeKnowledgeGraph()

    # Root and top-level domains
    kg.add_node('root', 'Software Development and Computer Science', 'domain')
    kg.add_node('programming',
                'Programming encompasses writing, testing, and maintaining code to create software applications',
                'domain')
    kg.add_node('architecture',
                'Software Architecture involves designing the high-level structure and components of software systems',
                'domain')
    # Placeholder description: the original text for 'devops' is missing from the source
    kg.add_node('devops',
                'DevOps covers the practices and tooling for building, deploying, and operating software',
                'domain')
    kg.add_edge('root', 'programming', 'contains')
    kg.add_edge('root', 'architecture', 'contains')
    kg.add_edge('root', 'devops', 'contains')

    # Programming languages
    # Placeholder description: the original text for 'python' is missing from the source
    kg.add_node('python',
                'Python is a high-level, general-purpose programming language',
                'language')
    kg.add_node('javascript',
                'JavaScript is a dynamic language primarily used for web development, enabling interactive client-side and server-side applications',
                'language')
    # Placeholder description: the original text for 'rust' is missing from the source
    kg.add_node('rust',
                'Rust is a systems programming language focused on memory safety and performance',
                'language')
    kg.add_edge('programming', 'python', 'includes')
    kg.add_edge('programming', 'javascript', 'includes')
    kg.add_edge('programming', 'rust', 'includes')

    # Python sub-topics
    kg.add_node('python_basics',
                'Python basics include variables, data types, control flow, functions, and object-oriented programming fundamentals',
                'concept')
    kg.add_node('python_performance',
                'Python Performance optimization involves techniques like profiling, caching, using C extensions, and leveraging async programming',
                'concept')
    kg.add_node('python_data',
                'Python for Data Science uses libraries like NumPy, Pandas, and Scikit-learn for data manipulation, analysis, and machine learning',
                'concept')
    kg.add_edge('python', 'python_basics', 'contains')
    kg.add_edge('python', 'python_performance', 'contains')
    kg.add_edge('python', 'python_data', 'contains')

    # Performance techniques and tools
    kg.add_node('async_io',
                'Asynchronous IO in Python enables non-blocking operations using async/await syntax with the asyncio library for concurrent tasks',
                'technique')
    kg.add_node('multiprocessing',
                'Python Multiprocessing uses separate processes to bypass the GIL, enabling true parallel execution for CPU-bound tasks',
                'technique')
    kg.add_node('cython',
                'Cython compiles Python to C for significant performance gains, especially in numerical computations and tight loops',
                'tool')
    kg.add_node('profiling',
                'Python Profiling identifies performance bottlenecks using tools like cProfile, line_profiler, and memory_profiler',
                'technique')
    kg.add_edge('python_performance', 'async_io', 'contains')
    kg.add_edge('python_performance', 'multiprocessing', 'contains')
    kg.add_edge('python_performance', 'cython', 'contains')
    kg.add_edge('python_performance', 'profiling', 'contains')

    # asyncio internals
    kg.add_node('event_loop',
                'Event Loop is the core of asyncio that manages and schedules asynchronous tasks, handling callbacks and coroutines',
                'concept')
    kg.add_node('coroutines',
                'Coroutines are special functions defined with async def that can pause execution with await, enabling cooperative multitasking',
                'concept')
    kg.add_node('asyncio_patterns',
                'AsyncIO patterns include gather for concurrent execution, create_task for background tasks, and queues for producer-consumer',
                'pattern')
    kg.add_edge('async_io', 'event_loop', 'contains')
    kg.add_edge('async_io', 'coroutines', 'contains')
    kg.add_edge('async_io', 'asyncio_patterns', 'contains')

    # Cross-domain links
    kg.add_node('microservices',
                'Microservices architecture decomposes applications into small, independent services that communicate via APIs',
                'pattern')
    kg.add_edge('architecture', 'microservices', 'contains')
    kg.add_edge('async_io', 'microservices', 'related_to')

    kg.add_node('containers',
                'Containers package applications with dependencies into isolated units, ensuring consistency across environments',
                'technology')
    kg.add_edge('devops', 'containers', 'contains')
    kg.add_edge('microservices', 'containers', 'deployed_with')

    kg.add_node('numpy_optimization',
                'NumPy optimization uses vectorization and broadcasting to avoid Python loops, leveraging optimized C and Fortran libraries',
                'technique')
    kg.add_edge('python_data', 'numpy_optimization', 'contains')
    kg.add_edge('python_performance', 'numpy_optimization', 'related_to')

    return kg
```

We construct a rich, hierarchical software development knowledge base that progresses from high-level domains down to concrete techniques and tools. We explicitly encode parent-child and cross-domain relationships, so that concepts such as Python performance, async I/O, and microservices are structurally connected rather than isolated. This setup lets us simulate how knowledge is learned and revisited across layers, enabling meaningful multi-hop reasoning over real-world software topics.
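`nx.DiGraph.add_edge` silently creates any endpoint that was never added with `add_node`. As a result, a typo in an edge, or a missing `add_node` call, produces a node with no content and no embedding rather than raising an error. A small check like the one below can catch that before the graph is used. It is written against plain lists so it runs on its own. The `NODES`/`EDGES` data and the `undeclared_endpoints` helper are illustrative, not part of the tutorial.

```python
from typing import Iterable, List, Set, Tuple

def undeclared_endpoints(nodes: Iterable[str],
                         edges: Iterable[Tuple[str, str, str]]) -> List[str]:
    """Return edge endpoints that were never declared as nodes, in first-seen order."""
    declared: Set[str] = set(nodes)
    missing: List[str] = []
    for parent, child, _relation in edges:
        for endpoint in (parent, child):
            if endpoint not in declared and endpoint not in missing:
                missing.append(endpoint)
    return missing

# Hypothetical slice of the knowledge base with 'devops' deliberately left undeclared
NODES = ["root", "programming", "architecture", "containers"]
EDGES = [
    ("root", "programming", "contains"),
    ("root", "architecture", "contains"),
    ("root", "devops", "contains"),
    ("devops", "containers", "contains"),
]
print(undeclared_endpoints(NODES, EDGES))  # ['devops']
```

Against the tutorial's own objects, the equivalent check is `[n for n in kg.graph.nodes if n not in kg.node_metadata]`. It should return an empty list when every edge endpoint was added through `add_node`.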

```python
def visualize_knowledge_graph(kg: TreeKnowledgeGraph,
                              highlight_nodes: Optional[List[str]] = None):
    """Visualize the knowledge graph structure."""
    plt.figure(figsize=(16, 12))
    pos = nx.spring_layout(kg.graph, k=2, iterations=50, seed=42)

    # One fill color per node type; highlighted nodes are drawn in yellow instead
    color_map = {
        'domain': 'lightblue',
        'language': 'lightgreen',
        'concept': 'lightcoral',
        'technique': 'lightyellow',
        'tool': 'lightpink',
        'pattern': 'lavender',
        'technology': 'peachpuff'
    }
    node_colors = []
    for node in kg.graph.nodes():
        if highlight_nodes and node in highlight_nodes:
            node_colors.append('yellow')
        else:
            node_type = kg.graph.nodes[node].get('node_type', 'concept')
            node_colors.append(color_map.get(node_type, 'lightgray'))

    nx.draw_networkx_nodes(kg.graph, pos,
                           node_color=node_colors,
                           node_size=2000,
                           alpha=0.9)
    nx.draw_networkx_edges(kg.graph, pos,
                           edge_color="gray",
                           arrows=True,
                           arrowsize=20,
                           alpha=0.6,
                           width=2)
    nx.draw_networkx_labels(kg.graph, pos,
                            font_size=8,
                            font_weight="bold")
    plt.title("Tree-KG: Hierarchical Knowledge Graph", fontsize=16, fontweight="bold")
    plt.axis('off')
    plt.tight_layout()
    plt.show()


def run_demo():
    """Run a complete demonstration of the Tree-KG system."""
    print("=" * 80)
    print("Tree-KG: Hierarchical Knowledge Graph Demo")
    print("=" * 80)
    print()

    print("Building knowledge graph...")
    kg = build_software_development_kb()
    print(f"✓ Created graph with {kg.graph.number_of_nodes()} nodes and {kg.graph.number_of_edges()} edges\n")

    print("Visualizing knowledge graph...")
    visualize_knowledge_graph(kg)

    agent = MultiHopReasoningAgent(kg)
    queries = [
        "How can I improve Python performance for IO-bound tasks?",
        "What are the best practices for async programming?",
        "How does microservices architecture relate to Python?"
    ]

    for i, query in enumerate(queries, 1):
        print(f"\n{'=' * 80}")
        print(f"QUERY {i}: {query}")
        print('=' * 80)

        trace = agent.reason(query, max_hops=3, exploration_width=3)
        explanation = agent.explain_reasoning(trace)
        print(explanation)

        print("\n--- Sample Hierarchical Paths ---")
        for j, path in enumerate(trace['final_context']['hierarchical_paths'][:3], 1):
            print(f"\nPath {j}:")
            for k, concept in enumerate(path):
                indent = " " * k  # indent deeper levels of the hierarchy
                print(f"{indent}→ {concept[:80]}...")

        print("\n--- Synthesized Context ---")
        answer_parts = trace['final_context']['synthesized_answer'][:5]
        for part in answer_parts:
            print(f"• {part[:150]}...")
        print()

    print("\nVisualizing reasoning path for the last query...")
    last_trace = agent.reasoning_history[-1]
    visualize_knowledge_graph(kg, highlight_nodes=last_trace['reasoning_path'])

    print("\n" + "=" * 80)
    print("Demo complete!")
    print("=" * 80)
```

We visualize the hierarchical structure of the knowledge graph, using color and layout to distinguish domains, concepts, techniques, and tools. Optionally, we highlight the reasoning path. We then run an end-to-end demo that builds the graph, executes multi-hop reasoning on realistic queries, and prints both the reasoning trace and the synthesized context. This lets us observe how the agent navigates the graph, surfaces hierarchical paths, and explains its conclusions in a transparent, interpretable way.
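The coloring rule in `visualize_knowledge_graph` has two parts: highlighted nodes override the per-type color, and unknown types fall back to light gray. That rule can be factored into a pure function and tested without drawing anything. This is an optional refactoring sketch. The `node_colors` name and its signature are assumptions; the type-to-color table is copied from the tutorial.

```python
from typing import Dict, Iterable, List, Optional

TYPE_COLORS: Dict[str, str] = {
    "domain": "lightblue", "language": "lightgreen", "concept": "lightcoral",
    "technique": "lightyellow", "tool": "lightpink", "pattern": "lavender",
    "technology": "peachpuff",
}

def node_colors(node_types: Dict[str, str],
                order: Iterable[str],
                highlight: Optional[Iterable[str]] = None) -> List[str]:
    """One matplotlib color name per node, in the same order the nodes will be drawn."""
    highlighted = set(highlight or [])  # set lookup instead of scanning a list per node
    colors = []
    for node in order:
        if node in highlighted:
            colors.append("yellow")
        else:
            # Missing type defaults to 'concept'; unknown types fall back to light gray
            colors.append(TYPE_COLORS.get(node_types.get(node, "concept"), "lightgray"))
    return colors

types = {"root": "domain", "python": "language", "cython": "tool", "mystery": "other"}
print(node_colors(types, ["root", "python", "cython", "mystery"], highlight=["python"]))
# ['lightblue', 'yellow', 'lightpink', 'lightgray']
```

Inside the tutorial's function, it could be called as follows:

`node_colors({n: d.get('node_type', 'concept') for n, d in kg.graph.nodes(data=True)}, kg.graph.nodes(), highlight_nodes)`

The set conversion also makes highlighting cheaper when the reasoning path is long.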

```python
class AdvancedTreeKG(TreeKnowledgeGraph):
    """Extended Tree-KG with advanced features."""

    def __init__(self, embedding_model: str = "all-MiniLM-L6-v2"):
        super().__init__(embedding_model)
        self.node_importance = {}

    def compute_node_importance(self):
        """Compute importance scores by blending PageRank and betweenness centrality."""
        if self.graph.number_of_nodes() == 0:
            return
        pagerank = nx.pagerank(self.graph)
        betweenness = nx.betweenness_centrality(self.graph)
        for node in self.graph.nodes():
            self.node_importance[node] = {
                'pagerank': pagerank.get(node, 0),
                'betweenness': betweenness.get(node, 0),
                # Weighted blend: 70% PageRank, 30% betweenness
                'combined': pagerank.get(node, 0) * 0.7 + betweenness.get(node, 0) * 0.3
            }

    def find_shortest_path_with_context(self,
                                        source: str,
                                        target: str) -> Dict:
        """Find the shortest path and extract context for every node along the way.

        The graph is directed, so only paths that follow edge direction are found.
        """
        try:
            path = nx.shortest_path(self.graph, source, target)
            context = {
                'path': path,
                'path_length': len(path) - 1,
                'nodes_detail': []
            }
            for node in path:
                detail = {
                    'id': node,
                    'content': self.node_metadata.get(node, {}).get('content', ''),
                    'importance': self.node_importance.get(node, {}).get('combined', 0)
                }
                context['nodes_detail'].append(detail)
            return context
        except nx.NetworkXNoPath:
            return {'path': [], 'error': 'No path exists'}
```

We extend the base Tree-KG with graph-level intelligence by computing node importance from centrality measures. We combine PageRank and betweenness scores to identify concepts that play a structurally critical role in connecting knowledge across the graph. The class also retrieves shortest paths enriched with content and importance information, enabling more informed and explainable reasoning between two concepts that are linked by a directed path.

```python
if __name__ == "__main__":
    run_demo()

    print("\n\n" + "=" * 80)
    print("ADVANCED FEATURES DEMO")
    print("=" * 80)

    print("\nBuilding advanced Tree-KG...")
    # build_software_development_kb() returns a plain TreeKnowledgeGraph, so we copy
    # its graph, embeddings, and metadata into an AdvancedTreeKG instance
    base_kg = build_software_development_kb()
    adv_kg = AdvancedTreeKG()
    adv_kg.graph = base_kg.graph
    adv_kg.node_embeddings = base_kg.node_embeddings
    adv_kg.node_metadata = base_kg.node_metadata

    print("Computing node importance scores...")
    adv_kg.compute_node_importance()

    print("\nTop 5 most important nodes:")
    sorted_nodes = sorted(
        adv_kg.node_importance.items(),
        key=lambda x: x[1]['combined'],
        reverse=True
    )[:5]
    for node, scores in sorted_nodes:
        content = adv_kg.node_metadata[node]['content'][:60]
        print(f"  {node}: {content}...")
        print(f"    Combined score: {scores['combined']:.4f}")

    print("\n✓ Tree-KG Tutorial Complete!")
    print("\nKey Takeaways:")
    print("1. Tree-KG enables contextual navigation vs simple chunk retrieval")
    print("2. Multi-hop reasoning discovers relevant information across graph structure")
    print("3. Hierarchical organization mirrors human learning patterns")
    print("4. Semantic search + graph traversal = powerful RAG alternative")
```

We run the full Tree-KG demo and then showcase the advanced features to round out the system's capabilities. We compute node importance scores to surface the most structurally influential concepts in the graph. These scores can then be compared with the semantic relevance scores seen during reasoning.
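The ranking step in the main block, `sorted(...)[:5]` over the importance dict, is a top-k selection. `heapq.nlargest` expresses the same thing directly and avoids sorting every node, which only starts to matter for much larger graphs. The sketch below uses made-up scores in the same `{'combined': ...}` shape as `node_importance`. The `top_k_by_combined` helper is a hypothetical name.

```python
import heapq
from typing import Dict, List, Tuple

def top_k_by_combined(importance: Dict[str, Dict[str, float]],
                      k: int = 5) -> List[Tuple[str, float]]:
    """Return (node_id, combined score) pairs for the k highest combined scores."""
    best = heapq.nlargest(k, importance.items(), key=lambda item: item[1]["combined"])
    return [(node, scores["combined"]) for node, scores in best]

importance = {  # made-up scores, not output from the tutorial
    "root": {"combined": 0.05}, "python": {"combined": 0.12},
    "async_io": {"combined": 0.20}, "containers": {"combined": 0.09},
}
print(top_k_by_combined(importance, k=2))  # [('async_io', 0.2), ('python', 0.12)]
```

`heapq.nlargest` returns its results already in descending order, so the output matches what `sorted(..., reverse=True)[:k]` would give.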

In conclusion, we demonstrated how Tree-KG enables richer understanding by unifying semantic search, hierarchical context, and multi-hop reasoning within a single framework. Instead of merely retrieving isolated text fragments, we can traverse meaningful knowledge paths, aggregate insights across levels, and produce explanations that show how conclusions are reached. Extending the system with importance scoring and path-aware context extraction illustrated how Tree-KG can serve as a strong foundation for intelligent agents, research assistants, or domain-specific reasoning systems. Such systems demand structure, transparency, and depth beyond typical RAG approaches.
