In deep learning, classification models do not just need to make predictions; they need to express confidence. That is where the Softmax activation function comes in. Softmax takes the raw, unbounded scores produced by a neural network and transforms them into a well-defined probability distribution, making it possible to interpret each output as the probability of a specific class.
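For reference, this transformation is usually written as follows, where $z_i$ denotes the raw score (logit) for class $i$ and $K$ is the number of classes. The symbols are notation chosen here for illustration, not taken from the original text.

```latex
\[
\operatorname{softmax}(z)_i = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}, \qquad i = 1, \dots, K
\]
```

Every output is positive and the outputs sum to 1, which is what allows them to be read as class probabilities.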

This property makes Softmax a cornerstone of multi-class classification tasks, from image recognition to language modeling. In this article, we will build an intuitive understanding of how Softmax works and why its implementation details matter more than they first appear.

Implementing Naive Softmax

    import torch

    def softmax_naive(logits):
        # Exponentiate every logit, then normalize across the class dimension
        exp_logits = torch.exp(logits)
        return exp_logits / exp_logits.sum(dim=1, keepdim=True)

This function implements the Softmax activation in its most basic form. It exponentiates each logit and normalizes it by the sum of all exponentiated values across classes, producing a probability distribution for each input sample.

While this implementation is mathematically correct and easy to read, it is numerically unstable: large positive logits can cause overflow, and large negative logits can underflow to zero. As a result, this version should be avoided in real training pipelines.

Sample Logits and Target Labels

This example defines a small batch with three samples and three classes to illustrate both normal and failure cases. The first and third samples contain reasonable logit values and behave as expected during Softmax computation. The second sample intentionally includes extreme values (1000 and -1000) to demonstrate numerical instability; this is where the naive Softmax implementation breaks down.

The targets tensor specifies the correct class index for each sample. It will be used to compute the classification loss and to observe how instability propagates during backpropagation.

    # Batch of three samples, three classes
    logits = torch.tensor([
        [2.0, 1.0, 0.1],
        [1000.0, 1.0, -1000.0],
        [3.0, 2.0, 1.0]
    ], requires_grad=True)

    targets = torch.tensor([0, 2, 1])

Forward Pass: Softmax Output and the Failure Case

During the forward pass, the naive Softmax function is applied to the logits to produce class probabilities. For normal logit values (first and third samples), the output is a valid probability distribution where values lie between 0 and 1 and sum to 1.

However, the second sample clearly exposes the numerical issue: exponentiating 1000 overflows to infinity, while exponentiating -1000 underflows to zero. Normalization then divides by an infinite sum, so the first entry becomes NaN (infinity divided by infinity) and the other two become exactly 0. Once NaN appears at this stage, it contaminates all subsequent computations, making the model unusable for training.
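One way to catch this failure before it reaches the loss is to check each output row for non-finite values. The sketch below reuses the same logits; the helper name `rows_are_valid` is hypothetical and introduced only for illustration.

```python
import torch

def softmax_naive(logits):
    exp_logits = torch.exp(logits)
    return exp_logits / exp_logits.sum(dim=1, keepdim=True)

def rows_are_valid(probs):
    """Hypothetical helper: True for rows that are finite and sum to 1."""
    finite = torch.isfinite(probs).all(dim=1)
    sums_to_one = torch.isclose(probs.sum(dim=1), torch.ones(probs.shape[0]))
    return finite & sums_to_one

logits = torch.tensor([
    [2.0, 1.0, 0.1],
    [1000.0, 1.0, -1000.0],
    [3.0, 2.0, 1.0],
])

probs = softmax_naive(logits)
valid_rows = rows_are_valid(probs)

print(torch.exp(logits[1]))  # exponentials of the extreme row
print(valid_rows)            # per-row validity flags
```

A check like this only detects the problem; it does not remove the underlying overflow.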

    # Forward pass
    probs = softmax_naive(logits)

    print("Softmax probabilities:")
    print(probs)

Target Probabilities and Loss Breakdown

Here, we extract the predicted probability corresponding to the true class for each sample. While the first and third samples return valid probabilities, the second sample's target probability is 0.0 because of numerical underflow in the Softmax computation. When the loss is calculated using -log(p), log(0.0) evaluates to -∞, so the loss for that sample becomes +∞.

This makes the overall loss infinite, which is a critical failure during training. Once the loss becomes infinite, gradient computation breaks down, leading to NaNs during backpropagation and effectively halting learning.

    # Extract target probabilities
    target_probs = probs[torch.arange(len(targets)), targets]

    print("\nTarget probabilities:")
    print(target_probs)

    # Compute loss
    loss = -torch.log(target_probs).mean()

    print("\nLoss:", loss)

Backpropagation: Gradient Corruption

When backpropagation is triggered, the impact of the infinite loss becomes immediately visible. The gradients for the first and third samples remain finite because their Softmax outputs were well-behaved. However, the second sample produces NaN gradients across all classes because of the log(0) operation in the loss.

These NaNs propagate backward through the network, contaminating weight updates and effectively breaking training. This is why numerical instability at the Softmax-loss boundary is so dangerous: once NaNs reach the weights, recovery is nearly impossible without restarting training.
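One defensive pattern, sketched here under stated assumptions, is to check gradients for non-finite values after `backward()` and skip the update when any are found. The helper `grads_are_finite` and the single-tensor "parameter" below are hypothetical and not part of the original walkthrough.

```python
import torch

def grads_are_finite(params):
    """Hypothetical helper: True only if every existing gradient is finite."""
    return all(
        torch.isfinite(p.grad).all().item()
        for p in params
        if p.grad is not None
    )

# Toy tensor standing in for model parameters (an assumption for illustration)
logits = torch.tensor([[1000.0, 1.0, -1000.0]], requires_grad=True)
targets = torch.tensor([2])

# Naive softmax followed by a separate log, as in the failure case
exp_logits = torch.exp(logits)
probs = exp_logits / exp_logits.sum(dim=1, keepdim=True)
loss = -torch.log(probs[torch.arange(len(targets)), targets]).mean()
loss.backward()

update_is_safe = grads_are_finite([logits])
if not update_is_safe:
    # In a real training loop, this is where optimizer.step() would be skipped
    print("Non-finite gradients detected; skipping this update")
```

A guard of this kind limits the damage but does not address the cause, which is the unstable loss computation itself.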

    loss.backward()

    print("\nGradients:")
    print(logits.grad)

Numerical Instability and Its Consequences

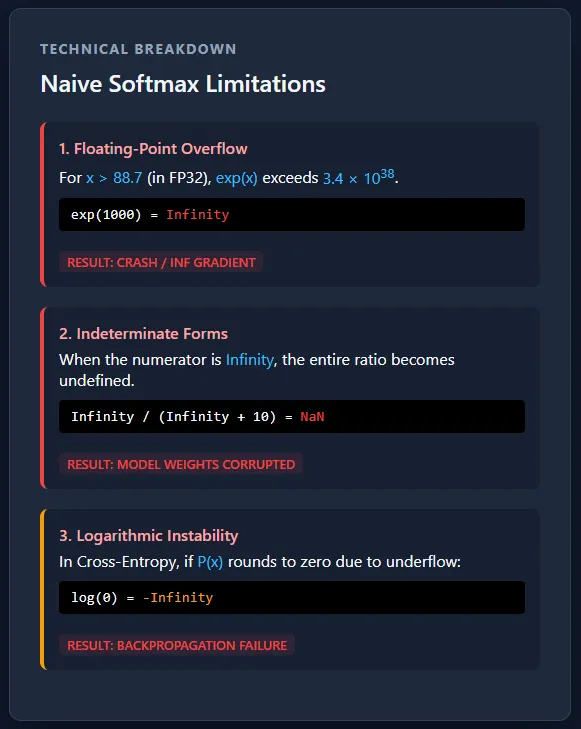

Separating Softmax and cross-entropy creates a serious numerical stability risk because of exponential overflow and underflow. Large logits can push exponentials to infinity or probabilities to zero, causing log(0) and leading to NaN gradients that quickly corrupt training. At production scale, this is not a rare edge case but a certainty: without stable, fused implementations, large multi-GPU training runs would fail unpredictably.

The core numerical problem comes from the fact that computers cannot represent arbitrarily large or arbitrarily small numbers. Floating-point formats like FP32 have strict limits on how large or small a stored value can be. When Softmax computes exp(x), large positive values grow so fast that they exceed the maximum representable number and turn into infinity, while large negative values shrink so much that they become zero. Once a value becomes infinity or zero, subsequent operations like division or logarithms break down and produce invalid results.
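These limits can be inspected directly. The sketch below uses `torch.finfo` to read the FP32 range and then probes `exp` on either side of the overflow threshold; the probe values (88, 89, and -110) are chosen here for illustration.

```python
import math
import torch

fp32 = torch.finfo(torch.float32)
print("Largest FP32 value:", fp32.max)           # roughly 3.4e38
print("Smallest normal FP32 value:", fp32.tiny)  # roughly 1.18e-38

# exp(x) overflows once x exceeds log(max)
overflow_threshold = math.log(fp32.max)
print("exp overflows above x =", overflow_threshold)

x = torch.tensor([88.0, 89.0, -110.0], dtype=torch.float32)
exp_x = torch.exp(x)
print(exp_x)  # finite, inf, and 0 respectively
```

In FP32 the overflow threshold sits just below 89, so a logit of 1000 is far beyond what a direct exponential can represent.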

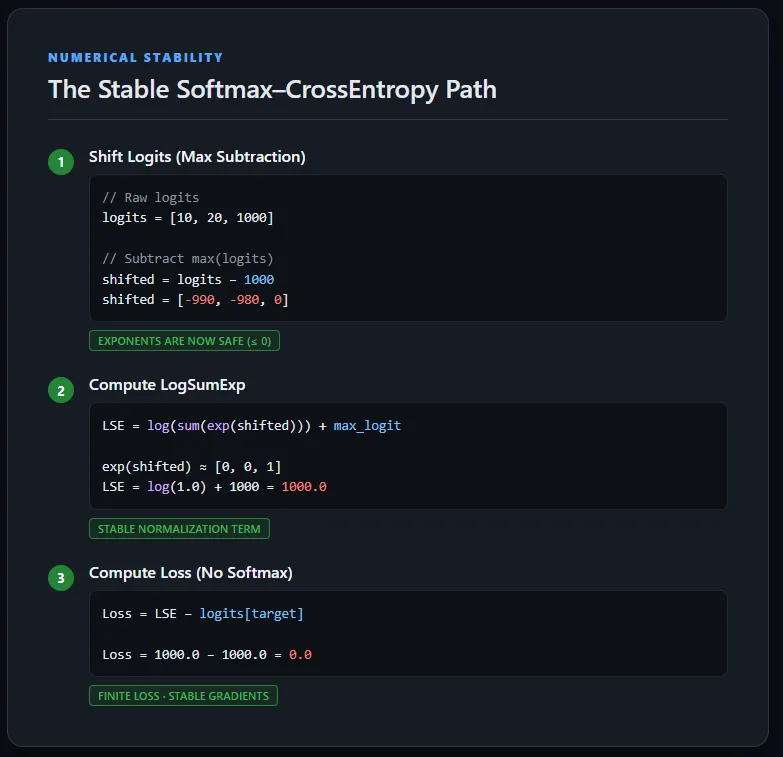

Implementing Stable Cross-Entropy Loss Using LogSumExp

This implementation computes cross-entropy loss directly from raw logits without explicitly calculating Softmax probabilities. To maintain numerical stability, the logits are first shifted by subtracting the maximum value per sample, ensuring the exponentials stay within a safe range.

The LogSumExp trick is then used to compute the normalization term, after which the original (unshifted) target logit is subtracted to obtain the correct loss. This approach avoids overflow, underflow, and NaN gradients, and mirrors how cross-entropy is implemented in production-grade deep learning frameworks.
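The identity behind this step can be written out explicitly. Using $z_j$ for the logits of one sample, $y$ for its target class, and $m = \max_j z_j$ (notation introduced here for illustration), the per-sample loss is:

```latex
\[
\begin{aligned}
\mathcal{L} &= -\log \frac{e^{z_y}}{\sum_{j} e^{z_j}}
             = \log \sum_{j} e^{z_j} - z_y \\
            &= m + \log \sum_{j} e^{z_j - m} - z_y
\end{aligned}
\]
```

Because $z_j - m \le 0$ for every $j$, each exponential lies in $(0, 1]$ and the term for the maximum equals exactly 1, so the sum can neither overflow nor collapse to zero.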

    def stable_cross_entropy(logits, targets):
        # Find max logit per sample
        max_logits, _ = torch.max(logits, dim=1, keepdim=True)

        # Shift logits for numerical stability
        shifted_logits = logits - max_logits

        # Compute LogSumExp
        log_sum_exp = torch.log(torch.sum(torch.exp(shifted_logits), dim=1)) + max_logits.squeeze(1)

        # Compute loss using ORIGINAL logits
        loss = log_sum_exp - logits[torch.arange(len(targets)), targets]

        return loss.mean()

Stable Forward and Backward Pass

Running the stable cross-entropy implementation on the same extreme logits produces a finite loss and well-defined gradients. Even though one sample contains very large values (1000 and -1000), the LogSumExp formulation keeps all intermediate computations in a safe numerical range. As a result, backpropagation completes successfully without producing NaNs, and each class receives a meaningful gradient signal.

This confirms that the instability seen earlier was not caused by the data itself, but by the naive separation of Softmax and cross-entropy, an issue fully resolved by using a numerically stable, fused loss formulation.

    logits = torch.tensor([
        [2.0, 1.0, 0.1],
        [1000.0, 1.0, -1000.0],
        [3.0, 2.0, 1.0]
    ], requires_grad=True)

    targets = torch.tensor([0, 2, 1])

    loss = stable_cross_entropy(logits, targets)
    print("Stable loss:", loss)

    loss.backward()

    print("\nGradients:")
    print(logits.grad)
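As a sanity check, the same loss can be compared against PyTorch's built-in `torch.nn.functional.cross_entropy` and `torch.logsumexp`, both of which accept raw logits. This is a sketch for verification only; it restates the LogSumExp formulation compactly and makes no claim about any framework's internal implementation.

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([
    [2.0, 1.0, 0.1],
    [1000.0, 1.0, -1000.0],
    [3.0, 2.0, 1.0],
])
targets = torch.tensor([0, 2, 1])

# Manual formulation: logsumexp(z) - z[target], averaged over the batch
target_logits = logits[torch.arange(len(targets)), targets]
manual_loss = (torch.logsumexp(logits, dim=1) - target_logits).mean()

# Built-in loss operating directly on logits
builtin_loss = F.cross_entropy(logits, targets)

print("Manual loss:  ", manual_loss.item())
print("Built-in loss:", builtin_loss.item())
```

Both values agree at roughly 667. The loss is large because the second sample gives its target class the lowest logit by a margin of 2000, but it remains finite, so training can continue.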

Conclusion

In practice, the gap between mathematical formulation and real-world code is where many training failures originate. While Softmax and cross-entropy are mathematically well-defined, their naive implementation ignores the finite precision limits of IEEE 754 hardware, making overflow and underflow inevitable once logits become extreme.

The key fix is simple but critical: shift logits before exponentiation and operate in the log domain whenever possible. Most importantly, training rarely requires explicit probabilities; stable log-probabilities are sufficient and far safer. When a loss suddenly becomes NaN in production, it is often a sign that Softmax is being computed manually somewhere it should not be.
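Both recommendations can be sketched in a few lines. The function `softmax_stable` below is a hypothetical name for the max-shifted variant; `F.log_softmax` and `F.nll_loss` are PyTorch built-ins that stay in the log domain.

```python
import torch
import torch.nn.functional as F

def softmax_stable(logits):
    """Max-shifted softmax (hypothetical helper): avoids overflow in exp."""
    shifted = logits - logits.max(dim=1, keepdim=True).values
    exp_shifted = torch.exp(shifted)
    return exp_shifted / exp_shifted.sum(dim=1, keepdim=True)

logits = torch.tensor([
    [2.0, 1.0, 0.1],
    [1000.0, 1.0, -1000.0],
    [3.0, 2.0, 1.0],
])
targets = torch.tensor([0, 2, 1])

probs = softmax_stable(logits)
print(probs)  # every row is finite and sums to 1

# For training, stay in the log domain instead of taking log(probs)
log_probs = F.log_softmax(logits, dim=1)
loss = F.nll_loss(log_probs, targets)
print(loss)
```

Note that the shifted Softmax still returns exactly 0 for the second sample's target class, so taking `log(probs)` would again give an infinite loss. Computing log-probabilities directly avoids this because the probability itself is never materialized.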
