Jina AI has launched Jina-VLM, a 2.4B parameter imaginative and prescient language mannequin that targets multilingual visible query answering and doc understanding on constrained {hardware}. The mannequin {couples} a SigLIP2 imaginative and prescient encoder with a Qwen3 language spine and makes use of an consideration pooling connector to scale back visible tokens whereas preserving spatial construction. Amongst open 2B scale VLMs, it reaches state-of-the-art outcomes on multilingual benchmarks akin to MMMB and Multilingual MMBench.

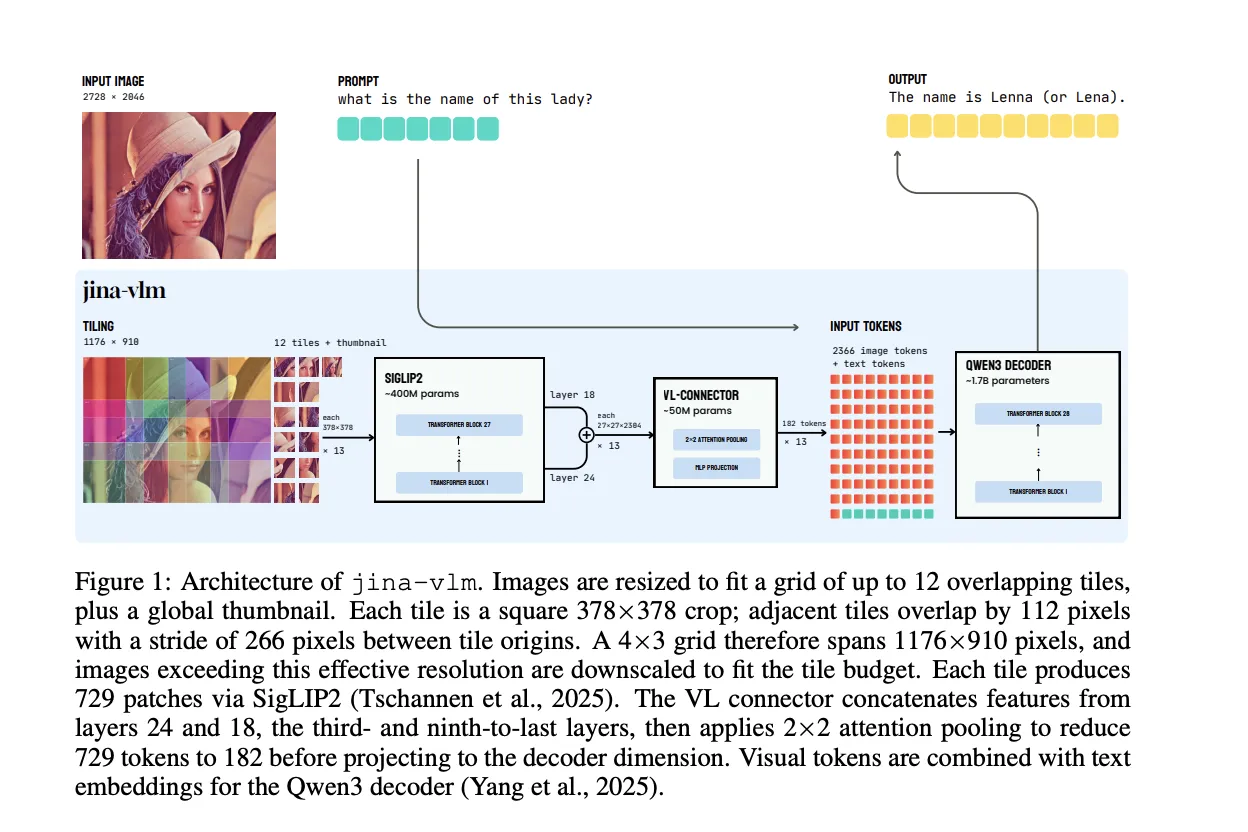

Structure, overlapping tiles with consideration pooling connector

Jina-VLM retains the usual VLM structure, however optimizes the imaginative and prescient aspect for arbitrary decision and low token depend. The imaginative and prescient encoder is SigLIP2 So400M/14 384, a 27 layer Imaginative and prescient Transformer with about 400M parameters. It processes 378×378 pixel crops right into a 27×27 grid of 14×14 patches, so every tile produces 729 patch tokens.

To deal with excessive decision photographs, the mannequin doesn’t resize the total enter to a single sq.. As a substitute, it constructs a grid of as much as 12 overlapping tiles together with a worldwide thumbnail. Every tile is a 378×378 crop, adjoining tiles overlap by 112 pixels, and the stride between tile origins is 266 pixels. A 4×3 grid covers an efficient decision of 1176×910 pixels earlier than downscaling bigger photographs to suit contained in the tile finances.

The core design is the imaginative and prescient language connector. Relatively than utilizing the ultimate ViT layer, Jina-VLM concatenates options from two intermediate layers, the third from final and ninth from final, that correspond to layers 24 and 18. This combines excessive degree semantics and mid degree spatial element. The connector then applies consideration pooling over 2×2 patch neighborhoods. It computes a imply pooled question for every 2×2 area, attends over the total concatenated characteristic map, and outputs a single pooled token per neighborhood. This reduces 729 visible tokens per tile to 182 tokens, which is a 4 instances compression. A SwiGLU projection maps the pooled options to the Qwen3 embedding dimension.

With the default 12 tile configuration plus thumbnail, a naive connector would feed 9,477 visible tokens into the language mannequin. Consideration pooling cuts this to 2,366 visible tokens. The ViT compute doesn’t change, however for the language spine this yields about 3.9 instances fewer prefill FLOPs and 4 instances smaller KV cache. When together with the shared ViT value, the general FLOPs drop by about 2.3 instances for the default setting.

The language decoder is Qwen3-1.7B-Base. The mannequin introduces particular tokens for photographs, with <im_start> and <im_end> across the tile sequence and <im_col> to mark rows within the patch grid. Visible tokens from the connector and textual content embeddings are concatenated and handed to Qwen3 to generate solutions.

Coaching pipeline and multilingual knowledge combine

Coaching proceeds in 2 phases. All parts, encoder, connector and decoder, are up to date collectively, with out freezing. The complete corpus incorporates about 5M multimodal samples and 12B textual content tokens throughout greater than 30 languages. Roughly half of the textual content is English, and the remainder covers excessive and mid useful resource languages akin to Chinese language, Arabic, German, Spanish, French, Italian, Japanese and Korean.

Stage 1 is alignment coaching. The aim is cross language visible grounding, not instruction following. The group makes use of caption heavy datasets PixmoCap and PangeaIns, which span pure photographs, paperwork, diagrams and infographics. They add 15 % textual content solely knowledge from the PleiAS frequent corpus to regulate degradation on pure language duties. The connector makes use of a better studying charge and shorter warmup than the encoder and decoder to hurry up adaptation with out destabilizing the backbones.

Stage 2 is instruction high quality tuning. Right here Jina VLM learns to comply with prompts for visible query answering and reasoning. The combination combines LLaVA OneVision, Cauldron, Cambrian, PangeaIns and FineVision, plus Aya type multilingual textual content solely directions. The Jina analysis group first prepare for 30,000 steps with single supply batches, then for an additional 30,000 steps with combined supply batches. This schedule stabilizes studying within the presence of very heterogeneous supervision.

Throughout pretraining and high quality tuning, the mannequin sees about 10B tokens within the first stage and 37B tokens within the second stage, with a complete of roughly 1,300 GPU hours reported for the primary experiments.

Benchmark profile, 2.4B mannequin with multilingual power

On customary English VQA duties that embody diagrams, charts, paperwork, OCR and combined scenes, Jina-VLM reaches a mean rating of 72.3 throughout 8 benchmarks. These are AI2D, ChartQA, TextVQA, DocVQA, InfoVQA, OCRBench, SEED Bench 2 Plus and CharXiv. That is the perfect common among the many 2B scale comparability fashions in this analysis paper from Jina AI.

On multimodal comprehension and actual world understanding duties, the mannequin scores 67.4 on the multimodal group, which incorporates MME, MMB v1.1 and MMStar. It scores 61.9 on the true world group, which incorporates RealWorldQA, MME RealWorld and R Bench, and it reaches 68.2 accuracy on RealWorldQA itself, which is the perfect consequence among the many baselines thought-about.

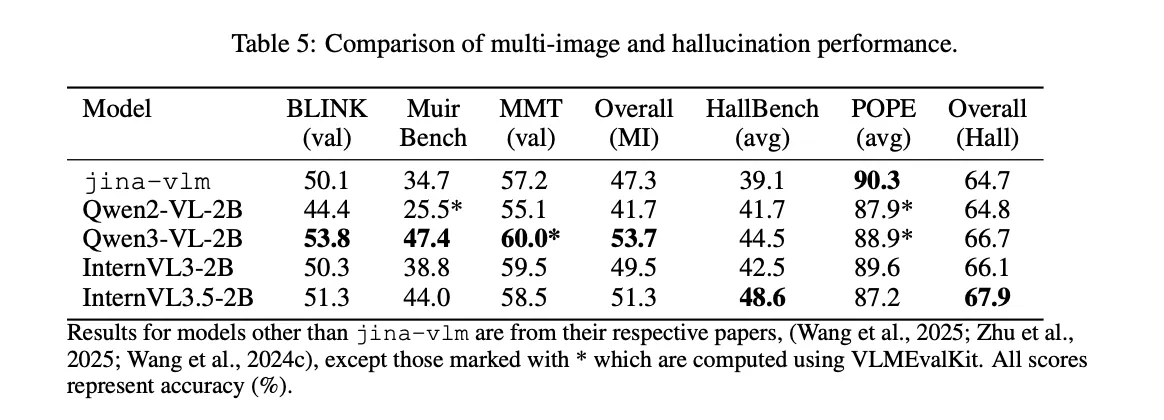

Multi picture reasoning is a weaker space. On BLINK, MuirBench and MMT, Jina-VLM reaches a mean of 47.3. The analysis group level to restricted multi-image coaching knowledge as the explanation. In distinction, hallucination management is powerful. On the POPE benchmark, which measures object hallucination, the mannequin scores 90.3, the perfect rating within the comparability desk.

For mathematical and structured reasoning, the mannequin makes use of the identical structure, with out considering mode. It reaches 59.5 on MMMU and an total math rating of 33.3 throughout MathVista, MathVision, MathVerse, WeMath and LogicVista. Jina-VLM is akin to InternVL3-2B on this set and clearly forward of Qwen2-VL-2B, whereas InternVL3.5-2B stays stronger as a result of its bigger scale and extra specialised math coaching.

On pure textual content benchmarks, the image is combined. The analysis group stories that Jina-VLM retains many of the Qwen3-1.7B efficiency on MMLU, GSM 8K, ARC C and HellaSwag. Nonetheless, MMLU-Professional drops from 46.4 for the bottom mannequin to 30.3 after multimodal tuning. The analysis group attribute this to instruction tuning that pushes the mannequin towards very quick solutions, which clashes with the lengthy multi step reasoning required by MMLU Professional.

The principle spotlight is multilingual multimodal understanding. On MMMB throughout Arabic, Chinese language, English, Portuguese, Russian and Turkish, Jina-VLM reaches a mean of 78.8. On Multilingual MMBench throughout the identical languages, it reaches 74.3. The analysis group stories these as state-of-the-art averages amongst open 2B scale VLMs.

Comparability Desk

| Mannequin | Params | VQA Avg | MMMB | Multi. MMB | DocVQA | OCRBench |

|---|---|---|---|---|---|---|

| Jina-VLM | 2.4B | 72.3 | 78.8 | 74.3 | 90.6 | 778 |

| Qwen2-VL-2B | 2.1B | 66.4 | 71.3 | 69.4 | 89.2 | 809 |

| Qwen3-VL-2B | 2.8B | 71.6 | 75.0 | 72.3 | 92.3 | 858 |

| InternVL3-2B | 2.2B | 69.2 | 73.6 | 71.9 | 87.4 | 835 |

| InternVL3.5-2B | 2.2B | 71.6 | 74.6 | 70.9 | 88.5 | 836 |

Key Takeaways

- Jina-VLM is a 2.4B parameter VLM that {couples} SigLIP2 So400M as imaginative and prescient encoder with Qwen3-1.7B as language spine by way of an consideration pooling connector that cuts visible tokens by 4 instances whereas conserving spatial construction.

- The mannequin makes use of overlapping 378×378 tiles, 12 tiles plus a worldwide thumbnail, to deal with arbitrary decision photographs as much as roughly 4K, then feeds solely pooled visible tokens to the LLM which reduces prefill FLOPs and KV cache measurement by about 4 instances in comparison with naive patch token utilization.

- Coaching makes use of about 5M multimodal samples and 12B textual content tokens throughout practically 30 languages in a 2 stage pipeline, first alignment with caption type knowledge, then instruction high quality tuning with LLaVA OneVision, Cauldron, Cambrian, PangeaIns, FineVision and multilingual instruction units.

- On English VQA, Jina-VLM reaches 72.3 common throughout 8 VQA benchmarks, and on multilingual multimodal benchmarks it leads the open 2B scale class with 78.8 on MMMB and 74.3 on Multilingual MMBench whereas conserving aggressive textual content solely efficiency.

Take a look at the Paper, Mannequin on HF and Technical particulars. Be at liberty to take a look at our GitHub Web page for Tutorials, Codes and Notebooks. Additionally, be at liberty to comply with us on Twitter and don’t neglect to affix our 100k+ ML SubReddit and Subscribe to our E-newsletter. Wait! are you on telegram? now you possibly can be part of us on telegram as nicely.

Asif Razzaq is the CEO of Marktechpost Media Inc.. As a visionary entrepreneur and engineer, Asif is dedicated to harnessing the potential of Synthetic Intelligence for social good. His most up-to-date endeavor is the launch of an Synthetic Intelligence Media Platform, Marktechpost, which stands out for its in-depth protection of machine studying and deep studying information that’s each technically sound and simply comprehensible by a large viewers. The platform boasts of over 2 million month-to-month views, illustrating its reputation amongst audiences.