Chinese AI startup DeepSeek has released DeepSeek-V3.1, its newest flagship language model. It builds on the architecture of DeepSeek-V3 and adds significant improvements to reasoning, tool use, and coding performance. DeepSeek models have quickly gained a reputation for delivering OpenAI- and Anthropic-level performance at a fraction of the cost.

Model Architecture and Capabilities

- Hybrid Thinking Mode: DeepSeek-V3.1 supports both thinking (deliberative, chain-of-thought reasoning) and non-thinking (direct response) generation in a single model, switchable via the chat template. This is a departure from earlier releases, where reasoning and direct chat were served by separate models (DeepSeek-R1 and DeepSeek-V3), and it offers flexibility for varied use cases.

- Tool and Agent Support: The model has been optimized for tool calling and agent tasks (e.g., calling APIs, executing code, searching the web). Tool calls use a structured format, and the model supports custom code agents and search agents, with detailed templates provided in the repository.

- Massive Scale, Efficient Activation: The model has 671B total parameters, of which 37B are activated per token. This Mixture-of-Experts (MoE) design lowers inference cost while preserving capacity. The context window is 128K tokens, larger than that of many competing models.

- Long-Context Extension: DeepSeek-V3.1 uses a two-phase long-context extension approach. The first phase (32K) was trained on 630B tokens (10x more than V3), and the second phase (128K) on 209B tokens (3.3x more than V3). The model is trained with FP8 microscaling for efficient arithmetic on next-generation hardware.

- Chat Template: The template supports multi-turn conversations with dedicated tokens for system prompts, user queries, and assistant responses. The thinking and non-thinking modes are selected by the `<think>` and `</think>` tokens in the prompt sequence.
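To make the mode switch concrete, the sketch below assembles a prompt prefix in which the only difference between the two modes is whether the assistant turn opens with `<think>` (thinking) or `</think>` (non-thinking). The special-token spellings and the rule that earlier assistant turns keep only their final answer after `</think>` are assumptions based on the layout shown in the model card; verify them against the tokenizer configuration shipped with the weights. `build_prompt` is a hypothetical helper, not part of any DeepSeek library.

```python
# Special-token spellings are ASSUMPTIONS based on the published model card;
# check them against the tokenizer config that ships with the weights.
BOS = "<｜begin▁of▁sentence｜>"
USER = "<｜User｜>"
ASSISTANT = "<｜Assistant｜>"
END = "<｜end▁of▁sentence｜>"


def build_prompt(system: str, turns: list[tuple[str, str]], query: str, thinking: bool) -> str:
    """Hypothetical helper that assembles a multi-turn prompt prefix.

    `turns` holds earlier (user, assistant) exchanges. Earlier assistant
    turns are assumed to keep only their final answer, placed after `</think>`.
    The new assistant turn opens with `<think>` for thinking mode or
    `</think>` for non-thinking mode.
    """
    parts = [BOS, system]
    for user_msg, assistant_msg in turns:
        parts.append(f"{USER}{user_msg}{ASSISTANT}</think>{assistant_msg}{END}")
    parts.append(f"{USER}{query}{ASSISTANT}")
    parts.append("<think>" if thinking else "</think>")
    return "".join(parts)


# Same conversation, two modes: only the final token differs.
print(build_prompt("You are a helpful assistant.", [], "Explain MoE briefly.", thinking=True))
print(build_prompt("You are a helpful assistant.", [], "Explain MoE briefly.", thinking=False))
```

Keeping the mode decision in a single boolean makes it easy to route latency-sensitive requests to non-thinking mode without maintaining a second template.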

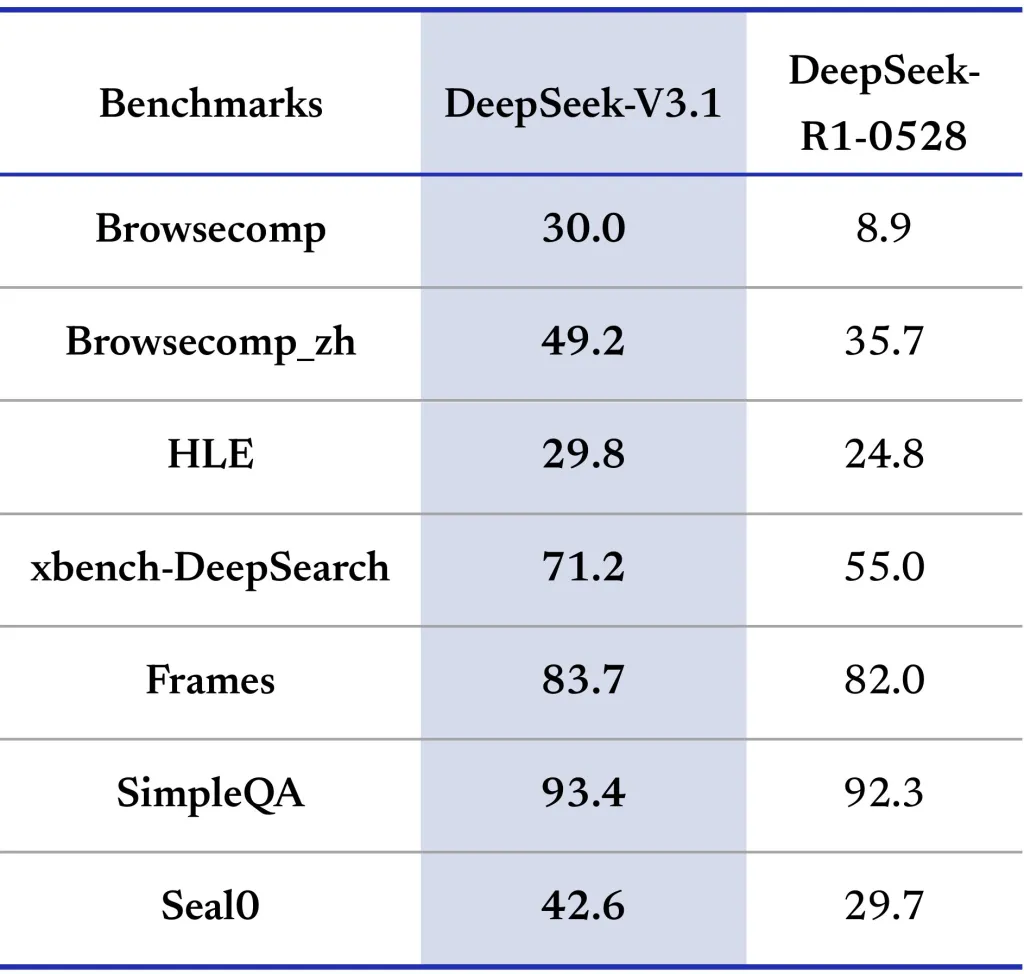
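The "37B activated per token" figure comes from MoE routing: a router scores every expert, but only the top-k experts actually run for each token, so per-token compute tracks active rather than total parameters. The sketch below is a generic top-k softmax gate in plain Python. The expert count, k, scores, and scalar "experts" are illustrative assumptions; the source does not describe DeepSeek-V3.1's actual router, and this is not its implementation.

```python
import math


def top_k_route(scores: list[float], k: int) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their scores with a softmax."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    m = max(scores[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(scores[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x: float, experts, router_scores: list[float], k: int) -> float:
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_scores, k))


# Toy setup (illustrative numbers only): 8 scalar "experts", 2 active per token.
experts = [lambda x, s=s: s * x for s in range(8)]
scores = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]
print(top_k_route(scores, k=2))  # experts 4 and 1 are selected
print(moe_forward(1.0, experts, scores, k=2))

# Share of parameters active per token, using the published figures.
print(f"{37 / 671:.1%}")  # 5.5%
```

With the published figures, roughly 5.5% of the weights take part in each token's forward pass, which is where the inference savings come from.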

Performance Benchmarks

DeepSeek-V3.1 is evaluated across a range of benchmarks (see the table below), covering general knowledge, coding, math, tool use, and agent tasks. Highlights:

| Metric | V3.1-NonThinking | V3.1-Pondering | Opponents |

|---|---|---|---|

| MMLU-Redux (EM) | 91.8 | 93.7 | 93.4 (R1-0528) |

| MMLU-Professional (EM) | 83.7 | 84.8 | 85.0 (R1-0528) |

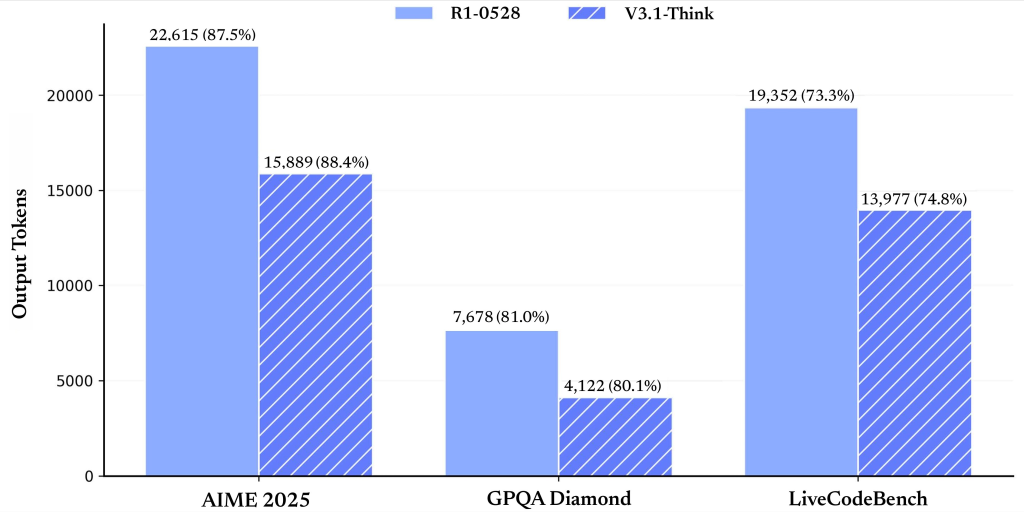

| GPQA-Diamond (Move@1) | 74.9 | 80.1 | 81.0 (R1-0528) |

| LiveCodeBench (Move@1) | 56.4 | 74.8 | 73.3 (R1-0528) |

| AIMÉ 2025 (Move@1) | 49.8 | 88.4 | 87.5 (R1-0528) |

| SWE-bench (Agent mode) | 54.5 | — | 30.5 (R1-0528) |

In thinking mode, V3.1 matches or exceeds DeepSeek-R1-0528 on MMLU-Redux, LiveCodeBench, and AIME 2025, and trails it by less than one point on MMLU-Pro and GPQA-Diamond. Non-thinking mode is faster but noticeably less accurate on reasoning-heavy tasks (49.8 vs. 88.4 on AIME 2025), which makes it best suited to latency-sensitive applications.
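The comparison can be checked with a few subtractions. The short script below recomputes, from the table's own numbers, how much thinking mode gains over non-thinking mode and how it compares with R1-0528. SWE-bench is left out because no thinking-mode score is reported.

```python
# Scores copied from the benchmark table: (non-thinking, thinking, R1-0528).
RESULTS = {
    "MMLU-Redux": (91.8, 93.7, 93.4),
    "MMLU-Pro": (83.7, 84.8, 85.0),
    "GPQA-Diamond": (74.9, 80.1, 81.0),
    "LiveCodeBench": (56.4, 74.8, 73.3),
    "AIME 2025": (49.8, 88.4, 87.5),
}


def gaps(results: dict[str, tuple[float, float, float]]) -> dict[str, tuple[float, float]]:
    """Return {benchmark: (thinking - non_thinking, thinking - r1)}, rounded to 0.1."""
    return {
        name: (round(think - fast, 1), round(think - r1, 1))
        for name, (fast, think, r1) in results.items()
    }


for name, (gain, vs_r1) in gaps(RESULTS).items():
    # A positive vs_r1 means V3.1 in thinking mode beats R1-0528 on that benchmark.
    print(f"{name:<14} thinking gain {gain:+5.1f}   vs R1-0528 {vs_r1:+5.1f}")
```

The largest thinking-mode gains are on AIME 2025 and LiveCodeBench, the same benchmarks where V3.1 edges past R1-0528.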

Tool and Code Agent Integration

- Tool Calling: Structured tool invocations are supported in non-thinking mode, enabling scriptable workflows with external APIs and services.

- Code Agents: Developers can build custom code agents by following the provided trajectory templates, which specify the interaction protocol for code generation, execution, and debugging.

- Search Agents: DeepSeek-V3.1 can call external search tools to retrieve up-to-date information, a capability that matters for business, finance, and technical research applications.
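A tool-calling integration typically parses the structured calls out of the model's raw output and routes each one to a local function, then feeds the results back as the next turn. The sketch below shows that dispatch step. The delimiter strings are assumptions modeled on the format described in the DeepSeek-V3.1 model card and should be checked against the official chat template; `get_weather` is a purely hypothetical tool.

```python
import json
import re

# Delimiters are ASSUMPTIONS modeled on the model card's tool-call format;
# verify them against the official chat template before relying on them.
CALLS_BEGIN = "<｜tool▁calls▁begin｜>"
CALLS_END = "<｜tool▁calls▁end｜>"
CALL_RE = re.compile(
    re.escape("<｜tool▁call▁begin｜>") + r"(.*?)" + re.escape("<｜tool▁sep｜>")
    + r"(.*?)" + re.escape("<｜tool▁call▁end｜>"),
    re.DOTALL,
)


def parse_tool_calls(output: str) -> list[tuple[str, dict]]:
    """Extract (tool_name, arguments) pairs from a raw model completion."""
    start = output.find(CALLS_BEGIN)
    if start == -1:
        return []  # plain-text answer, no tool use requested
    end = output.find(CALLS_END, start)
    block = output[start + len(CALLS_BEGIN): end if end != -1 else len(output)]
    return [(name.strip(), json.loads(args)) for name, args in CALL_RE.findall(block)]


def dispatch(output: str, tools: dict) -> list:
    """Run each requested tool and collect results to send back to the model."""
    return [tools[name](**args) for name, args in parse_tool_calls(output)]


def get_weather(city: str) -> str:
    """Hypothetical local tool used only for illustration."""
    return f"Sunny in {city}"


raw = (
    CALLS_BEGIN
    + '<｜tool▁call▁begin｜>get_weather<｜tool▁sep｜>{"city": "Paris"}<｜tool▁call▁end｜>'
    + CALLS_END
)
print(dispatch(raw, {"get_weather": get_weather}))  # ['Sunny in Paris']
```

A code or search agent wraps this step in a loop: send the prompt, dispatch any tool calls, append the results, and repeat until the model answers in plain text.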

Deployment

- Open Source, MIT License: All model weights and code are freely available on Hugging Face and ModelScope under the MIT license, which permits both research and commercial use.

- Local Inference: The model structure is the same as DeepSeek-V3, and detailed instructions for local deployment are provided. Running it requires substantial GPU resources because of the model's scale, but the open ecosystem and community tooling lower the barrier to adoption.
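A back-of-the-envelope estimate shows why local inference is demanding. Although only 37B parameters are active per token, an MoE model generally needs all 671B resident in memory, since any expert may be selected. The sketch below computes a weights-only lower bound, assuming 1 byte per parameter for FP8 and 2 for BF16, and a hypothetical 80 GB of memory per GPU. It ignores the KV cache, activations, and framework overhead, so real requirements are higher.

```python
import math

TOTAL_PARAMS = 671e9  # total parameter count from the article


def weight_memory_gb(total_params: float, bytes_per_param: float) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes); excludes KV cache and activations."""
    return total_params * bytes_per_param / 1e9


def min_gpus(total_gb: float, gpu_gb: float = 80.0) -> int:
    """Lower bound on GPU count; 80 GB per GPU is an illustrative assumption."""
    return math.ceil(total_gb / gpu_gb)


for fmt, nbytes in [("FP8", 1), ("BF16", 2)]:
    gb = weight_memory_gb(TOTAL_PARAMS, nbytes)
    print(f"{fmt}: ~{gb:,.0f} GB of weights, at least {min_gpus(gb)} x 80 GB GPUs")
# FP8: ~671 GB of weights, at least 9 x 80 GB GPUs
# BF16: ~1,342 GB of weights, at least 17 x 80 GB GPUs
```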

Summary

DeepSeek-V3.1 represents a milestone in the democratization of advanced AI, demonstrating that a language model can be open source, cost-efficient, and highly capable at the same time. Its combination of scalable reasoning, tool integration, and strong performance on coding and math tasks makes it a practical choice for both research and applied AI development.
