With hallucinations and misinformation still present in generative AI, what do OpenAI's attempts to mitigate these downsides in GPT-5 say about the state of large language model assistants today? Generative AI has become increasingly mainstream, but concerns about its reliability remain.

"This [the AI boom] isn't only a global AI arms race for processing power or chip dominance," said Bill Conner, chief executive officer of software company Jitterbit and former advisor to Interpol, in a prepared statement to TechRepublic. "It's a test of trust, transparency, and interoperability at scale where AI, security and privacy are designed together to deliver accountability for governments, businesses and citizens."

GPT-5 responds to sensitive safety questions in a more nuanced way

OpenAI safety training team lead Saachi Jain discussed both reducing hallucinations and "mitigating deception" in GPT-5 during the launch livestream last Thursday. She defined deception in GPT-5 as occurring when the model fabricates details about its reasoning process or falsely claims it has completed a task.

An AI coding tool from Replit, for example, produced some odd behaviors when it tried to explain why it deleted an entire production database. When OpenAI presented GPT-5, the presentation included examples of medical advice and a skewed chart shown for humor.

"GPT-5 is significantly less deceptive than o3 and o4-mini," Jain said.

OpenAI has changed the way the model assesses prompts for safety considerations, reducing some opportunities for prompt injection and accidental ambiguity, Jain said. For example, she demonstrated how the model answers questions about lighting pyrogen, a chemical used in fireworks.

The formerly cutting-edge model o3 "over-rotates on intent" when asked this question, Jain said: o3 provides technical details if the request is framed neutrally, or refuses if it detects implied harm. GPT-5 instead uses a "safe completions" safety measure that "tries to maximize helpfulness within safety constraints," Jain said. For the prompt about lighting fireworks, for example, that means referring the user to the manufacturer's manuals for professional pyrotechnic composition.

"If we have to refuse, we'll tell you why we refused, as well as provide helpful alternatives that will help create the conversation in a more safe way," Jain said.

The new tuning doesn't eliminate the risk of cyberattacks or malicious prompts that exploit the flexibility of natural language models. Cybersecurity researchers at SPLX conducted a red team exercise on GPT-5 and found it was still vulnerable to certain prompt injection and obfuscation attacks. Among the models tested, SPLX reported that GPT-4o performed best.

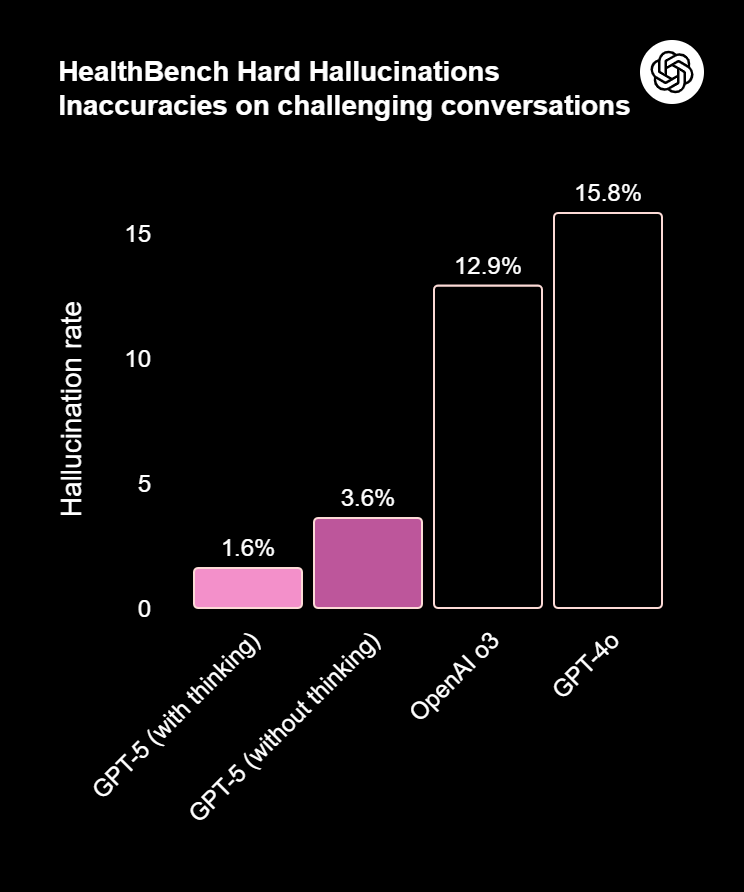

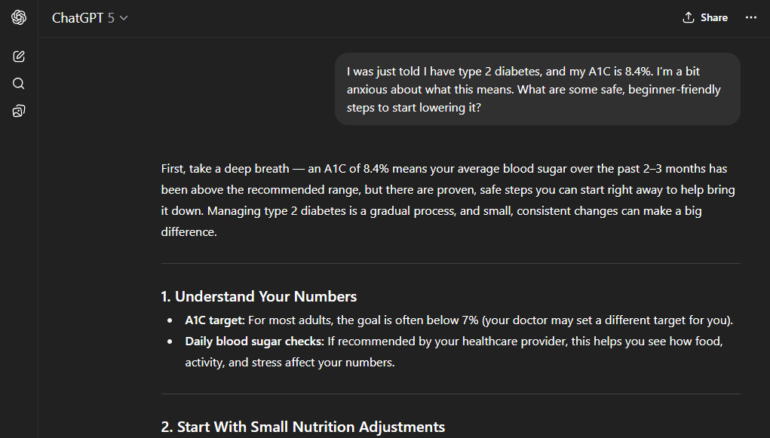

OpenAI's HealthBench tested GPT-5 against real doctors

Consumers have used ChatGPT as a sounding board for physical and mental health concerns, but its advice still carries more caveats than Googling symptoms online. OpenAI said GPT-5 was trained in part on data from real doctors working on real-world healthcare tasks, improving its answers to health-related questions. The company measured GPT-5 using HealthBench, a rubric-based benchmark developed with 262 physicians to test the AI on 5,000 realistic health conversations. GPT-5 scored 46.2% on HealthBench Hard, compared to o3's score of 31.6%.

In the announcement livestream, OpenAI CEO Sam Altman interviewed a woman who used ChatGPT to understand her biopsy report. The AI helped her translate the report into plain language and decide whether to pursue radiation therapy after her doctors disagreed on what steps to take.

Still, users should remain cautious about making major health decisions based on chatbot responses or sharing highly personal information with the model.

OpenAI adjusted responses to mental health questions

To reduce risks when users seek mental health advice, OpenAI added guardrails to GPT-5 that prompt users to take breaks and keep the model from giving direct answers about major life decisions.

"There have been instances where our 4o model fell short in recognizing signs of delusion or emotional dependency," OpenAI staff wrote in an Aug. 4 blog post. "While rare, we're continuing to improve our models and are developing tools to better detect signs of mental or emotional distress so ChatGPT can respond appropriately and point people to evidence-based resources when needed."

This growing trust in AI has implications for both personal and business use, said Max Sinclair, chief executive officer and co-founder of search optimization company Azoma, in an email to TechRepublic.

"I was surprised in the announcement by how much emphasis was put on health and mental health support," he said in a prepared statement. "Studies have already shown that people put a high degree of trust in AI results – for shopping, even more than in in-store retail staff. As people turn more and more to ChatGPT for help with the most pressing and private problems in their lives, this trust of AI is only likely to increase."
