Estimated reading time: 4 minutes

The paper “A Survey of Context Engineering for Large Language Models” establishes Context Engineering as a formal discipline that goes far beyond prompt engineering, providing a unified, systematic framework for designing, optimizing, and managing the information that guides Large Language Models (LLMs). Here’s an overview of its main contributions and framework:

What Is Context Engineering?

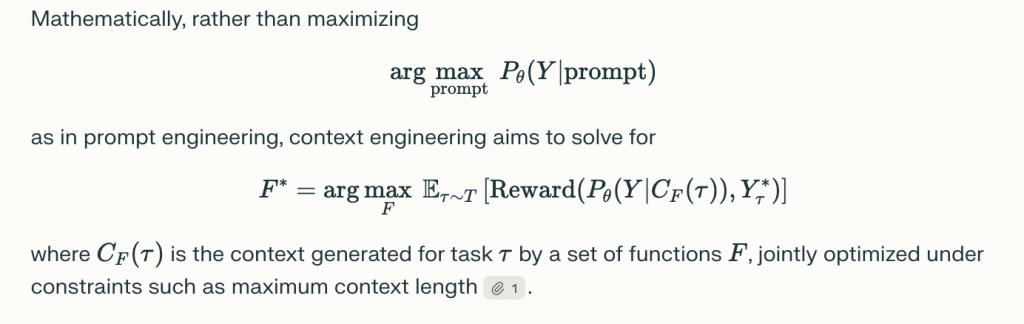

Context Engineering is defined as the science and engineering of organizing, assembling, and optimizing all forms of context fed into LLMs to maximize performance across comprehension, reasoning, adaptability, and real-world application. Rather than viewing context as a static string (the premise of prompt engineering), context engineering treats it as a dynamic, structured assembly of components, each sourced, selected, and organized through explicit functions, often under tight resource and architectural constraints.
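
As a minimal sketch of this view, the snippet below treats context as a list of components that an assembly function selects and orders under a budget. The names `Component` and `assemble`, the priority field, and the word-count stand-in for a tokenizer are illustrative assumptions, not definitions taken from the paper.

```python
from dataclasses import dataclass

@dataclass
class Component:
    name: str       # e.g. "instructions", "retrieved", "history"
    text: str
    priority: int   # lower number = more important (hypothetical scheme)

def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: whitespace-separated words.
    return len(text.split())

def assemble(components: list[Component], budget: int) -> str:
    """Greedily keep the highest-priority components that fit the budget."""
    chosen, used = [], 0
    for comp in sorted(components, key=lambda c: c.priority):
        cost = count_tokens(comp.text)
        if used + cost <= budget:
            chosen.append(comp)
            used += cost
    return "\n\n".join(f"[{c.name}]\n{c.text}" for c in chosen)

components = [
    Component("instructions", "Answer concisely and cite sources.", 0),
    Component("retrieved", "Doc A says the limit is 4096 tokens.", 1),
    Component("history", "Earlier the user asked about pricing tiers in detail.", 2),
]
context = assemble(components, budget=14)
print(context)
```

With a budget of 14 words, the instructions and the retrieved passage fit and the lower-priority history is dropped. A real system would likely compress or summarize the history rather than discard it.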

Taxonomy of Context Engineering

The paper breaks down context engineering into:

1. Foundational Components

a. Context Retrieval and Generation

- Encompasses prompt engineering, in-context learning (zero/few-shot, chain-of-thought, tree-of-thought, graph-of-thought), external knowledge retrieval (e.g., Retrieval-Augmented Generation, knowledge graphs), and dynamic assembly of context elements.

- Techniques like the CLEAR Framework, dynamic template assembly, and modular retrieval architectures are highlighted.
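
To make few-shot in-context learning and template assembly concrete, here is a small sketch. The function `build_few_shot_prompt`, the `Q:`/`A:` layout, and the step-by-step cue are assumptions chosen for illustration; the paper does not prescribe a particular template.

```python
def build_few_shot_prompt(instruction, examples, query, chain_of_thought=False):
    """Assemble an instruction, worked examples, and the new query into one prompt."""
    parts = [instruction]
    for question, answer in examples:
        parts.append(f"Q: {question}\nA: {answer}")
    # A step-by-step cue is one common (assumed) way to elicit chain-of-thought.
    cue = "A: Let's think step by step." if chain_of_thought else "A:"
    parts.append(f"Q: {query}\n{cue}")
    return "\n\n".join(parts)

prompt = build_few_shot_prompt(
    "Classify the sentiment as positive or negative.",
    [("I loved this film.", "positive"), ("The plot was dull.", "negative")],
    "The acting was superb.",
)
print(prompt)
```

Passing an empty example list gives the zero-shot case, and `chain_of_thought=True` switches the final cue without changing the rest of the template.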

b. Context Processing

- Addresses long-sequence processing (with architectures like Mamba, LongNet, FlashAttention), context self-refinement (iterative feedback, self-evaluation), and integration of multimodal and structured information (vision, audio, graphs, tables).

- Techniques include attention sparsity, memory compression, and in-context learning meta-optimization.
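
One simple form of attention sparsity is a causal sliding window, sketched below in plain Python. This is a generic pattern shown for illustration only; it is not the specific mechanism of any architecture named above, and `sliding_window_mask` is a hypothetical helper.

```python
def sliding_window_mask(seq_len: int, window: int) -> list[list[bool]]:
    """mask[i][j] is True when query position i may attend to key position j.

    Causal local attention: each token sees itself and the previous
    `window - 1` tokens.
    """
    return [
        [0 <= i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]

mask = sliding_window_mask(seq_len=6, window=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))

dense = 6 * 6
sparse = sum(sum(row) for row in mask)
print(f"attended pairs: {sparse} of {dense}")
```

The number of attended pairs grows roughly linearly with sequence length for a fixed window, instead of quadratically as in dense attention.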

c. Context Management

- Involves memory hierarchies and storage architectures (short-term context windows, long-term memory, external databases), memory paging, context compression (autoencoders, recurrent compression), and scalable management over multi-turn or multi-agent settings.
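
The idea of a memory hierarchy with paging can be sketched as a bounded window that evicts older turns into an archive. The class `PagedMemory`, the word-count budget, and the keyword-based recall are assumptions made for this example and do not reproduce any particular system.

```python
from collections import deque

class PagedMemory:
    """Keeps recent turns in a bounded 'context window' and pages older ones out."""

    def __init__(self, window_tokens: int):
        self.window_tokens = window_tokens
        self.window = deque()   # short-term: turns currently in context
        self.archive = []       # long-term: evicted turns (stand-in for a database)

    @staticmethod
    def _tokens(text: str) -> int:
        return len(text.split())  # crude word count, not a real tokenizer

    def add(self, turn: str) -> None:
        self.window.append(turn)
        # Evict oldest turns until the window fits, always keeping the newest turn.
        while (sum(map(self._tokens, self.window)) > self.window_tokens
               and len(self.window) > 1):
            self.archive.append(self.window.popleft())

    def recall(self, keyword: str) -> list[str]:
        """Page archived turns back in by naive keyword match."""
        return [t for t in self.archive if keyword.lower() in t.lower()]

mem = PagedMemory(window_tokens=10)
for turn in ["My name is Ada.", "I work on compilers.", "What is a good parser library?"]:
    mem.add(turn)
print(list(mem.window))
print(mem.recall("name"))
```

A production design would more plausibly retrieve archived turns by embedding similarity and might summarize them on eviction; keyword matching keeps the sketch self-contained.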

2. System Implementations

a. Retrieval-Augmented Generation (RAG)

- Modular, agentic, and graph-enhanced RAG architectures integrate external knowledge and support dynamic, sometimes multi-agent retrieval pipelines.

- Enables both real-time knowledge updates and complex reasoning over structured databases/graphs.
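
A bare-bones retrieve-then-prompt loop looks roughly like the sketch below. Bag-of-words vectors stand in for a learned embedding model, the documents are invented toy data, and `embed`, `retrieve`, and `build_rag_prompt` are hypothetical names rather than any library's API.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    # Bag-of-words counts stand in for a learned embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def build_rag_prompt(query: str, docs: list[str], k: int = 1) -> str:
    passages = "\n".join(f"- {p}" for p in retrieve(query, docs, k))
    return f"Use the passages to answer.\n\nPassages:\n{passages}\n\nQuestion: {query}"

# Toy corpus invented for this example.
docs = [
    "The library opens at 9 am on weekdays.",
    "Parking is free for visitors after 6 pm.",
    "The cafe serves lunch from noon until 2 pm.",
]
rag_prompt = build_rag_prompt("When does the library open?", docs)
print(rag_prompt)
```

Because the corpus is consulted at query time, updating `docs` changes what the model sees without retraining, which is the property the real-time update point above refers to.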

b. Memory Systems

- Implement persistent and hierarchical storage, enabling longitudinal learning and knowledge recall for agents (e.g., MemGPT, MemoryBank, external vector databases).

- Key for extended, multi-turn dialogs, personalized assistants, and simulation agents.

c. Tool-Integrated Reasoning

- LLMs use external tools (APIs, search engines, code execution) via function calling or environment interaction, combining language reasoning with world-acting abilities.

- Enables new domains (math, programming, web interaction, scientific research).
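
Function calling usually reduces to parsing a structured request from the model and dispatching it to a registered function. The sketch below assumes a `{"name", "arguments"}` JSON shape and a hand-written `model_output` string; both are illustrative assumptions, not the format of any specific provider.

```python
import json

TOOLS = {}

def tool(fn):
    """Register a function so a model-issued call can reach it by name."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def add(a: float, b: float) -> float:
    return a + b

@tool
def word_count(text: str) -> int:
    return len(text.split())

def dispatch(tool_call_json: str) -> str:
    """Run one tool call and return a JSON result to place back in the context."""
    call = json.loads(tool_call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    try:
        return json.dumps({"result": fn(**call["arguments"])})
    except TypeError as exc:  # wrong or missing arguments
        return json.dumps({"error": str(exc)})

# Hypothetical model output; the call shape is an assumption.
model_output = '{"name": "add", "arguments": {"a": 2, "b": 3}}'
print(dispatch(model_output))
```

Returning errors as data rather than raising lets the result be fed back into the context so the model can correct its next call.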

d. Multi-Agent Systems

- Coordination among multiple LLMs (agents) via standardized protocols, orchestrators, and context sharing, which is essential for complex, collaborative problem-solving and distributed AI applications.
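
The orchestrator-plus-shared-context pattern can be sketched with agents modeled as plain functions. The `researcher` and `writer` stubs below return canned strings in place of real LLM calls, and the sequential `orchestrate` loop is only one assumed coordination scheme among many.

```python
from typing import Callable

# An agent is modeled as a function from the shared context to a new message.
Agent = Callable[[list[str]], str]

def researcher(shared: list[str]) -> str:
    # Stub: a real agent would call an LLM with the shared context.
    return f"researcher: found 2 sources for '{shared[0]}'"

def writer(shared: list[str]) -> str:
    return f"writer: drafted summary from {len(shared) - 1} prior message(s)"

def orchestrate(task: str, agents: list[Agent]) -> list[str]:
    """Run agents in sequence; each one reads and appends to a shared context."""
    shared = [task]
    for agent in agents:
        shared.append(agent(shared))
    return shared

transcript = orchestrate("survey context engineering", [researcher, writer])
for line in transcript:
    print(line)
```

Each agent sees everything written before it, so the shared list plays the role of the common context that a coordination protocol would manage.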

Key Insights and Research Gaps

- Comprehension–Generation Asymmetry: LLMs, with advanced context engineering, can comprehend very sophisticated, multi-faceted contexts but still struggle to generate outputs matching that complexity or length.

- Integration and Modularity: Best performance comes from modular architectures combining multiple techniques (retrieval, memory, tool use).

- Evaluation Limitations: Current evaluation metrics/benchmarks (like BLEU, ROUGE) often fail to capture the compositional, multi-step, and collaborative behaviors enabled by advanced context engineering. New benchmarks and dynamic, holistic evaluation paradigms are needed.

- Open Research Questions: Theoretical foundations, efficient scaling (especially computationally), cross-modal and structured context integration, real-world deployment, safety, alignment, and ethical concerns remain open research challenges.
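
The evaluation point above can be illustrated with a toy overlap metric. The function below is a simplified ROUGE-1-style F1 (no stemming, no official tokenization), written for this example rather than taken from a reference implementation, and the three plan strings are invented.

```python
import re
from collections import Counter

def unigram_f1(candidate: str, reference: str) -> float:
    """ROUGE-1-style F1 over lowercase word counts (simplified; no stemming)."""
    cand = Counter(re.findall(r"\w+", candidate.lower()))
    ref = Counter(re.findall(r"\w+", reference.lower()))
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)

reference = "first search the index then call the calculator"
wrong_order = "first call the calculator then search the index"
paraphrase = "query the index before invoking the calculator"

print(unigram_f1(wrong_order, reference))  # same words, steps swapped
print(unigram_f1(paraphrase, reference))   # same plan, different words
```

The plan with the steps in the wrong order gets a perfect score of 1.0 because it uses the same words, while the correctly ordered paraphrase scores about 0.53. Word overlap alone cannot tell whether a multi-step procedure was carried out in the right sequence.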

Applications and Impact

Context engineering supports robust, domain-adaptive AI across:

- Long-document/question answering

- Personalized digital assistants and memory-augmented agents

- Scientific, medical, and technical problem-solving

- Multi-agent collaboration in business, education, and research

Future Directions

- Unified Theory: Developing mathematical and information-theoretic frameworks.

- Scaling & Efficiency: Innovations in attention mechanisms and memory management.

- Multi-Modal Integration: Seamless coordination of text, vision, audio, and structured data.

- Robust, Safe, and Ethical Deployment: Ensuring reliability, transparency, and fairness in real-world systems.

In summary: Context Engineering is emerging as the pivotal discipline for guiding the next generation of LLM-based intelligent systems, shifting the focus from creative prompt writing to the rigorous science of information optimization, system design, and context-driven AI.
