Amazon Web Services announced an AI chatbot for enterprise use, new generations of its AI training chips, expanded partnerships and more during AWS re:Invent, held from November 27 to December 1 in Las Vegas.

AWS CEO Adam Selipsky’s keynote, held on day two of the conference, focused on generative AI and on how organizations can train powerful models through cloud services.

Graviton4 and Trainium2 chips announced

AWS announced new generations of its Graviton chips, which are server processors for cloud workloads, and of Trainium, which provides compute power for AI foundation model training.

Graviton4 (Figure A) has 30% better compute performance, 50% more cores and 75% more memory bandwidth than Graviton3, Selipsky said. The first instances based on Graviton4 will be the R8g instances for EC2, aimed at memory-intensive workloads and available through AWS.

Trainium2 is coming to Amazon EC2 Trn2 instances, which are designed to scale up to 100,000 Trainium2 chips in EC2 UltraClusters. That provides the ability to train a 300-billion parameter large language model in weeks, AWS stated in a press release.

Figure A

Anthropic will use Trainium and Amazon’s high-performance machine learning chip Inferentia for its AI models, Selipsky and Dario Amodei, chief executive officer and co-founder of Anthropic, announced. These chips may help Amazon muscle into Microsoft’s space in the AI chip market.

Amazon Bedrock: Content guardrails and other features added

Selipsky made several announcements about Amazon Bedrock, the foundation model building service, during re:Invent:

- Agents for Amazon Bedrock are generally available today.

- Custom models built with bespoke fine-tuning and continued pretraining are open in preview for customers in the U.S. today.

- Guardrails for Amazon Bedrock are coming soon; Guardrails lets organizations conform Bedrock to their own AI content limitations using a natural language wizard.

- Knowledge Bases for Amazon Bedrock, which connect foundation models in Amazon Bedrock to internal company data for retrieval-augmented generation, are now generally available in the U.S.

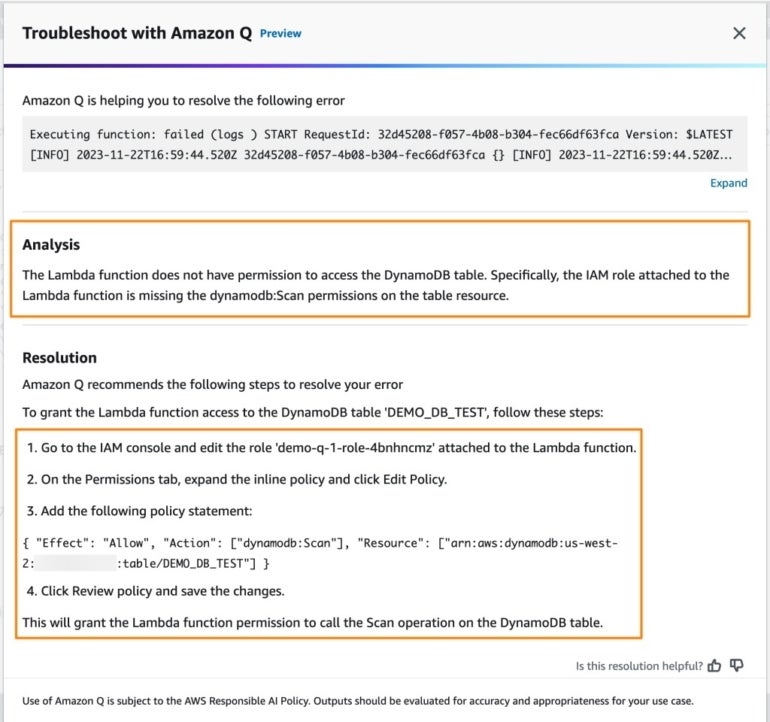

Amazon Q: Amazon enters the chatbot race

Amazon launched its own generative AI assistant, Amazon Q, designed for natural language interactions and content generation for work. It can fit into an enterprise’s existing identities, roles and security permissions.

Amazon Q can be used throughout an organization and can access a wide range of other business software. Amazon is pitching Amazon Q as business-focused and specialized for individual employees who may ask specific questions about their sales or tasks.

Amazon Q is especially suited to developers and IT professionals working within AWS CodeCatalyst because it can help troubleshoot errors or network connections. Amazon Q will exist in the AWS Management Console and documentation, within CodeWhisperer, in the serverless computing platform AWS Lambda, or in workplace communication apps like Slack (Figure B).

Figure B

Amazon Q has a feature that allows application developers to update their applications using natural language instructions. This feature is available in preview in AWS CodeCatalyst today and will soon be coming to supported integrated development environments.

SEE: Data governance is one of the many factors that need to be considered during generative AI deployment. (TechRepublic)

Many Amazon Q features within other Amazon services and products are available in preview today. For example, contact center administrators can access Amazon Q in Amazon Connect now.

Amazon S3 Express One Zone opens its doors

Amazon S3 Express One Zone, now in general availability, is a new S3 storage class purpose-built for high-performance, low-latency cloud object storage for frequently accessed data, Selipsky said. It’s designed for workloads that require single-digit millisecond latency, such as finance or machine learning. Today, customers move data from S3 to custom caching solutions; with Amazon S3 Express One Zone, they can choose their own geographical availability zone and bring their frequently accessed data next to their high-performance computing. Selipsky said Amazon S3 Express One Zone can be run with 50% lower access costs than standard Amazon S3.

Salesforce now available on AWS Marketplace

On Nov. 27, AWS announced that Salesforce’s partnership with Amazon will expand to certain Salesforce CRM products accessed on AWS Marketplace. Specifically, Salesforce’s Data Cloud, Service Cloud, Sales Cloud, Industry Clouds, Tableau, MuleSoft, Platform and Heroku will be available for joint customers of Salesforce and AWS in the U.S. More products are expected to become available, and the geographical availability is expected to expand next year.

New options include:

- The Amazon Bedrock AI service will be available within Salesforce’s Einstein Trust Layer.

- Salesforce Data Cloud will support data sharing across AWS technologies including Amazon Simple Storage Service.

“Salesforce and AWS make it easy for developers to securely access and leverage data and generative AI technologies to drive rapid transformation for their organizations and industries,” Selipsky said in a press release.

Conversely, AWS will be using Salesforce products such as Salesforce Data Cloud more often internally.

Amazon removes ETL from more Amazon Redshift integrations

ETL (extract, transform, load) can be a cumbersome part of coding with transactional data. Last year, Amazon announced a zero-ETL integration between Amazon Aurora MySQL and Amazon Redshift.

Today, AWS introduced more zero-ETL integrations with Amazon Redshift:

- Aurora PostgreSQL

- Amazon RDS for MySQL

- Amazon DynamoDB

All three are available globally in preview now.

Amazon’s next goal was to make search in transactional data smoother; many people use Amazon OpenSearch Service for this. In response, Amazon announced that DynamoDB zero-ETL integration with OpenSearch Service is available today.

Plus, in an effort to make data more discoverable in Amazon DataZone, Amazon added a new capability that uses generative AI to add business descriptions to data sets.

Introducing Amazon One Enterprise authentication scanner

Amazon One Enterprise enables security management for access to physical locations in industries such as hospitality, education or technology. It’s a fully managed online service paired with the Amazon One palm scanner for biometric authentication, administered through the AWS Management Console. Amazon One Enterprise is currently available in preview in the U.S.

NVIDIA and AWS make cloud pact

NVIDIA announced a new set of GPUs available through AWS: the NVIDIA L4 GPUs, NVIDIA L40S GPUs and NVIDIA H200 GPUs. AWS will be the first cloud provider to bring the GH200 Grace Hopper Superchips with NVLink to the cloud. Through this link, the GPU and CPU can share memory to speed up processing, NVIDIA CEO Jensen Huang explained during Selipsky’s keynote. Amazon EC2 G6e instances featuring NVIDIA L40S GPUs and Amazon EC2 G6 instances powered by L4 GPUs will start to roll out in 2024.

In addition, NVIDIA DGX Cloud, NVIDIA’s AI building platform, is coming to AWS. An exact date for its availability hasn’t yet been announced.

NVIDIA brought on AWS as a primary partner in Project Ceiba, NVIDIA’s 65 exaflop supercomputer, which includes 16,384 NVIDIA GH200 Superchips.

NVIDIA NeMo Retriever

Another announcement made during re:Invent is NVIDIA NeMo Retriever, which allows enterprise customers to provide more accurate responses from their multimodal generative AI applications using retrieval-augmented generation.

Specifically, NVIDIA NeMo Retriever is a semantic-retrieval microservice that connects custom LLMs to applications. NVIDIA NeMo Retriever’s embedding models determine the semantic relationships between words. Then, that data is fed into an LLM, which processes and analyzes the textual data. Enterprise customers can connect that LLM to their own data sources and knowledge bases.
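For readers who want a concrete picture of the retrieval step, here is a minimal Python sketch of retrieval-augmented generation in general. It is not NVIDIA NeMo Retriever's API: the functions `embed`, `cosine`, `retrieve` and `build_prompt` and the `DOCS` list are hypothetical names invented for illustration, and a simple word-count vector stands in for a real embedding model.

```python
import math
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query, documents, k=1):
    """Prepend the retrieved context to the question before it goes to an LLM."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"

# Hypothetical knowledge base built from facts reported in this article.
DOCS = [
    "Graviton4 has 50% more cores than Graviton3",
    "S3 Express One Zone targets single-digit millisecond latency",
    "Project Ceiba includes 16,384 GH200 Superchips",
]

if __name__ == "__main__":
    print(build_prompt("how many cores does Graviton4 have", DOCS))
```

In a production system, the word-count vectors would be replaced by a trained embedding model and the finished prompt would be sent to an LLM; the sketch only shows how retrieved company data ends up in front of the model.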

NVIDIA NeMo Retriever is available in early access now through the NVIDIA AI Enterprise software platform, which can be accessed through the AWS Marketplace.

Early partners working with NVIDIA on retrieval-augmented generation services include Cadence, Dropbox, SAP and ServiceNow.

Note: TechRepublic is covering AWS re:Invent virtually.