You’ve probably heard or read people talking about ethical AI. While computers can’t actually think and thus have no actual bias or sense of responsibility, the people developing the software that powers all of it certainly do, and that’s what makes it important.

It isn’t an easy thing to describe or to notice. That doesn’t take anything away from how important it is that the big tech companies developing consumer-facing products powered by AI do it right. Let’s take a look at what it is and what’s being done to make sure AI makes our lives better instead of worse.

AI is nothing more than a piece of software that takes in something, processes it, and spits out something else. Really, that’s what all software does. What makes AI different is that the output can be wildly different from the input.


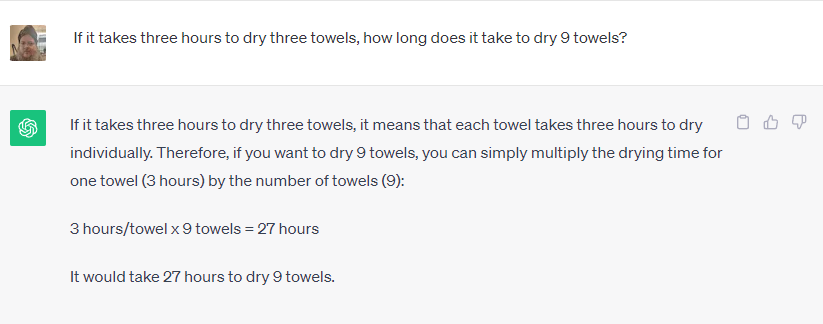

Take ChatGPT or Google Bard, for instance. You can ask either to solve math problems, look something up on the web, tell you what day Elvis Presley died, or write you a short story about the history of the Catholic church. Nobody would have thought you could use your phone for this a few years ago.

Some of these are pretty easy tasks. Software that’s integrated with the internet can fetch information about anything and regurgitate it as a response to a query. It’s great because it can save time, but it’s not really amazing.

One of those things is different, though. Software is used to create meaningful content that uses human mannerisms and speech, which isn’t something that can be looked up on Google. The software recognizes what you’re asking (write me a story) and the parameters to be used for it (historical data about the Catholic church), but the rest seems amazing.

Believe it or not, responsible development matters in both types of use. Software doesn’t magically decide anything; it has to be trained responsibly, using sources that are either unbiased (impossible) or equally biased from all sides.

You don’t want Bard to use someone who thinks Elvis is still alive as the sole source for the day he died. You also wouldn’t want someone who hates the Catholic church to program software used to write a story about it. Both points of view need to be considered and weighed against other valid points of view.

Ethics matter as much as keeping natural human bias in check. A company that can develop something needs to make sure it’s used responsibly. If it can’t do that, it needs to not develop it at all. That’s a slippery slope, and one that is a lot harder to manage.

The companies that develop intricate AI software are aware of this and, believe it or not, mostly follow the rule that some things just shouldn’t be made even if they can be made.

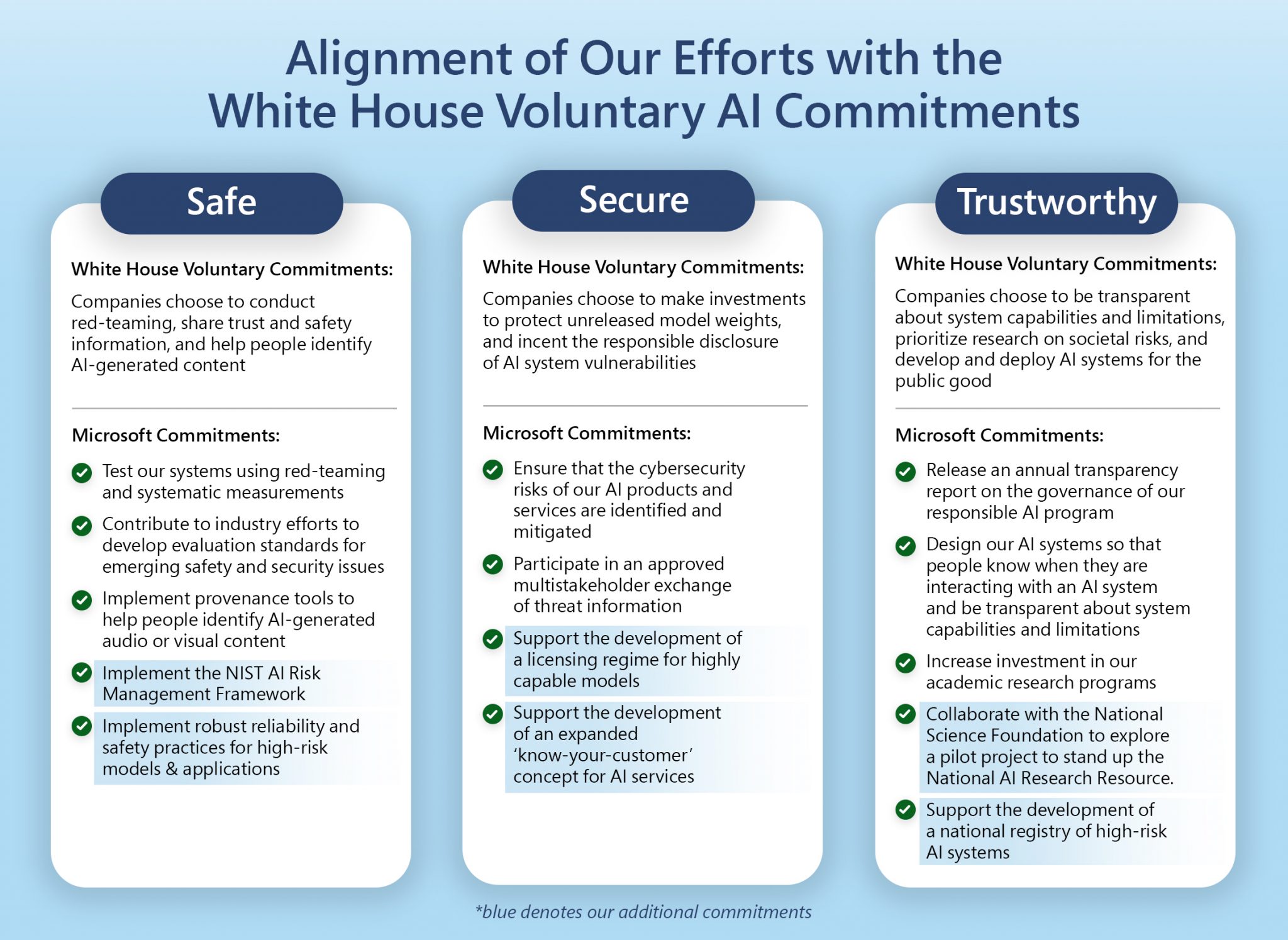

I reached out to both Microsoft and Google about what’s being done to make sure AI is used responsibly. Microsoft told me it didn’t have anything new to share but directed me to a series of corporate links and blog posts about the subject. Reading through them shows that Microsoft is committed to “ensure that advanced AI systems are safe, secure, and trustworthy” in accordance with current U.S. policy, and it outlines its internal standards in great detail. Microsoft currently has an entire team working on responsible AI.

In contrast, Google has plenty to say in addition to its already published statements. I recently attended a presentation about the subject where senior Google employees said the only AI race that matters is the race for responsible AI. They explained that just because something is technically possible isn’t a good enough reason to greenlight a product: harms need to be identified and mitigated as part of the development process so they can then be used to further refine that process.

Facial recognition and emotion detection were given as examples. Google is really good at both, and if you don’t believe that, take a look at Google Photos, where you can search for a specific person or for happy people in your own pictures. It’s also the number one feature requested by potential customers, but Google refuses to develop it further. The potential harm is enough for Google to refuse to take money for it.

Google, too, is in favor of both governmental regulation and internal regulation. That’s both surprising and important because a level playing field where no company can create harmful AI is something that both Google and Microsoft think outweighs any potential income or financial gain.

What impressed me the most was Google’s response when I asked about balancing accuracy and ethics. For something like plant identification, accuracy is very important while bias is less important. For something like identifying someone’s gender in a photo, both are equally important. Google breaks AI into what it calls slices; each slice does a different thing and the outcome is evaluated by a team of real people to check the results. Adjustments are made until the team is satisfied, then further development can happen.

None of this is perfect, and we’ve all seen AI do or repeat very stupid things, sometimes even hurtful things. If these biases and ethical breaches can find their way into one product, they can (and do) find their way into all of them.

What’s important is that the companies developing the software of the future recognize this and keep trying to improve the process. It will never be perfect, but as long as each iteration is better than the last, we’re moving in the right direction.