Walkthrough of neural network evolution (image by author).

Neural networks, the fundamental building blocks of artificial intelligence, have revolutionized the way we process information, offering a glimpse into the future of technology. These complex computational systems, inspired by the intricacies of the human brain, have become pivotal in tasks ranging from image recognition and natural language understanding to autonomous driving and medical diagnosis. As we explore the historical evolution of neural networks, we will uncover how they have evolved to shape the modern landscape of AI.

Neural networks, the foundational components of deep learning, owe their conceptual roots to the intricate biological networks of neurons within the human brain. This remarkable concept began with a fundamental analogy, drawing parallels between biological neurons and computational networks.

This analogy centers around the brain, which consists of roughly 100 billion neurons. Each neuron maintains about 7,000 synaptic connections with other neurons, creating a complex neural network that underlies human cognitive processes and decision making.

Individually, a biological neuron operates through a series of simple electrochemical processes. It receives signals from other neurons through its dendrites. When these incoming signals add up to a certain level (a predetermined threshold), the neuron switches on and sends an electrochemical signal along its axon. This, in turn, affects the neurons connected to its axon terminals. The key thing to note here is that a neuron’s response is like a binary switch: it either fires (activates) or stays quiet, without any in-between states.
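The all-or-nothing behavior described above can be sketched in a few lines of Python. This is only an illustration of the threshold idea, not a model of real neurophysiology; the function name `threshold_neuron` and the signal values are made up for the example.

```python
def threshold_neuron(signals, threshold):
    """Return 1 (fire) if the summed incoming signals reach the threshold, else 0 (stay quiet)."""
    return 1 if sum(signals) >= threshold else 0

# Hypothetical incoming signals arriving through the dendrites
quiet = threshold_neuron([0.2, 0.1, 0.3], threshold=1.0)  # total stays below the threshold
fired = threshold_neuron([0.5, 0.4, 0.3], threshold=1.0)  # total exceeds the threshold
```

There is no output between 0 and 1 here, which mirrors the binary switch in the paragraph above.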

Biological neurons were the inspiration for artificial neural networks (image: Wikipedia).

Artificial neural networks, as impressive as they are, remain a far cry from the intricacy and complexity of the human brain. Nonetheless, they have demonstrated significant prowess in addressing problems that are challenging for conventional computers but appear intuitive to human cognition. Some examples are image recognition and predictive analytics based on historical data.

Now that we have explored the foundational principles of how biological neurons function and how they inspired artificial neural networks, let’s journey through the evolution of the neural network frameworks that have shaped the landscape of artificial intelligence.

Feed forward neural networks (FFNNs), often referred to as multilayer perceptrons, are a fundamental type of neural network whose operation is deeply rooted in the principles of information flow, interconnected layers, and parameter optimization.

At their core, FFNNs orchestrate a unidirectional journey of information. It all begins with the input layer containing n neurons, where data is initially ingested. This layer serves as the entry point for the network, acting as a receptor for the input features that need to be processed. From there, the data embarks on a transformative voyage through the network’s hidden layers.

One important aspect of FFNNs is their fully connected structure, which means that each neuron in a layer is connected to every neuron in the next layer. This interconnectedness allows the network to perform computations and capture relationships within the data. It’s like a communication network where every node plays a role in processing information.

As the data passes through the hidden layers, it undergoes a series of calculations. Each neuron in a hidden layer receives inputs from all neurons in the previous layer, applies a weighted sum to these inputs, adds a bias term, and then passes the result through an activation function (commonly ReLU, sigmoid, or tanh). These mathematical operations enable the network to extract relevant patterns from the input and capture complex, nonlinear relationships within data. This is where FFNNs truly excel compared to shallower ML models.
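To make the weighted sum, bias, and activation concrete, here is a minimal sketch of a forward pass through one hidden layer and one output neuron. The weights and biases are arbitrary values picked for illustration, and `dense_layer` is a hypothetical helper name, not part of any library.

```python
import math

def relu(z):
    return max(0.0, z)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense_layer(inputs, weights, biases, activation):
    """Each neuron computes activation(weighted sum of all inputs + bias)."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(z))
    return outputs

x = [1.0, 2.0]  # two input features

# Hidden layer with two neurons (illustrative weights)
hidden = dense_layer(x, weights=[[0.5, -1.0], [1.0, 1.0]],
                     biases=[0.0, -1.0], activation=relu)

# Output layer with a single sigmoid neuron
output = dense_layer(hidden, weights=[[1.0, 0.5]],
                     biases=[-1.0], activation=sigmoid)
```

The first hidden neuron receives a negative weighted sum and is zeroed out by ReLU, which is exactly the kind of nonlinearity a purely linear model cannot express.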

Architecture of fully connected feed-forward neural networks (image by author).

However, that’s not where it ends. The real power of FFNNs lies in their ability to adapt. During training, the network adjusts its weights to minimize the difference between its predictions and the actual target values. This iterative process relies on backpropagation, which computes the gradient of the error with respect to each weight, together with an optimization algorithm such as gradient descent, which uses those gradients to update the weights. Backpropagation empowers FFNNs to truly learn from data and improve their accuracy in making predictions or classifications.
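As a rough sketch of this training loop, the snippet below fits a single sigmoid neuron with plain gradient descent on a squared-error loss. The dataset, learning rate, and number of epochs are assumptions chosen for the example; real networks repeat the same chain-rule step layer by layer.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_neuron(samples, epochs=200, lr=0.5):
    """Fit one weight and one bias by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    losses = []
    for _ in range(epochs):
        grad_w = grad_b = loss = 0.0
        for x, y in samples:
            p = sigmoid(w * x + b)             # forward pass
            loss += (p - y) ** 2
            delta = 2 * (p - y) * p * (1 - p)  # chain rule: d(loss)/d(weighted sum)
            grad_w += delta * x                # gradient with respect to the weight
            grad_b += delta                    # gradient with respect to the bias
        n = len(samples)
        w -= lr * grad_w / n                   # gradient descent update
        b -= lr * grad_b / n
        losses.append(loss / n)
    return w, b, losses

# Toy task: negative inputs belong to class 0, positive inputs to class 1
samples = [(-2.0, 0), (-1.0, 0), (1.0, 1), (2.0, 1)]
w, b, losses = train_neuron(samples)
```

Comparing `losses[0]` with `losses[-1]` shows the error shrinking as the weight is adjusted.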

Example KNIME workflow of an FFNN used for the binary classification of certification exams (pass vs. fail). In the upper branch, we can see the network architecture, which is made of an input layer, a fully connected hidden layer with a tanh activation function, and an output layer that uses a sigmoid activation function (image by author).

While powerful and versatile, FFNNs display some relevant limitations. For example, they fail to capture sequentiality and temporal/syntactic dependencies in the data, two crucial aspects for tasks in language processing and time series analysis. The need to overcome these limitations prompted the evolution of a new type of neural network architecture. This transition paved the way for Recurrent Neural Networks (RNNs), which introduced the concept of feedback loops to better handle sequential data.

At their core, RNNs share some similarities with FFNNs. They too are composed of layers of interconnected nodes, processing data to make predictions or classifications. However, their key differentiator lies in their ability to handle sequential data and capture temporal dependencies.

In an FFNN, information flows in a single, unidirectional path from the input layer to the output layer. This is suitable for tasks where the order of the data doesn’t matter much. However, when dealing with sequences like time series data, language, or speech, maintaining context and understanding the order of the data is crucial. This is where RNNs shine.

RNNs introduce the concept of feedback loops. These act as a sort of “memory” and allow the network to maintain a hidden state that captures information about previous inputs and influences how the current input is processed and what output is produced. While traditional neural networks assume that inputs and outputs are independent of each other, the output of a recurrent neural network depends on the prior elements within the sequence. This recurrent connection mechanism makes RNNs particularly fit to handle sequences by “remembering” past information.

Another distinguishing characteristic of recurrent networks is that they share the same weight parameters across all time steps within each layer of the network, and those weights are adjusted using the backpropagation through time (BPTT) algorithm, which differs slightly from traditional backpropagation because it is specific to sequence data.
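A minimal sketch of the recurrence, assuming a single recurrent unit with a tanh activation, might look as follows. The weights `w_x`, `w_h` and `b` are illustrative values, and the same three parameters are reused at every time step.

```python
import math

def rnn_forward(inputs, w_x, w_h, b, h0=0.0):
    """Run a one-unit RNN over a sequence, reusing w_x, w_h and b at every step."""
    h = h0
    states = []
    for x in inputs:
        # The new hidden state mixes the current input with the previous state
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# Only the first input is non-zero, yet later states still carry a trace of it
states_a = rnn_forward([1.0, 0.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)

# With no signal at all, the hidden state stays at zero
states_b = rnn_forward([0.0, 0.0, 0.0], w_x=1.0, w_h=0.5, b=0.0)
```

The last two inputs are identical in both sequences, but the hidden states differ, which is the “memory” effect of the feedback loop.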

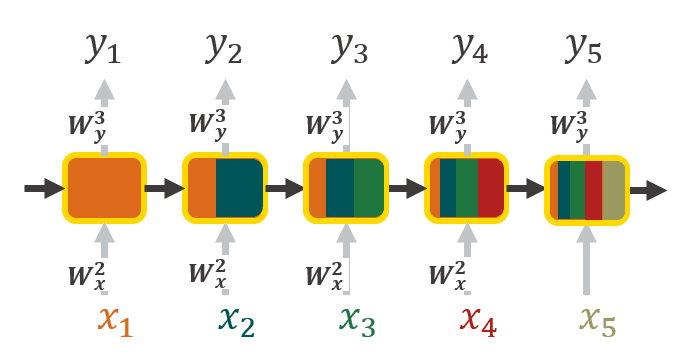

Unrolled representation of RNNs, where each input is enriched with context information coming from previous inputs. The color represents the propagation of context information (image by author).

However, traditional RNNs have their limitations. While in theory they should be able to capture long-range dependencies, in reality they struggle to do so effectively, and can even suffer from the vanishing gradient problem, which hinders their ability to learn and remember information over many time steps.
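The vanishing gradient problem can be illustrated with a small numeric sketch. Assuming a one-unit tanh RNN with no input and a recurrent weight below 1 (both assumptions made for this example), the derivative of the final hidden state with respect to the initial one is a product of per-step factors, each smaller than 1.

```python
import math

def gradient_wrt_initial_state(steps, w_h=0.9, h0=0.5):
    """Multiply the per-step derivatives d h_t / d h_(t-1) of h_t = tanh(w_h * h_(t-1))."""
    h, grad = h0, 1.0
    for _ in range(steps):
        h = math.tanh(w_h * h)
        grad *= w_h * (1.0 - h * h)  # derivative of tanh(w_h * h_prev) w.r.t. h_prev
    return grad

grad_5 = gradient_wrt_initial_state(5)
grad_50 = gradient_wrt_initial_state(50)
```

After 50 steps the gradient is orders of magnitude smaller than after 5, so an error signal from late in the sequence barely reaches the early time steps.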

This is where Long Short-Term Memory (LSTM) units come into play. They are specifically designed to handle these issues by incorporating three gates into their structure: the Forget gate, Input gate, and Output gate.

- Forget gate: This gate decides which information from the previous time step should be discarded or forgotten. By examining the previous hidden state and the current input, it determines which information in the cell state is irrelevant for making predictions in the present.

- Input gate: This gate is responsible for incorporating information into the cell state. It takes into account both the current input and the previous hidden state to decide what new information should be added to the cell state.

- Output gate: This gate determines what output will be generated by the LSTM unit. It considers both the current input and the updated cell state to produce an output that can be used for predictions or passed on to subsequent time steps.
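The three gates can be sketched for a single LSTM unit with scalar inputs. This follows the commonly used LSTM formulation, in which each gate looks at the current input and the previous hidden state; the parameter layout (one `(w_x, w_h, bias)` tuple per gate) and the numbers are assumptions made for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, params):
    """One time step of a scalar LSTM unit. params maps a gate name to (w_x, w_h, bias)."""
    def pre_activation(name):
        w_x, w_h, bias = params[name]
        return w_x * x + w_h * h_prev + bias

    f = sigmoid(pre_activation("forget"))       # how much of the old cell state to keep
    i = sigmoid(pre_activation("input"))        # how much new information to write
    g = math.tanh(pre_activation("candidate"))  # the candidate new information
    o = sigmoid(pre_activation("output"))       # how much of the cell state to expose

    c = f * c_prev + i * g                      # updated cell state
    h = o * math.tanh(c)                        # new hidden state (the unit's output)
    return h, c

# A unit biased to remember: forget gate almost open, input gate almost closed
remember = {"forget": (0.0, 0.0, 10.0), "input": (0.0, 0.0, -10.0),
            "candidate": (0.0, 0.0, 0.0), "output": (0.0, 0.0, 10.0)}
_, c_kept = lstm_step(x=1.0, h_prev=0.0, c_prev=2.0, params=remember)

# The same unit with the forget gate almost closed discards the old cell state
forget = dict(remember, forget=(0.0, 0.0, -10.0))
_, c_dropped = lstm_step(x=1.0, h_prev=0.0, c_prev=2.0, params=forget)
```

Because the cell state is updated additively and gated, information can survive many steps when the forget gate stays open, which is what helps with long-range dependencies.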

Visual representation of Long Short-Term Memory units (image by Christopher Olah).

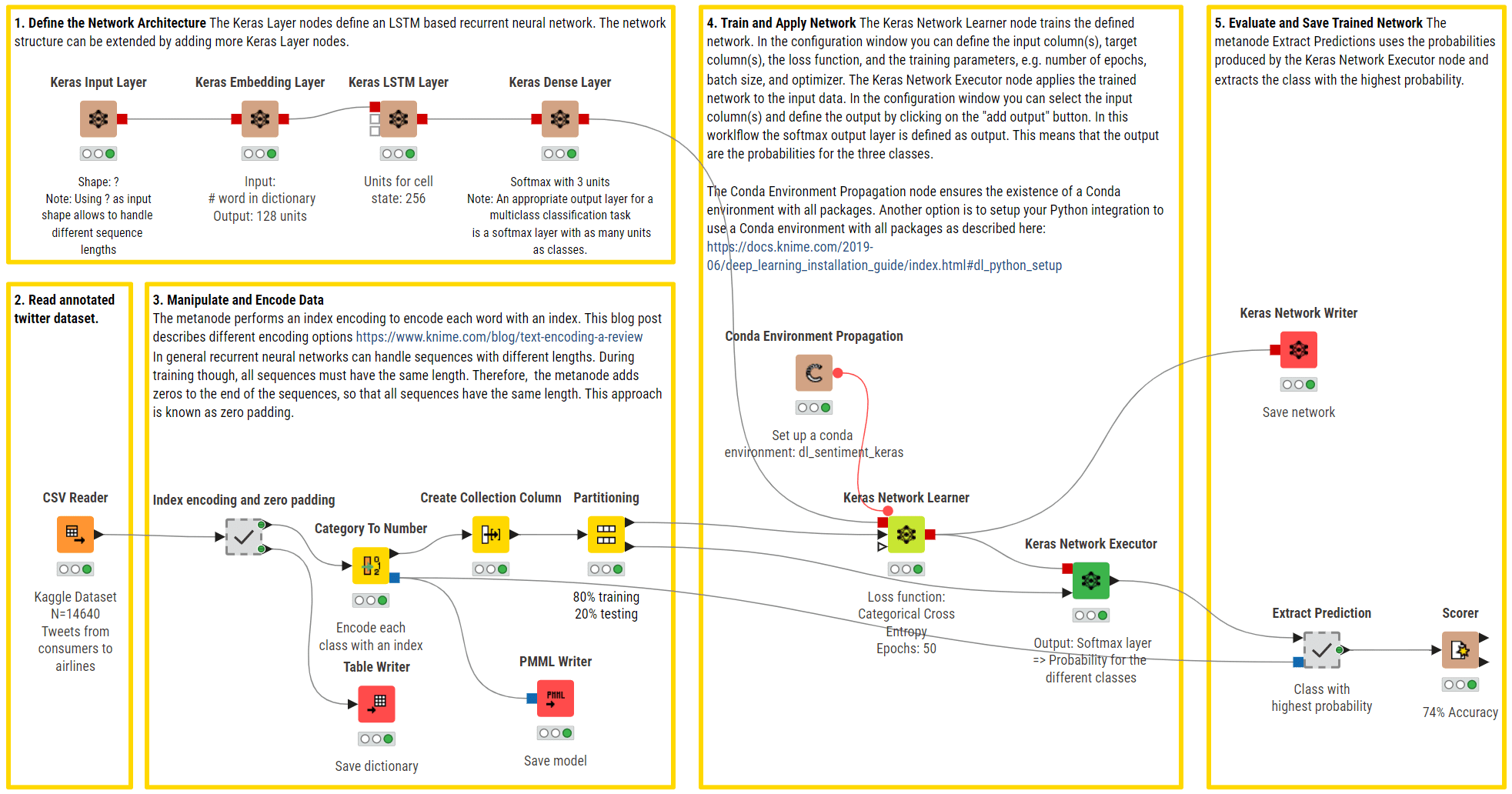

Example KNIME workflow of RNNs with LSTM units used for multi-class sentiment prediction (positive, negative, neutral). The upper branch defines the network architecture using an input layer to handle strings of different lengths, an embedding layer, an LSTM layer with several units, and a fully connected output layer with a softmax activation function to return predictions.

In summary, RNNs, and especially LSTM units, are tailored for sequential data, allowing them to maintain memory and capture temporal dependencies, which is a crucial capability for tasks like natural language processing, speech recognition, and time series prediction.

As we shift from RNNs capturing sequential dependencies, the evolution continues with Convolutional Neural Networks (CNNs). Unlike RNNs, CNNs excel at spatial feature extraction from structured grid-like data, making them ideal for image and pattern recognition tasks. This transition reflects the diverse applications of neural networks across different data types and structures.

CNNs are a special breed of neural networks, particularly well-suited for processing image data, such as 2D images or even 3D video data. Their architecture relies on a multilayered feed-forward neural network with at least one convolutional layer.

What makes CNNs stand out is their network connectivity and approach to feature extraction, which allows them to automatically identify relevant patterns in the data. Unlike traditional FFNNs, which connect every neuron in one layer to every neuron in the next, CNNs employ a sliding window known as a kernel or filter. This sliding window scans across the input data and is especially powerful for tasks where spatial relationships matter, like identifying objects in images or tracking motion in videos. As the kernel is moved across the image, a convolution operation is performed between the kernel and the pixel values (from a strictly mathematical standpoint, this operation is a cross-correlation), and a nonlinear activation function, usually ReLU, is applied. This produces a high value if the feature is in the image patch and a small value if it is not.

In addition to the kernel, the addition and fine-tuning of hyperparameters, such as stride (i.e., the number of pixels by which we slide the kernel) and dilation rate (i.e., the spacing between kernel cells), allows the network to focus on specific features, recognizing patterns and details in specific regions without considering the entire input at once.
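Here is a minimal sketch of the sliding-window operation with a configurable stride, followed by ReLU. It assumes a square kernel, no padding and no dilation, and the tiny image and hand-written edge kernel are made up for the example (in a trained CNN the kernel values are learned).

```python
def conv2d(image, kernel, stride=1):
    """Cross-correlate a square kernel with a 2D image (no padding), then apply ReLU."""
    k = len(kernel)
    feature_map = []
    for r in range(0, len(image) - k + 1, stride):
        row = []
        for c in range(0, len(image[0]) - k + 1, stride):
            # Element-wise product of the kernel and the image patch under it
            total = sum(image[r + i][c + j] * kernel[i][j]
                        for i in range(k) for j in range(k))
            row.append(max(0, total))  # ReLU
        feature_map.append(row)
    return feature_map

# 4x4 image: dark on the left, bright on the right
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Kernel that responds to a dark-to-bright vertical edge
vertical_edge = [[-1, 1],
                 [-1, 1]]

feature_map = conv2d(image, vertical_edge, stride=1)
strided_map = conv2d(image, vertical_edge, stride=2)
```

With `stride=1` the middle column of the feature map lights up exactly where the edge sits. With `stride=2` the output shrinks to 2x2, and in this particular image the window skips over the edge, which shows how stride trades resolution for speed.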

Convolution operation with stride size = 2 (GIF by Sumit Saha).

Some kernels may specialize in detecting edges or corners, while others might be tuned to recognize more complex objects like cats, dogs, or street signs within an image. By stacking together several convolutional and pooling layers, CNNs build a hierarchical representation of the input, gradually abstracting features from low-level to high-level, just as our brains process visual information.
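The pooling layers mentioned above can be sketched just as briefly. This example assumes non-overlapping max pooling windows, and the feature map values are invented for illustration.

```python
def max_pool(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    return [
        [max(feature_map[r + i][c + j] for i in range(size) for j in range(size))
         for c in range(0, len(feature_map[0]) - size + 1, size)]
        for r in range(0, len(feature_map) - size + 1, size)
    ]

pooled = max_pool([
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 0, 5, 6],
    [1, 2, 7, 8],
])
```

Each 2x2 block is summarized by its strongest response, so the next convolutional layer sees a smaller map that still records where features were detected.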

Example KNIME workflow of a CNN for binary image classification (cats vs. dogs). The upper branch defines the network architecture using a series of convolutional layers and max pooling layers for automatic feature extraction from images. A flatten layer is then used to prepare the extracted features as a one-dimensional input for the FFNN to perform a binary classification.

While CNNs excel at feature extraction and have revolutionized computer vision tasks, they act as passive observers, for they are not designed to generate new data or content. This is not an inherent limitation of the network per se, but a powerful engine with no fuel makes even a fast car useless. Indeed, real and meaningful image and video data tend to be hard and expensive to collect and often face copyright and data privacy restrictions. This constraint led to the development of a novel paradigm that builds on CNNs but marks a leap from image classification to creative synthesis: Generative Adversarial Networks (GANs).

GANs are a particular family of neural networks whose primary, but not only, purpose is to produce synthetic data that closely mimics a given dataset of real data. Unlike most neural networks, GANs have an ingenious architectural design consisting of two core models:

- Generator model: The first player in this neural network duet is the generator model. This component is tasked with a fascinating mission: given random noise or input vectors, it strives to create artificial samples that resemble real samples as closely as possible. Imagine it as an art forger, attempting to craft paintings that are indistinguishable from masterpieces.

- Discriminator model: Playing the adversary role is the discriminator model. Its job is to differentiate between the generated samples produced by the generator and the authentic samples from the original dataset. Think of it as an art connoisseur, trying to spot the forgeries among the genuine artworks.

Now, here’s where the magic happens: GANs engage in a continuous, adversarial dance. The generator aims to improve its artistry, continually fine-tuning its creations to become more convincing. Meanwhile, the discriminator becomes a sharper detective, honing its ability to tell the real from the fake.

GAN architecture (image by author).

As training progresses, this dynamic interplay between the generator and discriminator leads to a fascinating outcome. The generator strives to generate samples that are so realistic that even the discriminator can’t tell them apart from the genuine ones. This competition drives both components to refine their abilities continuously.
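One common way to express this competition is with two binary cross-entropy losses, sketched below. The snippet does not train any network; it only assumes we already have the discriminator’s probabilities that each sample is real (the numbers are invented), and it uses the widely used non-saturating generator loss as an assumption.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Low when real samples get probabilities near 1 and generated samples near 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Low when the discriminator assigns high 'real' probabilities to generated samples."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Early in training: the discriminator easily spots the forgeries
early_g = generator_loss(d_fake=[0.1, 0.2])

# Later: the discriminator can no longer tell real from generated (outputs 0.5 everywhere)
late_g = generator_loss(d_fake=[0.5, 0.5])
confused_d = discriminator_loss(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])
```

The generator’s loss falls as its samples become more convincing, while a fully confused discriminator ends up at a loss of 2·ln 2, the value it gets from guessing 0.5 for everything.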

The result? A generator that becomes astonishingly adept at producing data that appears authentic, be it images, music, or text. This capability has led to remarkable applications in various fields, including image synthesis, data augmentation, image-to-image translation, and image editing.

Example KNIME workflow of GANs for the generation of synthetic images (i.e., animals, human faces and Simpson characters).

GANs pioneered realistic image and video content creation by pitting a generator against a discriminator. Extending the need for creativity and advanced operations from image to sequential data, models for more sophisticated natural language understanding, machine translation, and text generation were introduced. This initiated the development of Transformers, a remarkable deep neural network architecture that not only outperformed previous architectures by effectively capturing long-range language dependencies and semantic context, but also became the undisputed foundation of the most recent AI-driven applications.

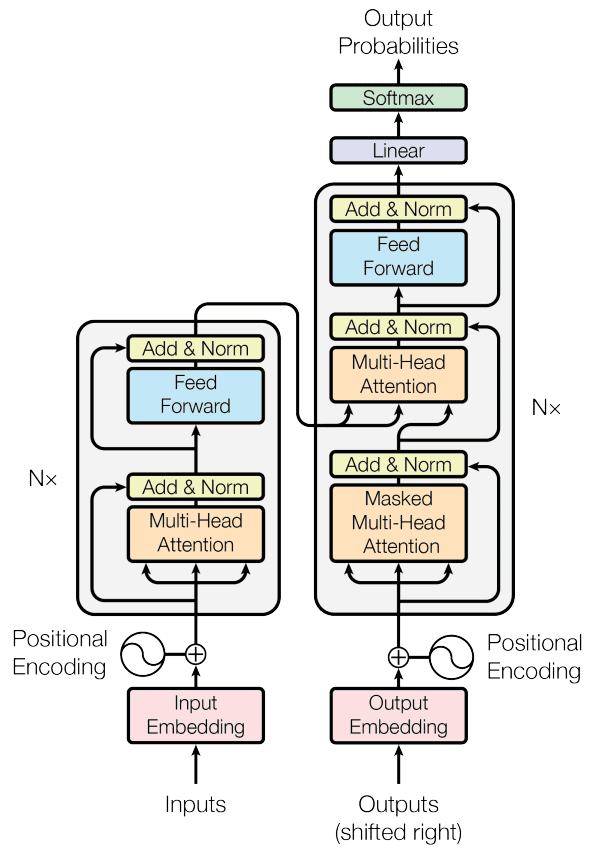

Introduced in 2017, Transformers boast a unique feature that allows them to replace traditional recurrent layers: a self-attention mechanism that lets them model intricate relationships between all words in a document, regardless of their position. This makes Transformers excellent at tackling the challenge of long-range dependencies in natural language. Transformer architectures consist of two main building blocks:

- Encoder. Here the input sequence is embedded into vectors and then exposed to the self-attention mechanism. The latter computes attention scores for each token, determining its importance in relation to the others. These scores are used to create weighted sums, which are fed into an FFNN to generate context-aware representations for each token. Multiple encoder layers repeat this process, enhancing the model’s ability to capture hierarchical and contextual information.

- Decoder. This block is responsible for generating output sequences and follows a similar process to that of the encoder. At each step, it attends to both the encoder’s output and its own previously generated tokens, ensuring accurate generation by considering both the input context and the output produced so far.

Transformer model architecture (image by: Vaswani et al., 2017).

Consider this sentence: “I arrived at the bank after crossing the river”. The word “bank” can have two meanings, either a financial institution or the edge of a river. Here’s where Transformers shine. They can swiftly focus on the word “river” to disambiguate “bank” by comparing “bank” to every other word in the sentence and assigning attention scores. These scores determine the influence of each word on the next representation of “bank”. In this case, “river” gets a higher score, effectively clarifying the intended meaning.
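The scoring step can be sketched with scaled dot-product attention over three words of that sentence. The two-dimensional word vectors below are invented so that “bank” and “river” point in a similar direction, and the learned query, key and value projections of a real Transformer are left out for brevity.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # The new representation is the attention-weighted sum of the value vectors
    context = [sum(w * v[i] for w, v in zip(weights, values))
               for i in range(len(values[0]))]
    return weights, context

tokens = ["arrived", "bank", "river"]
vectors = {                 # hypothetical embeddings, chosen by hand
    "arrived": [1.0, 0.0],
    "bank":    [0.5, 1.0],
    "river":   [0.0, 2.0],
}
keys = [vectors[t] for t in tokens]

weights, new_bank = attend(vectors["bank"], keys, values=keys)
```

With these toy vectors the largest attention weight falls on “river”, so the updated representation of “bank” is pulled toward the river-related direction.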

To work that well, Transformers rely on millions of trainable parameters and require large corpora of texts and sophisticated training strategies. One notable training approach employed with Transformers is masked language modeling (MLM). During training, specific tokens within the input sequence are randomly masked, and the model’s objective is to predict these masked tokens accurately. This strategy encourages the model to grasp contextual relationships between words because it must rely on the surrounding words to make accurate predictions. This approach, popularized by the BERT model, has been instrumental in achieving state-of-the-art results in various NLP tasks.
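The data preparation side of MLM can be sketched as follows. This is a simplified version that only replaces selected tokens with a `[MASK]` placeholder; the masking rate, the seed and the helper name `mask_tokens` are assumptions for the example, and the model that would predict the hidden tokens is not shown.

```python
import random

def mask_tokens(tokens, mask_rate=0.15, seed=0):
    """Randomly hide tokens and remember the originals as prediction targets."""
    rng = random.Random(seed)   # fixed seed so the example is reproducible
    masked, targets = [], {}
    for idx, token in enumerate(tokens):
        if rng.random() < mask_rate:
            masked.append("[MASK]")
            targets[idx] = token    # what the model should predict at this position
        else:
            masked.append(token)
    return masked, targets

sentence = "I arrived at the bank after crossing the river".split()
masked, targets = mask_tokens(sentence, mask_rate=0.3)
```

During training, the model would see `masked` as input and be scored on how well it recovers the entries of `targets` from the surrounding, unmasked words.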

An alternative to MLM for Transformers is autoregressive modeling. In this method, the model is trained to generate one word at a time while conditioning on previously generated words. Autoregressive models like GPT (Generative Pre-trained Transformer) follow this approach and excel in tasks where the goal is to predict, unidirectionally, the next most suitable word, such as free text generation, question answering and text completion.
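The generation loop itself is simple to sketch. In the toy example below, a hand-written probability table stands in for the trained model, and it only looks at the last word, whereas a model like GPT conditions on the whole preceding sequence. The table and the greedy choice of the most likely word are assumptions made for illustration.

```python
def generate(next_word_probs, prompt, max_new_words=5):
    """Greedy autoregressive decoding: repeatedly append the most likely next word."""
    words = list(prompt)
    for _ in range(max_new_words):
        candidates = next_word_probs.get(words[-1])
        if not candidates:      # no known continuation, so stop generating
            break
        words.append(max(candidates, key=candidates.get))
    return words

# Hypothetical next-word probabilities standing in for a trained model
toy_model = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "sat": {"down": 1.0},
}

result = generate(toy_model, ["the"])
```

Each new word is fed back in as context for the next prediction, which is why generation proceeds strictly left to right.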

Additionally, to compensate for the need for extensive text resources, Transformers excel in parallelization, meaning they can process data during training faster than traditional sequential approaches like RNNs or LSTM units. This efficient computation reduces training time and has led to groundbreaking applications in natural language processing, machine translation, and more.

A pivotal Transformer model developed by Google in 2018 that made a substantial impact is BERT (Bidirectional Encoder Representations from Transformers). BERT relied on MLM training and introduced the concept of bidirectional context, meaning it considers both the left and right context of a word when predicting the masked token. This bidirectional approach significantly enhanced the model’s understanding of word meanings and contextual nuances, establishing new benchmarks for natural language understanding and a wide array of downstream NLP tasks.

Example KNIME workflow of BERT for multi-class sentiment prediction (positive, negative, neutral). Minimal preprocessing is performed and the pretrained BERT model with fine-tuning is leveraged.

On the heels of Transformers, which introduced powerful self-attention mechanisms, the growing demand for versatility in applications and for performing complex natural language tasks, such as document summarization, text editing, or code generation, necessitated the development of large language models. These models employ deep neural networks with billions of parameters to excel in such tasks and meet the evolving requirements of the data analytics industry.

Large language models (LLMs) are a revolutionary class of multi-purpose and multi-modal (accepting image, audio and text inputs) deep neural networks that have garnered significant attention in recent years. The adjective large stems from their vast size, as they encompass billions of trainable parameters. Some of the most well-known examples include OpenAI’s ChatGPT, Google’s Bard or Meta’s LLaMa.

What sets LLMs apart is their unparalleled ability and flexibility to process and generate human-like text. They excel in natural language understanding and generation tasks, ranging from text completion and translation to question answering and content summarization. The key to their success lies in their extensive training on massive text corpora, allowing them to capture a rich understanding of language nuances, context, and semantics.

These models employ a deep neural architecture with multiple layers of self-attention mechanisms, enabling them to weigh the importance of different words and phrases in a given context. This dynamic adaptability makes them exceptionally proficient in processing inputs of various types, comprehending complex language structures, and generating outputs based on human-defined prompts.

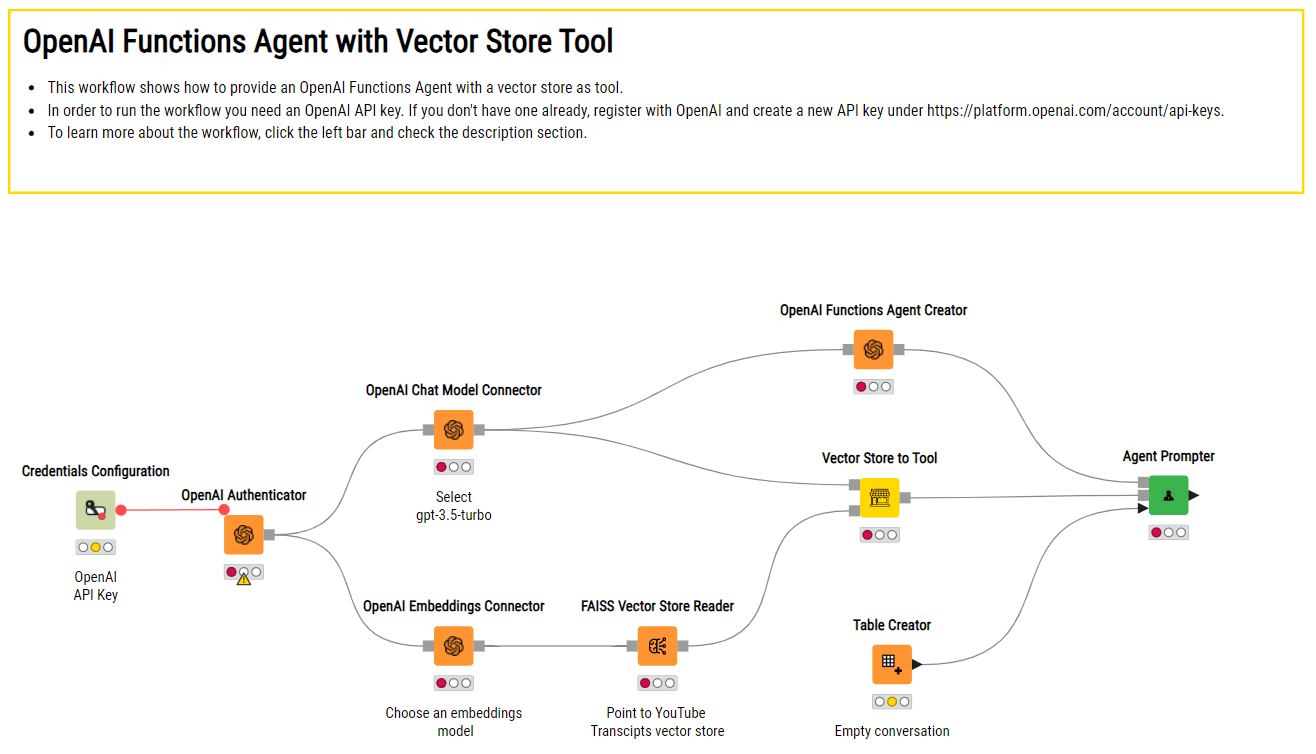

Example KNIME workflow of creating an AI assistant that relies on OpenAI’s ChatGPT and a vector store with custom documents to answer domain-specific questions.

LLMs have paved the way for a multitude of applications across various industries, from healthcare and finance to entertainment and customer service. They have even sparked new frontiers in creative writing and storytelling.

However, their enormous size, resource-intensive training processes and potential copyright infringements for generated content have also raised concerns about ethical usage, environmental impact, and accessibility. Finally, while increasingly enhanced, LLMs may contain some serious flaws, such as “hallucinating” incorrect facts, being biased or gullible, or being persuaded into creating toxic content.

The evolution of neural networks, from their humble beginnings to the rise of large language models, raises a profound philosophical question: Will this journey ever come to an end?

The trajectory of technology has always been marked by relentless advancement. Each milestone only serves as a stepping stone to the next innovation. As we strive to create machines that can replicate human cognition and understanding, it’s tempting to ponder whether there’s an ultimate destination, a point where we say, “This is it; we’ve reached the pinnacle.”

However, the essence of human curiosity and the boundless complexities of the natural world suggest otherwise. Just as our understanding of the universe continually deepens, the quest to develop more intelligent, capable, and ethical neural networks may be an endless journey.

Neural networks, the elemental constructing blocks of synthetic intelligence, have revolutionized the way in which we course of info, providing a glimpse into the way forward for expertise. These advanced computational methods, impressed by the intricacies of the human mind, have turn out to be pivotal in duties starting from picture recognition and pure language understanding to autonomous driving and medical analysis. As we discover neural networks’ historic evolution, we are going to uncover their outstanding journey of how they’ve advanced to form the fashionable panorama of AI.

Neural networks, the foundational parts of deep studying, owe their conceptual roots to the intricate organic networks of neurons inside the human mind. This outstanding idea started with a basic analogy, drawing parallels between organic neurons and computational networks.

This analogy facilities across the mind, which consists of roughly 100 billion neurons. Every neuron maintains about 7,000 synaptic connections with different neurons, creating a posh neural community that underlies human cognitive processes and resolution making.

Individually, a organic neuron operates by way of a collection of straightforward electrochemical processes. It receives indicators from different neurons by way of its dendrites. When these incoming indicators add as much as a sure degree (a predetermined threshold), the neuron switches on and sends an electrochemical sign alongside its axon. This, in flip, impacts the neurons related to its axon terminals. The important thing factor to notice right here is {that a} neuron’s response is sort of a binary change: it both fires (prompts) or stays quiet, with none in-between states.

Organic neurons had been the inspiration for synthetic neural networks (picture: Wikipedia).

Synthetic neural networks, as spectacular as they’re, stay a far cry from even remotely approaching the astonishing intricacies and profound complexities of the human mind. Nonetheless, they’ve demonstrated important prowess in addressing issues which are difficult for typical computer systems however seem intuitive to human cognition. Some examples are picture recognition and predictive analytics primarily based on historic information.

Now that we have explored the foundational ideas of how organic neurons perform and their inspiration for synthetic neural networks, let’s journey by way of the evolution of neural community frameworks which have formed the panorama of synthetic intelligence.

Feed ahead neural networks, also known as a multilayer perceptron, are a basic kind of neural networks, whose operation is deeply rooted within the ideas of knowledge move, interconnected layers, and parameter optimization.

At their core, FFNNs orchestrate a unidirectional journey of knowledge. All of it begins with the enter layer containing n neurons, the place information is initially ingested. This layer serves because the entry level for the community, performing as a receptor for the enter options that must be processed. From there, the info embarks on a transformative voyage by way of the community’s hidden layers.

One essential side of FFNNs is their related construction, which signifies that every neuron in a layer is intricately related to each neuron in that layer. This interconnectedness permits the community to carry out computations and seize relationships inside the information. It is like a communication community the place each node performs a job in processing info.

As the info passes by way of the hidden layers, it undergoes a collection of calculations. Every neuron in a hidden layer receives inputs from all neurons within the earlier layer, applies a weighted sum to those inputs, provides a bias time period, after which passes the outcome by way of an activation perform (generally ReLU, Sigmoid, or tanH). These mathematical operations allow the community to extract related patterns from the enter, and seize advanced, nonlinear relationships inside information. That is the place FFNNs actually excel in comparison with extra shallow ML fashions.

Structure of fully-connected feed-forward neural networks (picture by creator).

Nevertheless, that is not the place it ends. The true energy of FFNNs lies of their capacity to adapt. Throughout coaching the community adjusts its weights to reduce the distinction between its predictions and the precise goal values. This iterative course of, typically primarily based on optimization algorithms like gradient descent, is named backpropagation. Backpropagation empowers FFNNs to really study from information and enhance their accuracy in making predictions or classifications.

Instance KNIME workflow of FFNN used for the binary classification of certification exams (move vs fail). Within the higher department, we are able to see the community structure, which is product of an enter layer, a completely related hidden layer with a tanH activation perform, and an output layer that makes use of a Sigmoid activation perform (picture by creator).

Whereas highly effective and versatile, FFNNs show some related limitations. For instance, they fail to seize sequentiality and temporal/syntactic dependencies within the information –two essential features for duties in language processing and time collection evaluation. The necessity to overcome these limitations prompted the evolution of a brand new kind of neural community structure. This transition paved the way in which for Recurrent Neural Networks (RNNs), which launched the idea of suggestions loops to higher deal with sequential information.

At their core, RNNs share some similarities with FFNNs. They too are composed of layers of interconnected nodes, processing information to make predictions or classifications. Nevertheless, their key differentiator lies of their capacity to deal with sequential information and seize temporal dependencies.

In a FFNN, info flows in a single, unidirectional path from the enter layer to the output layer. That is appropriate for duties the place the order of knowledge does not matter a lot. Nevertheless, when coping with sequences like time collection information, language, or speech, sustaining context and understanding the order of knowledge is essential. That is the place RNNs shine.

RNNs introduce the idea of suggestions loops. These act as a kind of “reminiscence” and permit the community to keep up a hidden state that captures details about earlier inputs and to affect the present enter and output. Whereas conventional neural networks assume that inputs and outputs are unbiased of one another, the output of recurrent neural networks rely on the prior parts inside the sequence. This recurrent connection mechanism makes RNNs significantly match to deal with sequences by “remembering” previous info.

One other distinguishing attribute of recurrent networks is that they share the identical weight parameter inside every layer of the community, and people weights are adjusted leveraging the backpropagation by way of time (BPTT) algorithm, which is barely totally different from conventional backpropagation as it’s particular to sequence information.

Unrolled illustration of RNNs, the place every enter is enriched with context info coming from earlier inputs. The colour represents the propagation of context info (picture by creator).

Nevertheless, conventional RNNs have their limitations. Whereas in principle they need to have the ability to seize long-range dependencies, in actuality they wrestle to take action successfully, and may even endure from the vanishing gradient downside, which hinders their capacity to study and bear in mind info over many time steps.

That is the place Lengthy Brief-Time period Reminiscence (LSTM) models come into play. They’re particularly designed to deal with these points by incorporating three gates into their construction: the Overlook gate, Enter gate, and Output gate.

- Forget gate: This gate decides which information from the previous time step should be discarded or forgotten. By examining the previous hidden state and the current input, it determines which parts of the cell state are irrelevant for making predictions in the present.

- Input gate: This gate is responsible for incorporating information into the cell state. It takes into account both the current input and the previous hidden state to decide what new information should be added to the cell state.

- Output gate: This gate determines what output will be generated by the LSTM unit. It uses the current input and the previous hidden state to decide which parts of the updated cell state are exposed as output, which can be used for predictions or passed on to the next time steps.
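The interplay of the three gates can be sketched for a single scalar LSTM unit. This is a simplified illustration that assumes the standard formulation, in which each gate is computed from the current input and the previous hidden state; the function `lstm_step` and the parameter names in the dictionary `p` are hypothetical and chosen only for readability.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One time step of a scalar LSTM unit; returns (hidden state, cell state)."""
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate values
    c = f * c_prev + i * g        # keep part of the old memory, add new information
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate
    h = o * math.tanh(c)          # expose a filtered view of the cell state
    return h, c

# Hypothetical parameters: a strongly positive forget bias keeps the memory,
# a strongly negative input bias blocks new information.
params = {name: 0.0 for name in
          ("wf", "uf", "bf", "wi", "ui", "bi", "wg", "ug", "bg", "wo", "uo", "bo")}
params["bf"] = 10.0
params["bi"] = -10.0

h, c = 0.0, 0.8
for x in [1.0, -1.0, 0.5]:
    h, c = lstm_step(x, h, c, params)
print(f"cell state after three steps: {c:.4f}")  # stays close to the initial 0.8
```

With these settings the cell state survives several time steps almost unchanged, which is the behavior that helps LSTM units preserve information over long sequences.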

Visual representation of Long Short-Term Memory units (image by Christopher Olah).

Example KNIME workflow of RNNs with LSTM units used for a multi-class sentiment prediction (positive, negative, neutral). The upper branch defines the network architecture using an input layer to handle strings of different lengths, an embedding layer, an LSTM layer with several units, and a fully connected output layer with a Softmax activation function to return predictions.

In summary, RNNs, and especially LSTM units, are tailored for sequential data, allowing them to maintain memory and capture temporal dependencies, which is a crucial capability for tasks like natural language processing, speech recognition, and time series prediction.

As we shift from RNNs capturing sequential dependencies, the evolution continues with Convolutional Neural Networks (CNNs). Unlike RNNs, CNNs excel at spatial feature extraction from structured grid-like data, making them ideal for image and pattern recognition tasks. This transition reflects the diverse applications of neural networks across different data types and structures.

CNNs are a special breed of neural networks, particularly well-suited for processing image data, such as 2D images or even 3D video data. Their architecture relies on a multilayered feed-forward neural network with at least one convolutional layer.

What makes CNNs stand out is their network connectivity and approach to feature extraction, which allows them to automatically identify relevant patterns in the data. Unlike traditional FFNNs, which connect every neuron in one layer to every neuron in the next, CNNs employ a sliding window known as a kernel or filter. This sliding window scans across the input data and is especially powerful for tasks where spatial relationships matter, like identifying objects in images or tracking motion in videos. As the kernel is moved across the image, a convolution operation is performed between the kernel and the pixel values (from a strictly mathematical standpoint, this operation is a cross-correlation), and a nonlinear activation function, usually ReLU, is applied. This produces a high value if the feature is in the image patch and a small value if it is not.
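The sliding-window operation described above can be sketched in a few lines of plain Python. The function `conv2d_relu`, the tiny 4x4 image, and the hand-written edge kernel are illustrative assumptions; in a trained CNN the kernel values are learned, and the computation is done by a deep learning library.

```python
def conv2d_relu(image, kernel, stride=1):
    """Slide the kernel over the image (cross-correlation), then apply ReLU."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(0, len(image) - kh + 1, stride):
        row = []
        for c in range(0, len(image[0]) - kw + 1, stride):
            # Element-wise product of the kernel and the image patch, summed up.
            s = sum(image[r + i][c + j] * kernel[i][j]
                    for i in range(kh) for j in range(kw))
            row.append(max(0.0, float(s)))  # ReLU keeps only positive responses
        out.append(row)
    return out

# Toy image: dark on the left (0), bright on the right (1).
image = [[0, 0, 1, 1] for _ in range(4)]
# Hand-written kernel that responds to a dark-to-bright vertical edge.
kernel = [[-1, 1],
          [-1, 1]]

feature_map = conv2d_relu(image, kernel)
for row in feature_map:
    print(row)  # each row is [0.0, 2.0, 0.0]: high only where the edge is
```

The feature map is high only at the position where the patch contains the edge and zero elsewhere, matching the "high value if the feature is present" behavior.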

Together with the kernel, the addition and fine-tuning of hyperparameters, such as stride (i.e., the number of pixels by which we slide the kernel) and dilation rate (i.e., the spacing between kernel cells), allows the network to focus on specific features, recognizing patterns and details in specific regions without considering the entire input at once.
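How stride and dilation change the size of the resulting feature map can be checked with a small helper. This sketch assumes the output-size relation commonly documented by deep learning libraries for convolutional layers; the function name `conv_output_size` and the `padding` argument are additions for illustration.

```python
def conv_output_size(n, k, stride=1, dilation=1, padding=0):
    """Number of kernel positions along one dimension of size n."""
    # A dilated kernel covers a wider span of the input than its k cells.
    effective_k = dilation * (k - 1) + 1
    return (n + 2 * padding - effective_k) // stride + 1

print(conv_output_size(5, 3))              # 3: kernel moves one pixel at a time
print(conv_output_size(5, 3, stride=2))    # 2: kernel jumps two pixels
print(conv_output_size(7, 3, dilation=2))  # 3: kernel cells are spread apart
```

Larger strides shrink the feature map, while larger dilation rates widen the region each kernel position sees without adding parameters.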

Convolution operation with stride size = 2 (GIF by Sumit Saha).

Some kernels may specialize in detecting edges or corners, while others might be tuned to recognize more complex objects like cats, dogs, or street signs within an image. By stacking together several convolutional and pooling layers, CNNs build a hierarchical representation of the input, gradually abstracting features from low-level to high-level, just as our brains process visual information.

Example KNIME workflow of CNN for binary image classification (cats vs dogs). The upper branch defines the network architecture using a series of convolutional layers and max pooling layers for automatic feature extraction from images. A flatten layer is then used to prepare the extracted features as a unidimensional input for the FFNN to perform a binary classification.

While CNNs excel at feature extraction and have revolutionized computer vision tasks, they act as passive observers, for they are not designed to generate new data or content. This is not an inherent limitation of the network per se, but having a powerful engine and no fuel makes a fast car useless. Indeed, real and meaningful image and video data tend to be hard and expensive to collect and often face copyright and data privacy restrictions. This constraint led to the development of a novel paradigm that builds on CNNs but marks a leap from image classification to creative synthesis: Generative Adversarial Networks (GANs).

GANs are a particular family of neural networks whose primary, but not only, purpose is to produce synthetic data that closely mimics a given dataset of real data. Unlike most neural networks, GANs have an ingenious architectural design consisting of two core models:

- Generator model: The first player in this neural network duet is the generator model. This component is tasked with a fascinating mission: given random noise or input vectors, it strives to create artificial samples that resemble real samples as closely as possible. Imagine it as an art forger, attempting to craft paintings that are indistinguishable from masterpieces.

- Discriminator model: Playing the adversary role is the discriminator model. Its job is to differentiate between the samples produced by the generator and the authentic samples from the original dataset. Think of it as an art connoisseur, trying to spot the forgeries among the genuine artworks.

Now, here’s where the magic happens: GANs engage in a continuous, adversarial dance. The generator aims to improve its artistry, continually fine-tuning its creations to become more convincing. Meanwhile, the discriminator becomes a sharper detective, honing its ability to tell the real from the fake.

GAN architecture (image by author).

As training progresses, this dynamic interplay between the generator and discriminator leads to a fascinating outcome. The generator strives to generate samples that are so realistic that even the discriminator can’t tell them apart from the genuine ones. This competition drives both components to refine their abilities continuously.
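The competition can be expressed through the two loss functions that the players try to minimize. The following sketch assumes the binary cross-entropy formulation with the commonly used non-saturating generator loss; the function names and the example scores are hypothetical, and `d_real` and `d_fake` stand for the discriminator’s estimated probability that a real or a generated sample is real.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Low when real samples score near 1 and generated samples score near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Low when the discriminator scores generated samples near 1."""
    return -math.log(d_fake)

# Early in training: the discriminator easily spots the forgeries.
print(discriminator_loss(d_real=0.9, d_fake=0.1), generator_loss(d_fake=0.1))

# Later: the discriminator can no longer tell the samples apart (scores of 0.5).
print(discriminator_loss(d_real=0.5, d_fake=0.5), generator_loss(d_fake=0.5))
```

As the generator improves, its own loss falls while the discriminator’s loss rises toward the value it takes when it can only guess, which reflects the point where generated and genuine samples become indistinguishable.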

The result? A generator that becomes astonishingly adept at producing data that appears authentic, be it images, music, or text. This capability has led to remarkable applications in various fields, including image synthesis, data augmentation, image-to-image translation, and image editing.

Example KNIME workflow of GANs for the generation of synthetic images (i.e., animals, human faces and Simpson characters).

GANs pioneered realistic image and video content creation by pitting a generator against a discriminator. Extending the need for creativity and advanced operations from image to sequential data, models for more sophisticated natural language understanding, machine translation, and text generation were introduced. This initiated the development of Transformers, a remarkable deep neural network architecture that not only outperformed previous architectures by effectively capturing long-range language dependencies and semantic context, but also became the undisputed foundation of the latest AI-driven applications.

Developed in 2017, Transformers boast a unique feature that allows them to replace traditional recurrent layers: a self-attention mechanism that lets them model intricate relationships between all words in a document, regardless of their position. This makes Transformers excellent at tackling the challenge of long-range dependencies in natural language. Transformer architectures consist of two main building blocks:

- Encoder. Here the input sequence is embedded into vectors and then passed to the self-attention mechanism. The latter computes attention scores for each token, determining its importance in relation to the others. These scores are used to create weighted sums, which are fed into a FFNN to generate context-aware representations for each token. Multiple encoder layers repeat this process, enhancing the model’s ability to capture hierarchical and contextual information.

- Decoder. This block is responsible for generating output sequences and follows a process similar to that of the encoder. At each step it attends both to the encoder’s output and to its own previously generated tokens, ensuring accurate generation by considering both the input context and the output produced so far.

Transformer model architecture (image by: Vaswani et al., 2017).

Consider this sentence: “I arrived at the bank after crossing the river”. The word “bank” can have two meanings: either a financial institution or the edge of a river. Here’s where Transformers shine. They can swiftly focus on the word “river” to disambiguate “bank” by comparing “bank” to every other word in the sentence and assigning attention scores. These scores determine the influence of each word on the next representation of “bank”. In this case, “river” gets a higher score, effectively clarifying the intended meaning.
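The scoring step for the ambiguous word in the sentence above can be illustrated with scaled dot-product attention on toy vectors. Everything in this sketch is an assumption made for illustration: the two-dimensional embeddings are invented, only three words are included, and the learned query, key, and value projections of a real Transformer are replaced by the raw embeddings.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
              for key in keys]
    return softmax(scores)

# Invented toy embeddings: "bank" points mostly in the same direction as "river".
embeddings = {
    "arrived": [1.0, 0.0],
    "bank": [0.6, 0.8],
    "river": [0.0, 1.0],
}
tokens = list(embeddings)
weights = attention_weights(embeddings["bank"], [embeddings[t] for t in tokens])

# New representation of "bank": attention-weighted sum of all token vectors.
context = [sum(w * embeddings[t][dim] for w, t in zip(weights, tokens))
           for dim in range(2)]

for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.3f}")
```

In this toy setup “river” receives a larger weight than “arrived”, so the updated vector for “bank” is pulled toward the river-related direction.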

To work that well, Transformers rely on millions of trainable parameters and require large corpora of texts and sophisticated training strategies. One notable training approach employed with Transformers is masked language modeling (MLM). During training, specific tokens within the input sequence are randomly masked, and the model’s objective is to predict these masked tokens accurately. This strategy encourages the model to grasp contextual relationships between words because it must rely on the surrounding words to make accurate predictions. This approach, popularized by the BERT model, has been instrumental in achieving state-of-the-art results in various NLP tasks.
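The masking step can be sketched as follows. This is a simplified illustration: the function `mask_tokens`, the whitespace tokenization, and the fixed seed are assumptions, and the 15% masking rate follows the value reported for BERT. BERT’s additional rule of sometimes keeping or randomly replacing the selected tokens is left out.

```python
import random

def mask_tokens(tokens, mask_prob=0.15, mask_token="[MASK]", seed=0):
    """Randomly hide tokens; labels hold the original token at masked positions."""
    rng = random.Random(seed)
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            masked.append(mask_token)  # the model sees the placeholder...
            labels.append(token)       # ...and must predict the original token
        else:
            masked.append(token)
            labels.append(None)        # no prediction target at this position
    return masked, labels

sentence = "I arrived at the bank after crossing the river".split()
masked, labels = mask_tokens(sentence, mask_prob=0.3)
print(masked)
print(labels)
```

During training, the loss is computed only at the positions where `labels` is not `None`, so the model has to rely on the visible words on both sides of each gap.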

An alternative to MLM for Transformers is autoregressive modeling. In this method, the model is trained to generate one word at a time while conditioning on previously generated words. Autoregressive models like GPT (Generative Pre-trained Transformer) follow this methodology and excel in tasks where the goal is to predict unidirectionally the next most suitable word, such as free text generation, question answering and text completion.
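The one-word-at-a-time loop can be sketched with a toy stand-in for the model. The lookup table `toy_model`, its probabilities, and the `<eos>` end marker are invented for illustration, and the table conditions only on the last word, whereas a GPT-style model conditions on all previously generated tokens.

```python
def generate(next_token_probs, prompt, max_new_tokens=5, end_token="<eos>"):
    """Greedy autoregressive decoding: repeatedly append the most likely next token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        probs = next_token_probs.get(tokens[-1])
        if not probs:
            break                       # the toy model knows no continuation
        best = max(probs, key=probs.get)
        if best == end_token:
            break                       # the model decided the text is complete
        tokens.append(best)             # the new token becomes part of the context
    return tokens

# Invented next-word probabilities standing in for a trained language model.
toy_model = {
    "the": {"river": 0.6, "bank": 0.4},
    "river": {"bank": 0.9, "<eos>": 0.1},
    "bank": {"<eos>": 0.7, "the": 0.3},
}

print(generate(toy_model, ["the"]))  # ['the', 'river', 'bank']
```

Each generated word is fed back as input for the next prediction, which is why generation proceeds strictly from left to right.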

Furthermore, to compensate for the need for extensive text resources, Transformers excel in parallelization, meaning they can process data during training faster than traditional sequential approaches like RNNs or LSTM units. This efficient computation reduces training time and has led to groundbreaking applications in natural language processing, machine translation, and more.

A pivotal Transformer model developed by Google in 2018 that made a substantial impact is BERT (Bidirectional Encoder Representations from Transformers). BERT relied on MLM training and introduced the concept of bidirectional context, meaning it considers both the left and right context of a word when predicting the masked token. This bidirectional approach significantly enhanced the model’s understanding of word meanings and contextual nuances, establishing new benchmarks for natural language understanding and a wide array of downstream NLP tasks.

Example KNIME workflow of BERT for multi-class sentiment prediction (positive, negative, neutral). Minimal preprocessing is performed and the pretrained BERT model with fine-tuning is leveraged.

On the heels of Transformers that introduced powerful self-attention mechanisms, the growing demand for versatility in applications and for performing complex natural language tasks, such as document summarization, text editing, or code generation, necessitated the development of large language models. These models employ deep neural networks with billions of parameters to excel in such tasks and meet the evolving requirements of the data analytics industry.

Large language models (LLMs) are a revolutionary class of multi-purpose and often multi-modal (accepting image, audio and text inputs) deep neural networks that have garnered significant attention in recent years. The adjective large stems from their vast size, as they encompass billions of trainable parameters. Some of the most well-known examples include OpenAI’s ChatGPT, Google’s Bard or Meta’s LLaMA.

What sets LLMs apart is their unparalleled ability and flexibility to process and generate human-like text. They excel in natural language understanding and generation tasks, ranging from text completion and translation to question answering and content summarization. The key to their success lies in their extensive training on massive text corpora, allowing them to capture a rich understanding of language nuances, context, and semantics.

These models employ a deep neural architecture with multiple layers of self-attention mechanisms, enabling them to weigh the importance of different words and phrases in a given context. This dynamic adaptability makes them exceptionally proficient in processing inputs of various types, comprehending complex language structures, and producing outputs based on human-defined prompts.

Example KNIME workflow of creating an AI assistant that relies on OpenAI’s ChatGPT and a vector store with custom documents to answer domain-specific questions.

LLMs have paved the way for a multitude of applications across various industries, from healthcare and finance to entertainment and customer service. They have even sparked new frontiers in creative writing and storytelling.

However, their vast size, resource-intensive training processes and potential copyright infringements for generated content have also raised concerns about ethical usage, environmental impact, and accessibility. Lastly, while increasingly enhanced, LLMs may contain some serious flaws, such as “hallucinating” incorrect facts, being biased, gullible, or persuaded into creating toxic content.

The evolution of neural networks, from their humble beginnings to the rise of large language models, raises a profound philosophical question: Will this journey ever come to an end?

The trajectory of technology has always been marked by relentless advancement. Each milestone only serves as a stepping stone to the next innovation. As we strive to create machines that can replicate human cognition and understanding, it’s tempting to ponder whether there’s an ultimate destination, a point where we say, “This is it; we’ve reached the pinnacle.”

However, the essence of human curiosity and the boundless complexities of the natural world suggest otherwise. Just as our understanding of the universe continually deepens, the quest to develop more intelligent, capable, and ethical neural networks may be an endless journey.

Anil is a Data Science Evangelist at KNIME.